DeepMind Genie and the Rise of Interactive World Models: The Next Frontier of Embodied AI

The story of Artificial Intelligence over the last five years has been dominated by the spectacular rise of Large Language Models (LLMs). Tools like GPT-4 demonstrated an unprecedented ability to reason, converse, and generate human-quality text. But as impressive as these digital brains are, they remain tethered to the text box—they understand the world only through the vast corpus of data they were trained on. They lack a persistent sense of space, physics, or continuous interaction.

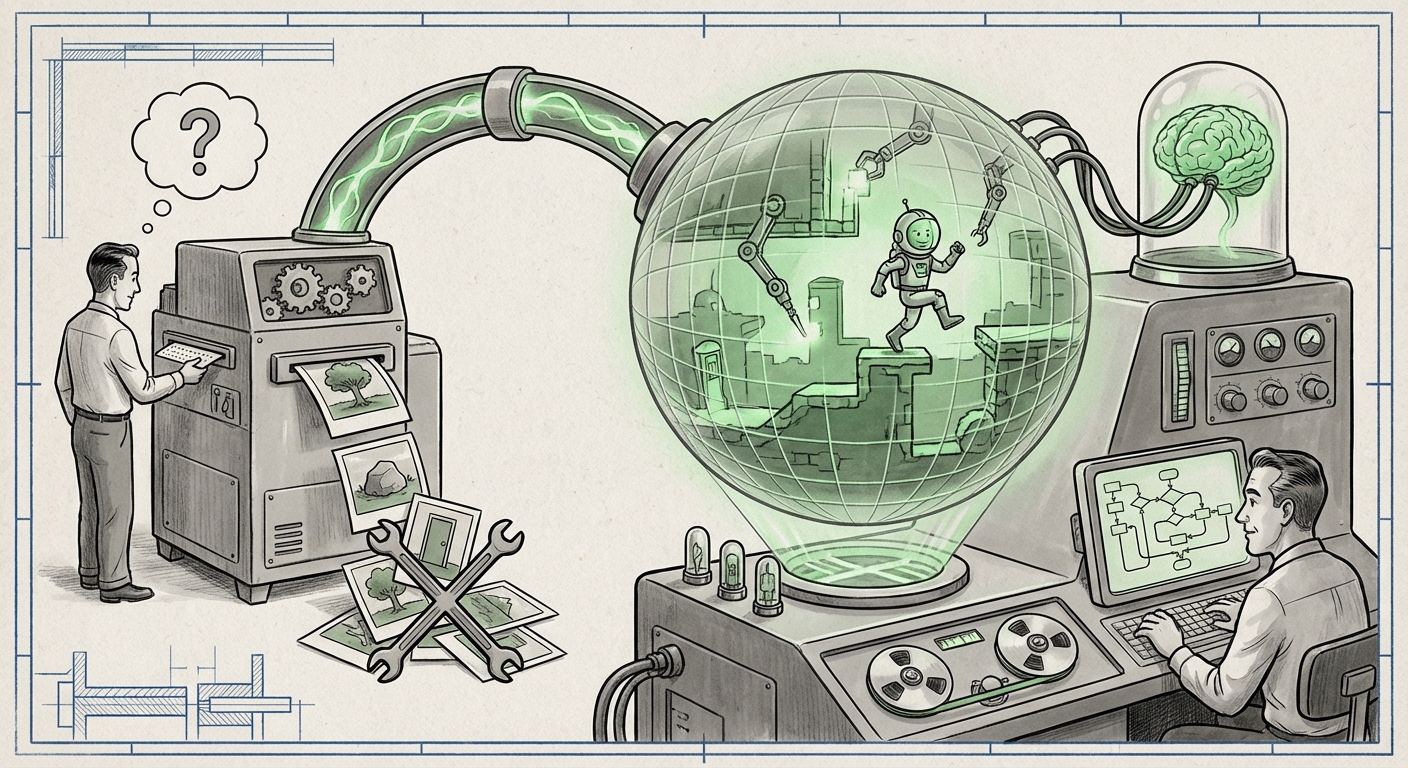

Enter the realm of World Models, and specifically, DeepMind’s recent breakthrough, **Genie**. As highlighted in analyses such as "The Sequence Knowledge #817," Genie signals a fundamental pivot in AI research: the move from purely linguistic understanding to interactive, navigable simulation. This isn't just about generating pretty pictures; it’s about creating persistent, explorable 2D worlds from simple prompts (a text description, a sketch, or a photograph), opening the door to truly embodied AI.

The Core Innovation: From Image Generation to World Generation

To grasp the significance of Genie, we must first understand the difference between generative art and generative simulation. Text-to-image diffusion models create stunning, high-resolution static images. If you ask for "a whimsical forest path," you get one perfect snapshot. If you want to see what happens when you walk down that path, you must prompt again, and the next image often won't be logically consistent with the first.

Genie tackles this consistency problem head-on. It has been described as one of the most advanced world models yet created because it learns a latent space (a compressed mathematical representation of the world) that can be navigated step by step. When a user prompts for "a 2D platformer game world," Genie doesn't just draw a background; it predicts how the scene changes in response to each action, so walls, floors, and possible movements are captured implicitly in what it generates next.
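The difference between a one-shot generator and a world model can be sketched in a few lines of Python. This is a toy under loose assumptions: a "world" is a fixed row of tile characters, an "action" only moves a viewport, and the names `generate_snapshot` and `TileWorld` are hypothetical illustrations, not part of any Genie interface.

```python
import random

TILES = "._#"  # hypothetical tile alphabet


def generate_snapshot(prompt: str, width: int = 8) -> str:
    """Stateless generator: each call samples a fresh scene, so two calls with
    the same prompt need not agree with each other (the prompt is ignored here)."""
    return "".join(random.choice(TILES) for _ in range(width))


class TileWorld:
    """Stateful toy world model: the layout is sampled once from a seed, and
    every action is applied to that same persistent layout."""

    def __init__(self, seed: int, length: int = 32, view: int = 8):
        rng = random.Random(seed)
        self.layout = "".join(rng.choice(TILES) for _ in range(length))
        self.view = view
        self.x = 0  # left edge of the visible window

    def observe(self) -> str:
        return self.layout[self.x : self.x + self.view]

    def step(self, action: str) -> str:
        # "right"/"left" move the viewport one tile (clamped); anything else is a no-op.
        delta = {"right": 1, "left": -1}.get(action, 0)
        self.x = max(0, min(len(self.layout) - self.view, self.x + delta))
        return self.observe()


world = TileWorld(seed=0)
start = world.observe()
after_right = world.step("right")
assert after_right[:-1] == start[1:]   # the scene scrolls; it is not redrawn
assert world.step("left") == start     # walking back shows exactly what was there
```

Walking right and then left returns exactly the original view, which is the property a one-shot image generator cannot guarantee across separate prompts.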

Contextualizing the Tech: The Technical Leap

For researchers and engineers, Genie is a powerful application of generative modeling to sequential data. Its published architecture has three learned components: a video tokenizer that compresses frames into discrete tokens, a latent action model that infers which discrete "action" best explains the change between consecutive frames, and an autoregressive dynamics model that predicts the next frame's tokens from the previous ones plus a chosen action. The critical hurdle overcome here is *controllability*. Creating a consistent, interactive environment requires the model to maintain an internal understanding of physics and spatial relationships across many sequential frames. This demands that the underlying architecture disentangle the content (the visual style, like "pixel art" or "cartoon") from the structure (the interactable layout).
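The generation loop implied by this three-part design can be sketched with toy stand-ins. Everything below is hypothetical: frames are short lists of brightness values, the "tokenizer" snaps them to a tiny fixed codebook, and the "dynamics model" is a hand-written rule that shifts tokens according to the action. In Genie each of these is a large learned network; only the shape of the loop (encode the prompt frame once, then repeatedly choose an action, predict the next tokens, and decode) is meant to carry over.

```python
from typing import List

CODEBOOK = [0.0, 0.5, 1.0]  # hypothetical 3-entry codebook of brightness levels


def tokenize(frame: List[float]) -> List[int]:
    """Toy tokenizer: map each pixel to the index of the nearest codebook entry."""
    return [min(range(len(CODEBOOK)), key=lambda i: abs(CODEBOOK[i] - p)) for p in frame]


def detokenize(tokens: List[int]) -> List[float]:
    """Toy decoder: map token indices back to codebook values."""
    return [CODEBOOK[t] for t in tokens]


def dynamics(history: List[List[int]], action: int) -> List[int]:
    """Toy dynamics model: next-frame tokens from the token history and a discrete
    action (0 = stay, 1 = shift left, 2 = shift right, both with wrap-around).
    A real model would attend over the whole history; this one uses the last frame."""
    last = history[-1]
    if action == 1:
        return last[1:] + last[:1]
    if action == 2:
        return last[-1:] + last[:-1]
    return list(last)


def rollout(prompt_frame: List[float], actions: List[int]) -> List[List[float]]:
    """Autoregressive rollout: encode the prompt once, then predict one frame per action."""
    history = [tokenize(prompt_frame)]
    for a in actions:
        history.append(dynamics(history, a))
    return [detokenize(t) for t in history]


for frame in rollout([0.1, 0.9, 0.4, 0.0], actions=[2, 2, 0]):
    print(frame)
```

Each predicted frame is appended to the history and becomes the input for the next prediction; that feedback loop is what makes the output a navigable sequence rather than a set of independent images.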

This capability comes from training on a massive dataset of gameplay footage: publicly available Internet videos of 2D platformer games, which carry no labels for the buttons being pressed. By observing millions of transitions between frames, the model infers its own small set of latent actions and learns the rules of play implicitly. The result is a generative engine that can create a virtually endless variety of *unique* playable worlds, each adhering to the style set by the prompt.
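Learning actions without action labels can be illustrated with a deliberately tiny stand-in. Genie's latent action model is a learned encoder-decoder with a vector-quantized bottleneck, trained so that the chosen latent action helps predict the next frame; the sketch below swaps that for plain k-means on displacement vectors, assuming each "frame" has already been reduced to a character's (x, y) position. The cluster indices play the role of discrete latent actions. The function names are hypothetical.

```python
import random
from typing import List, Tuple

Vec = Tuple[float, float]


def _nearest(d: Vec, codebook: List[Vec]) -> int:
    """Index of the codebook entry closest to displacement d (squared distance)."""
    return min(range(len(codebook)),
               key=lambda i: (d[0] - codebook[i][0]) ** 2 + (d[1] - codebook[i][1]) ** 2)


def infer_latent_actions(positions: List[Vec], k: int = 3, iters: int = 20, seed: int = 0):
    """Cluster frame-to-frame displacements into k discrete 'latent actions'.

    Returns (codebook, labels): codebook[i] is the mean displacement for latent
    action i, and labels[t] is the latent action assigned to transition t -> t+1.
    Assumes at least k distinct displacements occur in the data.
    """
    deltas = [(b[0] - a[0], b[1] - a[1]) for a, b in zip(positions, positions[1:])]
    rng = random.Random(seed)
    codebook = rng.sample(sorted(set(deltas)), k)  # start from k distinct observed moves
    labels: List[int] = []
    for _ in range(iters):
        # Assignment step: snap each transition to its nearest codebook entry.
        labels = [_nearest(d, codebook) for d in deltas]
        # Update step: move each entry to the mean of the transitions assigned to it.
        for i in range(k):
            members = [d for d, lab in zip(deltas, labels) if lab == i]
            if members:
                codebook[i] = (sum(m[0] for m in members) / len(members),
                               sum(m[1] for m in members) / len(members))
    return codebook, labels


# Unlabeled trajectory: the code never sees which button produced each move.
path = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (2.0, 1.0), (1.0, 1.0), (2.0, 1.0)]
codebook, labels = infer_latent_actions(path, k=3)
print(codebook, labels)
```

The three "move right" transitions end up sharing one label, while "jump" and "move left" each get their own, even though no action was ever recorded.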

The Great Transition: LLMs Meet Embodiment

The most profound implication of models like Genie is how they bridge the gap between language understanding and physical action. This is the cornerstone of the future of **Embodied AI**.

LLMs are excellent at *planning* ("To make coffee, first find the mug, then add water..."). However, an LLM cannot execute these steps in the real world or even a complex simulation without a component that translates abstract plans into concrete spatial actions. Genie provides the canvas, the persistent environment where plans can be tested.

Imagine an advanced AI agent. It needs:

- Reasoning (The LLM): To understand the goal: "Clean the kitchen."

- World Model (Genie/Successors): To possess a map of the kitchen, know where the counters are, and understand that "walking to the sink" means traversing a sequence of connected tiles in that map.

- Action Policy: To execute the fine motor skills required (e.g., grasping the sponge).

Genie, by creating navigable worlds from simple prompts, is an early prototype of the kind of simulation environment needed for the second component, the world model. This accelerates the roadmap toward general-purpose agents that can function autonomously, whether that means controlling a complex robotic arm or managing an entire supply chain simulation.
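The world-model piece of the list above, where "walking to the sink" means traversing connected tiles, can be sketched as a graph search. This assumes the world model exposes a tile map, which Genie itself does not do (it works with pixels and latent tokens); the map, tile symbols, and function names are hypothetical. Breadth-first search finds the shortest walk, and a tiny stand-in for the action policy turns it into move commands.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

Pos = Tuple[int, int]

# Hypothetical tile map: '#' wall or counter, '.' floor, 'A' agent, 'S' sink.
KITCHEN = [
    "#######",
    "#A..#S#",
    "#.#...#",
    "#.....#",
    "#######",
]


def find(tile: str) -> Pos:
    """Locate the first occurrence of a tile symbol on the map."""
    for r, row in enumerate(KITCHEN):
        c = row.find(tile)
        if c != -1:
            return (r, c)
    raise ValueError(f"tile {tile!r} not on map")


def shortest_path(start: Pos, goal: Pos) -> Optional[List[Pos]]:
    """Breadth-first search over 4-connected non-wall tiles.
    Relies on the map being enclosed by walls, so neighbours never go out of bounds."""
    parent: Dict[Pos, Optional[Pos]] = {start: None}
    queue = deque([start])
    while queue:
        cur = queue.popleft()
        if cur == goal:
            path: List[Pos] = []
            node: Optional[Pos] = cur
            while node is not None:
                path.append(node)
                node = parent[node]
            return path[::-1]
        r, c = cur
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if KITCHEN[nxt[0]][nxt[1]] != "#" and nxt not in parent:
                parent[nxt] = cur
                queue.append(nxt)
    return None  # goal unreachable


def to_moves(path: List[Pos]) -> List[str]:
    """Stand-in for the action policy: convert tile-to-tile steps into commands."""
    names = {(1, 0): "down", (-1, 0): "up", (0, 1): "right", (0, -1): "left"}
    return [names[(b[0] - a[0], b[1] - a[1])] for a, b in zip(path, path[1:])]


route = shortest_path(find("A"), find("S"))
print(to_moves(route))  # ['right', 'right', 'down', 'right', 'right', 'up']
```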

Actionable Insight for Strategists: Investing in Simulation Infrastructure

For tech leaders, this signals that the battleground is shifting from merely training larger foundation models to building richer, more detailed, and rapidly generated *simulation environments*. If an AI agent needs ten million hours of safe practice before operating a physical robot, the work of producing those hours across varied, customized simulated worlds becomes the primary bottleneck. Models that generate such worlds on demand from natural-language instructions could drastically reduce training time and cost.
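The bottleneck argument is simple arithmetic. In the sketch below, the ten-million-hour figure is the hypothetical from the text, while the environment counts and simulation speedups are illustrative assumptions, not measurements of Genie or any other system.

```python
def wall_clock_days(practice_hours: float, parallel_envs: int, speedup: float) -> float:
    """Wall-clock days needed to accumulate `practice_hours` of simulated experience
    when `parallel_envs` environments each run at `speedup` times real time."""
    return practice_hours / (parallel_envs * speedup) / 24.0


for envs, speed in [(1_000, 1.0), (1_000, 10.0), (10_000, 10.0)]:
    days = wall_clock_days(10_000_000, envs, speed)
    print(f"{envs:>6} envs at {speed:>4}x real time -> {days:,.1f} days")
```

Under these assumptions the same practice budget takes roughly 417, 42, or 4 days. Both levers matter: how many distinct worlds can be generated to run in parallel, and how fast each one can be stepped.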

Practical Implications: Rewriting the Rules of Content Creation

While robotics and general intelligence are the long-term goals, the most immediate impact of generative world models is likely to be felt in content creation, especially the video game industry.

Democratizing Game Development

For decades, creating a unique 2D platformer or adventure game required specialized artists, level designers, and programmers to manually place every tile, enemy, and hazard. Genie points toward a future where a single designer can prompt, "Generate a hard-mode steampunk dungeon with three secrets and a lava hazard near the entrance," and have the AI generate the playable layout.

This profoundly lowers the barrier to entry for creators. Instead of spending most of their time on repetitive asset placement, creators can devote that time to iterating on narrative, tuning difficulty, and designing creative interactions.

The Shift from Procedural Content Generation (PCG) to Generative Creation

Traditional PCG relies on complex, manually written algorithms (e.g., "place a tree every 50 units, but avoid placing it on a slope greater than 10 degrees"). Generative world models offer an evolution: they learn the *aesthetics* and *rules* of good design from examples rather than from rigid, programmer-defined instructions. If the training data contains many well-designed levels, the generated level will likely *feel* well-designed, even though no one ever wrote down the rules that make it so.
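The contrast can be sketched in a few lines. The first function encodes the tree rule from the text directly, assuming terrain given as one height sample per unit of distance. The second part swaps in a first-order Markov chain over level columns, a classic and far simpler learned-PCG technique than Genie, to show a generator picking up a design rule purely from examples. The level strings and tile symbols are hypothetical.

```python
import math
import random
from collections import Counter, defaultdict
from typing import Dict, List


# Traditional PCG: the hand-written rule from the text.
def place_trees(heights: List[float], spacing: int = 50, max_slope_deg: float = 10.0) -> List[int]:
    trees = []
    for x in range(0, len(heights) - 1, spacing):
        rise = heights[x + 1] - heights[x]  # run is 1 unit
        slope_deg = math.degrees(math.atan(abs(rise)))
        if slope_deg <= max_slope_deg:
            trees.append(x)
    return trees


# Learned alternative (simplified): a first-order Markov chain over level columns.
def train_markov(levels: List[str]) -> Dict[str, Counter]:
    """Count which column symbol follows which in the example levels."""
    table: Dict[str, Counter] = defaultdict(Counter)
    for level in levels:
        for a, b in zip(level, level[1:]):
            table[a][b] += 1
    return table


def sample_level(table: Dict[str, Counter], start: str, length: int, seed: int = 0) -> str:
    """Generate a level by repeatedly sampling the next column from observed frequencies."""
    rng = random.Random(seed)
    out = [start]
    while len(out) < length:
        options = table.get(out[-1])
        if not options:
            break  # this symbol was never followed by anything in the examples
        out.append(rng.choices(list(options), weights=list(options.values()))[0])
    return "".join(out)


# Hypothetical example levels: '_' ground, '-' raised platform, '^' lava.
EXAMPLES = ["___^__-__^___", "__-___^__--__", "____^___-___"]
level = sample_level(train_markov(EXAMPLES), start="_", length=30)
print(level)
```

Nobody wrote a "no two lava columns in a row" rule, yet because the examples never contain `^^`, the sampled level cannot either. The flip side is that the chain can only reproduce transitions it has already seen.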

Navigating the Future: Challenges and Ethical Considerations

This progress is exhilarating, but it raises critical questions that must be addressed as we transition from static generation to dynamic worlds.

1. The Consistency Paradox

Even for a model as capable as Genie, long-term consistency remains a challenge. If an agent pushes a block off a cliff in a world generated by Genie, the world model must flawlessly predict where that block will land, how it will interact with subsequent elements, and then maintain that state indefinitely. Failures in consistency, or "hallucinations" in the simulation, can undermine an agent’s learning process, because the agent ends up learning rules that do not hold.
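Why small errors matter can be shown with a toy falling block. The sketch assumes a hypothetical "learned" model whose only flaw is a gravity estimate 2% too low, and rolls it forward on its own predictions, as a world model must during play. The numbers are illustrative, not measurements of Genie.

```python
from typing import List, Tuple

State = Tuple[float, float]  # (height in m, vertical velocity in m/s)


def true_step(s: State, dt: float = 0.1, g: float = 9.81) -> State:
    """Explicit Euler step of free fall."""
    y, v = s
    return (y + v * dt, v - g * dt)


def learned_step(s: State, dt: float = 0.1) -> State:
    """Hypothetical learned model: same form, but gravity is underestimated by 2%."""
    return true_step(s, dt, g=9.81 * 0.98)


def drift(steps: int, start: State = (100.0, 0.0)) -> List[float]:
    """Absolute height error when the model is rolled out on its own predictions."""
    truth, pred, errors = start, start, []
    for _ in range(steps):
        truth, pred = true_step(truth), learned_step(pred)
        errors.append(abs(truth[0] - pred[0]))
    return errors


errs = drift(40)
print(f"error after 10 steps: {errs[9]:.3f} m, after 40 steps: {errs[39]:.3f} m")
```

Because the model feeds on its own outputs, the height error grows roughly with the square of the number of steps: quadrupling the horizon from 10 to 40 steps makes the error about seventeen times larger.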

2. Data Dependency and Bias Amplification

World models are inherently biased by the data they consume. If the dataset of 2D worlds primarily features certain visual styles (e.g., Western fantasy tropes) or game mechanics (e.g., linear progression), the AI will struggle to generate truly novel or culturally diverse interactive spaces. The inherent biases of existing digital content risk being locked in and amplified across future simulated realities.
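One rough way to check for the kind of concentration described above is to measure how evenly a dataset spreads across style labels. The sketch assumes each training level already carries a style tag (hypothetical here) and computes normalized Shannon entropy; it is a coarse audit, not a bias metric that any particular lab is known to use.

```python
import math
from collections import Counter
from typing import Iterable


def style_balance(labels: Iterable[str]) -> float:
    """Normalized Shannon entropy of the label distribution, in [0, 1].

    1.0 means every observed style is equally common; values near 0 mean one
    style dominates. Styles that never appear at all are invisible to this
    measure, so a high score does not prove the data is diverse.
    """
    counts = Counter(labels)
    if len(counts) < 2:
        return 0.0
    total = sum(counts.values())
    entropy = -sum((c / total) * math.log(c / total) for c in counts.values())
    return entropy / math.log(len(counts))


# Hypothetical style tags for a 100-level dataset.
tags = ["western_fantasy"] * 90 + ["steampunk"] * 6 + ["wuxia"] * 4
print(f"balance = {style_balance(tags):.2f}")
```

A score well below 1 flags a dataset where one style dominates, which is exactly the situation in which a generator will struggle to produce anything else.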

3. The Simulation Reality Gap

The ultimate test for embodied AI is transferring learning from simulation to the real world, across what is known as the "Sim-to-Real" gap. A 2D world model simplifies physics drastically compared to the real world, but in general, the more faithful the simulation environment, the smaller that gap becomes. Advances in Genie-like models compel us to invest heavily in making simulations realistic enough that learning within them transfers efficiently to physical robots or complex industrial control systems.
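The claim that a more faithful simulator shrinks the gap can be illustrated with a one-dimensional push task. Everything here is a hypothetical toy: a block slides on a surface with constant kinetic friction, a push is tuned so the block stops at a target in simulation, and "reality" is the same physics with a different friction coefficient.

```python
import math

G = 9.81  # m/s^2


def launch_speed(target_m: float, mu: float) -> float:
    """Speed at which a block decelerating at mu * g stops exactly target_m away
    (from d = v^2 / (2 * mu * g))."""
    return math.sqrt(2 * mu * G * target_m)


def slide_distance(v: float, mu: float) -> float:
    """Distance travelled before friction brings the block to rest."""
    return v * v / (2 * mu * G)


def sim_to_real_error(target_m: float, mu_sim: float, mu_real: float) -> float:
    """Tune the push in simulation, then measure how far off it lands in 'reality'."""
    v = launch_speed(target_m, mu_sim)
    return abs(slide_distance(v, mu_real) - target_m)


for mu_sim in (0.2, 0.35, 0.45, 0.5):
    print(mu_sim, round(sim_to_real_error(2.0, mu_sim, mu_real=0.5), 3))
```

With the real friction at 0.5, the miss shrinks from 1.2 m to 0.6 m to 0.2 m to zero as the simulated value approaches it; in this toy the error is exactly the target distance times |mu_sim / mu_real - 1|.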

Actionable Steps for Engagement

For businesses and technologists looking to harness this wave, the focus should be twofold:

- Experiment with Custom Fine-Tuning: Don't wait for the next general-purpose model. Identify proprietary or niche datasets (e.g., internal schematics for a factory, specific historical architectural styles) and explore fine-tuning smaller foundation models to create highly specialized, interactive training environments.

- Prioritize Agent Integration Pathways: Begin architecting software layers that can seamlessly feed the output of a world model (the navigable map/state space) into an LLM planner and a control system. Competitive advantage will be won in this integration layer, not just in the model itself.
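A minimal sketch of such an integration layer, assuming each component can be hidden behind a small interface: `typing.Protocol` classes describe what the layer expects from a world model, a planner, and a controller, and a loop wires them together. The interfaces, the toy corridor world, the rule-based planner (standing in for an LLM), and the greedy controller are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Protocol, Tuple

Pos = Tuple[int, int]


@dataclass
class WorldState:
    """Hypothetical snapshot the world model hands to the rest of the stack."""
    agent: Pos
    objects: Dict[str, Pos] = field(default_factory=dict)


class WorldModel(Protocol):
    def observe(self) -> WorldState: ...
    def step(self, action: str) -> WorldState: ...


class Planner(Protocol):
    def next_subgoal(self, goal: str, state: WorldState) -> str: ...


class Controller(Protocol):
    def act(self, subgoal: str, state: WorldState) -> str: ...


def run_episode(goal: str, world: WorldModel, planner: Planner,
                controller: Controller, max_steps: int = 50) -> List[str]:
    """The integration layer: world state -> planner -> controller -> world, repeated."""
    actions: List[str] = []
    state = world.observe()
    for _ in range(max_steps):
        subgoal = planner.next_subgoal(goal, state)
        if subgoal == "done":
            break
        action = controller.act(subgoal, state)
        actions.append(action)
        state = world.step(action)
    return actions


# Toy implementations that satisfy the protocols structurally (no inheritance needed).
class Corridor:
    def __init__(self) -> None:
        self.state = WorldState(agent=(0, 0), objects={"sink": (0, 3)})

    def observe(self) -> WorldState:
        return self.state

    def step(self, action: str) -> WorldState:
        r, c = self.state.agent
        c += {"right": 1, "left": -1}.get(action, 0)
        self.state = WorldState(agent=(r, c), objects=self.state.objects)
        return self.state


class RulePlanner:  # stands in for an LLM planner
    def next_subgoal(self, goal: str, state: WorldState) -> str:
        return "done" if state.agent == state.objects["sink"] else "sink"


class GreedyController:
    def act(self, subgoal: str, state: WorldState) -> str:
        return "right" if state.objects[subgoal][1] > state.agent[1] else "left"


print(run_episode("Clean the kitchen.", Corridor(), RulePlanner(), GreedyController()))
```

Because `run_episode` depends only on the three interfaces, a better world model or planner can be swapped in without touching the rest of the stack.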

The development of DeepMind’s Genie is more than just a technical upgrade; it represents a maturation of generative AI. We are leaving the era of generating beautiful, isolated artifacts and entering the era of generating functional, interactive realities. This shift promises to revolutionize everything from how we design video games to how we train the autonomous systems that will shape our physical world.