Beyond Text and Images: Why DeepMind Genie's Interactive World Models Are the Key to Truly Autonomous AI

For years, the revolution in artificial intelligence has been dominated by language and vision. We have models that can write novels (LLMs) and models that can generate stunning photorealistic images from simple text prompts (diffusion models). These tools are powerful, but they fundamentally produce static outputs: words on a screen or pixels on a canvas. What happens when AI needs to *do* something, to interact with a world that changes in response to its actions?

Enter the era of interactive world models, exemplified by recent advances such as DeepMind’s Genie project. As detailed in analyses like "The Sequence Knowledge #817," Genie isn't just generating media; it’s generating *experiences*. It points to a future where AI doesn't just describe the world but builds and navigates it, a fundamental shift in how we conceive of artificial intelligence.

The Leap: From Static Generation to Dynamic Interaction

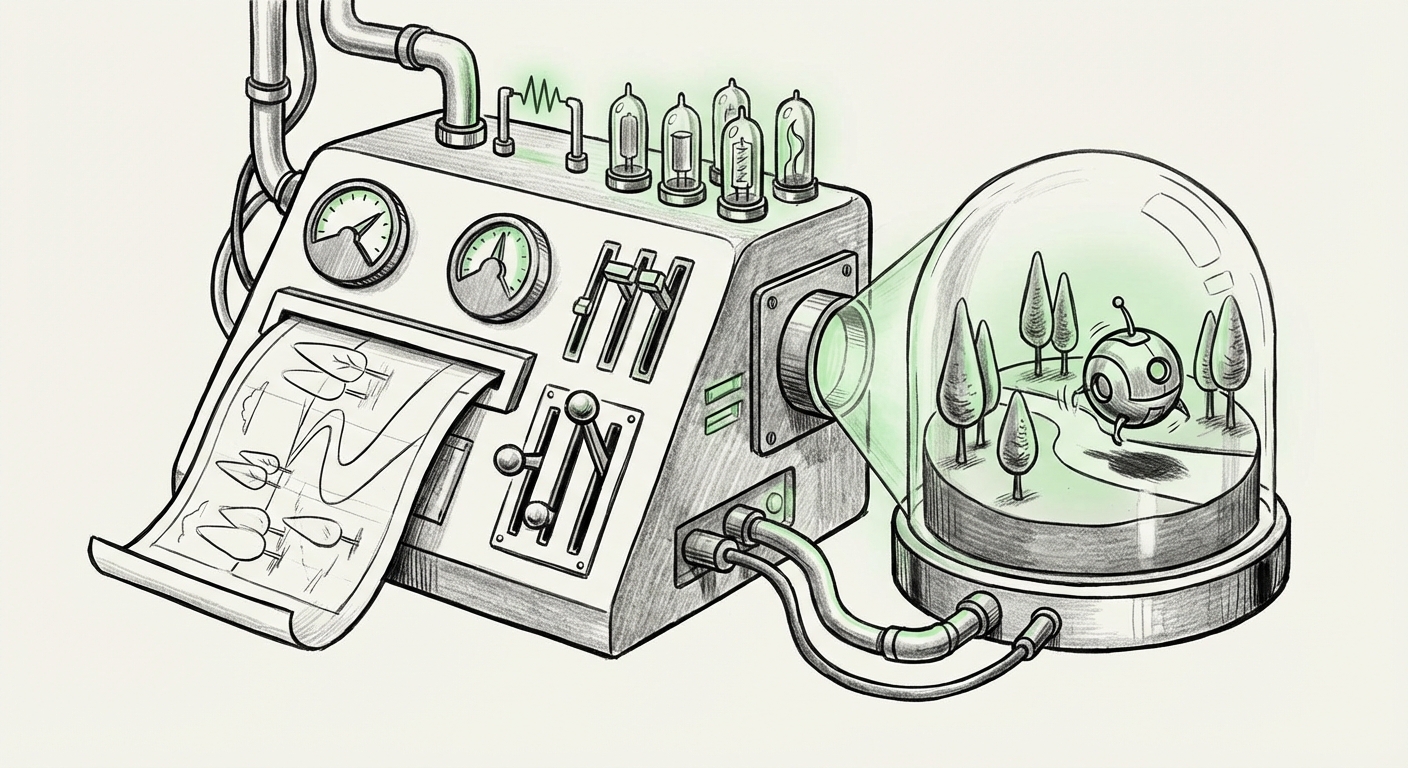

To grasp the significance of Genie, we must understand the difference between an image generator and a world model. Imagine you ask an AI to generate a "forest clearing with a hidden path." A standard image model gives you a single beautiful picture. A world model like Genie generates an environment you could step into: every action you take produces the next moment of that world, so you could move around, find the path, and perhaps even chop down a tree or build a shelter.

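This capability stems from learning a compressed internal representation (a latent space) of how objects and physics interact over time. The task is also harder than producing a single output: the model must predict what the world looks like *after* a given action, then keep those predictions consistent step after step.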
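To make the contrast concrete, here is a toy sketch in Python. It is not Genie's architecture: the grid, the `HIDDEN_PATH` location, and the hand-written `world_step` function are all hypothetical, whereas a real world model learns its step function from data. The point is the interface: an image generator maps a prompt to one fixed output, while a world model maps a state and an action to the next state, so it can be stepped through again and again.

```python
from dataclasses import dataclass

# Hypothetical 5x5 "forest clearing"; the hidden path sits at one cell.
HIDDEN_PATH = (4, 2)
MOVES = {"up": (0, -1), "down": (0, 1), "left": (-1, 0), "right": (1, 0)}


@dataclass(frozen=True)
class State:
    x: int
    y: int
    found_path: bool = False


def generate_image(prompt: str) -> str:
    """Static generator: one prompt in, one fixed output out. Nothing to act on."""
    return f"<picture of {prompt}>"


def world_step(state: State, action: str, size: int = 5) -> State:
    """Interactive world model: (state, action) -> next state.

    Hand-written here for illustration; a learned world model would
    predict this transition from training data instead.
    """
    dx, dy = MOVES[action]
    x = min(max(state.x + dx, 0), size - 1)  # edges of the clearing clamp movement
    y = min(max(state.y + dy, 0), size - 1)
    return State(x, y, state.found_path or (x, y) == HIDDEN_PATH)


def rollout(state: State, actions: list[str]) -> State:
    """Feed a sequence of actions through the world model, one step at a time."""
    for action in actions:
        state = world_step(state, action)
    return state


if __name__ == "__main__":
    print(generate_image("forest clearing with a hidden path"))
    print(rollout(State(0, 2), ["right"] * 4))  # prints State(x=4, y=2, found_path=True)
```

The same `rollout` call with different actions yields a different outcome, which is exactly what a static generator cannot offer.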

What is a World Model, Simply Explained?

Think of the human brain. We don't just see the world; we constantly run simulations in our minds. If you picture dropping a glass, you know it will fall and break without ever letting go of it. That internal simulation is a basic world model. Genie attempts to build a digital version of this: a learned model, trained on large amounts of video, that lets the AI predict what happens next in an environment.
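A minimal sketch of that kind of mental simulation, in Python: instead of dropping the glass, the code rolls a falling object forward in small time steps (semi-implicit Euler integration, ignoring air resistance) and predicts whether it breaks. `BREAK_SPEED` is a made-up threshold, and a learned world model would pick up such regularities from data rather than having gravity written in by hand.

```python
G = 9.81  # gravitational acceleration, m/s^2
BREAK_SPEED = 3.0  # hypothetical impact speed (m/s) above which the glass shatters


def imagine_drop(height_m: float, dt: float = 0.001) -> float:
    """Simulate a fall from height_m without air resistance; return impact speed in m/s."""
    y, v = height_m, 0.0
    while y > 0.0:
        v += G * dt  # semi-implicit Euler: update velocity first...
        y -= v * dt  # ...then position, using the new velocity
    return v


def predict_breaks(height_m: float) -> bool:
    """Answer 'would it break?' purely from the internal simulation."""
    return imagine_drop(height_m) > BREAK_SPEED


if __name__ == "__main__":
    for h in (0.1, 1.0):
        print(f"drop from {h} m: impact ~{imagine_drop(h):.2f} m/s, breaks={predict_breaks(h)}")
```

The simulated impact speed tracks the closed-form answer, sqrt(2gh), which is about 4.43 m/s for a 1 m drop; that agreement is a quick sanity check on the chosen step size.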

This transition is crucial because it unlocks Embodied AI—AI that can act purposefully in space, whether that space is a video game, a factory floor, or the real world via a robot.

Contextualizing Genie: The Wider AI Landscape

DeepMind's breakthrough does not exist in a vacuum. It is part of a clear, accelerating trend toward grounding intelligence in simulation and physical interaction. By looking at complementary areas of research, we can better understand Genie’s trajectory.

1. The 3D Content Explosion

The bottleneck for realistic simulation has long been the creation of high-quality 3D assets and environments. Building a realistic digital world by hand is slow and expensive. If an AI can create entire functional, interactive 3D worlds from a sentence, the cost of building digital reality plummets.

This ties directly into research focusing on Generative AI for 3D Content Creation and Simulation. Techniques like Neural Radiance Fields (NeRFs) or 3D Gaussian Splatting aim to reconstruct complex scenes efficiently from images. Genie takes a complementary route: rather than reconstructing explicit 3D geometry, it learns how an environment responds to actions directly from video, moving beyond mere reconstruction to active creation. For industries like gaming and architectural visualization, this points toward an effectively unlimited supply of customizable digital testbeds.

2. The Race for Real-World Agents

Genie is a simulator, but the end goal is deployment. The competition in the Embodied AI Agents and Simulation Environments space is fierce. Google DeepMind, the lab behind Genie, has also pursued models like RT-2 (Robotics Transformer), which aim to translate web knowledge into robotic actions. Meta has invested heavily in building agents that learn within complex simulated physics environments.

Genie’s unique contribution here is the *generative* nature of the environment itself. When an agent trained in simulation struggles with a scenario in the real world, the usual remedy is to collect more real-world data. With an interactive world model, the agent could instead ask the model to generate a thousand variations of that difficult scenario and practice on them until it masters the task. This could drastically shorten the learning loop for physical robotics.
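The loop described above can be sketched in a few lines of Python. Everything here is hypothetical: `generate_variations` stands in for prompting a world model to imagine perturbed versions of a hard case (here, randomizing an object's mass and friction, in the spirit of domain randomization), and "training" is reduced to picking the grip force that succeeds most often across the imagined variants.

```python
import random
from dataclasses import dataclass

G = 9.81  # m/s^2
CRUSH_LIMIT_N = 40.0  # hypothetical grip force (N) above which the object is damaged


@dataclass(frozen=True)
class Scenario:
    mass_kg: float   # object mass
    friction: float  # gripper/object friction coefficient


def generate_variations(base: Scenario, n: int, seed: int = 0) -> list[Scenario]:
    """Hypothetical stand-in for a world model 'imagining' n perturbed copies of a hard case."""
    rng = random.Random(seed)
    return [
        Scenario(
            mass_kg=base.mass_kg * rng.uniform(0.8, 1.2),
            friction=base.friction * rng.uniform(0.7, 1.3),
        )
        for _ in range(n)
    ]


def succeeds(grip_n: float, s: Scenario) -> bool:
    """Two-finger grasp: friction at both contacts must hold the weight without crushing."""
    holds = 2 * s.friction * grip_n >= s.mass_kg * G
    return holds and grip_n <= CRUSH_LIMIT_N


def success_rate(grip_n: float, scenarios: list[Scenario]) -> float:
    return sum(succeeds(grip_n, s) for s in scenarios) / len(scenarios)


def train_grip(scenarios: list[Scenario], candidates: list[float]) -> tuple[float, float]:
    """'Training' as search: keep the grip force with the best success rate."""
    best = max(candidates, key=lambda g: success_rate(g, scenarios))
    return best, success_rate(best, scenarios)


if __name__ == "__main__":
    hard_case = Scenario(mass_kg=2.0, friction=0.3)  # heavy, slippery object
    imagined = generate_variations(hard_case, n=1000)
    grip, rate = train_grip(imagined, candidates=[float(f) for f in range(10, 51)])
    print(f"chosen grip {grip} N succeeds in {rate:.0%} of imagined variants")
```

The success rate stays below 100% because some imagined variants are too heavy and slippery for any grip under the crush limit, which is the kind of finding you would want before touching real hardware.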

3. Resolving the Reasoning Dilemma: World Models vs. LLMs

One of the key philosophical and technical debates revolves around whether massive LLMs possess emergent reasoning capabilities or if they are merely sophisticated pattern-matchers. Many experts suggest that true, forward-looking planning requires an explicit causal model of reality—a World Model.

This relates to the classic distinction between fast, intuitive thinking (System 1) and slow, deliberate reasoning (System 2). Pure LLMs are often characterized as strongest at System 1-style pattern recall. World models, by letting the AI simulate outcomes before committing to an action, are designed to support System 2-style planning. Genie’s results suggest that for tasks involving spatial reasoning, causality, and delayed rewards, integrating a dedicated world model may be necessary to overcome the inherent limitations of purely text-based reasoning architectures.
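The difference between reacting and simulating first can be sketched with a toy corridor in Python. All names and numbers are hypothetical: the agent starts at position 0, the reward only arrives at the goal (position 5), and a pit at position 3 ends the episode. The "System 1" habit of always taking the cheapest step forward walks straight into the pit; the "System 2" planner imagines every 4-step action sequence (3^4 = 81 of them) through the world model and only then commits. Exhaustive search stands in for the sampling-based or learned planners a real system would use.

```python
from itertools import product

PIT, GOAL = 3, 5
# action -> (displacement, energy cost); hypothetical values
ACTIONS = {"step": (1, -1.0), "jump": (2, -2.0), "back": (-1, -1.0)}


def world_model(pos: int, action: str) -> tuple[int, float, bool]:
    """Predict (next position, reward, episode over?) without acting for real."""
    move, cost = ACTIONS[action]
    nxt = pos + move
    if nxt == PIT:
        return nxt, cost - 10.0, True  # fell in: large penalty
    if nxt >= GOAL:
        return nxt, cost + 10.0, True  # delayed reward, only at the goal
    return nxt, cost, False


def imagine(pos: int, plan) -> tuple[float, int]:
    """Roll a whole plan through the world model; return (total reward, final position)."""
    total = 0.0
    for action in plan:
        pos, reward, done = world_model(pos, action)
        total += reward
        if done:
            break
    return total, pos


def system1_policy(pos: int) -> str:
    """Reactive habit: always take the cheapest move toward the goal."""
    return "step"


def system2_plan(pos: int, horizon: int = 4) -> tuple[str, ...]:
    """Deliberate planning: simulate every action sequence, keep the best-scoring one."""
    return max(product(ACTIONS, repeat=horizon), key=lambda plan: imagine(pos, plan)[0])


if __name__ == "__main__":
    habit = [system1_policy(p) for p in range(3)]  # positions visited by stepping: 0, 1, 2
    print("System 1:", habit, "->", imagine(0, habit))
    plan = system2_plan(0)
    print("System 2:", plan, "->", imagine(0, plan))
```

The reactive habit scores -13.0 and ends in the pit, while the planner finds ('step', 'step', 'jump', 'step'), which reaches the goal with a total of 5.0. The payoff only becomes visible because the planner looks past the immediate step to the delayed reward.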

4. The Technical Engine: Latent Space Mastery

Underneath the impressive demos is profound mathematical progress. The ability to generate interactive worlds relies on mastering latent space exploration. Latent space is the AI’s compressed, internal language for describing the world. In a standard image model, this space mainly encodes appearance: colors, textures, and shapes. In Genie, it must also capture physics, object permanence, and temporal relationships (what happens when I push this block?).

The architecture must allow the AI to "walk" smoothly through this latent space, so that a small change in the input (for example, moving a door slightly) produces a small, coherent change in the generated world rather than chaos. Stable manipulation of such high-dimensional latent spaces is likely to be a hallmark of next-generation generative AI.
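The smoothness requirement can be illustrated with a toy decoder in Python. The decoder below is hypothetical (two latent dimensions mapped through `tanh` and a sigmoid to a door's horizontal position and how far open it is); real world models use learned, far higher-dimensional decoders. Because both functions have bounded slope (they are Lipschitz continuous), walking the latent vector in small steps produces correspondingly small changes in the decoded scene, and smaller steps mean smaller jumps.

```python
import math


def decode(z: list[float]) -> tuple[float, float]:
    """Hypothetical smooth decoder: 2-D latent -> (door x-position, door openness)."""
    door_x = math.tanh(z[0] + 0.5 * z[1])     # in (-1, 1)
    openness = 1.0 / (1.0 + math.exp(-z[1]))  # sigmoid, in (0, 1)
    return door_x, openness


def lerp(a: list[float], b: list[float], t: float) -> list[float]:
    """Linear interpolation between latent vectors a (t=0) and b (t=1)."""
    return [ai + t * (bi - ai) for ai, bi in zip(a, b)]


def latent_walk(z_start: list[float], z_end: list[float], steps: int) -> list[tuple[float, float]]:
    """Decode evenly spaced points on the straight line from z_start to z_end."""
    return [decode(lerp(z_start, z_end, i / steps)) for i in range(steps + 1)]


def max_jump(frames: list[tuple[float, float]]) -> float:
    """Largest change in any decoded feature between consecutive frames."""
    return max(
        max(abs(a - b) for a, b in zip(f0, f1))
        for f0, f1 in zip(frames, frames[1:])
    )


if __name__ == "__main__":
    for steps in (10, 100):
        frames = latent_walk([0.0, 0.0], [1.0, 1.0], steps)
        print(steps, "steps -> largest per-step change:", round(max_jump(frames), 4))
```

With a step of 0.1 in each latent coordinate, the door position can move by at most 0.15 per frame; a decoder with a discontinuity would break that guarantee, which is the "chaos" a well-behaved latent space avoids.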

Future Implications: How This Technology Will Reshape Industries

The convergence represented by DeepMind Genie has profound implications spanning simulation, design, and autonomy.

For AI Researchers and Developers: The Shift to Agents

The immediate impact is the formalization of the Agentic AI paradigm. We are moving past models that simply answer questions to models that execute complex, multi-stage goals within dynamic environments. Developers will need to shift focus from prompt engineering for static outputs to designing reward structures and goal hierarchies for long-running agents.

Actionable Insight: Teams should begin experimenting with connecting existing high-level LLM reasoning systems (for goal decomposition) with specialized world models (for physics and environmental navigation) to create rudimentary agents capable of planning tasks that require spatial understanding.
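As a starting point, the division of labor might look like the Python sketch below. `decompose_goal` is a hypothetical stand-in for an LLM call (a lookup table here, so the example runs offline), and `world_model` is a trivially hand-written grid model rather than a learned one. What carries over is the structure: the high-level planner emits subgoals, and a low-level controller picks each action by asking the world model which move it *predicts* will bring it closest to the current subgoal.

```python
Pos = tuple[int, int]
MOVES = {"N": (0, 1), "S": (0, -1), "E": (1, 0), "W": (-1, 0)}


def decompose_goal(goal: str) -> list[Pos]:
    """Hypothetical stand-in for an LLM that turns a goal into waypoint subgoals."""
    canned_plans = {"fetch the box from the shelf": [(0, 3), (4, 3), (0, 0)]}
    return canned_plans[goal]


def world_model(pos: Pos, action: str) -> Pos:
    """Toy world model: predicts the next position after an action."""
    dx, dy = MOVES[action]
    return (pos[0] + dx, pos[1] + dy)


def distance(a: Pos, b: Pos) -> int:
    return abs(a[0] - b[0]) + abs(a[1] - b[1])  # Manhattan distance on the grid


def reach(pos: Pos, target: Pos, max_steps: int = 50) -> tuple[Pos, list[str]]:
    """Low-level control: one-step lookahead through the world model toward a subgoal."""
    actions: list[str] = []
    while pos != target and len(actions) < max_steps:
        best = min(MOVES, key=lambda a: distance(world_model(pos, a), target))
        pos = world_model(pos, best)
        actions.append(best)
    return pos, actions


def run_agent(goal: str, start: Pos = (0, 0)) -> tuple[Pos, list[str]]:
    """High level decomposes the goal; low level executes each subgoal in turn."""
    pos, trace = start, []
    for waypoint in decompose_goal(goal):
        pos, actions = reach(pos, waypoint)
        trace.extend(actions)
    return pos, trace


if __name__ == "__main__":
    final_pos, trace = run_agent("fetch the box from the shelf")
    print(final_pos, "".join(trace))
```

Swapping the lookup table for a real LLM and the grid for a learned model changes the components but not the interface between them, which is what makes this split a useful place to start experimenting.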

For Business and Simulation Industries: Hyper-Realistic Digital Twins

The ability to generate functional 3D simulations on demand will revolutionize training and testing. Consider high-stakes fields:

- Autonomous Vehicles: Instead of relying solely on collected road data, companies could generate a practically unlimited stream of edge cases (rare weather, unpredictable pedestrians, complex construction zones), all interactive and physically plausible, dramatically accelerating safety validation.

- Manufacturing and Logistics: Warehouses, factories, and supply chains can be modeled as digital twins that can be stressed and optimized by AI agents before any physical changes are made.

- Product Design: Designers can prompt for "a minimalist chair made of recycled aluminum that can hold 400 lbs" and receive not just a render, but a simulated model that can immediately be put through basic physics checks such as a load test.
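The "basic physics checks" in the chair example can be as simple as the back-of-the-envelope calculation below, written in Python. Every number is an assumption for illustration: a hypothetical design with four hollow round legs (25 mm outer diameter, 1.5 mm wall, 450 mm long), the 400 lb load shared evenly and applied statically, and typical handbook values for 6061-T6 aluminum (yield strength about 276 MPa, Young's modulus about 69 GPa) in place of whatever recycled alloy is actually used. It checks the two classic failure modes for a compressed leg, yielding and Euler buckling, treating each leg conservatively as a fixed-free column (effective length factor K = 2).

```python
import math

LBF_TO_N = 4.448222   # newtons per pound-force
YIELD_MPA = 276.0     # assumed: typical 6061-T6 yield strength
E_MPA = 69_000.0      # assumed: typical Young's modulus for aluminum


def check_leg(outer_mm: float, wall_mm: float, length_mm: float,
              load_lbf: float, legs: int = 4, k: float = 2.0) -> dict[str, float]:
    """Safety factors for one hollow round leg under a shared static load."""
    load_n = load_lbf * LBF_TO_N / legs                          # load per leg (N)
    inner_mm = outer_mm - 2 * wall_mm
    area = math.pi / 4 * (outer_mm**2 - inner_mm**2)             # cross-section (mm^2)
    inertia = math.pi / 64 * (outer_mm**4 - inner_mm**4)         # second moment of area (mm^4)
    stress = load_n / area                                       # axial stress (MPa = N/mm^2)
    p_crit = math.pi**2 * E_MPA * inertia / (k * length_mm)**2   # Euler buckling load (N)
    return {
        "stress_mpa": stress,
        "yield_safety_factor": YIELD_MPA / stress,
        "buckling_safety_factor": p_crit / load_n,
    }


if __name__ == "__main__":
    result = check_leg(outer_mm=25.0, wall_mm=1.5, length_mm=450.0, load_lbf=400.0)
    for name, value in result.items():
        print(f"{name}: {value:.1f}")
```

With these assumed numbers the legs pass comfortably, with a yield safety factor near 69 and a buckling safety factor near 14.5; buckling, not yielding, is the binding constraint for slender legs like these.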

For Society: Content Creation and the Metaverse

While the technical implications are vast, the societal implications for digital media creation are staggering. If generating a functional, navigable digital world takes minutes instead of months, the barrier to entry for creating metaverse content, complex video game levels, or interactive educational tools collapses. We are moving toward a world where every user can be a world-builder simply by describing their vision.

However, this raises critical questions about reality and deception. If an AI can generate a coherent, interactive video of an event that never occurred, distinguishing real footage from synthetic simulation, already difficult with 2D deepfakes, becomes considerably harder.

Overcoming the Next Hurdles

While Genie is a triumph, several challenges remain before this technology becomes ubiquitous:

- Scalability and Fidelity: Current models often deal with simplified environments. Scaling Genie's latent space to accurately model the complexity of the real world—with its myriad materials, subtle physical interactions, and diverse agents—is a massive computational undertaking.

- Integration with Real-World Sensors: For true embodied intelligence, the world model must seamlessly interpret data from real cameras and sensors, translating the messy reality into the clean latent space it understands, and vice-versa.

- Long-Horizon Planning: World models excel at short-term prediction. Proving that they can maintain causal coherence and strategic planning over very long sequences of actions (hours or days) remains a key research frontier.
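Why long horizons are hard can be shown with a few lines of Python. In this toy setup (all values hypothetical) the true dynamics simply hold a quantity steady, x[t+1] = 1.0 * x[t], while the learned model is off by just 0.1% per step. Because each prediction is fed back in as the next input, the error compounds: negligible after 10 steps, larger than the quantity itself after 1,000.

```python
def rollout(a: float, x0: float, steps: int) -> float:
    """Apply x <- a * x repeatedly, feeding each prediction back in as the next input."""
    x = x0
    for _ in range(steps):
        x = a * x
    return x


def relative_error(a_true: float, a_model: float, steps: int, x0: float = 1.0) -> float:
    """Relative gap between the model's open-loop rollout and the true trajectory."""
    true_x = rollout(a_true, x0, steps)
    pred_x = rollout(a_model, x0, steps)
    return abs(pred_x - true_x) / abs(true_x)


if __name__ == "__main__":
    # Hypothetical: the model's dynamics are 0.1% off per step.
    for steps in (1, 10, 100, 1000):
        err = relative_error(a_true=1.0, a_model=1.001, steps=steps)
        print(f"{steps:>5} steps: {err:.2%} off")
```

A single-step prediction stays about 0.1% off no matter how long the task runs, which is one reason many systems re-anchor the model on fresh observations and replan frequently rather than trusting one long rollout.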

Conclusion: The Birth of Interactive Intelligence

DeepMind Genie, viewed through the lens of concurrent research in 3D generation and embodied AI, signals a definitive turning point. AI is graduating from being an observer and narrator of data to becoming an active participant in synthetic realities. The development of interactive world models means that the focus of AI innovation is rapidly pivoting from *what* the AI knows to *what* the AI can do within an environment it helps create.

This shift mandates a new focus for technologists and business leaders alike: preparing for a future where intelligence is measured not by the eloquence of its language, but by the complexity and utility of the worlds it can build and navigate.

Contextual References for Further Reading

To fully appreciate the scope of this development, consider the parallel progress in these specialized areas:

- For insights on the competitive landscape and goals of embodied agents: Look for recent developments concerning Embodied AI Agents and Simulation Environments. The progress of models like Google DeepMind’s RT-2 shows the race to connect linguistic reasoning with physical action.

- To understand the infrastructure required for realistic digital environments, research into Generative AI for 3D Content Creation and Simulation is essential, often featuring breakthroughs from graphics leaders like NVIDIA on scene representation.

- For the theoretical justification of this architectural choice, exploring discussions on World Models vs. Large Language Models (LLMs) for Reasoning highlights why explicit physics modeling is needed for robust planning.

- Technically, the innovation lies in learning well-structured latent representations. Deep dives into The Role of Latent Space Exploration in Next-Generation AI reveal the mathematical keys to creating coherent, navigable simulated realities.