GPT-5.3 Instant: Why AI Reliability and Speed Are the New Battleground for Frictionless Intelligence

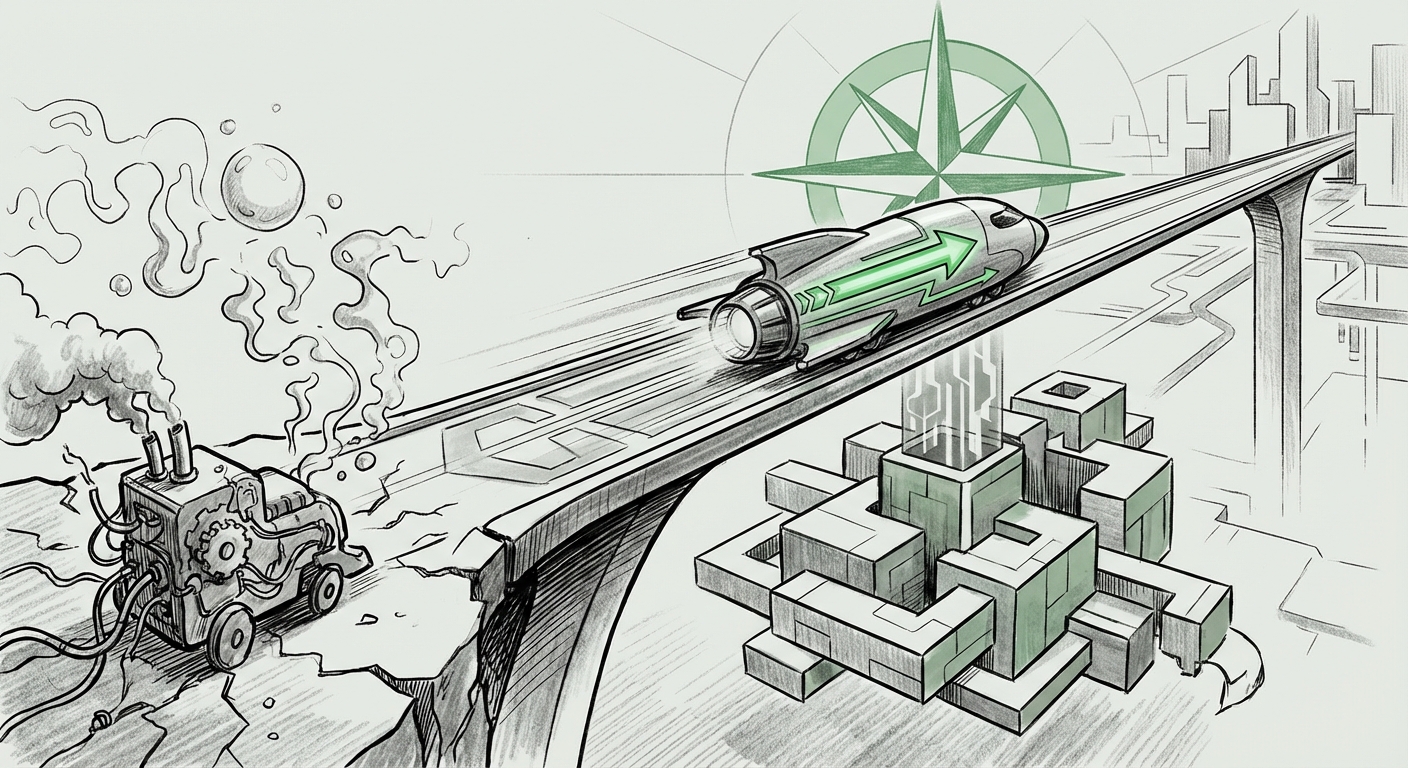

The artificial intelligence landscape is constantly shifting, but a recent announcement from OpenAI—the release of GPT-5.3 Instant—suggests a powerful new direction. We are moving beyond the era defined solely by the size of the model (how many parameters it has) into an era focused on *usability, trustworthiness, and speed*. This model's explicit goal is twofold: delivering smoother everyday conversations and drastically improving the accuracy of responses derived from live web searches by minimizing "hallucinations."

For years, the power of LLMs was hampered by two core issues: they sometimes sounded robotic or awkward in casual back-and-forth, and, more dangerously, they would confidently state falsehoods, especially when pulling in current facts. GPT-5.3 Instant directly targets these pain points, signaling that the next leap in AI isn't just about intelligence, but about integrating AI into our daily workflows without friction.

The Pivot: From Intelligence Quotient to Trust Quotient

Imagine trying to use a calculator that sometimes gave you the wrong answer just because it felt like it. That’s what AI hallucination has often felt like in high-stakes scenarios. While earlier models excelled at creative writing or complex reasoning, their unreliability when asked, "What is the closing stock price today?" created a massive barrier for business adoption.

GPT-5.3 Instant reframes the competitive metric. It suggests that a slightly less powerful (in raw complexity) but demonstrably trustworthy and *fast* model is superior for real-world utility. This shift is best understood by examining the underlying technical goals, which we explored through targeted analysis.

1. The Technical Hurdle: Mastering Grounding and Retrieval

How does a model get better at not lying when it looks things up? This is where technical advancements become essential. Our analysis of LLM hallucination-reduction techniques points directly to improvements in how these models use external data. The key technology here is often an advanced form of Retrieval-Augmented Generation (RAG).

Think of RAG as an open-book exam for the AI. Instead of relying only on what it memorized during training (which is often months or years old), the model is forced to first retrieve relevant, up-to-the-minute documents from the web or an internal database, and then use those specific snippets to formulate its answer. GPT-5.3 Instant suggests a sophisticated evolution of this process:

- Smarter Retrieval: The model is better at deciding when it needs to search and what search terms to use.

- Better Cross-Referencing: It is more adept at checking conflicting information found across multiple sources, prioritizing the most authoritative data.

- Citation Integrity: The resulting answer is more tightly "grounded" in the retrieved facts, making the claim verifiable and reducing the chance of mixing internal knowledge with external data inaccurately.
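
To make the open-book idea concrete, here is a minimal sketch of the retrieve-then-ground pattern. It is an illustration under stated assumptions, not a description of how GPT-5.3 Instant works internally: the `DOCUMENTS` corpus, the token-overlap scoring, and the function names `retrieve` and `build_grounded_prompt` are all hypothetical, and the final call to a language model is left out.

```python
import re
from collections import Counter

# Hypothetical mini-corpus standing in for web pages or internal documents.
DOCUMENTS = {
    "pricing": "The Pro plan costs 20 dollars per month and includes priority support.",
    "shipping-policy": "Standard shipping takes five business days; express shipping takes two.",
    "returns-policy": "Items can be returned within 30 days of delivery for a full refund.",
}


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def retrieve(query: str, documents: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    """Return up to k (doc_id, text) pairs ranked by shared-token count.

    Token overlap is a toy relevance score (an assumption for this sketch);
    real systems typically use embeddings or a search index instead.
    """
    query_tokens = Counter(tokenize(query))
    scored = []
    for doc_id, text in documents.items():
        overlap = sum((query_tokens & Counter(tokenize(text))).values())
        if overlap > 0:
            scored.append((overlap, doc_id, text))
    # Highest overlap first; ties broken alphabetically by document id.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [(doc_id, text) for _, doc_id, text in scored[:k]]


def build_grounded_prompt(query: str, passages: list[tuple[str, str]]) -> str:
    """Assemble a prompt that restricts the answer to the numbered passages."""
    if not passages:
        return f"No sources were found for: {query}\nSay that you do not know."
    sources = "\n".join(
        f"[{i}] ({doc_id}) {text}" for i, (doc_id, text) in enumerate(passages, start=1)
    )
    return (
        "Answer using only the sources below and cite them as [n].\n"
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    question = "How long does express shipping take?"
    print(build_grounded_prompt(question, retrieve(question, DOCUMENTS)))
```

The design point is the separation of concerns: retrieval decides which passages are relevant, and the prompt then constrains the model to those numbered passages so that each claim in the answer can be traced back to a source.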

For ML Engineers and Researchers, this move is confirmation: the focus is shifting from better prediction algorithms to better *information synthesis and verification* algorithms.

2. The Conversational Experience: Latency and Fluency

The "smoother everyday conversations" goal touches on both speed (latency) and naturalness (fluency). Our look at AI latency and user-trust benchmarks highlights why speed matters so much.

Humans are accustomed to near-instantaneous responses in dialogue. When an AI pauses for three or four seconds to construct a sentence, the user feels like they are waiting on a slow computer, not having a fluid conversation. Long-standing usability guidance (Jakob Nielsen's response-time limits) holds that a response within about 0.1 seconds feels instantaneous, while delays beyond about 1 second begin to interrupt the user's flow of thought. GPT-5.3 Instant aims for a performance profile that feels instantaneous. This is critical because:

- Reduced Cognitive Load: Fast responses mean the user doesn't have to hold the context of their question while waiting, leading to more complex and nuanced follow-up questions.

- Accessibility: For non-technical users, a model that 'just works' instantly feels less like software and more like an intuitive tool.
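
One way to turn "feels instantaneous" into something testable is to time repeated calls and compare a high percentile against a budget. The sketch below assumes a nearest-rank percentile and a hypothetical one-second budget; `measure_latencies` accepts any zero-argument callable, so the model call itself is a placeholder you would supply.

```python
import math
import time
from typing import Callable


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile of the samples (pct between 0 and 100)."""
    if not samples:
        raise ValueError("at least one sample is required")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def measure_latencies(call: Callable[[], object], runs: int = 20) -> list[float]:
    """Time `call` repeatedly and return wall-clock durations in seconds."""
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        latencies.append(time.perf_counter() - start)
    return latencies


def within_budget(samples: list[float], budget_seconds: float, pct: float = 95.0) -> bool:
    """True if the chosen percentile of the samples meets the latency budget."""
    return percentile(samples, pct) <= budget_seconds


if __name__ == "__main__":
    # Placeholder workload; replace with a real request to the model under test.
    samples = measure_latencies(lambda: time.sleep(0.01), runs=10)
    print(f"p50={percentile(samples, 50):.3f}s p95={percentile(samples, 95):.3f}s")
    print("within 1s budget:", within_budget(samples, budget_seconds=1.0))
```

Tracking the 95th percentile instead of the average matters here because a conversation feels slow when even an occasional reply stalls, and an average can hide those stalls.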

This blend of low latency and high conversational quality means that AI assistants are finally ready to transition from being novelty tools to becoming core digital collaborators.

The Competitive Arena: Speed, Trust, and the Search Wars

OpenAI’s release does not occur in a vacuum. The AI industry is engaged in a brutal platform war, and the success of GPT-5.3 Instant directly challenges incumbent leaders, most notably Google.

Comparing Google Gemini's accuracy improvements with OpenAI's Instant release reveals the intensity of this competition. For Google, whose identity is intrinsically tied to the authoritative delivery of web information via Search, the idea of a competitor offering faster, more reliable web-grounded answers is an existential threat.

The battleground is now defined by trust in real-time data:

- The Search Generative Experience (SGE): Google is embedding generative AI directly into search results, a feature since rolled out more broadly as AI Overviews. If GPT-5.3 Instant proves significantly better at avoiding falsehoods while maintaining high speed, users accessing information through third-party platforms (like Microsoft Copilot, which often runs on OpenAI models) could find superior results compared to native Google Search features.

- Platform Lock-in: The platform that delivers the most reliable, integrated experience across productivity suites (email, documents, spreadsheets) and search will achieve significant user lock-in. Speed and truth are the keys to winning this integration war.

This competitive pressure forces all major players—Anthropic, Google, Meta—to accelerate their own efforts in grounding and low-latency deployment. The user benefits immensely from this rivalry.

Implications for Business: The Trust Barrier Crumbles

The most profound impact of GPT-5.3 Instant will be felt in the enterprise. As highlighted by research on the "Impact of conversational fluency on enterprise AI adoption," the biggest hurdle for CIOs deploying generative AI wasn't compute cost; it was the risk associated with incorrect outputs.

For businesses, reliability translates directly into regulatory compliance, customer satisfaction, and operational efficiency. Consider these use cases:

Finance and Legal Compliance

In regulated industries, an AI hallucinating a financial report figure or misinterpreting a legal precedent is unacceptable. When models are demonstrably grounded in verifiable, real-time documents, they become powerful drafting and summarization assistants rather than just risky content generators. The speed ensures that analysts can review and refine documents much faster than before.

Customer Experience (CX)

In customer service chatbots, frustration mounts rapidly when the bot cannot resolve an issue or provides outdated information about pricing or shipping policies. GPT-5.3 Instant’s focus on fluency and factual grounding means chatbots can handle complex, multi-turn support conversations more autonomously, elevating the customer experience while lowering operational costs.

Internal Knowledge Management

Many large companies struggle with fragmented knowledge spread across SharePoint, Slack, and shared drives. An LLM that can instantly and accurately synthesize answers from this messy internal corpus, without inventing policies or procedures, transforms internal search from a frustrating chore into immediate productivity.

Actionable Insights for Adopting AI Today

For businesses evaluating their AI strategy, the release of models like GPT-5.3 Instant offers clear guidance on where to invest and what to prioritize:

- Prioritize Grounding Over Generalism: When testing new models, do not focus solely on creative benchmarks. Demand rigorous testing on tasks that require real-time data lookup (e.g., pricing comparisons, current news summaries). The ability to cite sources accurately should be non-negotiable.

- Benchmark Latency Critically: Establish internal benchmarks for acceptable response times based on the task. A creative prompt can afford a few extra seconds, but a real-time customer interaction cannot. Look for vendor SLAs (Service Level Agreements) that guarantee sub-second response times for production workloads.

- Invest in RAG Infrastructure Now: If your company has proprietary, high-value data (customer records, technical manuals), ensure your AI integration strategy focuses heavily on building robust RAG pipelines. The success of GPT-5.3 Instant suggests the best performance will come from models augmented by *your* trusted data, not just the open web.

- Embrace Conversational Design: Begin re-designing workflows around conversational interaction. The expectation for AI is shifting from an API endpoint to a proactive, articulate team member. Fluency reduces the need for complex prompt engineering from end-users.
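
As a starting point for the grounding tests recommended above, the sketch below checks the structure of citations in a model's answer. It assumes a hypothetical convention in which the model cites sources as bracketed numbers such as `[1]`; the function name `check_citations` and the report keys are illustrative. It verifies only that citations exist and point at real sources, not that the source actually supports the claim, which still requires human or model-assisted review.

```python
import re

# Assumed citation convention: bracketed source numbers such as [1] or [2].
CITATION_PATTERN = re.compile(r"\[(\d+)\]")


def check_citations(answer: str, num_sources: int) -> dict[str, list]:
    """Report sentences with no citation and citations to nonexistent sources."""
    # Split on whitespace that follows sentence-ending punctuation.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]
    uncited = [s for s in sentences if not CITATION_PATTERN.search(s)]
    cited_numbers = {int(n) for n in CITATION_PATTERN.findall(answer)}
    invalid = sorted(n for n in cited_numbers if not 1 <= n <= num_sources)
    return {"uncited_sentences": uncited, "invalid_citations": invalid}


if __name__ == "__main__":
    answer = (
        "Express shipping takes two days [1]. "
        "Returns are accepted within 30 days [3]. "
        "It is the best policy around."
    )
    # Only two sources were supplied, so [3] points at nothing.
    print(check_citations(answer, num_sources=2))
```

A check like this is cheap enough to run on every response in an evaluation set, which makes it a reasonable first filter before more expensive fact verification.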

The Future is Frictionless, But Built on Trust

The narrative around AI is maturing. We are past the initial shock of generative capability and entering the phase of disciplined deployment. GPT-5.3 Instant is a powerful indicator that the industry understands that the true value of AI isn't in what it can generate, but in what it can generate reliably and instantly.

This drive toward Frictionless AI—where the technology seamlessly blends into human workflows without demanding constant correction or enduring long pauses—will redefine digital interaction. The future AI assistant won't just be smart; it will be fast enough to keep up with your thoughts and honest enough to earn your unwavering trust in the deluge of real-time information.