GPT-5.3 Instant: The Pivot from Raw Power to Reliable, Real-Time Utility in AI

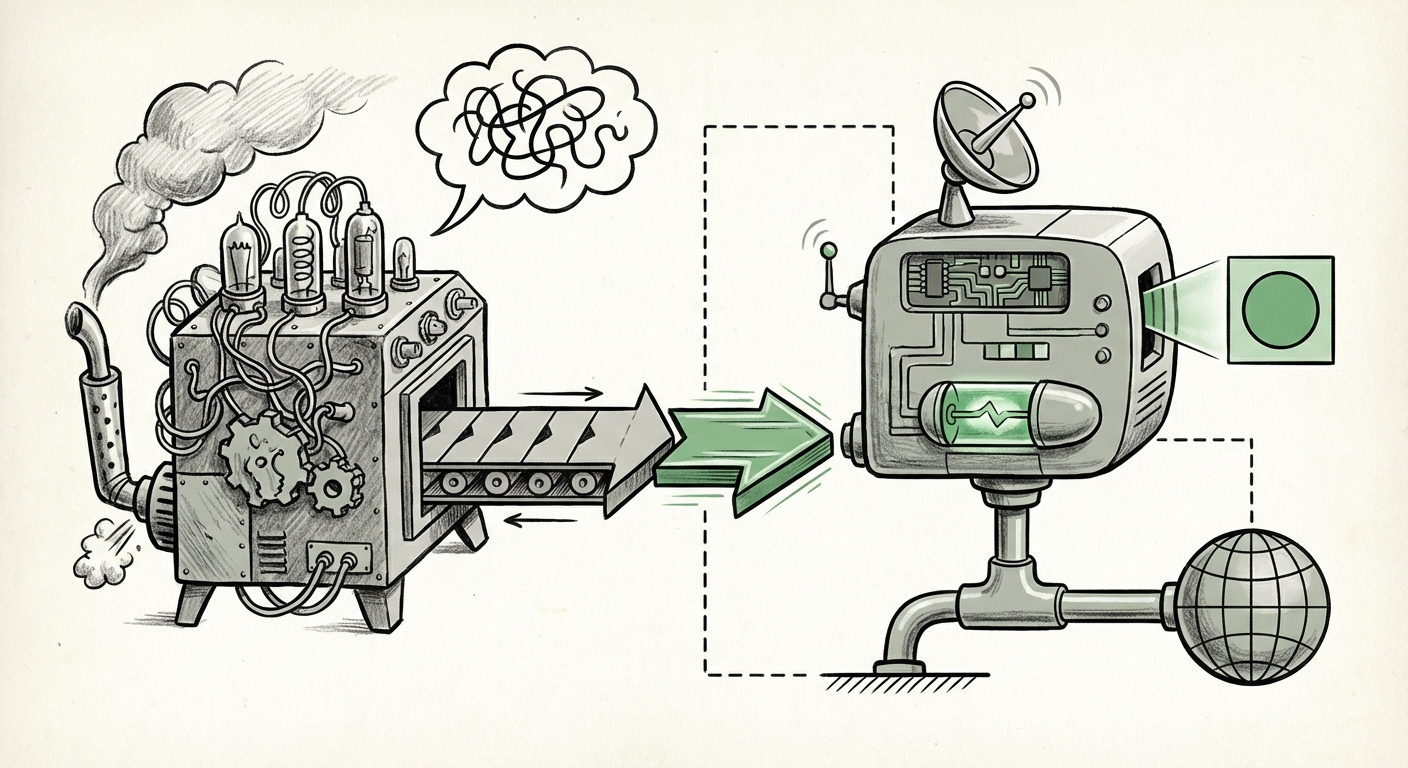

The landscape of Large Language Models (LLMs) has long been defined by a race for sheer scale: more parameters, more data, and more astonishing, albeit sometimes inaccurate, outputs. However, the recent announcement of GPT-5.3 Instant signals a vital maturation point. This is not just another iteration; it represents a decisive market pivot toward utility, speed, and—most critically—trustworthiness, especially concerning web-integrated tasks.

As technology analysts, we must look beyond the headline number. The focus on "smoother everyday conversations" and reduced hallucination, specifically during search functions, indicates that the industry is moving AI out of the laboratory showcase and into the mission-critical workflows where reliability is paramount. This article examines the technical underpinnings of "Instant," the growing imperative to curb fabrication, and what this shift means for the future of enterprise and consumer AI.

The Engineering Behind "Instant": Latency as the New Frontier

For the average user, an AI that takes five seconds to answer a simple factual question feels slow; for a high-frequency trading application or a live customer service agent, it's unusable. The term "Instant" suggests that OpenAI has prioritized inference optimization: reducing the time and compute the model needs to generate an answer once it has received a prompt.

From Benchmarks to Real-Time Interaction

The pursuit of lower latency is the industry’s current technical obsession. It requires complex engineering feats that are often less glamorous than developing a new trillion-parameter model, but far more impactful for everyday adoption.

- Speculative Decoding: This technique uses a smaller, faster draft model to predict the next few tokens (words or word fragments), which the larger model then verifies in parallel. When the draft's predictions are accepted, the system emits several tokens per large-model pass instead of one, which is much faster than purely sequential generation.

- Quantization and Sparsity: Engineers are finding ways to shrink the model footprint by using fewer bits to represent weights (quantization) or by identifying and removing unimportant connections (sparsity), without significantly damaging output quality. A lighter model runs faster on existing hardware.

- Hardware Optimization: Pairing accelerators (GPUs, or custom silicon such as TPUs) with model architectures tuned for them helps ensure that available compute is used efficiently.
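To make the first technique in the list above concrete, here is a minimal sketch of greedy speculative decoding. It is an illustration under stated assumptions, not a description of how GPT-5.3 Instant works: `speculative_decode`, `toy_target`, and `toy_draft` are hypothetical names, the "models" are toy functions over integer tokens, and the verification loop runs sequentially where a real system would batch it into one forward pass of the large model.

```python
from typing import Callable, List

Token = int
# Hypothetical interface: given the tokens so far, return the greedy next token.
NextTokenFn = Callable[[List[Token]], Token]


def greedy_decode(next_token: NextTokenFn, prompt: List[Token], max_new_tokens: int) -> List[Token]:
    """Baseline: one model call per generated token."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        tokens.append(next_token(tokens))
    return tokens


def speculative_decode(
    target_next: NextTokenFn,
    draft_next: NextTokenFn,
    prompt: List[Token],
    max_new_tokens: int,
    draft_len: int = 4,
) -> List[Token]:
    """Greedy speculative decoding: the draft proposes, the target verifies."""
    tokens = list(prompt)
    goal = len(prompt) + max_new_tokens
    while len(tokens) < goal:
        # 1. The cheap draft model proposes up to draft_len tokens.
        k = min(draft_len, goal - len(tokens))
        draft: List[Token] = []
        for _ in range(k):
            draft.append(draft_next(tokens + draft))

        # 2. The target model checks every proposed position. A real system
        #    would do this in a single batched pass; the loop is for clarity.
        accepted: List[Token] = []
        for i in range(k):
            expected = target_next(tokens + draft[:i])
            accepted.append(expected)
            if expected != draft[i]:
                break  # first mismatch: keep the target's token, drop the rest
        else:
            # Every draft token matched, so the target's pass also yields
            # one extra token, if there is still room for it.
            if len(tokens) + len(accepted) < goal:
                accepted.append(target_next(tokens + accepted))
        tokens.extend(accepted)
    return tokens


def toy_target(context: List[Token]) -> Token:
    """Toy 'large' model: counts upward modulo 10."""
    return (context[-1] + 1) % 10


def toy_draft(context: List[Token]) -> Token:
    """Toy 'small' model: agrees with the target except after token 4."""
    return 0 if context[-1] == 4 else (context[-1] + 1) % 10
```

In this greedy variant the output is identical to what the large model would have produced on its own; the speed-up comes only from accepting several draft tokens per verification pass. For example, `speculative_decode(toy_target, toy_draft, [0], 12)` returns the same sequence as `greedy_decode(toy_target, [0], 12)`.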

If independent measurements confirm significant speed gains in real-time applications, the result broadens access to AI. Lower latency allows LLMs to power sophisticated mobile apps, near-instant code completion, and seamless voice interfaces where any delay breaks the sense of natural dialogue.

The Trust Deficit: Why Hallucination Reduction Matters Most

The most significant hurdle for widespread LLM adoption in professional settings has been the “hallucination problem”—when the model confidently generates false or nonsensical information.

GPT-5.3 Instant's specific focus on reduced hallucination *when using web search* highlights the industry’s recognition that LLMs cannot operate effectively as isolated knowledge silos. They must interface with verifiable, external truth.

The Maturation of Retrieval-Augmented Generation (RAG)

This improvement strongly suggests advancements in **Retrieval-Augmented Generation (RAG)** systems. RAG has the LLM perform a web search (or query an internal knowledge base) *first*, and then use the retrieved passages to ground its final answer. Retrieved content is not automatically correct, so the quality of the answer depends on how well the system selects and filters its sources. The "Instant" model likely features a tighter, faster, and more discerning coupling between the retrieval agent and the generative core.
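The retrieve-then-generate flow can be sketched in a few lines. This is a toy illustration, not OpenAI's pipeline: the keyword-overlap scoring stands in for a real search or embedding index, and `retrieve`, `build_prompt`, `answer`, and the `generate` callback are all hypothetical names introduced for the example.

```python
import re
from typing import Callable, List, Set, Tuple

Document = Tuple[str, str]  # (source_id, text); a hypothetical minimal schema


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of alphanumeric terms."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: List[Document], top_k: int = 2) -> List[Document]:
    """Rank documents by how many query terms they share (toy scoring)."""
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(text)), source, text) for source, text in corpus]
    # Drop documents with no overlap so that nothing irrelevant is cited.
    scored = [item for item in scored if item[0] > 0]
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(source, text) for _, source, text in scored[:top_k]]


def build_prompt(query: str, passages: List[Document]) -> str:
    """Put the retrieved passages, tagged with their ids, ahead of the question."""
    context = "\n".join(f"[{source}] {text}" for source, text in passages)
    return (
        "Answer using only the sources below and cite them by id.\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )


def answer(query: str, corpus: List[Document], generate: Callable[[str], str]) -> str:
    """Retrieve first, then generate; decline when nothing relevant is found."""
    passages = retrieve(query, corpus)
    if not passages:
        return "No supporting source found."
    return generate(build_prompt(query, passages))
```

The design choice worth noting is the early return: when retrieval finds nothing, the sketch declines to answer rather than letting the model improvise, which is one simple way a pipeline can trade coverage for fewer fabricated answers.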

We can expect competitors to emphasize better grounding as well. The competitive advantage now lies not just in how well the model generates text, but in how effectively it can cite its sources and reject unreliable data retrieved from the web.

For enterprise risk managers and ethicists, this is a non-negotiable feature. A model that reliably minimizes fabrication drastically lowers the barrier for adoption in regulated industries like finance, law, and healthcare, where factual accuracy is paramount.

The Market Shockwave: Disruption in Search and Conversation

When a leading foundational model improves its search capabilities, the impact ripples across established market segments. GPT-5.3 Instant is directly challenging the hegemony of traditional search engines and transforming what "conversational interface" means.

Confronting the Search Giants

For years, search has been a task of keyword matching and link aggregation. Modern conversational AI promises semantic understanding—understanding the *intent* behind the query and synthesizing a direct answer, rather than presenting ten blue links. When this synthesis is fast ("Instant") and accurate (low hallucination), the value proposition of traditional search diminishes rapidly.

If these gains hold up, the enterprise search market is likely to shift quickly toward LLM-first tools. Businesses will look to integrate these models directly into their documentation, CRM systems, and internal communication platforms, transforming static data access into dynamic knowledge synthesis.

Defining "Smoother Everyday Conversations"

Beyond just search, the emphasis on conversational flow affects every customer interaction layer. A "smoother" conversation implies better context tracking, more human-like pacing, and less robotic repetition or topic derailment. This moves AI from being a clunky chatbot to a genuinely capable digital assistant that can handle multi-turn, nuanced dialogue.

Future Implications: Specialization and Verticalization

The trajectory set by GPT-5.3 Instant—optimized speed and reliability—suggests that future LLM development will specialize significantly.

The Rise of the Optimized Model Family

We are likely moving away from the idea of one monolithic, generalist model that must perform every task optimally. Instead, OpenAI and its competitors will likely release specialized variants:

- The Utility Model (e.g., 5.3 Instant): Optimized for speed, real-time grounding, and general Q&A. The workhorse for consumer applications and standard enterprise tasks.

- The Expert Model (e.g., Hypothetical GPT-5.X Pro): Optimized for deep reasoning, complex creative generation, and multi-step problem-solving, perhaps retaining a higher parameter count but accepting higher latency.

This specialization may also explain the naming: a sub-version like '5.3 Instant' suggests that developers are now tuning models for *specific functional requirements* rather than just broad capability improvements.

Actionable Insights for the Tech Ecosystem

How should the industry react to this pivot toward usable, reliable AI?

For AI Engineers and Developers:

Shift focus from prompt engineering alone to **system engineering**. Building robust applications now requires mastering the integration layer—optimizing RAG pipelines, managing retrieval latency, and implementing verification steps post-generation. Proficiency in fine-tuning models for speed (quantization awareness) will become as important as proficiency in parameter tuning.
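As a small illustration of what "quantization awareness" involves, the sketch below rounds floating-point weights to 8-bit integers with a single shared scale and then measures the error this introduces. It is a simplified symmetric per-tensor scheme written in plain Python; `quantize_int8` and `dequantize` are hypothetical helper names, and production toolchains use more elaborate variants (per-channel scales, calibration data).

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # The scale maps the largest magnitude onto 127; fall back to 1.0 so that
    # an all-zero (or empty) tensor does not cause a division by zero.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.0]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
# Rounding moves each weight by at most half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Each weight now fits in one byte instead of four (for 32-bit floats), at the cost of a rounding error bounded by half of `scale`. Whether that error is acceptable depends on the model and task, which is why it needs to be measured rather than assumed.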

For Business Leaders and Strategists:

This is the moment to accelerate deployment in customer-facing and knowledge-worker roles. The primary risk—inaccurate output—is being aggressively mitigated by providers. Businesses should audit their existing use cases, prioritizing those that require low latency (e.g., real-time recommendations, immediate support) and high factual grounding (e.g., internal documentation querying).

For Society and Governance:

Improved grounding is a step toward AI accountability. When models are forced to rely on external, verifiable sources, the pathways for tracing bias, misinformation, or error become clearer. This development creates an opportunity for regulators and developers to build better audit trails directly into AI-driven information pipelines.

Conclusion: The Age of Frictionless AI

GPT-5.3 Instant is not a revolution in sheer intelligence; it is a revolution in *delivery*. The transition from impressive parlor tricks to indispensable tools requires models that are fast enough to be forgotten and accurate enough to be trusted. By aggressively targeting latency and hallucination, OpenAI is signaling that the next wave of AI value creation will be found in the seamless, invisible integration of these powerful tools into the fabric of daily digital life.

The future of AI is no longer about how smart the model is in isolation, but how reliably it can interact with our complex, noisy, real-world data streams. This focus on utility ensures that AI will finally move from being a fascinating technology to being an essential utility.