The Trust Pivot: Why GPT-5.3 Instant’s Focus on Speed and Accuracy Changes the AI Game

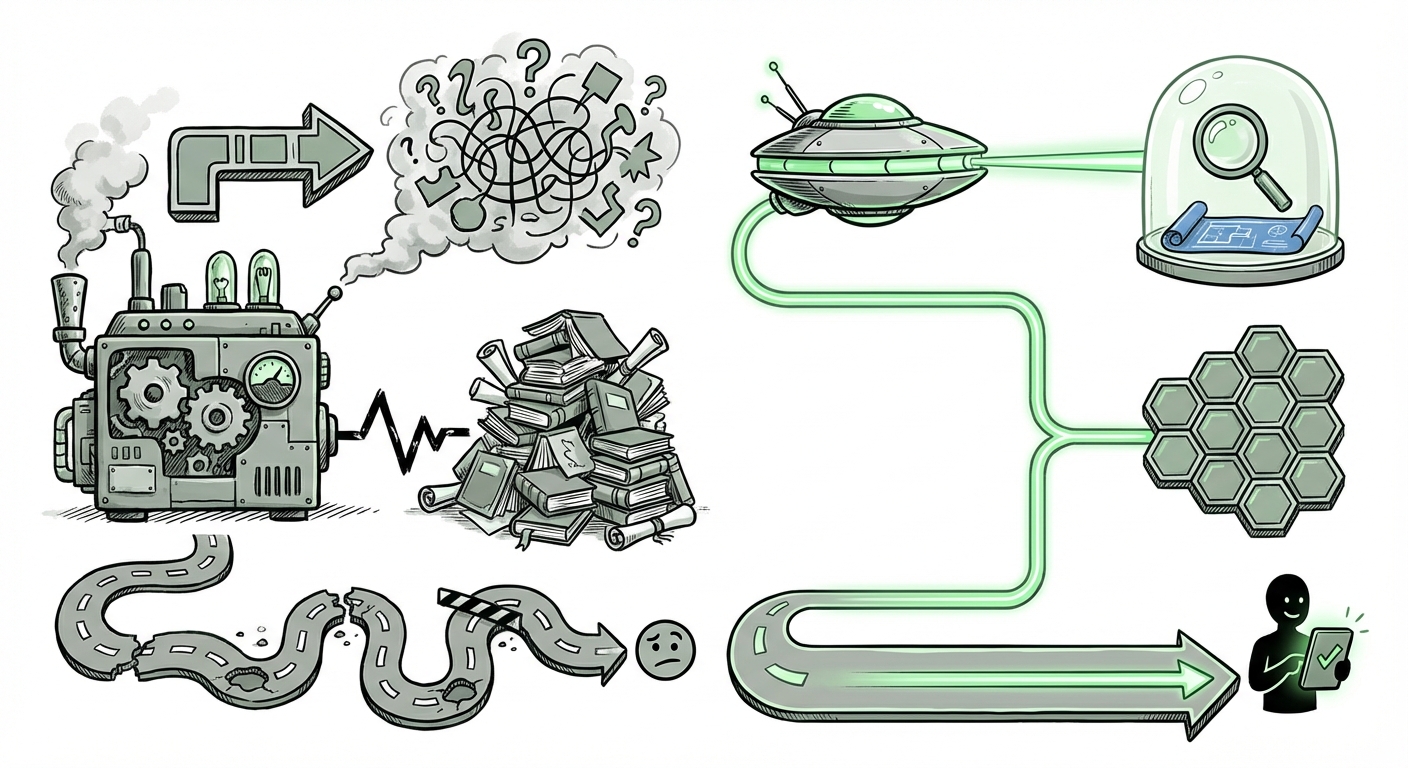

The world of Artificial Intelligence often moves in grand leaps: new architectures, stunning benchmark scores, and models boasting trillions of parameters. But the latest release, **GPT-5.3 Instant**, signals a different and perhaps more significant shift: the pivot from sheer generative *power* to foundational *reliability* and *speed* for everyday use.

OpenAI’s introduction of this model, explicitly designed for "smoother everyday conversations and better search," is not merely an iterative update; it directly addresses the primary friction points hindering mass AI adoption. When an AI tool is slow or, worse, confidently wrong (that is, it hallucinates), its utility plummets. GPT-5.3 Instant suggests the industry is finally moving past the "wow" factor and settling into the "how" of reliable integration.

From Brute Force to Bullet Speed: The "Instant" Imperative

The nomenclature—"Instant"—speaks volumes. In the context of conversational AI, latency is the silent killer of engagement. A slight pause in a conversation, whether with a chatbot or a digital assistant, breaks the natural flow and reminds the user they are interacting with a machine, not a partner. This friction is precisely what stalls user adoption for mission-critical applications.

To achieve true integration into daily workflows, AI must respond near-instantaneously. That likely takes engineering work beyond simply scaling up. Two families of techniques are the plausible candidates behind an "Instant" claim:

- Model Distillation and Quantization: To make a model "instant," it often needs to be smaller or more efficient than its largest predecessor. Distillation takes the knowledge of a massive "teacher" model and compresses it into a smaller, highly optimized "student" model; quantization stores the weights at lower numeric precision, for example 8-bit integers instead of 16-bit floats. Both trade a small amount of accuracy for substantial speed and memory gains, which is crucial for running models on consumer devices or under high server load.

- Optimized Context Handling: In conversational search, context must be managed quickly. If the model is bogged down retrieving and re-evaluating massive amounts of external data on every turn of dialogue, speed suffers. The "Instant" label suggests a highly streamlined Retrieval-Augmented Generation (RAG) pipeline where grounding is fast and integrated into the core generation loop.
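To make the first bullet concrete, here is a minimal sketch of the two ideas in plain Python. Nothing here reflects how GPT-5.3 Instant is actually built; the function names, the temperature of 2.0, and the symmetric 8-bit scheme are illustrative assumptions. The first part computes the classic distillation objective (how far the student's softened predictions are from the teacher's), and the second shows what rounding weights to 8-bit integers looks like.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2  # conventional T^2 scaling of the soft loss

def quantize_int8(weights):
    """Symmetric 8-bit quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    return [round(w / scale) for w in weights], scale

def dequantize(q_weights, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in q_weights]

if __name__ == "__main__":
    teacher = [4.0, 1.0, 0.5]   # hypothetical logits for three tokens
    student = [3.0, 1.5, 0.2]
    print("distillation loss:", distillation_loss(teacher, student))
    q, scale = quantize_int8([0.31, -1.0, 0.07])
    print("int8 weights:", q, "restored:", dequantize(q, scale))
```

The loss is zero when the student matches the teacher exactly and grows as they diverge, which is what training would push down. The quantized weights take a quarter of the space of 32-bit floats, at the cost of a rounding error no larger than half the scale step.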

For developers and MLOps teams, the value here is clear: lower operational costs and the ability to deploy AI in real-time customer service, dynamic tutoring, or gaming applications where milliseconds matter.
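If milliseconds matter, it helps to measure them the way users feel them: by the slow tail, not the average. The sketch below is one simple way to do that with the Python standard library. The `fake_model` function is a hypothetical stand-in for a real model call, and the choice of p50, p95, and p99 is a common convention rather than anything specific to GPT-5.3 Instant.

```python
import statistics
import time

def measure_latencies(call, prompts):
    """Time each call in milliseconds with a monotonic high-resolution clock."""
    latencies = []
    for prompt in prompts:
        start = time.perf_counter()
        call(prompt)
        latencies.append((time.perf_counter() - start) * 1000.0)
    return latencies

def summarize(latencies_ms):
    """Report the median and the tail; needs at least two measurements."""
    cuts = statistics.quantiles(latencies_ms, n=100, method="inclusive")
    return {
        "p50": statistics.median(latencies_ms),
        "p95": cuts[94],  # 95th of the 99 percentile cut points
        "p99": cuts[98],
    }

def fake_model(prompt):
    """Hypothetical stand-in for a real model call."""
    time.sleep(0.001)
    return prompt.upper()

if __name__ == "__main__":
    samples = measure_latencies(fake_model, ["hello"] * 50)
    print(summarize(samples))
```

A model whose median is fast but whose p99 is several times slower will still feel sluggish in a live conversation, which is why the tail numbers deserve a place on the dashboard.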

The Trust Deficit: Why Hallucination Reduction is the New North Star

The second pillar of GPT-5.3 Instant’s design, significantly reducing hallucinations, is arguably the most vital for mass-market acceptance. A hallucination occurs when an AI confidently states something false or nonsensical. While entertaining in early demos, this becomes a severe liability when the AI is used for medical queries, financial advice, or mission-critical business summaries.

This focus confirms what consumer adoption trends suggest: **trust trumps novelty.** Users will tolerate slightly less articulate answers if they are factually grounded. This pursuit of reliability is transforming the underlying AI architecture:

The Technical Fight Against Falsehoods

Competitors across the industry are intensely focused on improving grounding. This is often achieved through better RAG implementations, which steer the LLM to base its response on verifiable, up-to-date external documents rather than relying solely on its internal, potentially outdated, training data. The success of GPT-5.3 Instant will likely depend on how seamlessly and quickly it can cross-reference live web data while maintaining a natural conversational tone.
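The grounding idea is easier to see in miniature. The toy sketch below retrieves the most relevant documents for a question and builds a prompt that tells the model to answer only from those sources and cite them. Everything in it is an assumption for illustration: the three-document corpus is made up, word overlap stands in for a real search index or vector store, and the prompt wording is just one plausible phrasing.

```python
import re

# Hypothetical mini-corpus; a real system would query a search index or vector store.
DOCUMENTS = {
    "boots-review": "This boot is durable and mid-weight according to recent reviews.",
    "tent-guide": "A lightweight tent matters most for long backpacking trips.",
    "boots-care": "Waxing leather hiking boots each season extends their durable life.",
}

def tokenize(text):
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, documents, top_k=2):
    """Rank documents by shared words with the query (a stand-in for real retrieval)."""
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(text)), doc_id)
              for doc_id, text in documents.items()]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
    return [doc_id for _, doc_id in ranked[:top_k]]

def build_grounded_prompt(query, documents, top_k=2):
    """Assemble a prompt that restricts the model to the retrieved, cited sources."""
    doc_ids = retrieve(query, documents, top_k)
    sources = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in doc_ids)
    prompt = (
        "Answer using only the sources below and cite their ids. "
        "If they do not contain the answer, say so.\n"
        f"Sources:\n{sources}\n"
        f"Question: {query}"
    )
    return prompt, doc_ids

if __name__ == "__main__":
    prompt, cited = build_grounded_prompt("durable hiking boots", DOCUMENTS)
    print(prompt)
    print("cited:", cited)
```

Returning the document ids alongside the prompt is the design choice worth noting: it lets the interface show users exactly which sources an answer was built from, whatever the model ends up saying.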

For technical product managers, this means the integration layer—the bridge between the LLM brain and the real-time data—is now the most valuable piece of intellectual property.

Implications for the Future of Search and Information Access

The most profound societal impact of a reliable, conversational search model is the direct confrontation with the established internet search engine model. Traditional search requires users to input keywords, sift through ten blue links, evaluate source credibility, and synthesize the answer themselves.

GPT-5.3 Instant aims to collapse that workflow into a single, intelligent interaction. Instead of searching for "best hiking boots 2024 reviews," a user asks, "What are the three most durable, mid-weight hiking boots recommended this year, considering recent user feedback?" The model instantly synthesizes multiple reviews, cross-references pricing, and presents a distilled, sourced answer.

This transition from *information retrieval* to *knowledge synthesis* challenges the entire digital economy built around ad-supported search result pages. It is the **erosion of the search engine monopoly**, replacing passive link consumption with active, personalized knowledge delivery.

The Competitive Arena

This move also clarifies the competitive positioning against rivals like Anthropic’s Claude, which often emphasizes ethical guardrails and detailed, thorough responses. If OpenAI prioritizes "Instant" fluency, it is targeting the *utility* layer of daily computing, whereas others might prioritize comprehensive safety checks for highly sensitive contexts. These diverging strategies are the key to understanding how the market is segmenting.

Actionable Insights for Businesses and Society

This evolution demands a strategic response from enterprises across all sectors.

For Business Strategists: Embracing Ambient Intelligence

The goal for businesses is no longer simply *deploying* an LLM chatbot; it is embedding reliable AI into every customer-facing and internal process. Since reliability is now paramount:

- Audit Your Knowledge Bases: If your internal AI assistant is built on your company’s documentation, ensure that documentation is current, non-contradictory, and structured for rapid RAG integration. A fast model chained to bad data yields fast, bad answers.

- Prioritize Conversational UX: Invest in testing how users naturally interact with your AI tools. If GPT-5.3 Instant smooths out conversation, your customer service models must keep pace or risk feeling clunky by comparison.

- Re-evaluate Search Spend: As AI provides synthesized answers directly, the need for users to click traditional search ads diminishes. Businesses must begin exploring direct integration strategies where their content is provided contextually by the LLM, rather than waiting for a click.

For Society: Navigating the New Trust Framework

On a societal level, the focus on reduced hallucination necessitates a new social contract with AI. If AI becomes the primary interface for information, the source of truth becomes less visible. While the model claims better grounding, users must remain critically engaged:

- Demand Citation Visibility: Even the best models require transparent sourcing. Future successful tools will prominently display the underlying web pages or documents used to synthesize the answer.

- Adapt to Ambient Computing: We are moving toward a world where AI assistance is always on—in our cars, our homes, and our professional tools. This demands robust privacy frameworks and clear guidelines on when and how these "instant" assistants are listening, processing, and acting on data.

The Road Ahead: Utility Before Genius

The release of GPT-5.3 Instant serves as a powerful market signal. The era of wildly ambitious, often inaccurate, large-scale models is gracefully giving way to the era of refined, highly optimized, and trustworthy utility tools. Speed and accuracy are the new gatekeepers to the mainstream.

For AI analysts, this means our focus must shift. We should be less concerned with theoretical parameter counts and more concerned with latency benchmarks, RAG pipeline efficiency, and rigorous user testing metrics around conversational success rates. The next phase of the AI revolution won't be about what AI *can* generate, but what it can reliably *do* for us, right now, without error.