GPT-5.4 Context and Reasoning Leap: The End of Memory Limits and the Dawn of True AI Planning

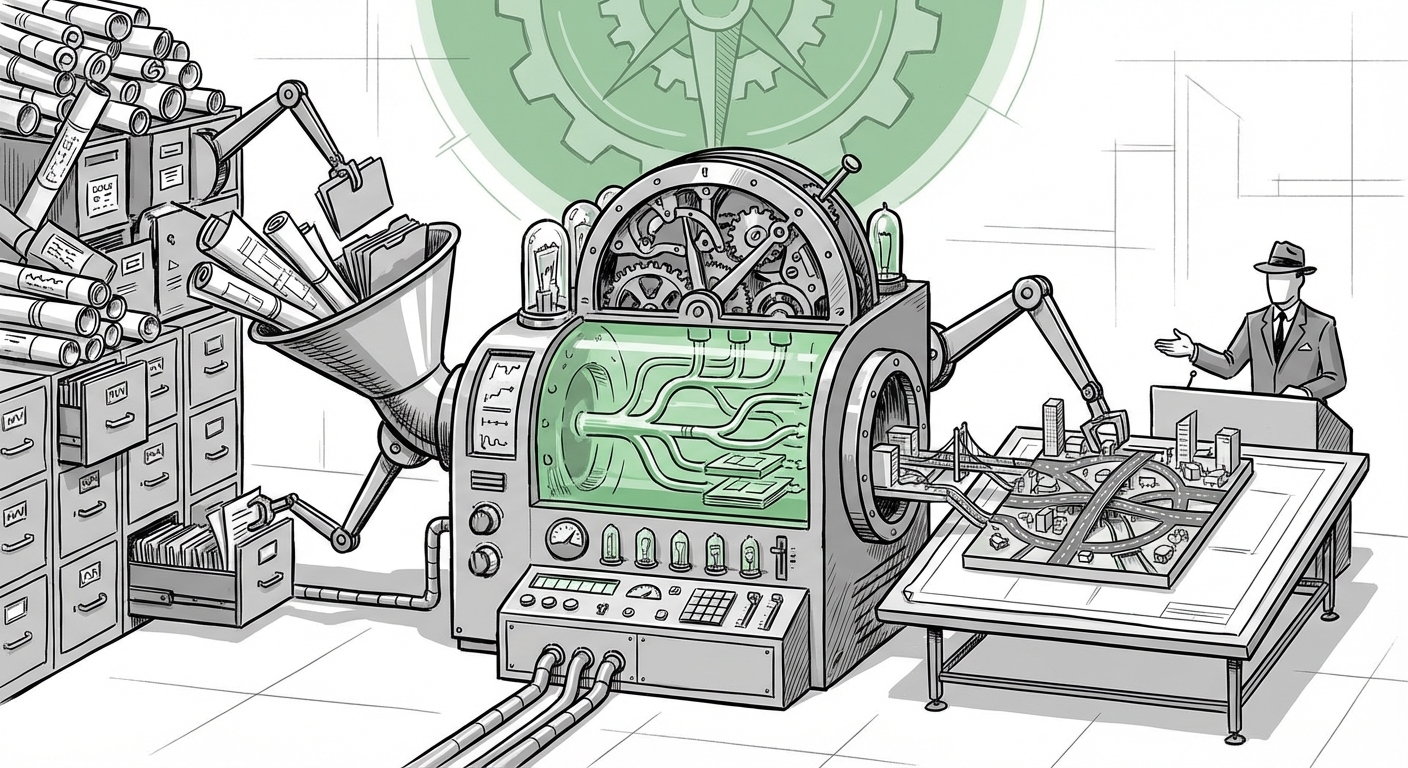

The whisperings around the next significant iteration of large language models (LLMs)—reportedly GPT-5.4—suggest a dual revolution that moves the needle beyond mere speed increases. If the rumors are accurate, we are looking at two headline features that redefine AI capability: a staggering **million-token context window** and a dedicated “extreme reasoning mode.”

These aren't incremental updates; they represent foundational shifts that address the primary pain points of current generation models. To understand the gravity of this rumored release, we must look at the competitive landscape and the established engineering challenges that these features promise to solve. This development doesn't just mean smarter chatbots; it means AI systems capable of handling entire organizational knowledge bases in one glance and tackling problems requiring deep, multi-layered thought.

The Context Barrier Shattered: The Power of a Million Tokens

For years, the context window, the amount of text an AI can "remember" or process at one time, has been the primary bottleneck in applied AI. Older models, like early GPT-4 versions, were limited to 8K or 32K tokens. While iterative updates have expanded this, the jump to a million tokens is transformative. To put this in perspective, a million tokens is roughly 750,000 English words, or about 1,500 pages of text at 500 words per page: enough for an entire codebase or several long, complex legal documents fed to the model simultaneously.
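The page estimate is simple arithmetic and can be checked with a short sketch. Both ratios below are assumptions (a common rule of thumb for English text and a dense page), not figures from any model vendor.

```python
# Rough conversion from a token budget to pages of prose.
# Both constants are rule-of-thumb assumptions, not vendor figures.
WORDS_PER_TOKEN = 0.75   # typical for English text
WORDS_PER_PAGE = 500     # dense, single-spaced page


def tokens_to_pages(tokens: int) -> float:
    """Estimate how many pages of prose a token budget covers."""
    return tokens * WORDS_PER_TOKEN / WORDS_PER_PAGE


print(tokens_to_pages(1_000_000))  # 1500.0
```

Code and non-English text usually tokenize less efficiently, so the same budget covers fewer "pages" of those.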

What a Million Tokens Means for Users (The "Read Everything" AI)

Imagine asking an AI to synthesize the findings of ten scientific papers published over the last five years, cross-reference them against a company’s internal R&D reports from the last decade, and then draft a competitive strategy memo based on all of it—without missing any crucial connections. This is the capability unlocked by massive context. For technical audiences, this means:

- Codebase Analysis: Debugging complex software architectures by feeding the model hundreds of interdependent files simultaneously.

- Comprehensive Legal Review: Analyzing entire contracts or litigation histories in a single prompt, drastically reducing retrieval errors common in Retrieval-Augmented Generation (RAG) systems.

The industry is already seeing this benchmark set by competitors. Google’s Gemini 1.5 Pro has demonstrated remarkable capacity with its 1-million-token window, establishing this massive context as the new expected standard for flagship models [See analysis on Gemini 1.5 Pro's breakthrough context: TechCrunch on Gemini 1.5 Pro]. If GPT-5.4 matches or surpasses this, it signals an intense period of competition where context capacity is no longer a differentiator but a basic requirement for enterprise deployment.

The Engineering Gauntlet: Challenges of Scale

While the use cases are exciting, massive context brings engineering headaches, and scaling this feature reliably is difficult. The primary technical hurdles include:

- Computational Cost: Processing sequences of a million tokens requires far more memory and processing power. Standard self-attention scales quadratically with sequence length, so doubling the input roughly quadruples the attention work.

- Latency: The time taken to generate a response can increase significantly as the model must attend to vast amounts of input data.

- Needle-in-a-Haystack Performance: The model must reliably retrieve relevant data points buried deep within the prompt rather than ignoring them, which remains a persistent challenge even with longer windows.
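To make the quadratic cost concrete, the sketch below counts the entries in one attention head's full score matrix and the memory needed to store it. It assumes naive attention with 2-byte (fp16) entries. Optimized kernels avoid materializing this matrix, but the amount of computation still grows with the square of the sequence length.

```python
def attention_score_entries(seq_len: int) -> int:
    """Entries in one head's full (seq_len x seq_len) attention score matrix."""
    return seq_len * seq_len


def score_matrix_gib(seq_len: int, bytes_per_entry: int = 2) -> float:
    """GiB needed to materialize one such matrix (fp16 entries assumed)."""
    return attention_score_entries(seq_len) * bytes_per_entry / 2**30


for n in (32_000, 128_000, 1_000_000):
    print(f"{n:>9,} tokens -> {score_matrix_gib(n):,.1f} GiB per head per layer")
```

Going from 32,000 to 1,000,000 tokens is about a 31x increase in length but nearly a 1,000x increase in score-matrix entries.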

For businesses relying on these tools, sustained reliability is key. If GPT-5.4 can match GPT-5.2’s reliability at the larger context size, it solves a major adoption barrier for high-stakes enterprise applications.
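One way teams probe this kind of reliability is a needle-in-a-haystack test: hide a known fact at different depths in a long prompt and check whether the answer recovers it. The harness below is a minimal sketch. `ask_model` is a hypothetical callable standing in for a real LLM client, and `toy_model` is a deliberately limited stand-in that only "reads" the first 50,000 characters.

```python
from typing import Callable


def build_haystack(filler: str, needle: str, total_sentences: int, depth: float) -> str:
    """Place `needle` at a relative depth (0.0 = start, 1.0 = end) among filler sentences."""
    sentences = [filler] * total_sentences
    sentences.insert(round(depth * total_sentences), needle)
    return " ".join(sentences)


def needle_recall(ask_model: Callable[[str, str], str], depths: list[float]) -> dict[float, bool]:
    """For each depth, record whether the model's answer contains the hidden fact."""
    needle = "The access code for the archive is 7421."
    question = "What is the access code for the archive?"
    results = {}
    for depth in depths:
        context = build_haystack("The sky was clear that day.", needle, 2000, depth)
        results[depth] = "7421" in ask_model(context, question)
    return results


def toy_model(context: str, question: str) -> str:
    """Hypothetical stand-in for an LLM call; it ignores everything past 50,000 characters."""
    visible = context[:50_000]
    return "7421" if "7421" in visible else "I don't know."


print(needle_recall(toy_model, [0.0, 0.5, 1.0]))
# {0.0: True, 0.5: True, 1.0: False}
```

Swapping `toy_model` for a real client and sweeping both depth and total length gives the familiar recall heatmap used in long-context evaluations.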

The Reasoning Revolution: Beyond Memorization to True Cognition

The second rumored feature, the "extreme reasoning mode," suggests an architectural shift toward deeper cognitive capability. Current LLMs excel at pattern matching and retrieving vast amounts of data, often utilizing techniques like Chain-of-Thought (CoT) prompting to simulate step-by-step logic.

An "extreme reasoning mode" implies a dedicated operational state where the model prioritizes structured, verifiable, and complex problem-solving over fast, conversational output. This moves the technology closer to achieving what researchers call true, structured planning.

Structuring Complex Thought

This rumored mode likely leverages, or significantly improves upon, advanced search and planning techniques such as Tree-of-Thought (ToT) or Graph-of-Thought (GoT) methodologies. Instead of a linear path of thought, these methods allow the model to explore multiple potential solution branches, evaluate the likelihood of success for each branch, and backtrack when a path proves fruitless.
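The branch, evaluate, and backtrack loop can be illustrated on a toy puzzle: reach a target number from 1 using only "*2" and "+3". This is a sketch of the general search pattern, not a description of how any GPT model works internally. In a real Tree-of-Thought setup, `propose` and `promising` (both hypothetical names) would be LLM calls rather than hand-written rules.

```python
from typing import Optional


def propose(state: int) -> list[tuple[str, int]]:
    """Candidate next 'thoughts'; a real system would ask an LLM to generate these."""
    return [("*2", state * 2), ("+3", state + 3)]


def promising(state: int, target: int) -> bool:
    """Branch evaluator; a real system would use an LLM or a verifier here."""
    return state <= target  # overshooting cannot be undone with *2 and +3


def solve(state: int, target: int, depth: int, path: list[str]) -> Optional[list[str]]:
    """Depth-first search over thoughts, backtracking out of dead ends."""
    if state == target:
        return path
    if depth == 0:
        return None
    # Try the candidates that land closest to the target first.
    for label, nxt in sorted(propose(state), key=lambda c: abs(target - c[1])):
        if not promising(nxt, target):
            continue  # prune this branch
        found = solve(nxt, target, depth - 1, path + [label])
        if found is not None:
            return found
        # This branch failed: backtrack and try the next candidate.
    return None


print(solve(1, 10, depth=4, path=[]))  # ['+3', '+3', '+3']
```

Here the search first follows the greedy branch 1 → 4 → 8, finds that both continuations overshoot 10, backs up to 4, and succeeds via 7 → 10. A purely linear chain of thought would have been stuck at 8.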

For AI researchers, this is the holy grail: moving from an educated guesser to a methodical planner. The focus shifts from general knowledge to deductive synthesis. This means tackling multi-step mathematical proofs, generating complex project timelines with dependency management, or optimizing highly constrained logistical problems where early decisions dramatically affect later outcomes [See research framing these reasoning advancements: arXiv paper on advanced reasoning techniques].

The Benchmark of Intelligence

If this mode performs as advertised, we should expect significant jumps on benchmarks designed to test reasoning, not just knowledge recall—tests like advanced coding challenges, complex SAT-style analytical sections, and abstract problem-solving.

The existence of such a mode also hints at the market readiness for "Agentic AI." True agents need to plan, execute, monitor, and correct course over extended periods. An extreme reasoning mode could serve as the core cognitive engine for AI agents that can operate autonomously on complex business tasks for hours or days.

Industry Implications: Enterprise Adoption and the Competitive Race

The combination of massive memory (context) and deep intelligence (reasoning) has profound implications, especially concerning enterprise adoption and the broader competitive landscape. The AI industry is rapidly coalescing around the idea that next-generation models must be both powerful generalists and robust specialists.

The Future of Enterprise AI

For business leaders, the adoption curve for AI is directly tied to its ability to handle proprietary, massive datasets reliably. The ability to ingest and reason over all relevant internal data in one go eliminates many current integration complexities.

We are moving away from "search and summarize" towards "analyze and decide." Consider pharmaceutical research: a model with million-token context could ingest every patent, every trial report, and every known side effect profile related to a class of compounds, then use extreme reasoning to suggest novel, non-obvious molecular combinations that satisfy stringent regulatory requirements.

The Competitive Context

The release cadence itself is telling. The rumored designation "GPT-5.4" suggests a strategy of rapid, powerful, iterative point releases within the GPT-5 generation rather than a single flagship launch. This mirrors the competitive tension observed in analyst predictions tracking OpenAI’s expected roadmap against rivals [Contextualizing the timeline: The Verge on OpenAI release predictions].

This aggressive layering of capabilities, improving reasoning while sharply increasing memory, is designed to maintain market leadership. It forces competitors to catch up not just on one metric (like context length) but simultaneously on another (like structured reasoning).

Actionable Insights for Navigating the Next AI Wave

For organizations and developers building on the bleeding edge, the rumored GPT-5.4 capabilities demand a proactive strategy shift:

- Re-evaluate RAG Architectures: If massive native context becomes commonplace, organizations relying heavily on complex, manually tuned RAG pipelines to overcome short context windows may find their infrastructure needs simplification. Focus shifts from efficient *retrieval* to efficient *prompt design* within the expanded window.

- Embrace Multi-Step Tasks: Begin prototyping use cases that were previously deemed impossible due to cognitive overload—long-term planning, managing complex financial audits spanning years of data, or comprehensive software migration planning.

- Invest in Reasoning Evaluation: Do not rely solely on speed or general accuracy metrics. Develop internal evaluation suites tailored to test complex, verifiable reasoning chains. If an "extreme reasoning mode" is available, ensure your testing rigorously challenges it.
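As a starting point for the RAG re-evaluation above, a team could check whether a given corpus would fit in a large window at all before deciding to drop retrieval. The sketch below assumes about 4 characters per token and reserves part of the window for instructions and output. Both numbers are rough assumptions, and a real tokenizer should replace the heuristic in practice.

```python
CHARS_PER_TOKEN = 4  # rough heuristic for English; use a real tokenizer in practice


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length."""
    return len(text) // CHARS_PER_TOKEN + 1


def fits_in_context(documents: list[str], window: int = 1_000_000, reserve: int = 50_000) -> bool:
    """True if all documents fit while leaving `reserve` tokens for instructions and output."""
    return sum(estimate_tokens(d) for d in documents) <= window - reserve


docs = ["x" * 400_000] * 5  # five documents of roughly 100k tokens each
print(fits_in_context(docs))                  # True
print(fits_in_context(docs, window=128_000))  # False
```

Corpora that fail this check, or that change faster than they can be re-sent, still need a retrieval step even with a million-token window.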

Conclusion: Towards True Cognitive Scale

The rumors surrounding GPT-5.4 paint a picture of an AI system achieving cognitive scale. The million-token context window breaks the data barrier, allowing AI to comprehend systems of unprecedented size. Simultaneously, the extreme reasoning mode promises to break the logic barrier, allowing AI to solve problems that require deep, verifiable thought rather than just pattern recognition.

This rumored iteration suggests that the next frontier isn't simply making models bigger, but making them fundamentally better at complex, sustained cognitive labor. We are rapidly moving from models that assist with tasks to models that can own and execute entire complex workflows autonomously. For those ready to adapt their data pipelines and rethink their problem-solving strategies, the era of truly comprehensive and powerfully intelligent AI assistance is nearly here.