The Million-Token Leap: How Extreme Context and Reasoning Redefine Frontier AI Capabilities

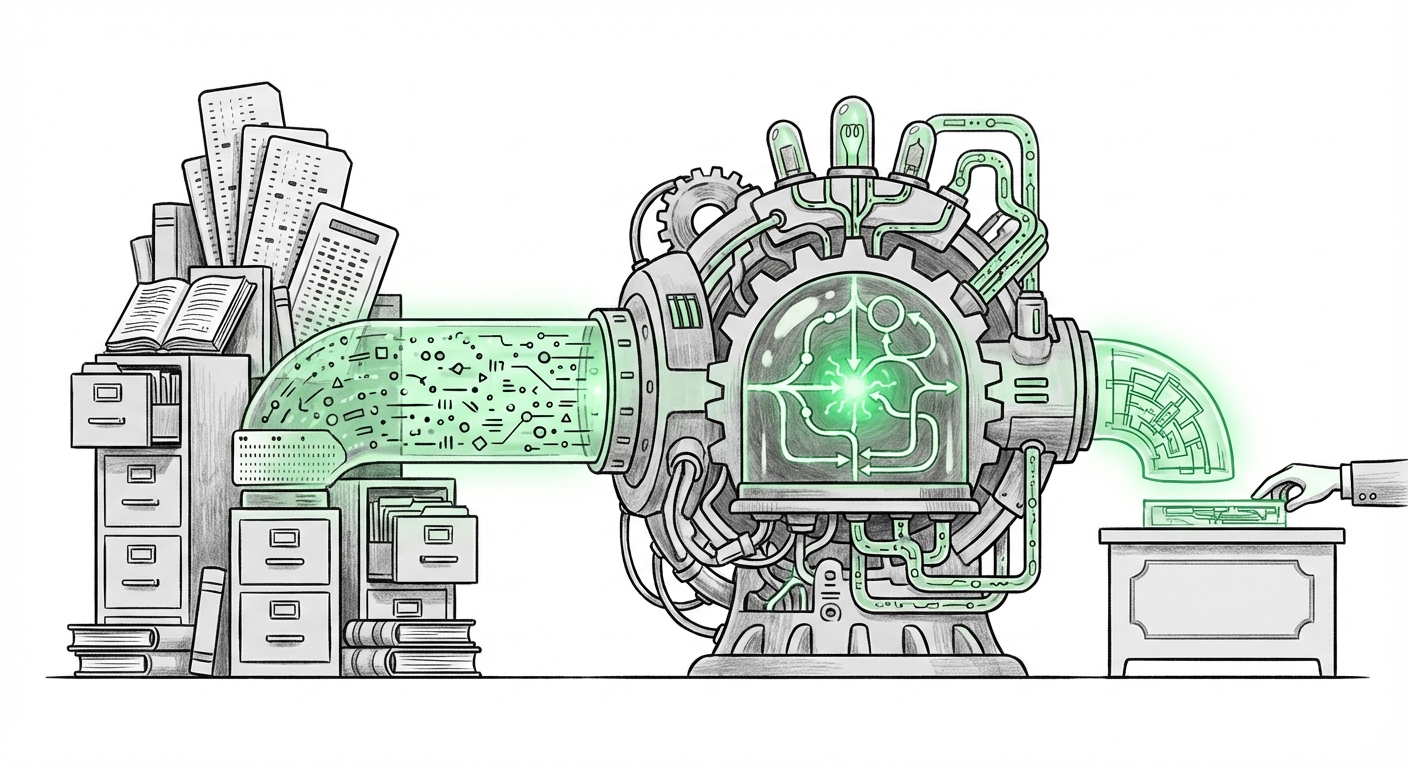

AI is advancing quickly, but most releases deliver only slightly better benchmark scores. A rumored update like GPT-5.4 that promises a **million-token context window** alongside an "extreme reasoning mode" would be more than an incremental step; it would be a fundamental shift in what Large Language Models (LLMs) can manage.

As an AI technology analyst, my focus shifts from *if* these features will be adopted to *how* quickly they could render older models obsolete for high-stakes, complex workflows. This development would move AI from being an excellent assistant toward a genuine long-term digital colleague.

Understanding the Context Revolution: From Paragraphs to Libraries

To understand the magnitude of a million-token context window, we must first define what a "token" is. Think of a token as a piece of a word, roughly four characters of English text on average. Current leading models often handle context windows ranging from 100,000 to 200,000 tokens. A million tokens is, therefore, roughly five to ten times that capacity.
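As a back-of-the-envelope check on those numbers, here is a minimal sketch that assumes the four-characters-per-token heuristic above. Real tokenizers vary with language and content, so treat the results as rough estimates; the function names and the constant are illustrative, not part of any vendor's API.

```python
CHARS_PER_TOKEN = 4  # rough heuristic from the text; real tokenizers differ


def estimate_tokens(text: str, chars_per_token: int = CHARS_PER_TOKEN) -> int:
    """Estimate a token count by ceiling-dividing the character count."""
    return -(-len(text) // chars_per_token)


def capacity_ratio(new_window: int, old_window: int) -> float:
    """How many times larger the new context window is than the old one."""
    return new_window / old_window


if __name__ == "__main__":
    for old in (100_000, 200_000):
        ratio = capacity_ratio(1_000_000, old)
        print(f"1,000,000 vs {old:,} tokens: {ratio:.0f}x")
    # Under the heuristic, a million tokens is about four million characters.
    print(f"~{1_000_000 * CHARS_PER_TOKEN:,} characters")
```

Under this heuristic, a million-token window holds on the order of four million characters of English text.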

Imagine the difference between reading a single page versus reading an entire novel in one sitting. The million-token window allows the AI to absorb and cross-reference an enormous amount of information simultaneously. This addresses the notorious "forgetfulness" problem in long AI interactions.

The Context Race: Where This Development Fits

The AI industry is currently engaged in an intense "context race." Competitors are rapidly expanding their capacities. For example, models like Anthropic's Claude 3, with a 200,000-token window, have demonstrated impressive large context handling. However, a jump to one million tokens, if reliable, sets a new benchmark. This isn’t just about input size; it’s about effective utilization: how well the model can actually use every piece of data in that vast window.

For developers and strategists, the key question, one that recurs in discussions of the 2024 LLM context window competition, is whether this large context is achieved efficiently. If the underlying architecture is sound, it opens doors that were previously locked by computational limits.

The Power of Extreme Reasoning: Solving Complex, Multi-Step Problems

A huge context window is useless if the model can’t intelligently sort through it. This is where the reported "extreme reasoning mode" becomes the critical partner to the massive context.

Traditional LLMs often use techniques like Chain-of-Thought (CoT) prompting, where the model is asked to "think step-by-step." This is effective for moderate complexity. However, for tasks such as synthesizing hundreds of documents, debugging a massive software repository, or drafting a complex regulatory filing, standard CoT tends to break down as the model loses track of earlier details over very long inputs.

From CoT to Metacognition

The term "extreme reasoning" suggests a leap beyond simple sequential logic. Analysts studying emerging techniques often look toward concepts that involve self-correction and planning, such as Tree-of-Thought (ToT) or other metacognitive layers. These methods allow the AI to explore multiple reasoning paths simultaneously and evaluate which path is most promising before committing to an answer. This mirrors how human experts approach truly difficult problems.
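To make the idea of exploring and ranking several reasoning paths concrete, here is a toy sketch of a Tree-of-Thought-style search, implemented as a simple beam search. It is not how GPT-5.4 or any specific model works internally. The `expand`, `score`, and `is_goal` callbacks stand in for model calls that would propose and evaluate candidate "thoughts", and the number-summing puzzle is purely illustrative.

```python
from typing import Callable, List, Optional, Tuple

State = Tuple[int, ...]  # a partial reasoning path: the steps chosen so far


def tree_search(
    root: State,
    expand: Callable[[State], List[State]],
    score: Callable[[State], float],
    is_goal: Callable[[State], bool],
    beam_width: int = 3,
    max_depth: int = 5,
) -> Optional[State]:
    """Explore several paths per level and keep only the most promising ones."""
    frontier = [root]
    for _ in range(max_depth):
        # Branch: every surviving path proposes its possible next steps.
        candidates = [child for state in frontier for child in expand(state)]
        if not candidates:
            return None
        for candidate in candidates:
            if is_goal(candidate):
                return candidate
        # Evaluate and prune: keep the best `beam_width` paths before going deeper.
        candidates.sort(key=score, reverse=True)
        frontier = candidates[:beam_width]
    return None


# Toy problem (hypothetical): pick steps from STEPS so they sum exactly to TARGET.
TARGET = 11
STEPS = (1, 3, 5)


def expand(state: State) -> List[State]:
    return [state + (step,) for step in STEPS]


def score(state: State) -> float:
    return -abs(TARGET - sum(state))  # closer to the target scores higher


def is_goal(state: State) -> bool:
    return sum(state) == TARGET


if __name__ == "__main__":
    print(tree_search((), expand, score, is_goal))
```

The contrast with plain CoT is the pruning step: instead of committing to one chain, the search keeps a few alternatives alive and drops the weakest at every level.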

If GPT-5.4 incorporates such a robust reasoning engine, it means:

- Deeper Problem Decomposition: The model can break down a complex goal into hundreds of necessary micro-steps without losing track of the initial objective.

- Bias Correction: It can hold conflicting evidence within its context and actively weigh which pieces of evidence are most reliable, which should make its conclusions better grounded.

- Improved Iteration: The system can rework a massive document based on highly nuanced feedback across several rounds of correction—a key measure of AI reliability on long-running tasks.

Practical Implications: Rewriting the Enterprise Playbook

These combined features—vast memory and superior thinking—will rapidly change which tasks are suitable for automation. For business leaders and CIOs, the focus shifts from "Can AI draft this email?" to "Can AI manage this merger integration?"

Use Cases Unlocked by Context and Reasoning

The implications of massive context become clearest in real-world applications. Here are the areas most likely to change first:

- Software Engineering: An engineer could feed an LLM an entire, multi-file codebase (hundreds of thousands of lines) along with the bug report and error logs. The model, seeing the whole system in context, could identify obscure dependencies and suggest fixes with greater confidence, bypassing tedious manual cross-referencing.

- Legal and Compliance: Reviewing thousands of pages of discovery documents, cross-referencing testimony against internal memos, and drafting litigation strategy based on the *entire* case file simultaneously becomes feasible. This reduces the risk of critical evidence being missed due to context overflow.

- Scientific Research: Analyzing decades of scientific literature on a specific topic, identifying subtle contradictions between papers, and hypothesizing novel experimental paths—all within a single prompt session.

- Financial Modeling: Ingesting yearly financial reports, market sentiment analyses, and internal risk assessments to generate predictive models that account for deep, historical relationships.

These capabilities elevate the utility of AI from a productivity tool to a strategic partner. Enterprise adoption will favor scenarios where the cost of error is high, as the enhanced reasoning targets AI reliability directly.

The Challenge of Evaluation: Benchmarks Must Evolve

A major challenge arising from these breakthroughs is how we measure them. If a model can perfectly recall a sentence buried on page 800 of a 1000-page document (the classic "needle in a haystack" long-context test), it has passed a retrieval check, but that metric says little about the subtlety of its reasoning over the whole document.
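The recall test described above can be sketched in a few lines. This is a minimal illustration, not an established benchmark harness: `ask_model` is a hypothetical callable standing in for whatever model API you use, and `tail_only_model` is a deliberately forgetful stand-in that only sees the end of its context, so the example runs without any external service.

```python
from typing import Callable, Dict, Iterable


def build_haystack(filler: str, needle: str, n_sentences: int, depth: float) -> str:
    """Place `needle` at a relative depth (0.0 = start, 1.0 = end) among filler sentences."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    sentences = [filler] * n_sentences
    sentences.insert(round(depth * n_sentences), needle)
    return " ".join(sentences)


def recall_at_depths(
    ask_model: Callable[[str, str], str],
    needle: str,
    expected: str,
    depths: Iterable[float],
    n_sentences: int = 1000,
) -> Dict[float, bool]:
    """Check, per depth, whether the model's answer contains the expected fact."""
    results = {}
    for depth in depths:
        context = build_haystack("The sky was grey that day.", needle, n_sentences, depth)
        answer = ask_model(context, "What is the secret code?")
        results[depth] = expected in answer
    return results


def tail_only_model(context: str, question: str) -> str:
    """Stand-in 'model' (assumption): it only remembers the last 200 characters."""
    return context[-200:]


if __name__ == "__main__":
    print(recall_at_depths(tail_only_model, "The secret code is 7421.", "7421", [0.0, 0.5, 1.0]))
```

A pass on this kind of test shows only that a fact can be retrieved from a given depth; it does not show that the model can reason across many such facts, which is why richer benchmarks are needed.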

This necessitates a move towards more complex evaluation standards. We must look toward research on next-generation LLM benchmarks that specifically stress-test long-term coherence, multi-hop reasoning across disparate documents, and the ability to resist adversarial input designed to confuse the model within its large context window.

The Competitor Response: Architecture vs. Scale

The industry’s response will be fascinating. Some firms might race to match the raw million-token input (Scale). Others, echoing positions debated in industry analysis (for example, "Anthropic CEO Discusses the Practical Limits of Context Windows vs. Retrieval Architectures"), might argue that retrieval-augmented generation (RAG) architectures, which intelligently fetch only the necessary information, offer a more cost-effective path to high performance.

If GPT-5.4 proves that massive *native* context is scalable and affordable, the RAG-focused path might be temporarily sidelined for tasks requiring holistic comprehension. However, for pure data retrieval in massive, ever-changing corporate databases, RAG will remain essential.
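To illustrate the retrieval side of that trade-off, here is a toy sketch of the "fetch only what is needed" step in a RAG pipeline. Production systems typically rank chunks with embedding similarity; the keyword-overlap scoring below is a simplified stand-in chosen so the example runs with the standard library alone, and the sample chunks are invented for illustration.

```python
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def retrieve(query: str, chunks: List[str], k: int = 2) -> List[str]:
    """Return up to k chunks that share the most words with the query."""
    query_counts = Counter(tokenize(query))
    scored = []
    for i, chunk in enumerate(chunks):
        chunk_counts = Counter(tokenize(chunk))
        overlap = sum(min(query_counts[t], chunk_counts[t]) for t in query_counts)
        scored.append((overlap, -i, chunk))  # -i: earlier chunks win ties
    scored.sort(reverse=True)
    # Drop chunks with no overlap so irrelevant text never reaches the prompt.
    return [chunk for overlap, _, chunk in scored[:k] if overlap > 0]


if __name__ == "__main__":
    chunks = [
        "Q3 revenue rose 12 percent on cloud sales.",
        "The office cafeteria menu changes weekly.",
        "Cloud revenue guidance for Q4 was raised.",
    ]
    for chunk in retrieve("What happened to cloud revenue in Q3?", chunks):
        print(chunk)
```

Only the selected chunks would be placed in the prompt, which is what keeps RAG inexpensive compared with loading an entire corpus into a native context window.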

Actionable Insights for Today’s Decision-Makers

For businesses planning their AI roadmap over the next 12 to 18 months, these developments demand proactive adjustment:

- Audit Long-Form Workflows: Identify processes currently bottlenecked by manual review of large documents (contracts, internal codebases, regulatory filings). These are the immediate, high-ROI targets for implementation once these capabilities are generally available.

- Invest in Data Structuring (Even for Context): While the context window is huge, models still perform better when data is organized. Structure internal knowledge bases not just for retrieval, but for *contextual ingestion*. Think fewer raw text dumps and more segmented, well-indexed reports.

- Prioritize Validation Teams: As reasoning becomes "extreme," the complexity of verifying its output increases. Do not assume 100% accuracy. Establish small, specialized human teams to rigorously validate model outputs on the most critical, complex tasks until the "extreme reasoning mode" is empirically proven trustworthy across various industry verticals.

- Rethink Training Pipelines: If your current AI fine-tuning relies on small, curated datasets, you may need to shift focus to creating "mega-task" training exercises that test the model’s ability to hold context over long simulations.
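The data-structuring advice above (segmented, well-indexed material rather than raw text dumps) could look something like the following sketch. It assumes the rough four-characters-per-token heuristic, counts only section bodies against the budget, and uses a hypothetical `Section` type and prompt layout; adapt both to your own documents and tokenizer.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Section:
    title: str
    body: str

    @property
    def est_tokens(self) -> int:
        # Rough estimate: about four characters per token (assumption).
        return -(-len(self.body) // 4)


def build_context(sections: List[Section], budget_tokens: int) -> str:
    """Pack sections in order, skipping any that would exceed the budget,
    and prepend an index so the model can see what it has been given."""
    included: List[Section] = []
    used = 0
    for section in sections:
        if used + section.est_tokens > budget_tokens:
            continue  # this section does not fit; try the next, smaller one
        included.append(section)
        used += section.est_tokens
    index = "\n".join(
        f"{i}. {s.title} (~{s.est_tokens} tokens)" for i, s in enumerate(included, 1)
    )
    body = "\n\n".join(f"## {s.title}\n{s.body}" for s in included)
    return f"INDEX\n{index}\n\n{body}"


if __name__ == "__main__":
    docs = [
        Section("Contract summary", "x" * 40),
        Section("Full appendix", "y" * 400),
        Section("Open risks", "z" * 20),
    ]
    print(build_context(docs, budget_tokens=20))
```

The index costs a handful of tokens but gives the model, and the human reviewer validating its output, an explicit map of what was and was not included.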

The Societal Horizon: Trust and Complexity

On a broader scale, increased reasoning power raises crucial questions about trust. When an AI provides a highly complex, layered decision based on millions of data points it digested instantly, how does society audit that decision? We are moving into an era where the AI's "thought process" is potentially too intricate for a human to trace manually—a technical challenge mirroring the philosophical challenge of algorithmic transparency.

The promise of enhanced reliability is crucial here. If the reliability holds, it builds trust in automated decision-making systems. If it fails in spectacular ways—a "million-token hallucination"—the backlash could delay adoption in sensitive fields like medicine and finance. The engineering challenge is therefore inextricably linked to the public trust challenge.

The rumored features of GPT-5.4 are not just technological bragging rights; they are indicators of the next phase of human-computer interaction. We are moving past simple task execution toward complex cognitive partnership, enabled by models that can finally see the whole picture.