# The 3x Price Tag on Speed: Why Gemini 3.1 Flash-Lite's Cost Hike Signals the Future of AI Tiers

## The Contradiction: Faster, Smarter, and Pricier

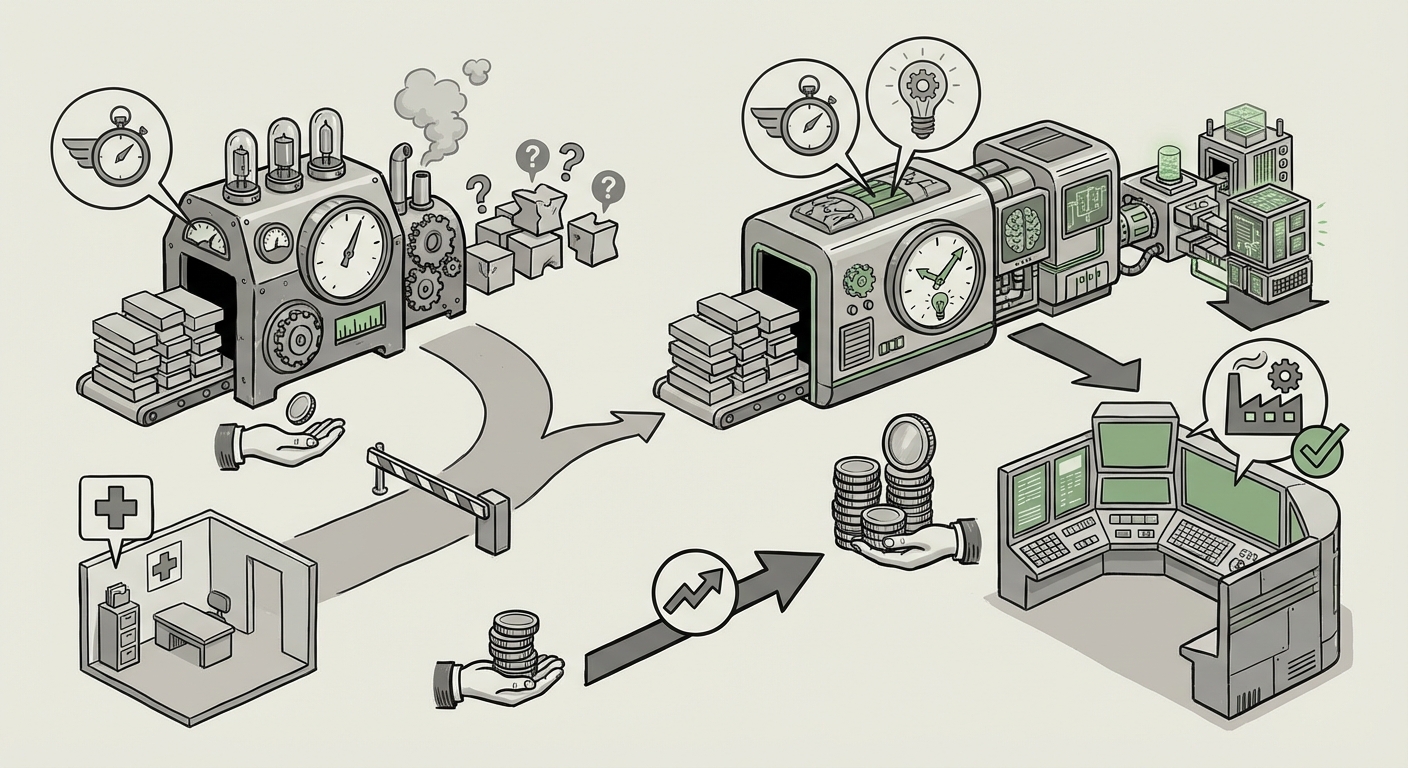

The recent preview of Google DeepMind’s **Gemini 3.1 Flash-Lite** has sent ripples through the developer community. The model’s description is an immediate contradiction: it is positioned as the *fastest and cheapest* in the new Gemini 3 series, yet its output costs have reportedly *more than tripled* compared to its predecessor.

For many years in software, "Lite" meant "compromised but affordable." It was the model you used when you needed a quick answer, and you accepted that the quality might be fuzzy. This development reveals that the market for large language models (LLMs) has matured past this simple equation. We are witnessing a necessary, if jarring, recalibration: **the cost of true utility.**

When an AI company like Google significantly increases the price of its supposed entry-level, high-speed model, it is making a profound statement about the engineering challenge involved in creating *good* AI. As we explored through targeted search strategies (including comparing against competitors like Anthropic's Haiku tier and reviewing general AI pricing shifts), this move is less about inflation and more about redefining the baseline expectation for accessible AI.

### Trend 1: The True Cost of Smarts (Performance Isn't Free)

The primary implication is that the foundational improvements driving the "significantly more capable" nature of 3.1 Flash-Lite are expensive. Imagine building two types of cars. Car A is fast but frequently breaks down. Car B is slightly slower but almost never breaks down. If you upgrade Car B to be as fast as Car A *and* make it even more reliable, the cost of the engineering, better parts, and rigorous testing will increase substantially.

In the AI context, this translates to:

* **Better Reasoning:** The model isn't just faster at spitting out words; it appears to be better at logic and coherence. Achieving this requires training on more complex, curated datasets and likely more sophisticated decoding methods that consume more GPU time per query.
* **Reduced Hallucinations:** The most significant hidden cost for businesses using cheap models is debugging errors. If a cheap model hallucinates a legal statute or a medical dosage, the cost to correct that output (and the resulting risk) far outweighs a slightly higher per-token fee. Google is charging a premium to reduce this **downstream operational risk**.

This forces organizations tracking expenditure (CTOs and procurement officers) to look beyond simple tokens-per-dollar comparisons and factor in the **Cost of Unreliability (CoU)**.

### Trend 2: The Strategic Necessity of Tiered Refinement

The previous generation of "Flash" models may have suffered from a problem known as "upward drift." Developers, eager to save money, pushed these cheap models into use cases they weren't designed for: complex coding tasks, nuanced customer service flows, or intricate data analysis. When the model failed on these high-stakes tasks, users blamed the model's cheapness, not their misapplication.

Google’s price adjustment acts as a necessary **gating mechanism**. By tripling the price, Google signals: "This model is now excellent for *near-production-grade* tasks, but if you need deep expertise or complex multi-step reasoning, you still need our 'Pro' or 'Ultra' models."

This strategy is about **maximising perceived value** across the entire product stack. It prevents the high-end models from being cannibalized by high-volume users who are content with "good enough" quality, ensuring that the most powerful models retain their premium positioning and profitability.

### Trend 3: The Shifting Value Proposition: From Utility to Partnership

The narrative of AI is rapidly moving from "cheap novelty" to "essential infrastructure." When AI becomes critical infrastructure, reliability trumps frugality.
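To make the Cost of Unreliability idea from Trend 1 concrete, here is a minimal sketch of how one might compare two models once correction costs are counted. The class name, function names, prices, and error rates are all hypothetical placeholders for illustration, not published figures for any Gemini model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    name: str
    price_per_call: float  # USD model fee per request (hypothetical)
    error_rate: float      # fraction of outputs needing correction (hypothetical)


def effective_cost_per_call(model: ModelProfile, correction_cost: float) -> float:
    """Model fee plus the expected cost of fixing a bad output."""
    return model.price_per_call + model.error_rate * correction_cost


def break_even_correction_cost(cheap: ModelProfile, pricey: ModelProfile) -> float:
    """Correction cost above which the pricier, more reliable model is cheaper overall."""
    if pricey.error_rate >= cheap.error_rate:
        raise ValueError("the pricier model must have a lower error rate")
    fee_gap = pricey.price_per_call - cheap.price_per_call
    reliability_gap = cheap.error_rate - pricey.error_rate
    return fee_gap / reliability_gap


if __name__ == "__main__":
    # Illustrative numbers only: a 3x fee paired with a 5x lower error rate.
    old_lite = ModelProfile("old-lite", price_per_call=0.001, error_rate=0.05)
    new_lite = ModelProfile("new-lite", price_per_call=0.003, error_rate=0.01)
    for m in (old_lite, new_lite):
        print(m.name, round(effective_cost_per_call(m, correction_cost=0.50), 4))
    print("break-even:", round(break_even_correction_cost(old_lite, new_lite), 4))
```

Under these assumed numbers, the pricier model comes out ahead whenever fixing one bad output costs more than about five cents. Whether that holds in practice depends entirely on error rates and correction costs measured on your own workload.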
As confirmed by broader market analysis searches regarding 2024 pricing shifts, we see a pattern: competitors are also investing heavily in making their lighter models smarter. The race is no longer to build the *smallest* model, but the *smallest model that can reliably perform a defined set of tasks* without human oversight.

The value proposition is now **Speed + Capability + Reliability (SCR)**. Developers may well be willing to pay 3x the cost if it means they spend 50% less time validating the output. This shift elevates LLMs from being mere API calls to being trusted, semi-autonomous digital workers.

## Contextualizing the Move: Competitive Landscape and Market Signals

To fully appreciate the scope of Gemini 3.1 Flash-Lite's repositioning, we must look outside Google’s immediate announcement. The search strategies employed aimed to find external validation points:

#### Benchmarking Against the Speed Demons (Query 1)

When comparing the new pricing against models like Anthropic’s Claude 3.5 Haiku, it becomes clear that Google is positioning 3.1 Flash-Lite not as the *absolute* cheapest, but as the *best value for performance* in its tier. If the performance leap is sufficiently large (say, moving from 70% accuracy on certain logic tests to 95%), the 3x price increase looks like a bargain. This competitive positioning dictates how CTOs budget: they are likely evaluating the cost of *migrating* between platforms based on these new performance thresholds.

#### The Macroeconomic Reality of AI OpEx (Query 2)

Analysis of overall AI infrastructure expenditure shows that the underlying costs for cutting-edge inference are soaring due to demand for specialized hardware (like high-end NVIDIA GPUs). Therefore, even if architectural efficiencies are achieved, the *cost floor* for high-quality models is rising across the industry.
Google’s move reflects this broader reality: premium performance now demands a premium price point simply to cover the escalating costs of compute and energy.

#### The Developer Vibe Check (Query 3)

Initial developer reactions often act as an immediate market litmus test. If the community consensus, found across forums and specialized tech blogs, validates the performance gains (e.g., "Yes, this new version finally handles JSON parsing reliably"), the price increase is absorbed as a necessary operational cost. If, however, the performance improvements are marginal but the price is triple, it signals developer backlash and a potential adoption slowdown for the new version in favor of older, cheaper iterations.

## Future Implications: What This Means for Business and Society

The trajectory set by Gemini 3.1 Flash-Lite, with smarter models commanding higher base prices, has profound implications for the next phase of AI adoption.

### 1. The Bifurcation of the AI Market

We are moving toward a distinct, two-tiered system:

* **The "Triage Tier" (Truly Cheap Models):** These will be hyper-specialized, smaller models (perhaps fine-tuned open-source models or highly distilled versions) used only for simple, high-volume, non-critical tasks like basic sentiment tagging or content moderation where an error is not catastrophic. These models will be cheap, but their capabilities will be strictly limited.
* **The "Production Tier" (The New Flash):** These models, like the new Flash-Lite, will be the workhorses for most business applications. They are *fast enough* and *smart enough* for 80-90% of workflows, justifying a higher, yet predictable, cost structure.

This stratification means that businesses must become significantly better at **model routing**: building the logic to ensure the right query hits the right-priced model. Routing errors will become a new source of technical debt.

### 2. Shifting Focus to Agentic Workflows

If the model is more capable, it can handle more complex instructions autonomously. This accelerates the move toward **AI Agents**. Instead of using the model just to write an email, developers will deploy agents powered by these enhanced "Lite" models to manage entire project pipelines, such as:

* Analyzing weekly sales reports, summarizing key findings, and drafting follow-up emails for the sales team, all in one go.
* Handling Level 1 IT support tickets, executing troubleshooting steps, and only escalating the most complex 5% to a human engineer.

The increased cost is offset because one high-quality agent call replaces potentially dozens of low-quality API calls and hours of human review.

### 3. Accessibility Versus State-of-the-Art

This pricing shift has societal implications regarding accessibility. If the *baseline* for useful AI performance (the Flash tier) becomes substantially more expensive, it creates a higher barrier to entry for startups, researchers, and developers in emerging markets who rely on low-cost access to innovate.

While this is an economic reality driven by silicon costs, it underscores the growing divide between those who can afford cutting-edge, reliable AI infrastructure and those relegated to using slower, older, or less capable open-source alternatives that may require significant self-hosting infrastructure. Innovation will increasingly concentrate where the capital investment is highest.

## Actionable Insights for Tomorrow's AI Strategy

For businesses integrating or scaling their AI infrastructure, the Gemini 3.1 Flash-Lite pricing news serves as a clear mandate for strategic planning:

1. **Audit Your Current Model Usage:** Immediately review which processes are currently using previous "Flash" models. If those processes involve any degree of critical decision-making, schedule a pilot migration to the 3.1 Flash-Lite to measure the performance-to-cost ratio empirically.
2. **Prioritize Model Governance:** Develop a clear governance framework for model selection. Do not default to the cheapest model. Implement internal scoring metrics that weigh latency, accuracy, and cost together. This proactive system prevents developers from defaulting to the old, cheap habit when a smarter, slightly pricier tool is required.
3. **Budget for Performance, Not Just Volume:** Future AI budgeting must allocate funds based on *complexity of task* rather than raw transaction volume. A project requiring high reliability (e.g., financial document processing) needs a significantly larger budget allocation per thousand tokens than a project requiring simple content generation.

The story of Gemini 3.1 Flash-Lite is not a tale of corporate greed; it is a snapshot of technological reality. As AI models transition from impressive demos to indispensable business infrastructure, the cost of performance (the cost of guaranteed intelligence) is rising to meet its true value. The era of "free lunch" in high-quality generative AI is officially over.
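The scoring metric suggested under model governance, combined with the model-routing idea from the market-bifurcation discussion, could look something like the sketch below. It is one possible design rather than a prescribed method: the `Candidate` fields, the weights, the accuracy floor, and every number are hypothetical and would need to come from your own evaluations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    latency_ms: float          # measured median latency (hypothetical)
    accuracy: float            # 0..1 score on your own eval set (hypothetical)
    cost_per_1k_tokens: float  # USD (hypothetical)


def score(c: Candidate, weights: dict, max_latency_ms: float, max_cost: float) -> float:
    """Weighted sum in which higher is better; latency and cost are inverted."""
    latency_term = 1.0 - min(c.latency_ms / max_latency_ms, 1.0)
    cost_term = 1.0 - min(c.cost_per_1k_tokens / max_cost, 1.0)
    return (weights["accuracy"] * c.accuracy
            + weights["latency"] * latency_term
            + weights["cost"] * cost_term)


def pick_model(candidates: list, weights: dict, min_accuracy: float) -> Candidate:
    """Route to the best-scoring model that clears the task's accuracy floor."""
    eligible = [c for c in candidates if c.accuracy >= min_accuracy]
    if not eligible:
        raise ValueError("no candidate meets the accuracy floor")
    # Normalize against the slowest and priciest candidate in the pool.
    max_latency_ms = max(c.latency_ms for c in candidates)
    max_cost = max(c.cost_per_1k_tokens for c in candidates)
    return max(eligible, key=lambda c: score(c, weights, max_latency_ms, max_cost))


if __name__ == "__main__":
    pool = [
        Candidate("triage", latency_ms=200, accuracy=0.80, cost_per_1k_tokens=0.1),
        Candidate("production", latency_ms=300, accuracy=0.93, cost_per_1k_tokens=0.5),
        Candidate("premium", latency_ms=900, accuracy=0.97, cost_per_1k_tokens=2.0),
    ]
    weights = {"accuracy": 0.5, "latency": 0.2, "cost": 0.3}
    # A non-critical task has no accuracy floor; a critical one requires 0.90.
    print(pick_model(pool, weights, min_accuracy=0.0).name)
    print(pick_model(pool, weights, min_accuracy=0.90).name)
```

The accuracy floor is the governance lever here. Without it, a cost-heavy weighting can still pull a critical task toward the cheapest model, which is the habit the governance step warns against.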

TLDR: Google’s Gemini 3.1 Flash-Lite is described as significantly more capable than its predecessor while its output costs have reportedly more than tripled, which shows that the "cheap" AI tier is evolving. The industry is moving past simple speed tests; developers now demand higher reliability, forcing providers to charge more for truly useful performance and fundamentally reshaping how businesses budget for AI integration.