The End of Cheap AI? Analyzing Google's Smarter, Costlier Gemini 3.1 Flash-Lite Strategy

The initial promise of generative AI was intoxicating: near-infinite intelligence available for pocket change. For a time, the market operated as a "race to the bottom," with model providers fighting for adoption by slashing token prices. A recent release from Google DeepMind signals a fundamental pivot: Gemini 3.1 Flash-Lite is described as significantly more capable than its predecessor, yet its output-token price has tripled. This isn’t just a pricing adjustment; it’s a declaration about the *true cost of intelligence* in the next generation of AI.

As an AI technology analyst, I see this move as a critical inflection point. We are moving away from the era defined purely by low-cost adoption and entering a new phase focused squarely on value delivery and highly differentiated, tiered performance.

The Economics of Smarter Models: Why Costs Are Tripling

To understand the price hike, we must look past the marketing and examine the engineering reality. A "significantly more capable" model rarely comes without greater computational investment. Think of it like upgrading a car engine: a more powerful engine burns more fuel, but it delivers superior performance.

The Intelligence-Efficiency Tradeoff

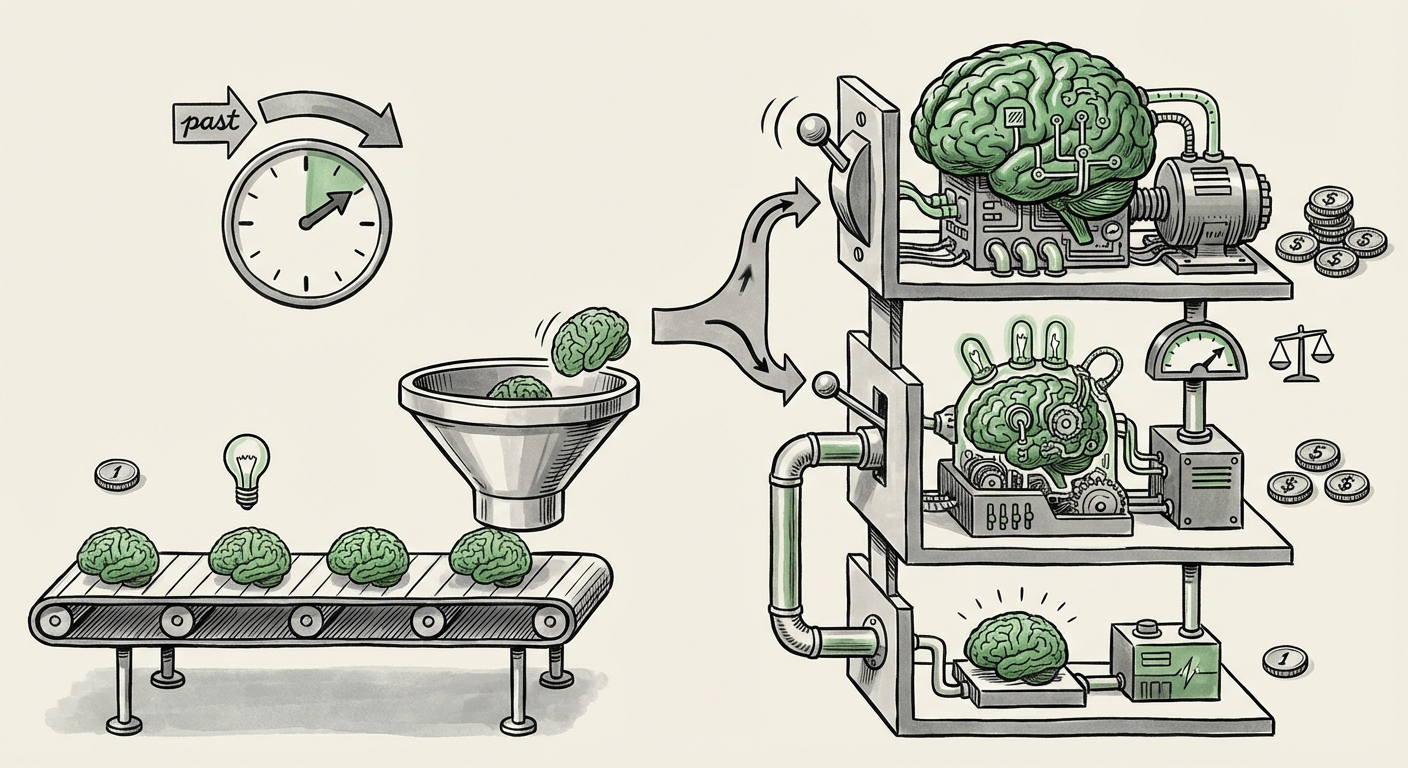

The core tension here is the inherent trade-off between model efficiency and raw intelligence. Early, cheap models focused on speed and surface-level tasks—summarization, basic classification. When models like Gemini 3.1 Flash-Lite receive substantial capability upgrades—likely involving better reasoning chains, larger internal memory states, or improved accuracy on complex tasks—they demand more processing power (FLOPs) per token generated.
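To put a rough number on the FLOPs claim, here is a back-of-envelope sketch. It assumes the common approximation that a dense transformer spends about 2 FLOPs per active parameter for each generated token (ignoring attention over the context), and the parameter counts are purely hypothetical; they are not figures for any Gemini model.

```python
def decode_flops_per_token(active_params: float) -> float:
    """Approximate forward-pass FLOPs to generate one token.

    Uses the rule of thumb of ~2 FLOPs per active parameter per token.
    """
    return 2.0 * active_params


# Hypothetical model sizes, chosen only to illustrate the scaling.
old_params = 8e9
new_params = 24e9

compute_ratio = decode_flops_per_token(new_params) / decode_flops_per_token(old_params)
print(f"Compute per generated token grows by {compute_ratio:.1f}x")
```

Under this approximation, compute per token scales linearly with active parameters, so a capability upgrade that relies on a larger model feeds directly into serving cost unless it is offset by efficiency gains elsewhere.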

This has to be read against industry-wide costs. The operating expense (OpEx) of serving LLMs is under sustained pressure from hardware scarcity and rising demand. A tripling of the price suggests either that the previous pricing for this performance level was unsustainable for Google, or that the new capabilities push the model into a higher hardware utilization bracket. Both readings fit the ongoing industry discussion about the true cost of inference, especially as models take on more complex, multi-step reasoning.

It is also consistent with the broader shift in AI pricing strategy that became visible in 2024: the initial land-grab phase is over, and the focus is now on profitable scalability.

The New Competitive Tiering Landscape

Google’s decision doesn’t exist in a vacuum. The generative AI market is a three-way race among Google, OpenAI, and Anthropic, each aggressively segmenting its offerings.

Mapping the Model Hierarchy

The term "Flash-Lite" is itself strategic. It implies a fast, lightweight offering, but the "3.1" update suggests it has been heavily optimized for quality within that lightweight framework. This puts it in direct competition with rivals’ speed-focused yet capable tiers, the kind of models routinely benchmarked against OpenAI’s GPT-4o or Anthropic’s Haiku and Sonnet. Developers are no longer choosing one model; they are architecting multi-model pipelines.

- Ultra-High Cost/Performance: Models reserved for mission-critical, complex reasoning (e.g., GPT-4 Turbo, Gemini Ultra).

- Mid-Tier Value: Models like the newly priced Flash-Lite, offering significantly better intelligence than older versions, but at a higher price point.

- Ultra-Low Cost/Speed: Models dedicated strictly to rapid, low-stakes tasks (e.g., simple chatbots, quick classification).

If Gemini 3.1 Flash-Lite’s new price places it closer to the established price points of competitors’ high-end models (but still faster), Google is signaling that it believes the *value* provided by this improved intelligence warrants that price tag. For developers choosing APIs, the calculus shifts from "Which model is cheapest?" to "Which model delivers the required accuracy per dollar spent?"

This competitive dynamic is crucial for investors and CTOs evaluating long-term vendor lock-in and cost management.

Practical Implications for Developers and Enterprises

For the vast majority of companies embedding AI into their workflows, the increased cost of a key model tier has immediate, tangible consequences. This development forces a rigorous re-evaluation of AI usage.

For Developers: Sharper Selection Criteria

Developers can no longer afford to default to the fastest model for every task. If the cost triples, using the new Flash-Lite for simple tasks like checking spelling or formatting dates becomes economically unjustifiable. This mandates the adoption of sophisticated model routing:

- Task Decomposition: Breaking down complex user requests into micro-tasks.

- Intelligent Dispatch: Sending the simplest tasks to the cheapest, lowest-tier models, and reserving the newly priced Flash-Lite only for tasks requiring its superior reasoning capabilities.

This is not about being cheap; it's about **cost-efficient performance.** Building applications that cannot dynamically route tasks will find their operational budgets ballooning unexpectedly.
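The two steps above can be sketched as a small routing table. This is a minimal illustration under stated assumptions, not a production design: the tier names, the prices, and the task-to-tier mapping are all hypothetical placeholders, and a real system would call each provider's API where this sketch only returns a model name.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    usd_per_million_output_tokens: float  # hypothetical price, for illustration


# Hypothetical tiers; names and prices are placeholders, not real quotes.
TIERS = {
    "low": ModelTier("budget-small-model", 0.40),
    "medium": ModelTier("flash-lite-class-model", 1.50),
    "high": ModelTier("frontier-model", 10.00),
}

# Hypothetical mapping from micro-task type to the cheapest adequate tier.
TASK_TIER = {
    "spell_check": "low",
    "date_formatting": "low",
    "classification": "low",
    "summarization": "medium",
    "multi_step_reasoning": "medium",
    "contract_analysis": "high",
}


def route(task_type: str) -> ModelTier:
    """Pick the tier for a task; unknown task types fall back to 'medium'."""
    return TIERS[TASK_TIER.get(task_type, "medium")]


def dispatch(subtasks: list[str]) -> dict[str, str]:
    """Map each decomposed micro-task to the model that should handle it."""
    return {task: route(task).name for task in subtasks}


plan = dispatch(["date_formatting", "multi_step_reasoning"])
print(plan)
```

Keeping the mapping in data rather than in code means a price change becomes a one-line edit to the table, not a refactor of every call site.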

For Businesses: Budgeting and Total Cost of Ownership (TCO)

Enterprises must update their TCO models. If a core application relied on the predecessor model’s low price point for high-volume traffic, a sudden tripling of cost for the same workload means that application is no longer profitable unless the revenue it generates can absorb the increased compute expenditure.
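A quick way to test whether an application survives a price change is to compute its monthly inference bill and its break-even token price. The sketch below uses invented figures throughout (request volume, tokens per request, revenue, and both prices are assumptions), and it counts only output tokens for simplicity.

```python
def monthly_inference_cost(
    requests: int,
    output_tokens_per_request: int,
    usd_per_million_output_tokens: float,
) -> float:
    """Monthly bill for output tokens only (input tokens ignored for simplicity)."""
    total_millions = requests * output_tokens_per_request / 1_000_000
    return total_millions * usd_per_million_output_tokens


def break_even_price(
    monthly_revenue_usd: float,
    requests: int,
    output_tokens_per_request: int,
) -> float:
    """Highest price per million output tokens at which revenue still covers compute."""
    total_millions = requests * output_tokens_per_request / 1_000_000
    return monthly_revenue_usd / total_millions


# All figures below are hypothetical.
REQUESTS = 10_000_000
TOKENS_PER_REQUEST = 500
REVENUE_USD = 5_000.0
OLD_PRICE = 0.40
NEW_PRICE = OLD_PRICE * 3  # the tripling discussed above

old_cost = monthly_inference_cost(REQUESTS, TOKENS_PER_REQUEST, OLD_PRICE)
new_cost = monthly_inference_cost(REQUESTS, TOKENS_PER_REQUEST, NEW_PRICE)
limit = break_even_price(REVENUE_USD, REQUESTS, TOKENS_PER_REQUEST)

print(f"old bill: ${old_cost:,.0f}, new bill: ${new_cost:,.0f}")
print(f"margin after increase: ${REVENUE_USD - new_cost:,.0f}")
print(f"break-even price: ${limit:.2f} per million output tokens")
```

With these made-up numbers, the bill rises from about $2,000 to about $6,000 against $5,000 of revenue, so the application moves from profitable to loss-making without any change in usage.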

Furthermore, this trend highlights the growing importance of specialized infrastructure. Companies heavily invested in custom silicon or optimized data center solutions (like those utilizing Google’s TPUs) might see slightly mitigated cost increases due to direct vendor optimization, but the overarching market pressure remains.

The Future: A Bifurcated AI Ecosystem

What happens next? I predict the AI landscape will continue to bifurcate sharply, driven by this new cost reality:

1. The Rise of Fine-Tuning and Specialization

If paying for premium foundational models becomes too expensive for niche, high-volume applications, companies will pivot aggressively toward **fine-tuning smaller, less expensive open-source models** (or older, cheaper proprietary models) specifically for their proprietary data and tasks. Why pay a premium for general reasoning if you only need expert knowledge in one domain?

The higher price floor on top-tier models makes the investment in custom-trained, efficient models far more appealing as a long-term strategy.

2. Increased Scrutiny on Inference Optimization Techniques

The search for better inference optimization will intensify. Techniques like **quantization** (reducing the precision of numbers used in the model without destroying accuracy), **distillation** (training a smaller model to mimic a larger one), and advancements in hardware acceleration will become more than just academic curiosities; they will be critical survival tools for maintaining competitive pricing.
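To show what "reducing the precision of numbers" means in practice, here is a toy sketch of symmetric int8 quantization on a handful of weights. It is a simplified illustration in pure Python, assuming one scale factor for the whole list; real inference stacks use per-channel or per-group scales and optimized kernels.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float values from the int8 representation."""
    return [q * scale for q in quantized]


weights = [0.5, -1.0, 0.25, 0.0]  # toy values, not real model weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(q)
print(f"largest rounding error: {max_error:.5f}")
```

Each weight now fits in one byte, where 32-bit floats need four, and the rounding error per weight is bounded by half the scale. That bound is why accuracy often survives the compression.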

3. Value as the New Benchmark

The headline metric will no longer be just "speed" or "accuracy" in isolation. The industry will standardize around Price-to-Performance Ratios (PPR), measuring the utility gained for every dollar spent. A model that is twice as expensive but five times smarter might offer a better PPR than a model that is half the price but only 10% smarter.
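The comparison above can be checked with simple arithmetic. The sketch below treats "smartness" as a single utility score relative to a baseline model, which is a simplifying assumption; in practice you would substitute a measured metric such as task success rate on your own evaluation set.

```python
def price_performance_ratio(utility: float, price: float) -> float:
    """Utility delivered per unit of spend; higher is better."""
    return utility / price


# Baseline model: utility 1.0 at price 1.0 (both normalized, hypothetical).
baseline = price_performance_ratio(utility=1.0, price=1.0)

# "Twice as expensive but five times smarter."
premium = price_performance_ratio(utility=5.0, price=2.0)

# "Half the price but only 10% smarter."
budget = price_performance_ratio(utility=1.1, price=0.5)

print(f"baseline={baseline:.2f} premium={premium:.2f} budget={budget:.2f}")
```

Under these assumptions the premium model scores 2.5 and the budget model 2.2, so the more expensive option wins on PPR. The margin is narrow, though, which is why the utility measurement matters more than the price list.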

Actionable Insights for Navigating the New AI Economy

The shift indicated by Gemini 3.1 Flash-Lite is a clear signal to move from opportunistic AI adoption to strategic infrastructure planning. Here is what leaders should be doing now:

- Audit Current Usage: Immediately review API call logs for high-volume applications that utilize fast-but-capable models. Calculate the projected cost impact if that model's price increases by 200% or 300%.

- Establish a Model Matrix: Stop treating the API endpoint as a monolith. Create a documented matrix that classifies every task by severity (Low, Medium, High) and assigns the most cost-effective model tier to each severity level.

- Invest in Routing Infrastructure: Prioritize development efforts on building robust routing and orchestration layers (using frameworks like LangChain or custom logic) that automatically send requests to the appropriate model endpoint.

- Evaluate Open-Source Viability: Re-assess the Total Cost of Ownership (TCO) for fine-tuning and hosting open-source alternatives versus paying increasing API premiums for closed models.
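The audit step in the list above lends itself to a short script. This is a sketch under stated assumptions: the application names and monthly spend figures are invented, and in practice they would come from your provider's billing export or your own API logs. Note that a 200% increase means the price triples and a 300% increase means it quadruples.

```python
def projected_cost(current_monthly_cost: float, increase_pct: float) -> float:
    """Monthly cost after a price increase of `increase_pct` percent."""
    return current_monthly_cost * (1 + increase_pct / 100)


# Hypothetical usage summary; replace with figures from real billing data.
usage_log = [
    {"app": "support-bot", "monthly_cost_usd": 1200.0},
    {"app": "doc-summarizer", "monthly_cost_usd": 450.0},
]


def audit(log: list[dict], increases: tuple[int, ...] = (200, 300)) -> dict:
    """Project each application's monthly bill under each price-increase scenario."""
    return {
        row["app"]: {pct: projected_cost(row["monthly_cost_usd"], pct) for pct in increases}
        for row in log
    }


report = audit(usage_log)
for app, scenarios in report.items():
    for pct, cost in scenarios.items():
        print(f"{app}: +{pct}% -> ${cost:,.2f} per month")
```

Sorting the report by projected cost gives a natural priority order for which applications to move onto a routing layer first.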

In conclusion, the era of cheap foundational intelligence is fading, replaced by a more mature, segmented market. Google is establishing that the frontier of complex AI reasoning comes with a commensurate price tag. This forces all stakeholders—from individual developers to massive enterprises—to become far more thoughtful and disciplined architects of their AI consumption. The race is no longer just to build the best model; it is to deliver the best *value* for every unit of computation spent.