The AI Pricing Shockwave: Why Google's 'Smarter, Pricier' Gemini 3.1 Flash-Lite Redefines Value

The generative AI landscape is in a constant state of flux, driven by relentless innovation from giants like Google DeepMind. Every new model release is scrutinized for speed, capability, and, crucially, cost. When Google announced the preview of **Gemini 3.1 Flash-Lite**—touted as the fastest and most efficient model in the Gemini 3 series—the expectation was clear: superior speed should translate to cheaper inference.

However, the reality presented a significant disruption: while the model is markedly smarter than its predecessor, its output costs have reportedly *tripled*. This development is not just a footnote in a press release; it represents a profound inflection point in how the industry values and monetizes artificial intelligence.

The Paradox: Capability Over Commodity Pricing

For years, the mantra in cloud computing and AI deployment was that efficiency gains lead to deflation. We saw this with early LLMs, where newer, faster models often undercut the prices of their slower predecessors. The name "Flash-Lite" suggests a model built for high-volume, low-latency tasks, the bread and butter of customer service bots or quick content summaries. Why, then, would Google charge three times as much for its output?

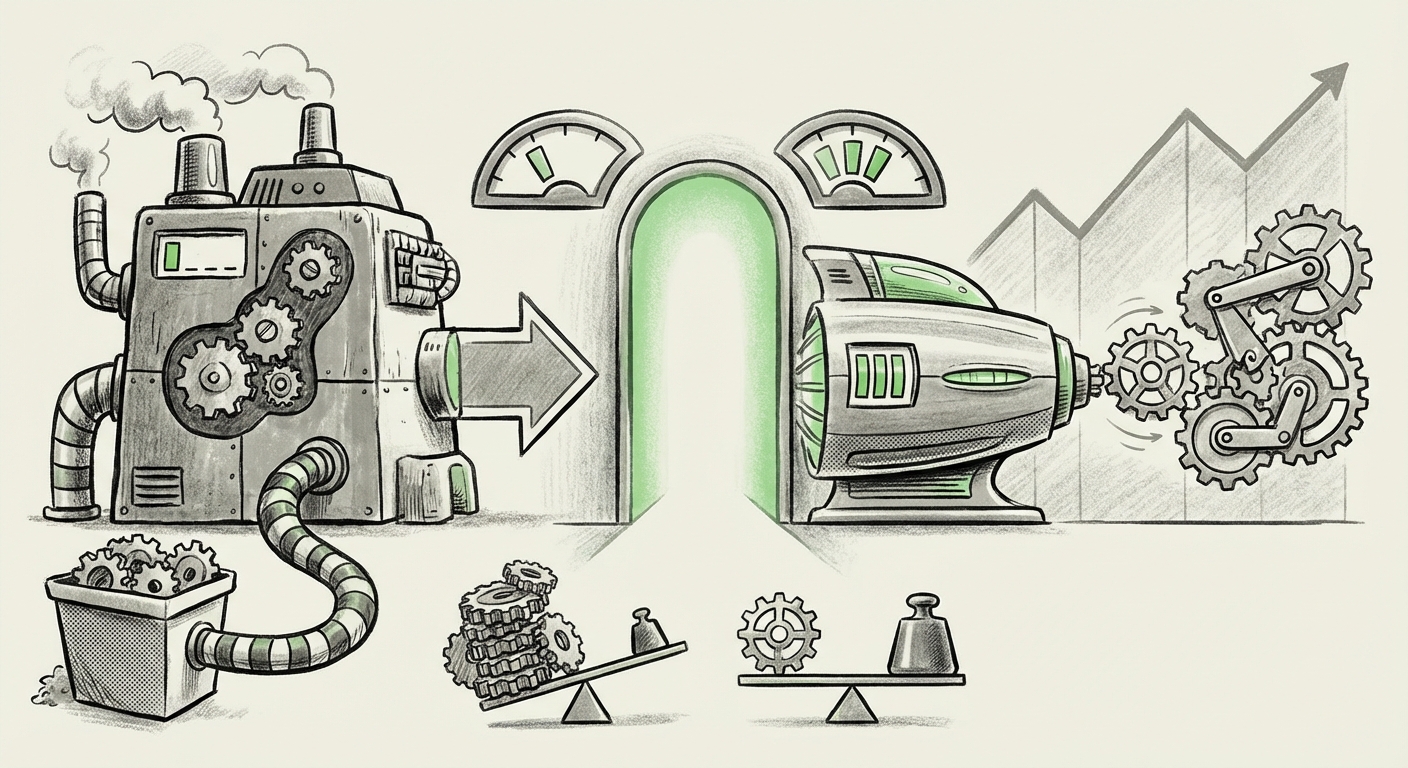

This paradox suggests a necessary recalibration of market dynamics. The cost of *simple speed* might be approaching a commodity floor, but the cost of *reliable, advanced reasoning* remains stubbornly high. Gemini 3.1 Flash-Lite appears to have crossed an invisible threshold where the added capability—better understanding, fewer hallucinations, and more nuanced output—is now priced according to the *value* it delivers, rather than the *cost* to run it.

Decoding the "Smarter" Premium

For business users, "smarter" can translate directly into a lower Total Cost of Ownership (TCO). Imagine a chatbot that requires 20% fewer human reviewers to check its answers because the AI is inherently more accurate. In many deployments, that labor saving can dwarf the 3x increase in output-token cost. Similarly, in code generation or complex data analysis, a smarter model reduces engineering time spent on debugging or re-prompting.

This move forces developers and CTOs to stop calculating cost purely on input/output tokens and start assessing the **End-to-End Effectiveness Cost (EEC)**: inference spend plus the human effort needed to make the output usable. If the cheaper model costs $1 to run but requires $5 in human oversight ($6 in total), the smarter model at $3 that needs only $0.50 in oversight ($3.50 in total) saves $2.50 per task, roughly 42%.
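The EEC comparison is simple arithmetic, and it helps to make it explicit before auditing real workflows. The sketch below reuses the illustrative dollar figures from this section; `ModelOption`, `eec`, and `break_even_oversight` are hypothetical names (not part of any vendor SDK), and "oversight cost" stands in for whatever review and rework costs your own audit actually measures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    """Per-task costs for one model choice (illustrative figures, in dollars)."""
    name: str
    inference_cost: float  # token spend to run the model on the task
    oversight_cost: float  # human review, re-prompting, and fix-up work

    @property
    def eec(self) -> float:
        """End-to-End Effectiveness Cost: inference plus oversight."""
        return self.inference_cost + self.oversight_cost


def break_even_oversight(cheap: ModelOption, smart_inference_cost: float) -> float:
    """Highest oversight cost the pricier model can carry and still tie the cheap one."""
    return cheap.eec - smart_inference_cost


cheap = ModelOption("cheaper model", inference_cost=1.00, oversight_cost=5.00)
smart = ModelOption("smarter model", inference_cost=3.00, oversight_cost=0.50)

saving = cheap.eec - smart.eec
print(f"{cheap.name}: ${cheap.eec:.2f}")  # cheaper model: $6.00
print(f"{smart.name}: ${smart.eec:.2f}")  # smarter model: $3.50
print(f"saving per task: ${saving:.2f} ({saving / cheap.eec:.0%})")
# saving per task: $2.50 (42%)
print(f"break-even oversight: ${break_even_oversight(cheap, smart.inference_cost):.2f}")
# break-even oversight: $3.00
```

The break-even figure is the useful one for procurement: under these assumed numbers, the pricier model stays ahead as long as its outputs need less than $3.00 of human oversight per task.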

Contextualizing the Price Hike: A Look at the Competitive Field

To understand whether this is a Google-specific strategy or an industry-wide shift, we must look at the competition. The AI marketplace is fiercely competitive, with OpenAI (GPT models) and Anthropic (Claude) as Google's main rivals. A look at their pricing structures suggests a pattern:

- The Race to the Middle: Competitors are also heavily marketing their "Fast" or "Haiku-level" models, but the newest, highly capable versions often come with higher introductory pricing or quickly migrate to a "premium efficiency" bracket.

- Capability Segmentation: The market is clearly segmenting. There are now ultra-cheap, low-logic models for basic tasks, and premium, high-logic models for complex tasks. Gemini 3.1 Flash-Lite seems to be establishing a very high-quality middle ground, demanding a premium for its speed/smart combo.

If rivals have likewise raised prices when boosting reasoning capabilities, even if they package it differently, that points to an underlying economic reality: significant performance leaps do not come free. The foundational hardware (e.g., advanced TPUs or GPUs) and the specialized data required to make models significantly smarter are expensive to develop and serve at scale.

External analyses of OpenAI and Anthropic pricing structures and performance tiers support the view that major players are adjusting their pricing based on measurable intelligence gains, not just token throughput.

The Economics of Inference: Why Smarter is Harder

It is tempting to think that once a model is trained, serving it should be cheap. But "Flash-Lite" suggests optimization for inference speed, not necessarily simplicity of architecture. The tripled price points toward the complex economics facing AI infrastructure providers, particularly the cost to serve generative AI models.

Building a model that is "significantly more capable" requires immense resources:

- Advanced Training Regimes: Achieving better reasoning often means training the model on higher-quality, curated, and often proprietary datasets, or employing sophisticated, resource-intensive reinforcement learning techniques (RLHF/RLAIF).

- Architectural Complexity at Scale: Even an optimized "Lite" model might utilize advanced sparse activation or Mixture-of-Experts (MoE) techniques that increase complexity during serving, even if they make the model faster overall. These complex computations still require high-end, expensive processing units.

- Recouping R&D Investment: Google has invested billions into the Gemini family. The pricing structure must eventually reflect the need to recoup these massive Research and Development costs, especially as the gap between consumer-facing applications and underlying research widens.

The "Lite" label might refer to the model's *latency* or *throughput* capabilities for the end-user, but not its *underlying computational footprint* required to maintain that high level of intelligence.
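Google has not published Flash-Lite's architecture, so the following is only an illustration of the Mixture-of-Experts point above. In a typical MoE layout, every expert must stay resident in accelerator memory because any token may be routed to any expert, yet each token only runs through a few of them. The function name and every number below are hypothetical, and the 2-bytes-per-parameter figure assumes 16-bit weights.

```python
from __future__ import annotations


def moe_param_counts(shared_params: float, expert_params: float,
                     num_experts: int, top_k: int) -> tuple[float, float]:
    """Return (resident, active_per_token) parameter counts for a toy MoE model.

    resident: all experts must sit in accelerator memory, since any token
              may be routed to any expert.
    active:   each token only runs through the shared layers plus top_k experts.
    """
    if not 1 <= top_k <= num_experts:
        raise ValueError("top_k must be between 1 and num_experts")
    resident = shared_params + num_experts * expert_params
    active = shared_params + top_k * expert_params
    return resident, active


# Hypothetical configuration, NOT Gemini's: 2B shared params,
# 16 experts of 1B params each, top-2 routing.
resident, active = moe_param_counts(2e9, 1e9, num_experts=16, top_k=2)
bytes_per_param = 2  # assumes 16-bit (bf16) weights

print(f"resident: {resident / 1e9:.0f}B params, "
      f"~{resident * bytes_per_param / 1e9:.0f} GB of weights")
# resident: 18B params, ~36 GB of weights
print(f"active per token: {active / 1e9:.0f}B params")
# active per token: 4B params
```

Compute per token scales with the active count, which is what makes such a model feel fast; the hardware bill scales closer to the resident count, which is one way a "Lite" model could still be costly to serve.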

Future Implications: A Maturing AI Market

The Gemini 3.1 Flash-Lite pricing strategy suggests that the AI market is maturing beyond the initial "land grab" phase. Viewed through the lens of model capability versus cost, it indicates a crucial shift:

1. End of the Absolute Cheapest Tier

The market for "good enough" AI is being aggressively redefined. Users who previously chose the cheapest option (and accepted frequent failures or poor reasoning) may now find that a more expensive but significantly smarter tier, like the new Flash-Lite, is the *de facto* standard for production use.

2. Focus on Vertical Integration and Cloud Strategy

This decision is likely tied to Google’s cloud strategy. By pricing its most capable models at a premium within its proprietary ecosystem (Google Cloud), Google gives enterprises a reason to migrate high-value workloads. If the best combination of cutting-edge models and superior infrastructure is available only through its platform, Google can lock in lucrative long-term contracts, treating model pricing as a key driver of cloud consumption.

3. Increased Barrier to Entry for Startups

While open-source models provide a floor, if the leading proprietary providers continually raise the cost floor for "production-ready" performance, smaller startups relying on pay-as-you-go API access will face mounting operational costs faster than ever before. This could inadvertently consolidate power among well-capitalized incumbents who can better absorb initial high costs while they secure enterprise contracts.

Actionable Insights for Businesses

For organizations leveraging generative AI today, the Gemini 3.1 Flash-Lite announcement mandates a strategic realignment of procurement and development:

- Re-evaluate TCO, Not Just Token Cost: Immediately audit existing workflows running on older, cheaper models. Quantify the hidden costs: human review time, context-window failures, and output refinement steps. If the savings on these soft costs exceed the added token spend, the 3x price increase is a bargain.

- Develop Multi-Model Portfolios: Do not rely on a single model tier. Use the truly cheapest models for simple, low-risk tasks (e.g., simple data classification) and reserve the "Flash-Lite Premium" models only for tasks requiring high coherence, complex reasoning, or sensitive accuracy.

- Negotiate Cloud Contracts: If you are a high-volume Google Cloud user, engage your account team now. Model pricing may be negotiable based on committed usage spend, so use your current cloud commitment as leverage to secure more favorable future pricing on newer models.

- Invest in Prompt Engineering Training: If you cannot afford the new pricing tier for all tasks, deep investment in specialized prompt engineering to maximize the output quality of your *current* cheaper models becomes even more critical. You must extract maximum utility from the lower-cost resources you rely on.
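The multi-model portfolio idea can be sketched as a small router. This version adds an escalation fallback (often called a "model cascade"), which is one common pattern rather than something the announcement prescribes: high-risk tasks go straight to the premium tier, and everything else tries the budget tier first, escalating only if a quality check fails. The models here are plain stub functions standing in for real API clients; `route`, `budget_stub`, `premium_stub`, and `non_empty` are all hypothetical.

```python
from typing import Callable, Tuple

# A "model" is any function from prompt to text; in practice it would wrap an API call.
Model = Callable[[str], str]


def route(prompt: str, *, high_risk: bool, budget: Model, premium: Model,
          passes_check: Callable[[str], bool]) -> Tuple[str, str]:
    """Return (tier_used, output) for one task.

    High-risk tasks go straight to the premium tier. Everything else tries the
    budget tier first and escalates only if the draft fails the quality check.
    """
    if high_risk:
        return "premium", premium(prompt)
    draft = budget(prompt)
    if passes_check(draft):
        return "budget", draft
    return "premium", premium(prompt)


# Stub models and a toy check, standing in for real clients and validators.
def budget_stub(prompt: str) -> str:
    # Pretends the cheap model fails (returns nothing) on anything needing explanation.
    return "" if "explain" in prompt else prompt.upper()


def premium_stub(prompt: str) -> str:
    return f"detailed answer to: {prompt}"


def non_empty(text: str) -> bool:
    return bool(text.strip())


print(route("label: spam?", high_risk=False, budget=budget_stub,
            premium=premium_stub, passes_check=non_empty))
# ('budget', 'LABEL: SPAM?')
print(route("explain this clause", high_risk=False, budget=budget_stub,
            premium=premium_stub, passes_check=non_empty))
# ('premium', 'detailed answer to: explain this clause')
print(route("approve refund?", high_risk=True, budget=budget_stub,
            premium=premium_stub, passes_check=non_empty))
# ('premium', 'detailed answer to: approve refund?')
```

The trade-off in this design is that a failed budget draft means paying for two calls, so a cascade only pays off when the cheap tier passes the check often enough; routing obviously complex tasks straight to the premium tier avoids that double charge.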

Conclusion: The Intelligence Premium is Here

Google’s reported decision to triple the output price of the fastest and most efficient model in its Gemini 3 series is a clear signal to the industry: the race for raw speed is over; the competition is now centered on delivering reliable, high-quality intelligence efficiently. The era of expecting AI services to follow the traditional Moore's Law deflation curve seems paused, replaced by a model where **value extraction commands a premium price tag.**

As developers, we must adapt our budgeting models from simple per-token metrics to sophisticated effectiveness metrics. The future AI stack will not be defined by the cheapest model, but by the most cost-effective intelligent component for the specific job at hand. The AI industry is growing up, and like any mature technology sector, it is learning to charge appropriately for transformative capability.