The MCP Revolution: How API-Centric AI and Function Calling Are Redefining Enterprise Intelligence by 2026

The journey of Artificial Intelligence from research lab curiosity to essential enterprise backbone is accelerating. We are moving past simple chatbot deployments and entering an era defined by sophisticated, reliable, and integrated AI operations. A recent look into the emerging **MCP Architecture**—touted as the blueprint for 2026—signals a major architectural shift:

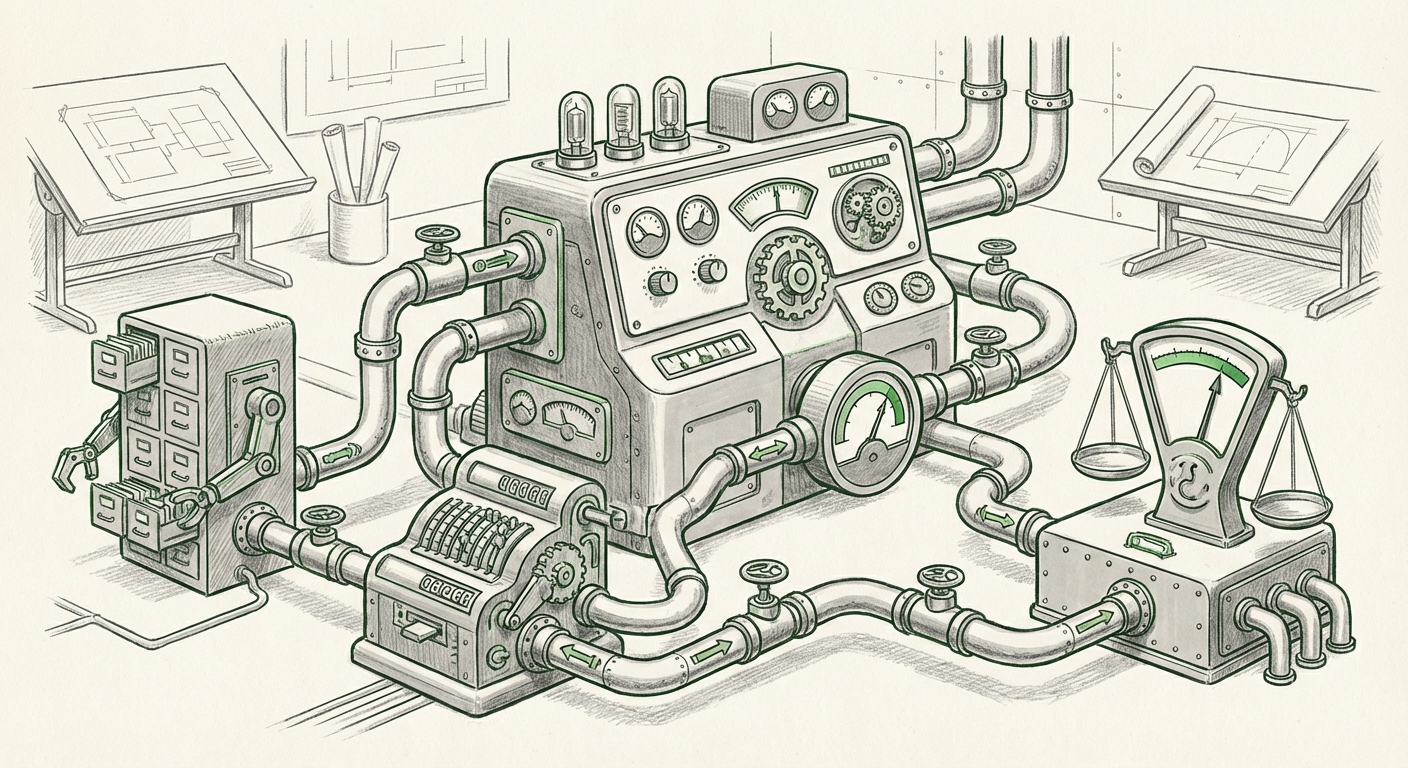

The central tenet of the MCP model is its focus on operationalization. (The acronym most commonly stands for the Model Context Protocol, an open standard for connecting LLMs to external tools and data; this article also considers readings such as Model Confidence Prediction and Model Control Platform.) Specifically, it highlights deploying these intelligent components as standard **API endpoints** and driving their utility through **function calling** within large language model (LLM) workflows. This is not just an incremental improvement; it is a fundamental realignment of how AI components interact with the real world.

To understand the significance of this vision, we must examine the underlying technological currents that validate and support this predicted 2026 structure. We look at four critical areas corroborating the rise of the MCP paradigm.

The Foundation: Why Architecture is Shifting Towards Modular AI

For years, integrating AI meant stitching together various models and data sources, often resulting in brittle, hard-to-update systems. The MCP vision directly addresses this complexity by advocating for **decoupling**—treating specialized AI functions (like prediction, validation, or data retrieval) as independent, consumable services.

1. The Ubiquity of LLM Function Calling

The most crucial enabling technology for the MCP architecture is the maturity of **function calling** (or tool use) within foundational models. An LLM, by itself, is a brilliant predictor of text sequences. But for an enterprise application, it needs to act. It needs to check inventory, run a calculation, or query a database.

The concept detailed in the Clarifai guide, integrating MCP tools into LLM workflows via function calling, suggests that the industry is standardizing on this mechanism for connecting the "brain" (the LLM) to the "hands" (the tools).

For the technical audience (MLOps Engineers): This means your focus shifts from prompt engineering alone to designing robust, schema-defined APIs that the LLM can reliably call. The reliability of these external service endpoints dictates the reliability of the entire workflow. If an MCP server acts as a specialized tool API, its uptime and response format are mission-critical.
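To make the "schema-defined API" idea concrete, here is a minimal sketch of a tool definition and a dispatcher that validates a model-emitted call before executing it. The tool name `check_inventory`, the stock data, and the `dispatch` helper are all hypothetical; the schema follows the JSON Schema style commonly used for function calling, but no specific vendor format is implied.

```python
import json

# Hypothetical tool definition, written in the JSON Schema style that
# function-calling APIs commonly use to describe parameters.
CHECK_INVENTORY_SCHEMA = {
    "name": "check_inventory",
    "description": "Return the units in stock for a product SKU.",
    "parameters": {
        "type": "object",
        "properties": {"sku": {"type": "string"}},
        "required": ["sku"],
    },
}

# Stand-in data; a real tool would query an inventory service.
_STOCK = {"A-100": 42, "B-200": 0}


def check_inventory(sku: str) -> dict:
    """Deterministic tool body: look up stock for one SKU."""
    return {"sku": sku, "in_stock": _STOCK.get(sku, 0)}


# Registry mapping tool names to (schema, implementation).
TOOLS = {"check_inventory": (CHECK_INVENTORY_SCHEMA, check_inventory)}


def dispatch(tool_call_json: str) -> dict:
    """Validate a tool call emitted by the model as JSON, then run it."""
    try:
        call = json.loads(tool_call_json)
    except json.JSONDecodeError:
        return {"error": "tool call is not valid JSON"}
    if not isinstance(call, dict):
        return {"error": "tool call must be a JSON object"}

    name = call.get("name")
    if not isinstance(name, str) or name not in TOOLS:
        return {"error": f"unknown tool: {name!r}"}

    schema, func = TOOLS[name]
    args = call.get("arguments", {})
    if not isinstance(args, dict):
        return {"error": "arguments must be a JSON object"}

    params = schema["parameters"]
    missing = [k for k in params["required"] if k not in args]
    unexpected = [k for k in args if k not in params["properties"]]
    if missing or unexpected:
        return {"error": f"missing={missing} unexpected={unexpected}"}
    return func(**args)


print(dispatch('{"name": "check_inventory", "arguments": {"sku": "A-100"}}'))
```

Returning a structured error instead of raising keeps the workflow alive: the error can be passed back to the model so it can correct the call.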

This trend is maturing rapidly. Major model providers are pushing standardized formats for defining these tools, with the aim of interoperability across platforms. A search for "LLM function calling production adoption trends" suggests that vendors are investing heavily in making this process stable, auditable, and fast, which is a prerequisite for any platform architecture aiming for wide adoption by 2026.

2. The Business Imperative for API-First AI

Why deploy these MCP servers as standalone **API endpoints**? The answer lies in scalability and agility. In the pre-MCP world, many companies trained massive, monolithic models to handle several tasks, making updates difficult and expensive.

An API-first approach flips this model. If the 'Confidence Prediction' module needs an upgrade (perhaps moving to a new, smaller calibration model), it can be swapped out behind its API gateway without touching the core LLM application.
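As a rough illustration of that swap, the sketch below keeps caller code fixed while the scoring implementation behind a gateway changes. Every name here (`ConfidenceScorer`, `Gateway`, the two scorer classes) is invented for the example, and the word-overlap "scoring" is a toy stand-in, not a real calibration model.

```python
from typing import Protocol


class ConfidenceScorer(Protocol):
    """The stable contract callers depend on (hypothetical)."""

    def score(self, answer: str, evidence: list[str]) -> float: ...


class OverlapScorerV1:
    """Toy scorer: fraction of answer words that appear in the evidence."""

    def score(self, answer: str, evidence: list[str]) -> float:
        words = answer.lower().split()
        corpus = set(" ".join(evidence).lower().split())
        return sum(w in corpus for w in words) / len(words) if words else 0.0


class StopwordAwareScorerV2:
    """Replacement with the same contract: ignores common stopwords."""

    STOPWORDS = {"the", "a", "an", "is", "of"}

    def score(self, answer: str, evidence: list[str]) -> float:
        words = [w for w in answer.lower().split() if w not in self.STOPWORDS]
        corpus = set(" ".join(evidence).lower().split())
        return sum(w in corpus for w in words) / len(words) if words else 0.0


class Gateway:
    """Routes calls to whichever implementation is currently registered."""

    def __init__(self, scorer: ConfidenceScorer) -> None:
        self._scorer = scorer

    def swap(self, scorer: ConfidenceScorer) -> None:
        self._scorer = scorer

    def score(self, answer: str, evidence: list[str]) -> float:
        return self._scorer.score(answer, evidence)


def needs_review(gateway: Gateway, answer: str, evidence: list[str],
                 threshold: float = 0.85) -> bool:
    """Caller code: depends only on the gateway, never on a scorer class."""
    return gateway.score(answer, evidence) < threshold


gateway = Gateway(OverlapScorerV1())
evidence = ["refund is 20 dollars"]
before = gateway.score("the refund is 20", evidence)
gateway.swap(StopwordAwareScorerV2())  # upgrade without touching callers
after = gateway.score("the refund is 20", evidence)
print(before, after)
```

In a deployed system the gateway would be a network component rather than a class, but the design point is the same: the contract stays fixed while the implementation moves.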

For the business leader: This means faster iteration cycles. You can pilot a new risk assessment tool (an MCP service) with one client group, monitor its performance via API logs, and roll it out broadly only after validation. This modularity directly translates to reduced deployment risk and lower maintenance overhead.

Analyzing trends in "API-first architecture for large language models" shows a clear migration toward treating every significant AI capability—from summarization to compliance checking—as a scalable microservice. This decoupling is essential for managing the sheer volume and diversity of models enterprises will soon rely on.

The "Confidence" Factor: Building Trust into the Core

If the 'P' in MCP stands for Prediction, it implies a focus on measurement. In the world of generative AI, the greatest barrier to broad enterprise adoption remains trust—the fear of hallucination, bias, or outright error.

3. Operationalizing AI Trust Through Confidence Scoring

A system designed around "Model Confidence Prediction" suggests that the output of an LLM workflow will always be accompanied by a calibrated score indicating how much the system trusts its own result. This shifts the conversation from "Is this output correct?" to "How much confidence do we assign to this output?"

This addresses a need that surfaces when examining "AI model confidence calibration enterprise adoption": high-stakes applications (like medical diagnosis aids or financial compliance checks) cannot rely on a simple "best guess." They require quantifiable uncertainty.
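One common way to quantify whether a score is actually "calibrated" is expected calibration error (ECE): group predictions into confidence bins and compare each bin's average confidence with its observed accuracy. The sketch below is a plain-Python version with equal-width bins; the article does not prescribe this metric, so treat it as one reasonable option.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between stated confidence and observed accuracy.

    confidences: floats in [0, 1]; correct: matching booleans.
    """
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # A confidence of exactly 1.0 falls into the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))

    total = len(confidences)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(c for c, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        ece += (len(bucket) / total) * abs(accuracy - avg_conf)
    return ece


# A scorer that says "90%" and is right 9 times out of 10 is well calibrated.
well_calibrated = expected_calibration_error([0.9] * 10, [True] * 9 + [False])

# The same stated confidence with only 5 of 10 correct is overconfident.
overconfident = expected_calibration_error([0.9] * 10, [True] * 5 + [False] * 5)

print(round(well_calibrated, 3), round(overconfident, 3))
```

A low ECE means the reported number can be read at face value, which is what makes a fixed threshold meaningful.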

Practical Implication: If an MCP service returns a confidence score below a threshold (say, 85%), the system can automatically trigger a fallback mechanism: rerouting the query to a human expert, invoking a more rigorous internal audit tool (another MCP service), or requesting more context from the user.
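The fallback logic can be sketched as a small routing function. The 0.85 threshold comes from the example above; the order in which the three fallbacks are tried, and the `high_stakes` and `has_sufficient_context` flags, are assumptions made for illustration only.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_RESPOND = "auto_respond"
    ASK_FOR_CONTEXT = "ask_for_context"
    HUMAN_REVIEW = "human_review"
    AUDIT_TOOL = "audit_tool"


@dataclass
class ScoredResult:
    answer: str
    confidence: float             # calibrated score in [0, 1]
    high_stakes: bool = False     # hypothetical flag set by the workflow
    has_sufficient_context: bool = True


def route(result: ScoredResult, threshold: float = 0.85) -> Route:
    """Pick the next step for a scored answer."""
    if result.confidence >= threshold:
        return Route.AUTO_RESPOND
    # Below threshold: assumed priority order, adjust to your own policy.
    if not result.has_sufficient_context:
        return Route.ASK_FOR_CONTEXT
    if result.high_stakes:
        return Route.HUMAN_REVIEW
    return Route.AUDIT_TOOL


print(route(ScoredResult("Approved", 0.97)))
print(route(ScoredResult("Approved", 0.60, high_stakes=True)))
```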

This integration of trust metrics into the core architecture is perhaps the most significant evolution driving AI adoption over the next few years. It moves AI from being an 'oracle' to being a 'consultant' whose reliability is transparently reported.

4. Feeding the Beast: Sophisticated Data Retrieval (RAG Evolution)

For any confidence predictor or tool executor to function accurately, it needs grounding. Modern AI systems rarely run purely on their base training data; they use Retrieval-Augmented Generation (RAG) to pull in real-time, proprietary information.

An MCP architecture implies that the tools it executes often involve advanced retrieval. This calls for a closer look at the infrastructure supporting that retrieval, a topic often framed as "Vector database evolution beyond basic RAG."

A simple RAG system pulls similar documents based on embedding similarity. A 2026 system—the kind that an advanced MCP architecture would rely on—needs:

- Hybrid Search: Combining keyword search with vector similarity for highly precise results.

- Metadata Filtering: Ensuring the model only retrieves documents published after a certain date or approved by a specific department.

- Contextual Synthesis: Tools that don't just return documents but synthesize the required facts into a ready-to-use snippet for the LLM.

If the LLM decides, via function call, that it needs the latest sales figures, the underlying vector database must be sophisticated enough to return that data accurately and quickly. The architecture therefore depends on close integration between high-level workflow orchestration (MCP) and high-performance data grounding (Vector DBs), even though each remains a separately deployed service.
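Two of the requirements listed above, hybrid search and metadata filtering, can be sketched in a few lines of plain Python. This is a toy in-memory version: the `Doc` fields, the equal weighting (`alpha=0.5`), and the term-overlap keyword score are all assumptions, and a production system would delegate both steps to the vector database itself.

```python
import math
from dataclasses import dataclass
from datetime import date


@dataclass
class Doc:
    doc_id: str
    text: str
    embedding: list[float]
    published: date
    department: str


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def keyword_score(query: str, text: str) -> float:
    """Fraction of query terms that occur in the document text."""
    terms = query.lower().split()
    words = set(text.lower().split())
    return sum(t in words for t in terms) / len(terms) if terms else 0.0


def hybrid_search(query, query_vec, docs, *, published_after, department,
                  alpha=0.5, top_k=3):
    # 1. Metadata filtering: drop documents that are too old or off-limits.
    candidates = [d for d in docs
                  if d.published > published_after and d.department == department]
    # 2. Hybrid scoring: blend vector similarity with keyword overlap.
    scored = [(alpha * cosine(query_vec, d.embedding)
               + (1 - alpha) * keyword_score(query, d.text), d.doc_id)
              for d in candidates]
    scored.sort(reverse=True)
    return [doc_id for _, doc_id in scored[:top_k]]


docs = [
    Doc("q3", "q3 sales figures emea", [1.0, 0.0], date(2025, 10, 1), "sales"),
    Doc("old", "q3 sales figures emea", [1.0, 0.0], date(2023, 1, 1), "sales"),
    Doc("hr", "holiday policy", [0.0, 1.0], date(2025, 11, 1), "hr"),
    Doc("q2", "q2 marketing plan", [0.6, 0.8], date(2025, 7, 1), "sales"),
]
results = hybrid_search("q3 sales figures", [1.0, 0.0], docs,
                        published_after=date(2025, 1, 1), department="sales")
print(results)
```

Filtering before scoring matters: the outdated document matches the query perfectly on both signals, yet it never reaches the ranking step.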

Future Implications: What This Means for Business and Society

The shift toward this modular, API-driven, and trust-calibrated architecture has sweeping implications across industries.

The Democratization of Complex AI Workflows (For the Business Audience)

In the past, building complex, multi-step AI processes required specialized AI engineers working deeply inside proprietary model frameworks. The MCP model, leveraging standardized APIs and function calling, allows line-of-business application developers to assemble sophisticated AI workflows using off-the-shelf, well-defined building blocks.

Imagine a Customer Service platform. Instead of one giant LLM trying to handle everything, the workflow becomes:

- User asks a question.

- LLM recognizes it needs external data and calls the "Data Retrieval MCP" tool.

- The Data Retrieval MCP queries the Vector DB and returns grounded facts, alongside a 98% confidence score.

- If the user asks for a complex refund calculation, the LLM calls the "Calculation MCP" tool (a deterministic Python function exposed as an API).

- The final answer is synthesized, and the overall confidence score is reported.

This modularity means specialized teams can own specific parts of the AI pipeline, leading to faster innovation and clearer accountability.
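The customer service workflow above can be sketched end to end. Everything here is a stand-in: `choose_tools` replaces the LLM's tool selection with a keyword rule, the retrieval tool returns a canned fact, the 10% restocking fee is invented, and taking the minimum step confidence as the overall score is just one simple aggregation choice.

```python
from dataclasses import dataclass


@dataclass
class ToolResult:
    content: str
    confidence: float


def data_retrieval_tool(question: str) -> ToolResult:
    """Stand-in for the "Data Retrieval MCP": returns a canned grounded fact."""
    return ToolResult("Refunds are allowed within 30 days of purchase.", 0.98)


def calculation_tool(price: float, restocking_fee_rate: float) -> ToolResult:
    """Stand-in for the "Calculation MCP": deterministic, so confidence is 1.0."""
    amount = price * (1 - restocking_fee_rate)
    return ToolResult(f"Refund amount: {amount:.2f}", 1.0)


def choose_tools(question: str) -> list[str]:
    """Placeholder for the LLM's tool selection; a real model decides this."""
    tools = ["data_retrieval"]
    if "refund" in question.lower():
        tools.append("calculation")
    return tools


def answer(question: str, order_price: float = 0.0) -> tuple[str, float]:
    results = []
    for name in choose_tools(question):
        if name == "data_retrieval":
            results.append(data_retrieval_tool(question))
        elif name == "calculation":
            # Hypothetical 10% restocking fee.
            results.append(calculation_tool(order_price, 0.10))
    text = " ".join(r.content for r in results)
    # Assumed aggregation: the chain is only as trustworthy as its weakest step.
    overall_confidence = min(r.confidence for r in results)
    return text, overall_confidence


print(answer("What is your return policy?"))
print(answer("How much is my refund?", order_price=80.0))
```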

The Governance Challenge: Auditing the Interconnected Machine

While modularity aids agility, it complicates governance. If a mistake occurs, tracing the error back through a chain of API calls involving an LLM, a confidence predictor, and a vector search engine is complex.

This is where the ModelOps maturity cycle must catch up. The architecture demands comprehensive observability. We need tools that can trace every function call made by the LLM, log the confidence score associated with that call, and record the input/output parameters of the executed MCP service.
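A minimal sketch of that kind of tracing is a decorator that records each tool call's inputs, output, confidence, status, and duration. The in-memory `TRACE_LOG` list and the `lookup_policy` tool are hypothetical; a real deployment would ship these records to a tracing or logging backend, and the sketch assumes tools return a dict with an optional `confidence` key.

```python
import time
import uuid
from functools import wraps

# Hypothetical in-memory sink; swap for a real tracing backend.
TRACE_LOG: list[dict] = []


def traced(tool_name: str):
    """Record every call to the wrapped tool, including failures."""
    def decorator(func):
        @wraps(func)
        def wrapper(**kwargs):
            started = time.perf_counter()
            record = {
                "call_id": str(uuid.uuid4()),
                "tool": tool_name,
                "input": dict(kwargs),
            }
            try:
                output = func(**kwargs)
                record["output"] = output
                # Assumes tools return a dict that may carry a confidence.
                record["confidence"] = (
                    output.get("confidence") if isinstance(output, dict) else None
                )
                record["status"] = "ok"
                return output
            except Exception as exc:
                record["status"] = "error"
                record["error"] = repr(exc)
                raise
            finally:
                record["duration_ms"] = (time.perf_counter() - started) * 1000
                TRACE_LOG.append(record)
        return wrapper
    return decorator


@traced("lookup_policy")
def lookup_policy(topic: str) -> dict:
    return {"answer": f"Policy text for {topic}", "confidence": 0.91}


lookup_policy(topic="refunds")
print(TRACE_LOG[0]["tool"], TRACE_LOG[0]["status"], TRACE_LOG[0]["confidence"])
```

Logging in the `finally` clause means failed calls are traced too, which is usually where an audit starts.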

For AI Governance Teams, the focus shifts from scrutinizing the model weights to auditing the interaction protocols. Auditing must confirm that the LLM only calls authorized tools and that the confidence scoring mechanisms are unbiased and correctly integrated.
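The "only authorized tools" check lends itself to a simple automated audit over recorded calls. The sketch below assumes each call record is a dict with a `tool` key and that each workflow has an explicit allowlist; both the record shape and the workflow names are made up for the example.

```python
# Hypothetical per-workflow allowlists maintained by the governance team.
ALLOWED_TOOLS = {
    "customer_service": {"data_retrieval", "calculation"},
}


def audit_tool_calls(workflow: str, calls: list[dict]) -> list[dict]:
    """Return every recorded call that used a tool outside the allowlist."""
    # Unknown workflows get an empty allowlist, so everything is flagged.
    allowed = ALLOWED_TOOLS.get(workflow, set())
    return [call for call in calls if call["tool"] not in allowed]


recorded_calls = [
    {"call_id": "1", "tool": "data_retrieval"},
    {"call_id": "2", "tool": "calculation"},
    {"call_id": "3", "tool": "send_email"},
]
violations = audit_tool_calls("customer_service", recorded_calls)
print(violations)
```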

Actionable Insights: Preparing Your Infrastructure for 2026

The MCP architecture isn't a far-off dream; it’s the logical destination for current trends. Organizations that prepare now will gain a significant competitive edge.

For Infrastructure Teams: Embrace Service-Oriented AI

Stop thinking of models as monolithic applications. Start treating every distinct AI capability (e.g., translation, summarization, sentiment analysis, confidence scoring) as a distinct, versioned, and scalable microservice accessible via a standardized REST or gRPC API. Invest heavily in API gateway management and observability tools that understand AI traffic patterns.

For Data Science Teams: Focus on Interface Contracts

Your LLMs will be the orchestrators, but the success of the orchestration depends on the clarity of the tools they call. Define precise input/output schemas (like OpenAPI specs) for every tool you expose to your orchestrating LLM. The model's ability to reliably call your function is paramount.
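An interface contract can start as nothing more than typed request and response objects with strict parsing at the boundary. The sentiment tool and its field names below are hypothetical, and the hand-written checks stand in for what an OpenAPI or JSON Schema validator would enforce.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class SentimentRequest:
    text: str
    language: str = "en"


@dataclass(frozen=True)
class SentimentResponse:
    label: str
    confidence: float


def parse_request(payload: dict) -> SentimentRequest:
    """Reject anything that does not match the contract exactly."""
    allowed = {f.name for f in fields(SentimentRequest)}
    unknown = set(payload) - allowed
    if unknown:
        raise ValueError(f"unknown fields: {sorted(unknown)}")
    text = payload.get("text")
    if not isinstance(text, str) or not text.strip():
        raise ValueError("'text' must be a non-empty string")
    language = payload.get("language", "en")
    if not isinstance(language, str):
        raise ValueError("'language' must be a string")
    return SentimentRequest(text=text, language=language)


def analyze(request: SentimentRequest) -> SentimentResponse:
    """Toy implementation; a real tool would call a model here."""
    label = "positive" if "great" in request.text.lower() else "neutral"
    return SentimentResponse(label=label, confidence=0.5)


print(analyze(parse_request({"text": "This is great"})))
```

Rejecting unknown fields is a deliberate choice: when an LLM invents a parameter, a loud error it can react to is more useful than silently ignoring the argument.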

For Leadership: Prioritize Trust Engineering

Begin defining what "acceptable confidence" means for your most critical business processes today. Start running shadow A/B tests where one path uses current LLM outputs and another path requires a confidence score from a dedicated calibration model. This builds the muscle memory needed to deploy highly trustworthy, MCP-enabled systems when they become the industry standard.
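One way to read the results of such a shadow test: for each request served by the current path, store the confidence the shadow path would have reported, then label correctness afterwards. The sketch below summarizes those records; the record fields and the three metrics are assumptions chosen for illustration, not an established reporting standard.

```python
def shadow_report(records: list[dict], threshold: float = 0.85) -> dict:
    """Summarize what a confidence gate would have done in production.

    Each record holds 'confidence' (the shadow score) and 'correct'
    (a label assigned after the fact, e.g. by human review).
    """
    flagged = [r for r in records if r["confidence"] < threshold]
    errors = [r for r in records if not r["correct"]]
    caught = [r for r in errors if r["confidence"] < threshold]
    return {
        # Share of traffic the gate would have sent to a fallback.
        "escalation_rate": len(flagged) / len(records) if records else 0.0,
        # Share of real errors the gate would have intercepted.
        "error_catch_rate": len(caught) / len(errors) if errors else 0.0,
        # Correct answers that would have been escalated unnecessarily.
        "false_escalations": sum(1 for r in flagged if r["correct"]),
    }


records = [
    {"confidence": 0.95, "correct": True},
    {"confidence": 0.90, "correct": True},
    {"confidence": 0.60, "correct": False},
    {"confidence": 0.70, "correct": True},
    {"confidence": 0.92, "correct": False},
]
report = shadow_report(records)
print(report)
```

Sweeping `threshold` over a range and comparing the reports is a practical way to turn "acceptable confidence" into a concrete number per business process.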

The integration of function calling, API deployment, and inherent confidence measurement—as suggested by the MCP concept—is charting the course for robust, scalable, and auditable enterprise AI. The future of intelligence isn't about one super-model; it’s about a highly coordinated ecosystem of specialized, trustworthy services.