The Hidden Cost of Smart Glasses: AI Training, Global Labor, and the Looming Privacy Wars

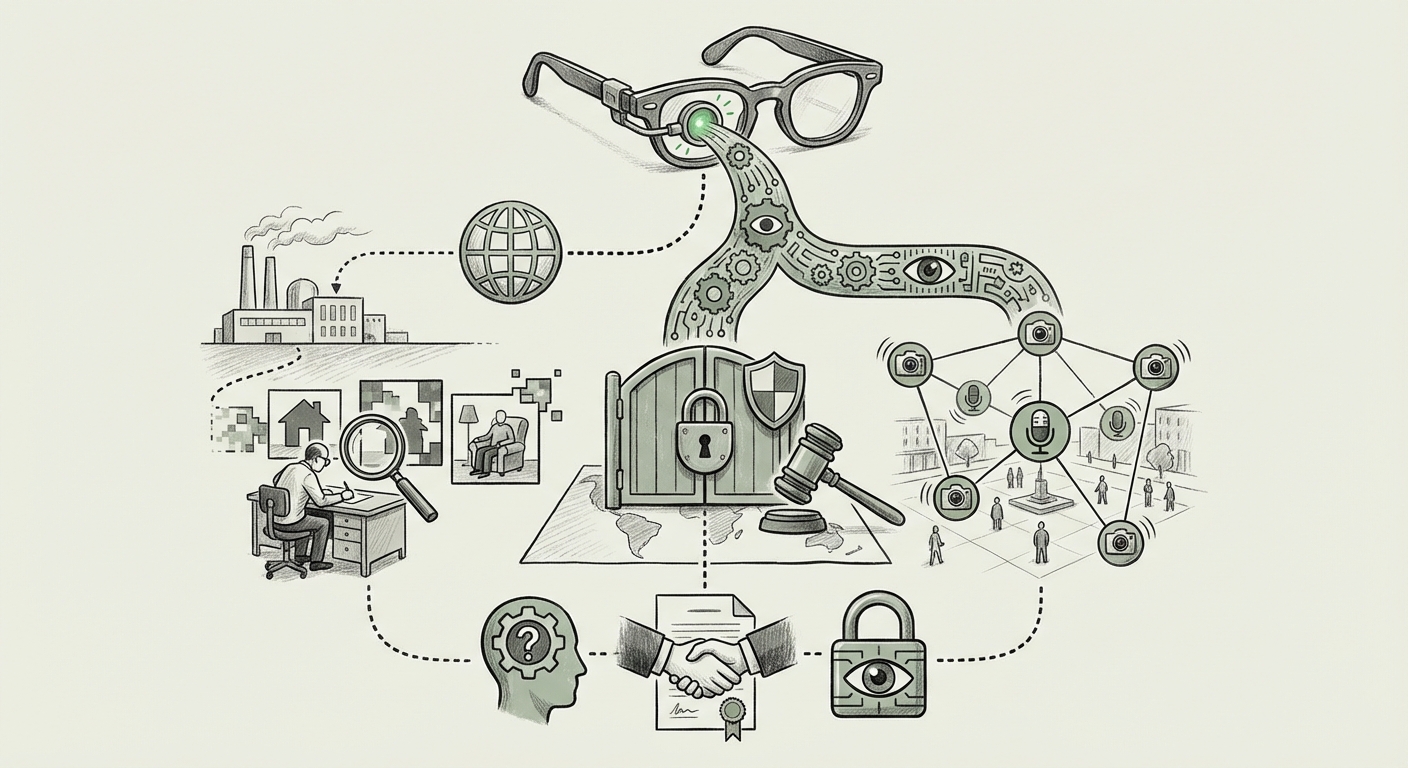

The race for next-generation artificial intelligence is not fought only in labs with massive server farms; it increasingly depends on the most intimate, raw data captured from human lives. Meta’s smart glasses are designed to blend seamlessly into daily activities. When they were revealed to be sending profoundly private footage, including intimate moments and bank details, to data workers in lower-cost regions with few safeguards, the industry was forced to confront an ugly truth.

This incident is more than just a PR blunder; it is a flashing red light illuminating three converging fault lines in modern technology: the opaque supply chain of AI training data, the territorial reach of global privacy law, and the inevitable erosion of privacy when devices become permanently "always-on." For businesses, developers, and regulators, understanding this nexus is critical to navigating the future of computing.

The Shadow Supply Chain: Outsourcing the Unseen Labor of AI

Modern AI models, from large language models (LLMs) to the computer vision systems powering smart glasses, require enormous amounts of labeled data to learn. Labeling goes well beyond tagging neat photos of cats; humans must review countless hours of video and audio to tag objects, interpret context, filter toxic content, and correct errors. This process, known as data annotation or labeling, is often outsourced.

When a wearable device captures the world as seen through the wearer's eyes, a capability essential for teaching the AI context, the resulting data is inherently personal. The challenge arises when this highly sensitive data, often captured in Western households, is shipped across borders to annotation centers in cities like Nairobi, where labor costs are lower and where privacy safeguards may be less stringent or less rigorously enforced.

This practice is consistent with what many investigative tech journalists have reported about the AI pipeline: **the "human-in-the-loop" is often invisible, under-protected, and exposed to extreme content.** Reports on AI data labeling outsourcing describe a landscape where workers are routinely asked to view trauma, violence, and extreme sexual content to make AI safer for end-users elsewhere. This ethical burden is compounded when the raw data they are viewing hasn't been sufficiently scrubbed of legally protected or deeply private information, like financial details or explicit personal moments.

For the AI developer, outsourcing is an economic necessity for rapid iteration. For society, it creates a fragile, high-risk data transfer mechanism where the principle of data minimization—the idea that you should only collect the data you absolutely need—is often ignored in the pursuit of comprehensive training sets.

Implication for Businesses: The Hidden Liability of the Vendor Chain

Technology companies can no longer treat their third-party data processors as an insulated cost center. Under increasing scrutiny, the liability for poor data handling follows the data, regardless of where it is being processed. CIOs and CTOs must recognize that the data handling practices of a vendor in Kenya are, legally and ethically, extensions of their own corporate policy.

The GDPR Hammer: When Global Tech Meets Local Law

The most immediate and tangible threat arising from this type of data leakage comes from European regulators. The General Data Protection Regulation (GDPR) is perhaps the world's most comprehensive data privacy framework, and it casts a long shadow over any company processing the personal data of people in the EU.

When footage containing identifiable people in the EU is sent abroad for processing, the transfer must rest on an adequacy decision or on appropriate safeguards such as standard contractual clauses. If Meta’s smart glasses are used by European customers, that raw footage falls under GDPR whenever individuals remain identifiable, as they do when nudity or bank details are visible to reviewers. Legal analysis consistently highlights the severe implications for smart, always-on devices.

The concern isn't just about the location of the processing; it's about the **nature of the data**. Footage of intimate moments can reveal special categories of personal data under GDPR, such as data concerning health or a person's sex life, and exposed financial details carry an obvious fraud risk. The law requires technical and organizational measures appropriate to that risk. If those measures are demonstrably weak in the outsourced setting, the resulting fines can reach €20 million or 4% of global annual turnover, whichever is higher.
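To make the scale of that exposure concrete, the upper bound in GDPR Article 83(5) can be worked through as simple arithmetic. The sketch below is illustrative only: the function name and the turnover figures are hypothetical, and an actual fine depends on many factors a regulator weighs case by case.

```python
def gdpr_max_fine_eur(annual_turnover_eur: int) -> int:
    """Upper bound under GDPR Art. 83(5): the higher of EUR 20 million
    or 4% of total worldwide annual turnover (integer euros)."""
    four_percent = annual_turnover_eur * 4 // 100
    return max(20_000_000, four_percent)


# Hypothetical turnover figures, chosen only to show both branches of the rule.
small_company = gdpr_max_fine_eur(100_000_000)       # 4% is EUR 4M, so the EUR 20M floor applies
large_company = gdpr_max_fine_eur(100_000_000_000)   # 4% is EUR 4B, so the percentage applies

print(small_company, large_company)
```

For a large platform company, the percentage branch dominates, which is why the turnover-based cap is the figure usually quoted.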

Furthermore, this incident serves as a powerful reminder of Meta’s own history. The company has faced repeated, massive fines for past data handling failures concerning Facebook and Instagram. Given that pattern, regulators may treat recurring privacy issues at Meta not as accidents but as evidence of systemic corporate negligence.

Implication for Law and Policy: Defining the Digital Border

This case forces lawmakers to clarify how established laws apply to data streams generated by *wearable, passive surveillance devices*. Current laws were largely designed for data actively uploaded by users (like a social media post). Smart glasses blur this line: the data is passively collected by a device the user *chooses* to wear, but the resulting stream is anything but passive.

The Future of Data Sourcing: Trust vs. Technological Capability

Looking ahead, the bottleneck for achieving highly capable, contextual AI is almost always the quality and quantity of training data. As we move toward more sophisticated augmented reality, pervasive sensing, and truly personalized AI agents, the need for data mirroring real-world complexity will only increase.

The Meta glasses incident presents a stark choice for the future:

- The Path of Pervasive Sensing: Continue to rely on devices that record everything, pushing the industry to develop ever-more complex, and often ethically compromised, methods of cleaning, transferring, and annotating that data globally. This path risks the complete collapse of public trust in wearable technology.

- The Path of Privacy-Preserving AI: Shift R&D investment heavily toward synthetic data generation, differential privacy techniques, and federated learning, where the AI model travels to the data (e.g., training locally on the user's glasses) rather than the data traveling to a centralized cleaning farm.
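The idea behind the second path, where the model travels to the data, can be sketched with a toy version of federated averaging. This is a minimal illustration under stated assumptions, not a description of any vendor's system: the one-parameter linear model, the sample data, and the function names are all hypothetical, and a production deployment would add protections such as secure aggregation and differential privacy on top.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (x, y) pairs that never leave the device


def local_update(w: float, data: List[Sample], lr: float = 0.1, steps: int = 20) -> float:
    """Run gradient descent on y ~ w * x using only this device's data."""
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_round(w_global: float, devices: List[List[Sample]]) -> float:
    """One round of federated averaging: each device trains locally and
    the server sees only the resulting weights, averaged by sample count."""
    total = sum(len(d) for d in devices)
    return sum(local_update(w_global, d) * len(d) for d in devices) / total


# Hypothetical on-device datasets; the underlying relationship is y = 2x.
devices = [
    [(1.0, 2.0), (2.0, 4.0)],
    [(1.0, 2.0), (3.0, 6.0), (0.5, 1.0)],
]

w = 0.0
for _ in range(5):
    w = federated_round(w, devices)

print(round(w, 3))
```

The server in this sketch only ever handles a single number per device, not the raw samples, which is the property that makes the approach attractive for footage captured inside people's homes.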

If tech leaders prioritize the first path—the need for massive, messy real-world data—they must fundamentally rethink their data governance. If the AI cannot be trained without viewing someone’s most private moments, perhaps the AI feature isn't ready for market, or perhaps the device itself is too invasive for widespread public adoption.

Implication for Users: The Death of Inadvertent Privacy

For the average person, the lesson is severe: any device that can see or hear everything you do is creating a digital shadow that will eventually be seen by someone else for training purposes. Inadvertent privacy—the expectation that you can walk around your home or a public space without every gesture, conversation, or outfit being logged, reviewed, and stored—is rapidly becoming a relic of the past.

Actionable Insights: Rebuilding Trust in the AI Supply Chain

For technology leaders aiming to innovate responsibly while managing immense regulatory and reputational risk, concrete actions must be taken now:

1. Embrace Data Minimization by Design

Before launching any new sensing feature, companies must ask: Can this AI function effectively with highly anonymized, aggregated, or synthetic data? If the answer is no, the feature requires a significantly higher level of internal review. Raw, unredacted video of private spaces should never leave the device environment without strong, verifiable protections, such as redaction before upload and encryption in transit and at rest.

2. Mandate Data Residency and Auditable Safeguards

For data that *must* be reviewed externally, institute strict residency requirements. Sensitive data should be processed within jurisdictions that match the data's origin (e.g., EU data stays in the EU for labeling). Furthermore, companies must commission independent, ongoing audits of vendor facilities specifically targeting data handling protocols, not just standard IT security.
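A residency rule of this kind can be enforced in code before any clip is queued for a vendor. The following is a minimal sketch, assuming a hypothetical policy table and clip record; the region codes and names are illustrative and carry no legal weight. The important design choice is that the check denies by default, so an unknown origin or an unlisted vendor region never passes.

```python
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple

# Hypothetical policy: footage may only be labeled in the region it came from.
ALLOWED_LABELING_REGIONS: Dict[str, Set[str]] = {
    "EU": {"EU"},
    "US": {"US"},
}


@dataclass(frozen=True)
class Clip:
    clip_id: str
    origin_region: str


def can_send(clip: Clip, vendor_region: str) -> bool:
    """Default-deny check: unknown origins or unlisted vendors are refused."""
    allowed = ALLOWED_LABELING_REGIONS.get(clip.origin_region, set())
    return vendor_region in allowed


def route(clips: List[Clip], vendor_region: str) -> Tuple[List[Clip], List[str]]:
    """Split clips into those cleared for the vendor and an audit log of refusals."""
    cleared: List[Clip] = []
    audit_log: List[str] = []
    for clip in clips:
        if can_send(clip, vendor_region):
            cleared.append(clip)
        else:
            audit_log.append(
                f"blocked {clip.clip_id}: {clip.origin_region} -> {vendor_region}"
            )
    return cleared, audit_log


clips = [Clip("c1", "EU"), Clip("c2", "US"), Clip("c3", "BR")]
cleared, audit_log = route(clips, "EU")
print([c.clip_id for c in cleared])
print(audit_log)
```

Keeping the refusals in an audit log gives independent auditors something concrete to inspect, which ties the residency rule to the audit requirement.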

3. Invest in On-Device Intelligence (Edge Computing)

The most powerful defense against this risk is keeping processing on the device itself. Future R&D budgets should prioritize optimizing smaller, specialized AI models that can perform basic contextual recognition (like "that's a dog," or "that's a credit card screen") directly on the glasses, sending only the resulting *metadata* (e.g., "Object identified: Credit Card") back to the cloud, not the image itself.
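The metadata-only pattern can be sketched as a payload builder that accepts a frame and a classifier but serializes only the label and confidence. Everything here is an assumption made for illustration: the `Detection` type, the stub classifier standing in for a real on-device model, and the payload field names are hypothetical.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass
class Detection:
    label: str
    confidence: float


def stub_classifier(frame: bytes) -> Detection:
    """Stand-in for a small on-device model; always reports a credit card."""
    return Detection(label="credit_card", confidence=0.97)


def build_cloud_payload(
    frame: bytes,
    classifier: Callable[[bytes], Detection],
    min_confidence: float = 0.5,
) -> str:
    """Classify locally and serialize only metadata. The frame bytes are
    used for inference and are never placed in the payload."""
    detection = classifier(frame)
    label = detection.label if detection.confidence >= min_confidence else "unknown"
    return json.dumps({"object": label, "confidence": round(detection.confidence, 2)})


frame = b"\x00\x01raw-pixels-that-stay-on-device"
payload = build_cloud_payload(frame, stub_classifier)
print(payload)
```

Because the payload is built from the detection alone, a reviewer or vendor downstream receives a label and a score rather than an image of the card.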

4. Prioritize Worker Welfare and Data Sanitization

The welfare of data labelers is inseparable from the quality of the AI product. Companies must ensure contracts mandate robust content filtering, psychological support for workers exposed to graphic content, and high privacy standards that match the expectations of the end-user. A commitment to privacy must extend to the people who enforce that privacy.

The Inevitable Collision

The incident involving Meta’s smart glasses footage is a textbook example of technological capability outpacing ethical governance. Cutting-edge AI requires massive data fuel, and the path of least resistance often leads to the exploitation of weaker regulatory environments and the exposure of deeply private user information.

The future of AI hinges on whether companies can shift from a model of *data extraction at all costs* to one of *privacy-preserving innovation*. If the trend of outsourcing the review of intimate user data continues unchecked, the result will not only be crippling regulatory action—particularly from GDPR enforcers—but also a fundamental breakdown in the consumer trust required for the next generation of pervasive computing devices to succeed. The smart glasses of tomorrow depend on the responsible stewardship of today’s private moments.