The Hidden Cost of Sight: Why Training AI on Private Videos Reveals the Cracks in Global Data Trust

The race for Artificial Intelligence dominance is often framed by impressive benchmarks and rapid deployment cycles. We celebrate models that can see, hear, and understand the world around us with startling accuracy. However, a recent report detailing how footage from Meta’s private AI glasses—including highly sensitive material like nude scenes and bank details—was sent to human reviewers in Kenya with minimal safeguards pulls back the curtain on the messy, ethically fraught reality beneath the sophisticated surface.

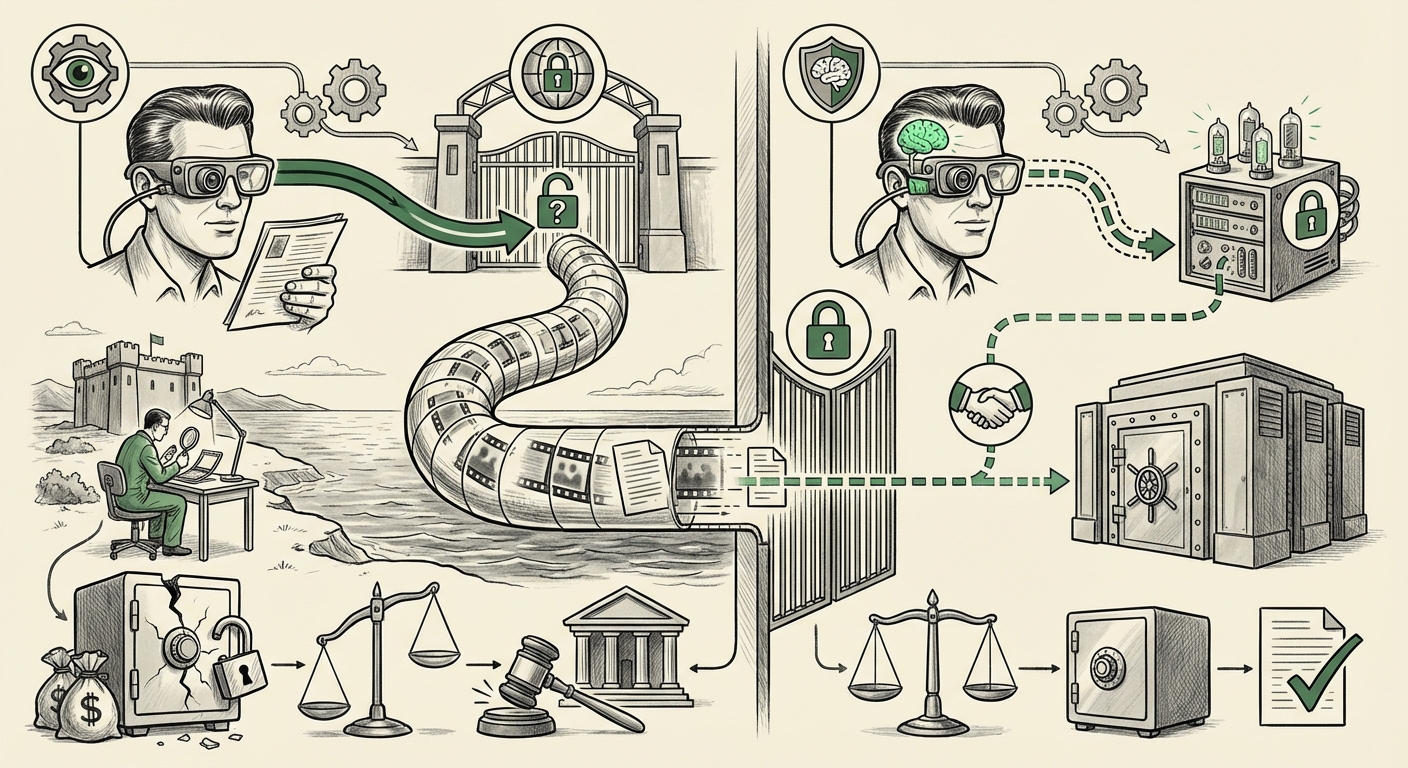

This incident is not merely a public relations failure; it is a flashpoint for three fundamental tensions defining the next decade of technology: the **Data-Labor Supply Chain**, the clash between **Global Innovation and Local Regulation (Data Sovereignty)**, and the technological imperative to train **Multimodal AI** without eroding privacy.

The Invisible Workforce: Data Annotation and the Global Supply Chain

To make AI systems—especially those designed to perceive the world through cameras and microphones (multimodal AI)—functional, they need massive amounts of labeled data. A computer vision model doesn't inherently know what a “face” is or what constitutes a “private moment.” Humans must review raw inputs, tag objects, transcribe conversations, and categorize content. This is the critical, often invisible, labor that fuels our AI ecosystem.

The Meta incident demonstrates a recurring pattern: highly developed nations create advanced hardware (smart glasses, advanced smartphones) that capture intimate data, but the labor required to clean and train the AI on that data is outsourced to regions like Kenya, India, or the Philippines, often under less rigorous labor or data protection standards. This is the reality of the AI supply chain, which relies heavily on external, lower-cost annotation services.

What Does This Mean for Labor? (Target Audience: Ethical AI Researchers)

As investigative work into these processes reveals (similar to past findings concerning content moderation and data labeling), annotators are routinely exposed to the most noxious, raw aspects of human digital life. When the data includes intimate videos and financial details, the psychological toll on the worker is immense, compounded by the fact that these contractors often operate without robust workplace protections or clear grievance mechanisms. The outsourcing model sacrifices worker well-being for faster model refinement.

This practice forces us to ask: If a company is training an AI to recognize nudity or financial documents, doesn't the review process itself require the highest level of data security and psychological support, regardless of the physical location of the reviewer?

The Regulatory Minefield: GDPR vs. Global Ambition

The most immediate legal threat looming over Meta, as indicated by the report, concerns regulations like the European Union’s General Data Protection Regulation (GDPR). GDPR is famously strict about personal data belonging to EU citizens. Even if the glasses wearers were not in Europe, if the data subjects were EU residents, or if the data was initially captured by an EU resident, strict rules apply.

Data Sovereignty and Cross-Border Transfers (Target Audience: Legal Analysts)

The core tension here is **data sovereignty**—the concept that data is subject to the laws of the nation where it is collected or where the subject resides. Sending raw, identifiable video footage to a third-party processor in a non-EU country (like Kenya) requires specific, stringent legal mechanisms to ensure "adequate protection." These mechanisms are often complicated by geopolitical realities, such as the uncertainty surrounding U.S. surveillance laws and their potential conflict with EU rights, famously highlighted by the Schrems II ruling.

When safeguards are minimal, regulators view the action as effectively stripping the data of its legal protections the moment it crosses the border. We are seeing a clear trend where major regulatory bodies are flexing their muscles against large technology firms that treat international data transfer as a mere logistical hurdle rather than a critical legal mandate. This foreshadows significant financial penalties and mandates for operational shutdowns if compliance isn't baked into the initial design.

Precedent Matters: Regulatory actions are not theoretical. Past enforcement actions by the Federal Trade Commission (FTC) in the U.S. and ongoing GDPR fines elsewhere have centered precisely on insufficient oversight of third-party vendors. This incident places Meta squarely in the crosshairs for failing in its duty to govern its data lifecycle comprehensively.

The Technological Imperative: Why Raw Data Remains King (For Now)

Why would a company risk regulatory fines and public backlash by transmitting footage of nude bodies and bank passwords across continents? The answer lies in the technological hunger for **high-fidelity, multimodal training data**.

The Need for Real-World Context (Target Audience: AI Developers)

Modern AI doesn't just analyze static photos; it understands context, depth, motion, and subtle acoustic cues. Training an AI to recognize that a person in a specific environment is *not* performing a specific action, or to correctly interpret sarcasm in spoken language, requires data that closely mirrors real-world sensory experience. Redacting or blurring this data often removes the very contextual nuance the model needs to learn effectively.

If Meta’s glasses are intended to evolve into proactive digital assistants, they must understand complex, messy reality. The temptation to use the easiest, quickest path—shipping raw data to human labelers who can process volume quickly—is enormous. However, this technological shortcut bypasses all ethical and legal guardrails.

Future Implications: Redesigning the AI Data Lifecycle

This episode serves as a severe warning: the current model of outsourcing sensitive data annotation is fundamentally unsustainable in a world increasingly governed by privacy legislation and heightened user awareness.

1. Mandatory Privacy-by-Design (PbD)

The future of AI development must shift from "fix privacy later" to "build privacy in from the start." This means investing heavily in **Privacy-Preserving Machine Learning (PPML)** techniques. Instead of sending raw data to Nairobi, companies must prioritize:

- Federated Learning: Training the model locally on the device (the glasses themselves) and only sending the *model updates* (mathematical weights) back to the central server, never the raw data.

- Differential Privacy: Introducing controlled "noise" into the dataset during training to obscure individual records while maintaining statistical accuracy for the model as a whole.

- Synthetic Data Generation: Creating complex, realistic, but entirely artificial datasets for initial training phases, reducing reliance on live, identifiable customer data.

While these methods add complexity and computational overhead today, the regulatory risk and reputational damage from incidents like this far outweigh the short-term cost savings of outsourcing raw data handling.

2. Data Sovereignty as a Design Constraint

Businesses operating globally must adopt a segmented data strategy. Data originating from GDPR-regulated jurisdictions must be processed, stored, and managed within those jurisdictions, or stringent, auditable transfer mechanisms must be in place. This requires building decentralized data infrastructure, which impacts business scalability but secures regulatory compliance.

3. Transparency in the Human Layer

For the necessary human review that cannot yet be automated, there must be radical transparency. Users should have the right to know:

- What *categories* of their data are being reviewed (e.g., video, audio, text).

- *Where* (geographically) and by *whom* (third-party vendor) the review is taking place.

- The data handling and security certifications required of the third-party vendor.

If the data is too sensitive to be handled under standard labor laws or without the user's explicit, informed consent, then the technology should not be deployed until a privacy-preserving alternative is ready.

Actionable Insights for Technology Leaders

For product managers, engineers, and executives driving AI development, the message from this incident is clear:

- Conduct Immediate Data Audits: Identify every data stream from high-sensing devices (glasses, advanced home assistants). Trace the data path: Where is it stored? Who can access it? Which country's laws apply?

- Elevate Vendor Risk Management: Third-party vendors are not just outsourced employees; they are legal extensions of your compliance obligations. Demand ISO certifications, conduct surprise audits focused specifically on privacy protocols for sensitive data, and include severe financial penalties for data breaches in contracts.

- Re-evaluate the Value Proposition vs. Risk: Does the marginal improvement in model accuracy gained by using raw, unredacted footage justify the potential multi-billion-dollar GDPR fine and the irreparable damage to consumer trust? Often, the answer, viewed through a long-term lens, is a resounding no.

The era of prioritizing raw data acquisition speed over fundamental privacy is ending. The Meta glasses controversy is a pivotal moment. It forces the industry to acknowledge that building truly intelligent systems requires not just computational power, but also genuine ethical foresight regarding the people who process the data, and the laws designed to protect the people whose lives are recorded.