The AI Pivot: Why Meta's Applied Engineering Division Signals the End of Pure Research Dominance

In the rapidly evolving landscape of artificial intelligence, organizational structure often telegraphs strategic intent more loudly than any press release. The recent internal announcement that Meta is establishing a dedicated Applied AI Engineering division is not just a reshuffling of personnel; it is a seismic indicator of where the center of gravity is shifting in Big Tech’s AI race. For years, the narrative has been dominated by the pursuit of ever-larger, more capable foundational models, often housed within pure research labs like Meta’s FAIR (Fundamental AI Research). Now, the focus is pivoting sharply toward the messy, complex, but ultimately revenue-generating world of deployment.

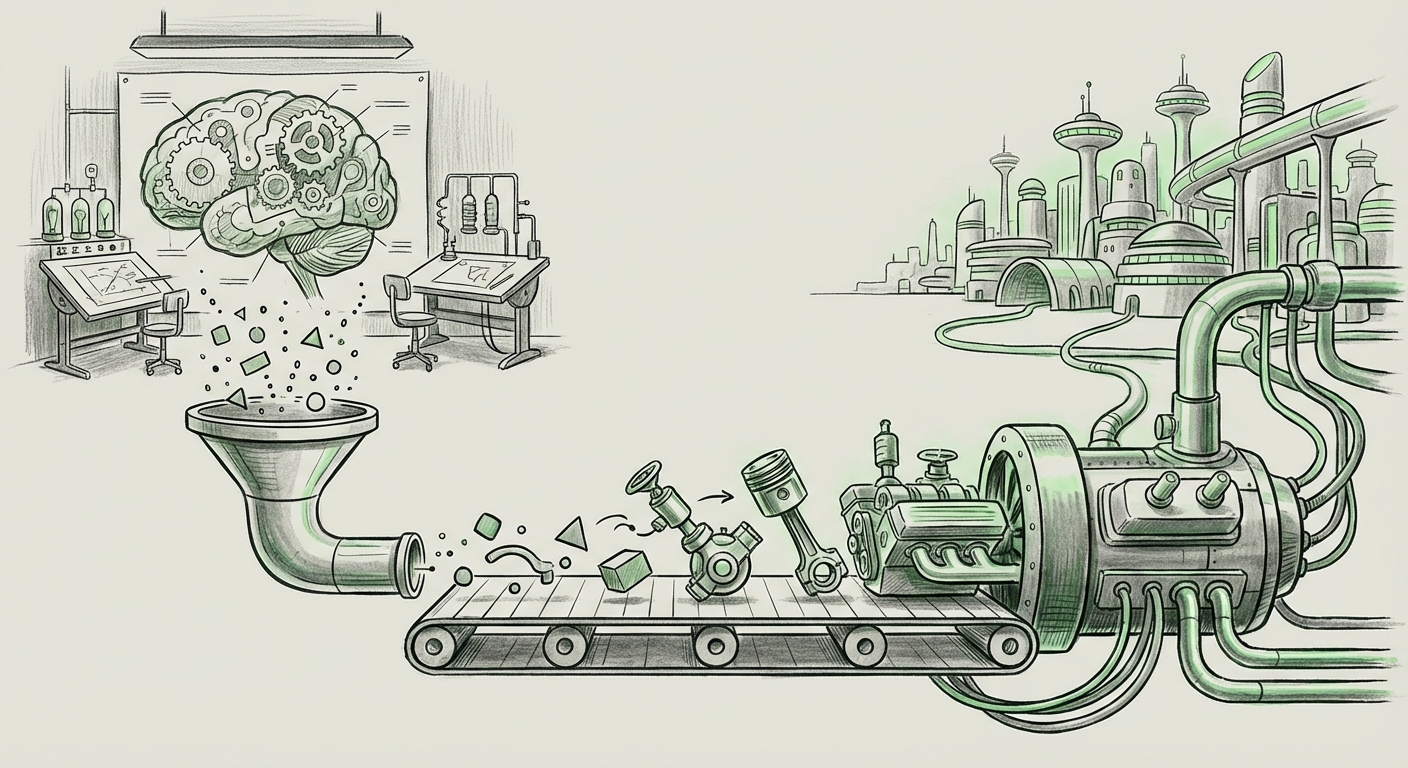

As an AI technology analyst, I view this move as the necessary, mature evolution of any AI-focused corporation. We are leaving the era where "building the biggest brain" was the primary goal. We are entering the age of the "AI Engineer"—the professional tasked with taking that brilliant brain and making it run reliably, affordably, and safely for billions of users.

From Lab Bench to Billions of Users: The Shift to Applied Focus

Meta’s prior structure often separated the two functions: researchers dreamed up innovations, and engineering teams then had to figure out how to turn those academic breakthroughs into real-world features. That handoff can be slow and fraught with friction. The new Applied AI Engineering division is designed to eliminate it.

For readers unfamiliar with corporate AI structures, imagine a massive library (the research arm) full of blueprints for incredible machines. The new Applied Engineering division is the factory floor manager whose job is to actually build those machines, keep the assembly line from breaking down when 100 million people try to use them at once, and figure out how to power them without bankrupting the company.

This strategic clarity is vital because Meta's portfolio (Facebook, Instagram, WhatsApp, and Reality Labs) operates at a scale few competitors match. A model that performs excellently in a controlled lab environment can buckle under a flood of simultaneous global queries or add unacceptable latency to a mobile feed refresh.

Corroborating the Trend: The Competitive Mirror

Meta appears to be reacting to, and learning from, industry-wide maturation, and there are parallels to major organizational shifts across the sector. Google’s 2023 decision to merge its DeepMind and Google Brain research groups into a single unit, Google DeepMind, points to a crucial trend: consolidation drives deployment speed.

When research entities operate too independently, work is duplicated and handoffs slow down. By creating unified structures, both Google and now Meta are aiming for faster iteration cycles, shifting the yardstick from academic publication frequency to feature rollout velocity. The consolidation suggests that both companies believe their core research foundations are mature enough that the priority is now maximizing the return on existing technological investments.

The Deployment Dilemma: Why Engineering is the New Frontier

"Applied Engineering" has become the keyword of 2024 because the industry has hit the efficiency wall. Today's models are astonishingly capable, but running them at the scale Meta requires (trillions of tokens processed daily) is computationally staggering and prohibitively expensive.
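To make the scale argument concrete, the back-of-envelope calculation below multiplies a daily token volume by a per-token price. Both inputs are placeholder assumptions (2 trillion tokens per day, $0.50 per million tokens), not figures reported by Meta, and the function names are hypothetical. The point is only that per-token costs that look trivial in isolation compound quickly at platform scale.

```python
# Back-of-envelope daily inference cost.
# All inputs are hypothetical placeholders, not Meta figures.

def daily_inference_cost(tokens_per_day: float, usd_per_million_tokens: float) -> float:
    """Return the daily serving cost in USD for a given token volume."""
    return tokens_per_day / 1_000_000 * usd_per_million_tokens


def annual_savings(tokens_per_day: float, usd_per_million_tokens: float,
                   cost_reduction: float) -> float:
    """Annual savings if optimization cuts per-token cost by `cost_reduction` (0 to 1)."""
    daily = daily_inference_cost(tokens_per_day, usd_per_million_tokens)
    return daily * cost_reduction * 365


if __name__ == "__main__":
    tokens = 2e12  # assumed: 2 trillion tokens per day
    price = 0.50   # assumed: $0.50 per million tokens
    print(f"Daily cost: ${daily_inference_cost(tokens, price):,.0f}")
    print(f"Annual savings from a 30% cut: ${annual_savings(tokens, price, 0.30):,.0f}")
```

With those placeholder inputs, serving costs about $1,000,000 per day, so a 30% efficiency gain would be worth roughly $109.5 million a year. Substituting real volume and pricing shows how quickly optimization work can justify its cost.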

The Triple Threat of Applied AI

The work cut out for this new division falls into three critical areas, mirroring the generative AI deployment challenges widely discussed across the industry:

- Inference Cost & Latency: How do you run Llama 3 on an Instagram story recommendation engine without the query taking three seconds and costing ten cents? The engineers must master techniques like quantization, distillation, and pruning to shrink massive models into lean, fast performers suitable for mobile and high-throughput servers.

- Safety and Alignment at Scale: Deploying AI means exposing it to the raw, unpredictable chaos of global social media. The Applied AI team is responsible for rigorous guardrails, automated moderation systems, and ensuring that personalized AI features (like advanced content ranking or AI companions) remain helpful and non-toxic across diverse cultures and languages.

- Infrastructure Optimization: This is about hardware utilization. It means optimizing model deployment across Meta’s custom silicon (such as its MTIA accelerators) and high-end GPUs to maximize FLOPS per watt. For a company that spends billions on infrastructure, even modest efficiency gains can translate into substantial operational savings.
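Quantization is the simplest of the compression techniques listed above to show in a few lines. The sketch below assumes symmetric, per-tensor int8 post-training quantization: each float weight is divided by a single scale factor and rounded to an integer in [-127, 127]. It is a generic textbook illustration, not Meta's production pipeline, and `quantize_int8` and `dequantize_int8` are hypothetical helper names.

```python
# Minimal sketch of symmetric, per-tensor int8 post-training quantization.
# Pure Python for clarity; real serving stacks use fused accelerator kernels.

def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map float weights to int8 codes in [-127, 127] plus one shared float scale."""
    max_abs = max(abs(w) for w in weights)
    # One scale for the whole tensor; guard against an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest code and clamp in case of float round-off at the edges.
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_int8(codes: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from int8 codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    w = [0.42, -1.37, 0.05, 0.91, -0.003]
    q, s = quantize_int8(w)
    w_hat = dequantize_int8(q, s)
    max_err = max(abs(a - b) for a, b in zip(w, w_hat))
    print("int8 codes:", q)
    print(f"scale={s:.5f}  max abs error={max_err:.5f} (bound: scale/2)")
```

Storing int8 codes instead of 32-bit floats cuts weight memory by 4x (2x relative to 16-bit weights), at the cost of a rounding error of at most half the scale per weight. Practical deployments usually refine this with per-channel scales, calibration data, or quantization-aware training.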

This focus validates the sentiment that "The real AI race isn't about building bigger models—it's about using them efficiently." Pure scale is hitting diminishing returns; focused application is the new profit center.

The Llama Bridge: Connecting Research to Reality

For Meta, the Applied AI Engineering division has one overwhelmingly important, immediate mandate: successfully integrating the Llama family of open-source models into consumer-facing products. Llama is Meta’s strategic differentiator, designed to democratize access while simultaneously ensuring Meta controls the deployment ecosystem.

The success of Meta's strategy for integrating Llama into consumer products hinges entirely on this new applied team. They must transition Llama from a powerful research artifact into:

- Personalized AI assistants within WhatsApp.

- Advanced moderation and content creation tools for Reels.

- The intelligence layer underpinning the Metaverse experience in Reality Labs.

This is more than just plugging in an API. It requires deep system-level integration, training models specifically on Meta’s proprietary interaction data, and ensuring the user experience feels instantaneous, not clunky or robotic. If the Applied team stumbles, Llama remains a powerful open-source tool, but Meta loses its competitive advantage in productizing that technology faster than rivals.

The Talent War: Fueling the Applied Engine

Building this division isn't just a structural choice; it’s a massive resource commitment. This shift confirms the explosive demand for a specific type of AI professional: the Applied AI Engineer, who possesses fluency in both advanced ML theory and robust, production-grade software engineering principles.

Reports tracking AI engineering hiring trends and 2024 salary data indicate that these hybrid roles command premium compensation. Meta is signaling to the market that it is willing to pay top dollar for talent capable of moving models from the research notebook directly onto the production stack. This intensifies the talent war, shifting it away from hiring only PhDs in obscure mathematical fields toward hiring expert distributed systems engineers who *also* understand transformer architecture.

Future Implications: What This Means for AI Adoption

Meta’s restructuring provides a clear roadmap for where the entire technology sector is heading. This is the path from "AI innovation" to "AI ubiquity."

For Businesses and Developers

The primary takeaway for enterprises leveraging AI is that the era of waiting for perfect, massive models is over. The focus must now shift to efficiency and integration. If you are a business looking to adopt generative AI, your focus should mimic Meta’s:

- Prioritize Inference Budget: Understand the cost of every AI query. Optimization engineers will be more valuable than pure model trainers in the short term.

- Embrace Hybrid Architectures: Don't try to run the world's largest model for every task. Use smaller, specialized models (like Meta's Llama derivatives or fine-tuned versions) for specific tasks where cost and speed matter most.

- Build the Application Layer: The real competitive advantage lies not in the base model, but in the unique, proprietary application layer built on top of it—the user experience, the data pipelines, and the safety tooling.
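A hybrid architecture can be as simple as a router that sends each request to the cheapest model capable of handling it. The sketch below assumes you have already scored each model tier and each task type with your own offline evaluations; the tier names, prices, capability scores, and the `route` and `query_cost` helpers are hypothetical placeholders, not real Llama variants or published pricing.

```python
# Minimal sketch of a cost-aware model router for a hybrid architecture.
# Tier names, prices, and capability scores are hypothetical placeholders.
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    usd_per_million_tokens: float
    capability: int  # assumed 1-10 score from your own internal evals


TIERS = [
    ModelTier("small-finetuned", 0.10, 4),
    ModelTier("medium-general", 0.60, 7),
    ModelTier("large-frontier", 5.00, 10),
]

# Assumed minimum capability each task type needs, e.g. from offline evaluation.
TASK_REQUIREMENTS = {"classify": 3, "summarize": 6, "complex_reasoning": 9}


def route(task: str, tiers: list[ModelTier] = TIERS) -> ModelTier:
    """Pick the cheapest tier whose capability meets the task's requirement."""
    required = TASK_REQUIREMENTS[task]
    eligible = [t for t in tiers if t.capability >= required]
    if not eligible:
        raise ValueError(f"no model tier can handle task {task!r}")
    return min(eligible, key=lambda t: t.usd_per_million_tokens)


def query_cost(tier: ModelTier, tokens: int) -> float:
    """Cost in USD of a single query of `tokens` tokens on the given tier."""
    return tokens / 1_000_000 * tier.usd_per_million_tokens


if __name__ == "__main__":
    for task in TASK_REQUIREMENTS:
        tier = route(task)
        cost = query_cost(tier, 1_500)
        print(f"{task:>18} -> {tier.name:<16} ${cost:.6f} per 1.5k-token query")
```

Routing on a static task label is the simplest possible policy. A production router might instead use a lightweight classifier, or escalate to a larger model only when the smaller one reports low confidence; this sketch does not attempt either.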

For Society

On a societal level, the move towards highly integrated, applied AI brings safety considerations to the forefront with increased urgency. When an AI feature touches billions of people daily—governing what they see, who they communicate with, and how information flows—the stakes for failure are astronomical.

The Applied AI Engineering division must therefore serve as the primary locus for safety alignment. If Meta is successful, we will see a new standard for real-time AI content moderation and personalized experience governance. If they fail, the amplification of bias, misinformation, or harmful content across their massive platforms will be immediate and global.

Conclusion: The Engineer Inherits the Throne

Meta's organizational blueprint for Applied AI Engineering is a clear declaration: the age of the AI generalist is giving way to the age of the specialized, production-focused AI expert. Research will continue, but the engine of value creation has definitively moved from the discovery phase to the deployment phase.

The foundational models are the engine block; the Applied AI Engineering division is the transmission, the fuel injection system, and the driver—the component that actually makes the machine move forward in the real world. Watching this division function will provide critical insight into the timeline for truly ubiquitous, integrated artificial intelligence across all consumer technology.