Meta's Applied AI Division: The Hard Pivot from Research Hype to Real-World AI Engineering Power

The artificial intelligence landscape is constantly shifting, often moving faster than public understanding. While headlines tend to celebrate the raw power of new large language models (LLMs)—the creativity, the reasoning leaps, and the sheer scale of parameters—the true battleground for tech giants is no longer discovery, but deployment. This is why Meta's reported creation of a new, dedicated Applied AI Engineering division is not just internal housekeeping; it is a landmark signal about the next phase of the AI race.

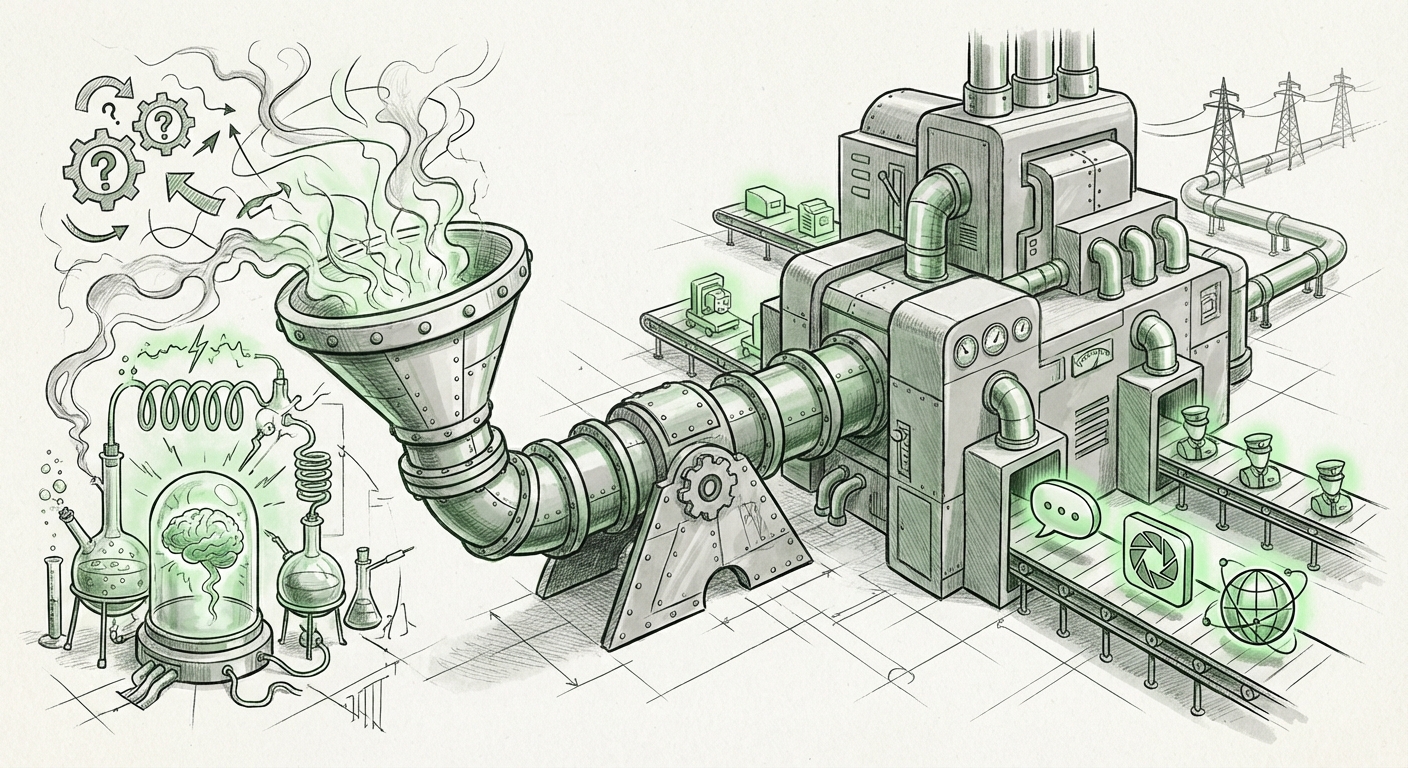

For years, Meta (like Google’s DeepMind and OpenAI) prioritized foundational research. They built the engines. Now, it appears the mandate has changed: the focus is aggressively shifting to building the car, ensuring it runs smoothly on every road, and making sure it doesn't crash while carrying millions of passengers. This move codifies the transition from the "What can AI do?" era to the "How do we get AI to do this reliably, cheaply, and everywhere?" era.

The Maturity Curve: Why Application Trumps Pure Research Now

AI development typically follows a familiar pattern: breakthrough research leads to exciting demos, followed by a humbling realization about the difficulty of scaling those demos into profitable, stable products. Meta, with its billions of users across Facebook, Instagram, WhatsApp, and Reality Labs, knows this better than anyone. They are sitting on one of the world's largest concentrations of user data and interaction points, making the need for robust application engineering paramount.

The initial burst of generative AI excitement relied on relatively small, controlled experiments. Scaling these experiments—serving personalized AI features to billions daily—introduces monumental challenges in latency, cost management, and security. A dedicated Applied AI Engineering division signals that Meta recognizes these hurdles require specialized teams, separate from the core research labs, whose Key Performance Indicators (KPIs) are tied directly to product performance and operational efficiency.
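To make the cost side of that scaling problem concrete, here is a back-of-envelope sketch. Every number in it (user counts, queries per user, tokens per query, price per million tokens) is a hypothetical placeholder chosen for illustration, not a figure reported by Meta; the function name is likewise invented for this example.

```python
def daily_inference_cost(daily_users, queries_per_user, tokens_per_query,
                         usd_per_million_tokens):
    """Rough daily serving cost in USD under a flat per-token price (an assumption)."""
    total_tokens = daily_users * queries_per_user * tokens_per_query
    return total_tokens / 1_000_000 * usd_per_million_tokens


# Hypothetical inputs: 5 queries per user per day, 500 tokens per query,
# and $0.50 per million tokens processed.
pilot = daily_inference_cost(10_000, 5, 500, 0.50)
at_scale = daily_inference_cost(1_000_000_000, 5, 500, 0.50)

print(f"Pilot with 10,000 users: ${pilot:,.2f} per day")
print(f"Rollout to 1 billion users: ${at_scale:,.2f} per day")
```

Under these assumed inputs, the same feature that is nearly free in a pilot becomes a seven-figure daily line item at platform scale, which is why cost per query turns into a product KPI rather than a research footnote.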

Corroborating the Trend: The Industry Follows the Money (and the Infrastructure)

Meta’s organizational shift is not happening in a vacuum. To gauge its significance, we must look at competitors. If other major players have made similar structural changes, that suggests a broad industry consensus: the center of gravity is moving from research to engineering.

A look at how Microsoft and Google organize their AI work reveals clear parallels. Both companies have increasingly emphasized integrating their large AI teams (such as Google’s Gemini efforts and Microsoft’s Copilot integration structure) directly into product groups. This mirrors Meta’s goal: moving AI out of the laboratory and onto the assembly line.

For technology strategists, this is crucial. It implies that the primary investment focus is shifting from simply acquiring the most parameters to building the best internal tooling to support those parameters. It’s a sign that the market for foundation models is maturing, and competitive advantage is found in the efficiency of the *application layer*.

The Unseen Battle: Infrastructure and the Cost of Inference

What does "Applied Engineering" actually mean in the context of massive LLMs? It means tackling the "unsexy" but ultimately decisive problems of AI deployment, the scaling challenges that appear once a generative model leaves the lab.

Building a state-of-the-art model like Llama 3 is one feat; running it millions of times per second at sub-second latency across diverse devices (from a high-powered server farm to a smartphone) is an entirely different engineering discipline. Key challenges include:

- Inference Cost Optimization: Every query costs real money in computing power. If a new Instagram AI feature costs too much per user, the feature dies, no matter how clever it is. Applied AI focuses on quantization, pruning, and efficient hardware utilization to slash these running costs.

- Latency Reduction: Users won't wait three seconds for an AI-generated caption. Engineers in this new division will work on optimizing model serving frameworks to ensure instantaneous feedback.

- Data Pipelining: Ensuring the data feeding the models remains high-quality, unbiased, and continuously flows from user interactions back into the retraining loops safely and efficiently.
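Of the techniques named above, quantization is the easiest to illustrate. The following is a minimal sketch of symmetric, per-tensor int8 quantization in plain Python. It shows the general idea only and is not a description of how Meta or any particular serving framework implements it; the function names and sample weights are made up for this example.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One scale for the whole tensor; fall back to 1.0 if all weights are zero.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.42, -1.27, 0.03, 0.9]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("int8 values:", q)
print("scale:", scale)
print("largest round-trip error:", max_error)
```

Each weight is stored in one byte instead of the four bytes of a 32-bit float, at the price of a rounding error of at most half the scale per weight. Production systems typically use finer-grained schemes (per-channel or per-group scales, calibration data), but the trade between memory and precision is the same.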

For AI engineers and CTOs, this organizational decision validates their focus. The future value isn't just in designing the next giant model, but in mastering the software and hardware stack that delivers that model reliably. If Meta’s past experience with scaling social feeds taught them anything, it’s that software engineering excellence determines platform dominance.

Practical Impact: What This Means for Instagram and WhatsApp

The creation of an applied division provides a direct roadmap for product integration. Meta's public announcements about bringing Meta AI into its apps give a sense of what to expect.

The mandate of this new group is likely the rapid, cross-platform deployment of Meta’s openly released Llama family of models. Consider the implications:

- Instagram/Facebook: Expect highly sophisticated, real-time creation tools. This might include AI editors that understand complex prompts for video clips, highly contextualized ad targeting that requires deep, on-the-fly reasoning, or much more proactive content moderation based on nuanced understanding rather than simple keyword flagging.

- WhatsApp: This is a prime area for applied AI focused on privacy and immediacy. We might see more advanced features like AI assistants capable of summarizing long group chats or drafting complex replies based on context, all while operating under strict on-device or end-to-end encrypted server constraints—a significant engineering hurdle.

- Reality Labs (The Metaverse): Applied AI is essential here for creating believable digital avatars and dynamic, responsive non-player characters (NPCs). The AI must be fast enough to interact naturally in real-time 3D spaces, a requirement far more stringent than text generation alone.

This structure is meant to ensure that the theoretical work done by Meta's Fundamental AI Research team (FAIR) gets translated into features users actually see and pay attention to, rather than languishing as interesting but unusable research papers.

The Talent War: Signals in the Hiring Market

Organizational shifts of this magnitude are usually reflected in the talent market. Executive hiring for roles such as "Head of Applied AI Engineering" suggests that titles emphasizing *application*, *production*, and *scale* are commanding premium compensation.

This signals a clear hierarchy emerging within Big Tech: Researchers design the breakthrough concepts; Applied Engineers figure out how to manufacture them cheaply and reliably. The latter group is now positioned as the critical bottleneck breaker.

For individuals looking to advance their careers in AI, this is vital context. While theoretical knowledge remains important, demonstrating mastery over MLOps pipelines, cloud-native deployment strategies, and infrastructure optimization is now arguably the most direct path to high-impact roles. Meta is essentially formalizing a need for AI Generalists who bridge the gap between pure math and market delivery.

Actionable Insights for Businesses and Society

Meta's pivot holds lessons for every organization currently integrating AI:

For Businesses: Follow the Application Leader

If you are a business leader struggling to move your AI proofs-of-concept into production, understand that you likely need to create an internal structure similar to Meta's. Do not expect your research scientists to become infrastructure experts overnight. You must formally bridge the gap between your "Innovation Lab" and your "Product Delivery Team." Success in the next wave of AI is about operationalizing—moving from 10 amazing demos to 10 million reliable, cost-effective features.

For Society: The Inevitability of Ubiquitous AI

When a company commits significant organizational structure and engineering resources to *application*, it signals long-term commitment. This isn't a fad; it's becoming embedded infrastructure. This means AI features will become so seamless within platforms like Instagram and WhatsApp that users will stop noticing them as "AI features" and start seeing them simply as "how the app works." This deep integration raises societal questions around algorithmic transparency, bias baked into deployment pipelines, and the sheer scale of personalized influence wielded by the platform.

Conclusion: Engineering the AI Future, One Deployment at a Time

Meta’s move to establish a dedicated Applied AI Engineering division is a sophisticated declaration of intent. It’s a recognition that the raw intelligence of the model is only half the battle; the other half is the industrial-grade engineering required to deploy that intelligence responsibly and profitably across billions of daily interactions. This organizational architecture mirrors the maturation of the entire AI sector—moving out of the lab and onto the factory floor.

The winners in the next five years of AI won't just be the teams that discover the next paradigm-shifting architecture, but the teams—like this new Meta division—that master the art and science of making that architecture ubiquitous, affordable, and unbreakable. The age of pure AI research is giving way to the demanding, but ultimately more impactful, age of AI Engineering.