The Great Pivot: Why Meta's Applied AI Engineering Division Signals the End of AI Research as We Knew It

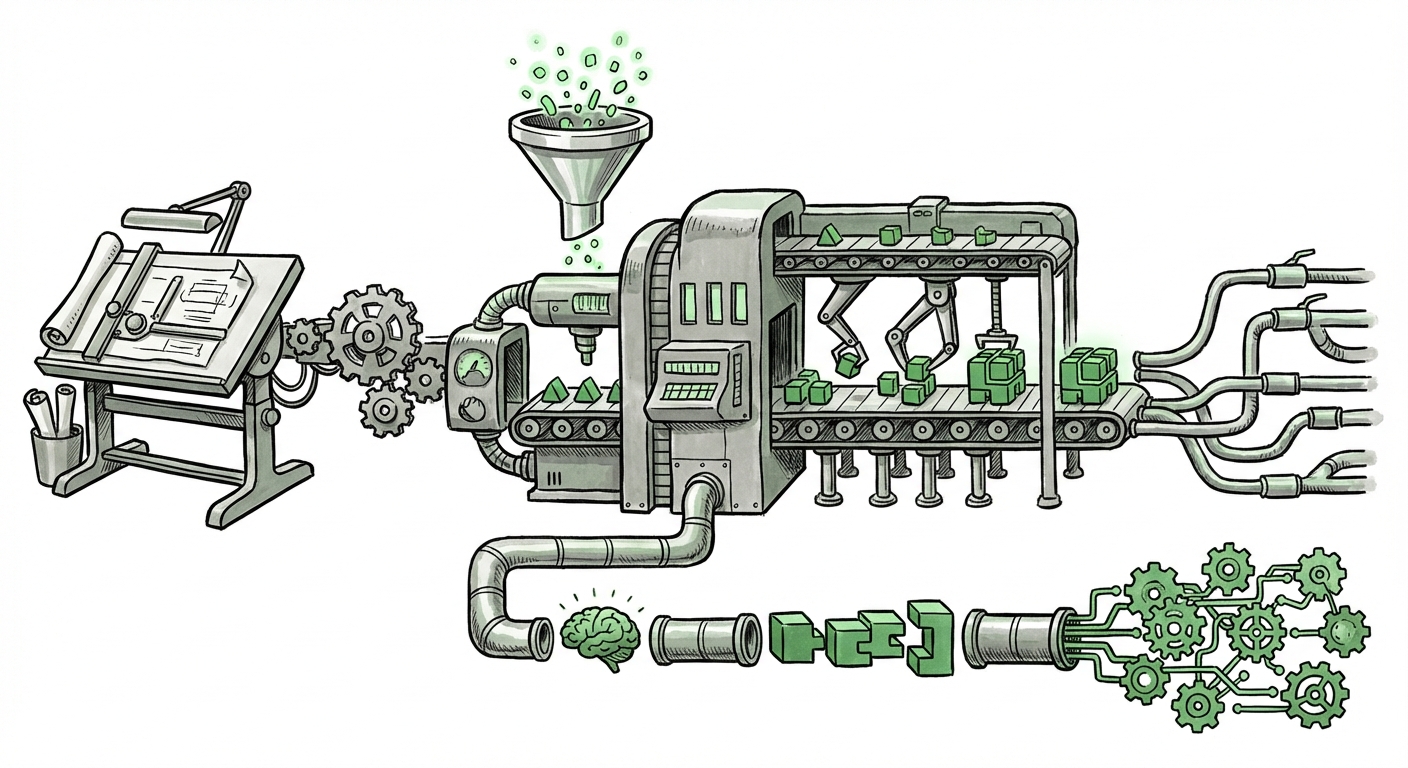

The landscape of Artificial Intelligence development is undergoing a profound structural transformation. For years, the race in Big Tech was defined by the breakthrough paper—the record-breaking model announced by a pure research lab. But the ground is shifting. Meta’s recent announcement of a new Applied AI Engineering organization is not just corporate restructuring; it is a loud declaration that the era of pure, siloed AI research is yielding to an age of relentless, scalable deployment.

As technology analysts, we must look beyond the internal memo. This move signals a critical inflection point: AI is no longer just something we study; it is something we must integrate into the fabric of society at immense speed and scale. This requires a different kind of organization, one dedicated solely to conquering the daunting engineering chasm between a cool lab demo and a functional feature used by billions.

The Chasm Between Research and Reality

To understand the significance of Meta’s new division, we must first appreciate the difference between a Research Scientist and an Applied Engineer in the current AI climate. Imagine building a sports car:

- The Researcher (e.g., Meta FAIR): Focuses on inventing a revolutionary engine—new physics, new materials, maximizing raw power (the foundational Large Language Model, or LLM).

- The Applied Engineer: Focuses on making that engine fit into a safe chassis, ensuring it starts reliably every morning, uses fuel efficiently, and can handle stop-and-go traffic without overheating (productionization, latency, cost management).

Historically, these teams often operated separately. However, the sheer size and capability of modern models (like Llama) demand a unified approach. Deploying a state-of-the-art model to millions or billions of users is an engineering challenge in its own right, one that can rival the difficulty of training the model in the first place. Challenges include:

- Inference Costs: Running massive models constantly burns huge amounts of computing power (and money). Applied AI must optimize these models for efficiency.

- Latency: Users expect instant responses on Instagram or WhatsApp. To the end user, a slow AI feature is effectively a broken one.

- Safety and Guardrails: Applied teams are responsible for embedding necessary safety protocols directly into the production pipeline, ensuring models remain aligned with company policies.
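
The first two challenges above lend themselves to simple arithmetic. The following is a minimal back-of-envelope sketch, not a description of how Meta measures anything: the `ServingAssumptions` fields, the helper names, and every number in the example are hypothetical placeholders chosen only to show the shape of the calculation.

```python
import math
from dataclasses import dataclass


@dataclass
class ServingAssumptions:
    """Hypothetical inputs; none of these figures come from Meta."""
    gpu_hourly_cost_usd: float   # assumed price of one accelerator-hour
    tokens_per_second: float     # assumed sustained throughput per accelerator
    tokens_per_request: float    # assumed average generated tokens per request


def cost_per_million_requests(a: ServingAssumptions) -> float:
    """Estimate serving cost in USD for one million requests."""
    seconds_per_request = a.tokens_per_request / a.tokens_per_second
    cost_per_second = a.gpu_hourly_cost_usd / 3600.0
    return seconds_per_request * cost_per_second * 1_000_000


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty list of latency samples."""
    if not samples:
        raise ValueError("samples must be non-empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100.0 * len(ordered)))
    return ordered[rank - 1]


# Illustrative numbers only.
baseline = ServingAssumptions(2.0, 1000.0, 200.0)
optimized = ServingAssumptions(2.0, 2000.0, 200.0)  # assume throughput doubles
latencies_ms = [120, 95, 300, 110, 105, 98, 101, 250, 99, 102]

print(round(cost_per_million_requests(baseline), 2))
print(round(cost_per_million_requests(optimized), 2))
print(percentile(latencies_ms, 50), percentile(latencies_ms, 95))
```

Under these assumptions, doubling throughput halves the cost per request, and the gap between the median and the 95th percentile shows why tail latency, not the average, tends to decide whether a feature feels instant.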

Meta’s move recognizes that its foundational models are now mature enough that the bottleneck to gaining market share is no longer model performance, but deployment velocity.

Corroborating Industry Trends: The Mirror of Google’s Realignment

Meta is not operating in a vacuum. This structural change echoes significant prior moves across the tech elite, most notably at Google. When companies attempt to govern both foundational breakthroughs and massive product integration, internal friction often arises. The history of Google’s AI groups, and in particular the 2023 merger of Google Brain and DeepMind into Google DeepMind, offers a clear parallel.

Google faced a similar dichotomy. The research excellence of Google Brain needed to consistently feed innovations into the real-world applications managed by other product teams. Eventually, Google consolidated these efforts, aiming to create a clearer pipeline from fundamental discovery to tangible user features. Meta appears to be proactively establishing this pipeline before internal organizational friction slows its progress in the current generative AI sprint.

This indicates a maturation cycle for all major AI labs: the initial phase is discovery; the subsequent phase is integration.

Competitive Positioning: The Microsoft Shadow

No analysis of Meta’s AI strategy is complete without contrasting it with its primary competitor in the Generative AI race: Microsoft. Setting the two strategies side by side reveals Meta’s intent.

Microsoft, through its deep partnership with OpenAI, has aggressively focused on the enterprise application layer—embedding Copilots across Office, Azure, and developer tools. Their strength lies in providing high-value, subscription-based B2B solutions.

Meta’s core business, however, remains the consumer social graph: Instagram, Facebook, and WhatsApp. Therefore, Meta’s Applied AI Engineering division is fundamentally geared toward **consumer-facing applications**. Its goal is to make AI indispensable within the daily social interactions of billions, whether through smarter content feeds, novel creation tools, or next-generation assistants embedded directly into messaging.

While Microsoft sells productivity improvements, Meta is betting that the next trillion-dollar interaction point will be in synthetic media generation and personalized social experiences, requiring an applied team laser-focused on low-latency, high-volume consumer deployment.

The Future of AI: From Lab Notebooks to Production Pipelines

The rise of dedicated Applied AI Engineering teams solidifies the professionalization and industrialization of artificial intelligence. This organizational shift points to three features of the new maturity curve for AI adoption:

1. Engineering Dominates the Roadmap

For the next 18 to 24 months, the most significant competitive advantages will not come from discovering a fundamentally new neural network architecture, but from who can deploy the existing best architectures (like Transformers) most cheaply, reliably, and quickly.

This means hiring skilled MLOps (Machine Learning Operations) engineers, distributed systems experts, and performance tuners will become at least as critical as hiring PhD researchers focused solely on abstract theory.

2. Democratization Through Optimization

When AI models are optimized for efficiency, they become accessible to a wider array of businesses. If Meta can drastically reduce the inference cost of Llama 3 derivatives through its applied engineering efforts, it lowers the barrier for smaller developers wishing to build applications on top of that technology. Applied AI, therefore, becomes the key driver of wider AI democratization.
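
One concrete reason optimization lowers the barrier is memory. As a rough sketch, the memory needed just to hold a model's weights is the parameter count multiplied by the bytes used per parameter. The 8-billion-parameter figure below is an illustrative size, and the function name is hypothetical; the estimate deliberately ignores activations, the key-value cache, and the small overhead of quantization scales.

```python
# Bytes needed to store one parameter at each numeric precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Memory for weights only, in gigabytes (1 GB = 1e9 bytes)."""
    if precision not in BYTES_PER_PARAM:
        raise ValueError(f"unknown precision: {precision}")
    return num_params * BYTES_PER_PARAM[precision] / 1e9


# Illustrative model size; not a claim about any specific release.
params = 8e9
for precision in ("fp32", "fp16", "int8", "int4"):
    print(precision, weight_memory_gb(params, precision), "GB")
```

On this estimate, moving from 16-bit to 4-bit weights cuts the weight footprint by a factor of four, which is roughly the difference between needing a data-center accelerator and fitting on a single consumer GPU.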

3. The Blurring Lines of Responsibility

In the past, researchers handed off models to engineering teams. Now, engineers must be deeply embedded in the research feedback loop. An applied engineer who discovers during deployment that a model consistently fails on a specific regional dialect needs to communicate that finding directly back to the research scientists. This forces greater cross-functional collaboration, making the entire development lifecycle faster and more responsive to real-world feedback.

Actionable Insights for Businesses and Society

What does this strategic pivot by Meta mean for everyone else?

For Technology Leaders and CTOs:

Actionable Insight: Stop viewing AI as an R&D luxury; treat it as core infrastructure. If your organization is attempting to integrate LLMs or generative AI into customer-facing products, you must staff or partner with teams specialized in deployment, not just model creation. Evaluate your current engineering teams: Do they understand quantization, model serving frameworks, and continuous integration/continuous deployment (CI/CD) pipelines for machine learning models?
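
For readers unfamiliar with the first item on that checklist, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It is a teaching illustration under simplifying assumptions (one scale for the whole list, no calibration data, no outlier handling), not the scheme used by Meta or by any particular serving framework, and the function names are hypothetical.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Guard against an all-zero (or empty) input, where any scale works.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_int8(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


# Illustrative weights only.
weights = [0.5, -1.27, 0.0, 1.0]
quantized, scale = quantize_int8(weights)
restored = dequantize_int8(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(quantized)
print(max_error <= scale / 2 + 1e-12)
```

Each weight is stored in one byte instead of four, and the reconstruction error per weight is bounded by half the scale. A team that can reason about this trade-off between size and precision is better placed to judge when a quantized model is acceptable for a given product.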

For Investors and Strategists:

Actionable Insight: Look for deployment velocity, not just announcements. The next wave of market leaders will be those who move quickly from proof-of-concept to market presence. Pay attention to companies demonstrating robust, low-latency inference capabilities, as these are the companies solving the hardest problems in modern AI.

For Society and Policy Makers:

Actionable Insight: The focus on "Applied" AI amplifies governance concerns. When research is siloed, governance debates focus on theoretical risks. When AI is deployed instantly across consumer platforms by dedicated applied teams, the risk of real-world harm, such as misinformation or algorithmic bias replicated instantly at scale, increases dramatically. Regulatory attention must now pivot from the creation of foundation models to the engineering practices used to govern their deployment.

Conclusion: The Age of the AI Implementer

Meta’s creation of an Applied AI Engineering division is a definitive marker in the AI timeline. It confirms that the research breakthrough phase of the current generative AI cycle is largely complete. We have the engines; now we need the factories and the roads.

The future of AI supremacy will belong not just to those who dream up the next revolutionary algorithm, but to those who possess the engineering prowess to embed that revolution seamlessly, reliably, and profitably into the daily lives of the world’s users. This organizational shift is the most tangible sign yet that the AI industry has transitioned from the theoretical whiteboard to the relentless, complex demands of the assembly line.