The Great Platform War: Why OpenAI Building a GitHub Competitor Signals the Future of AI Development

Modern software development rests on collaboration platforms like GitHub. It’s where code lives, where teams work together, and where version control is king. So when reports emerge that OpenAI, a leader in generative AI, is developing its own alternative, the implications reach far beyond ordinary product rivalry. This isn’t just about writing code faster; it’s about building a new ecosystem in which AI doesn’t just assist development but *owns* the environment.

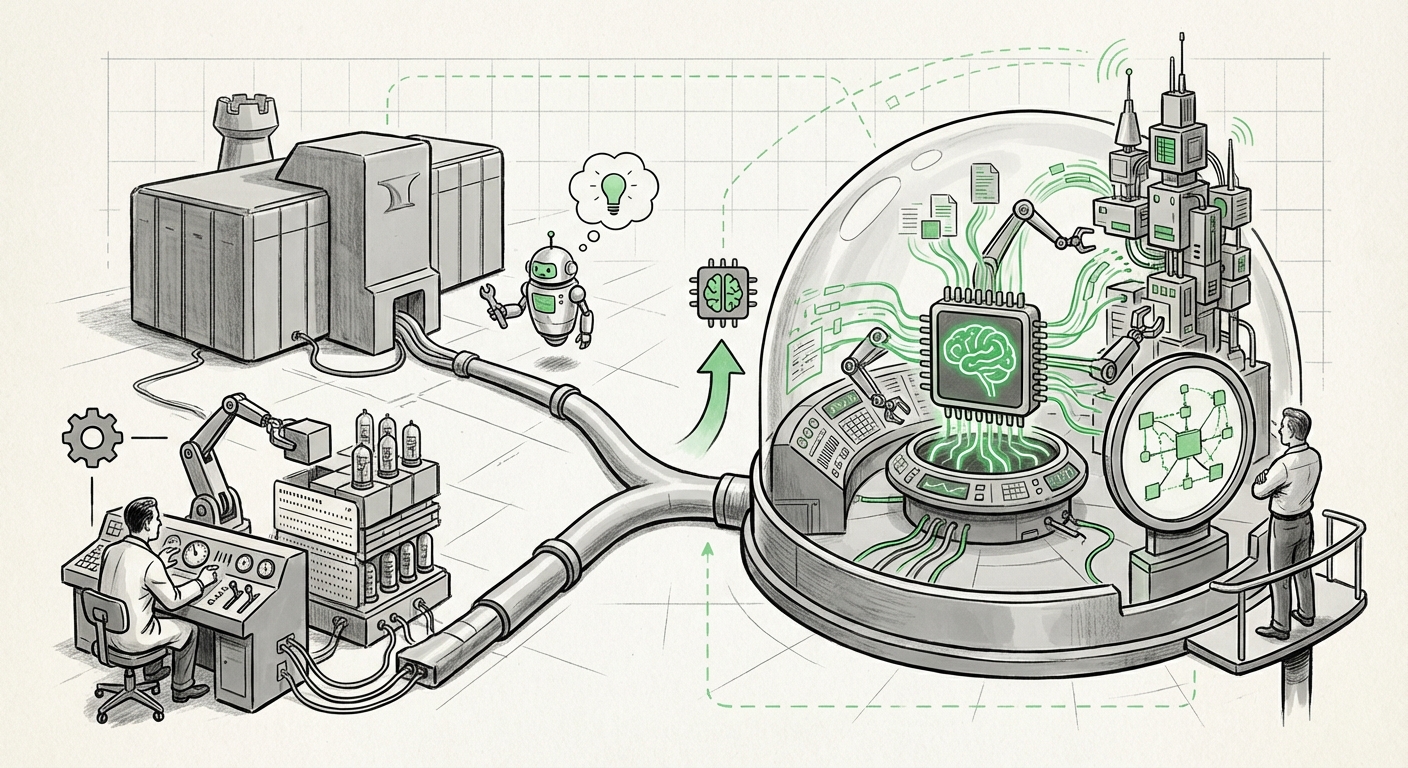

If accurate, the move suggests a strategic pivot: away from supplying a powerful tool that plugs into existing infrastructure (OpenAI’s models have powered GitHub Copilot inside Microsoft’s ecosystem) and toward a vertically integrated platform that controls the entire software development lifecycle (SDLC). For those watching the race between tech giants, this signals a deepening of competitive fronts and a radical shift in how we imagine software being built.

The Evolution from Assistant to Agent: AI Takes the Driver's Seat

For the last few years, the conversation around AI in coding focused on productivity boosters—tools that suggested the next line of code or helped write boilerplate functions. GitHub Copilot exemplified this perfectly: a valuable add-on. However, the landscape is rapidly maturing.

The market is now buzzing about autonomous AI agents. These are not simple autocomplete tools; they are systems designed to take high-level instructions—like "Build a responsive user login page using React and connect it to this specified API"—and execute the entire task, from initial commit to debugging. This functional leap is crucial context for OpenAI’s reported ambitions.

If OpenAI is building a GitHub alternative, it suggests they see the limitation in being confined to third-party platforms. They are likely designing an environment perfectly tailored for their most advanced models to manage complex codebases end-to-end. This is about moving from AI providing suggestions to AI actively managing infrastructure.

Commentary on the evolving landscape points the same way. The battleground is shifting from who has the best code suggestion model to who controls the workflow that uses that model most effectively. As analyses tracking the **AI coding assistants market** note, the next frontier is integration: making the AI feel less like a helper and more like a co-developer embedded within the repository itself. If external platforms like GitHub impose bottlenecks or limit data flow, OpenAI has a strong incentive to build its own optimized, AI-native infrastructure.

Actionable Insight for Developers: Prepare for Agency

Developers need to start experimenting not just with code generation, but with platforms that promise execution and deployment capabilities driven by AI. The skill of the future will be prompting the agent and verifying its output, rather than writing every line manually.
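
The "verify its output" half of that skill can be made concrete. The following is a minimal sketch, not a description of any real agent product: it assumes the agent hands back a plain Python function, and the names `Check`, `verify_candidate`, and `agent_slugify` are hypothetical, invented here for illustration. The idea is simply that agent output is accepted only after it passes checks a human wrote.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Check:
    """One human-written verification case: input arguments and the expected result."""
    args: tuple
    expected: object


def verify_candidate(candidate: Callable, checks: List[Check]) -> Tuple[bool, List[str]]:
    """Run every check against an agent-produced function and collect failures."""
    failures = []
    for check in checks:
        try:
            actual = candidate(*check.args)
        except Exception as exc:  # a crash counts as a failed check
            failures.append(f"{check.args}: raised {type(exc).__name__}")
            continue
        if actual != check.expected:
            failures.append(f"{check.args}: expected {check.expected!r}, got {actual!r}")
    # Accept only when no check failed.
    return (not failures, failures)


# Hypothetical agent output: a slug helper that mishandles repeated spaces.
def agent_slugify(title: str) -> str:
    return title.strip().lower().replace(" ", "-")


checks = [
    Check(args=("Hello World",), expected="hello-world"),
    Check(args=("  Trim Me  ",), expected="trim-me"),
    Check(args=("Two  Spaces",), expected="two-spaces"),
]

accepted, failures = verify_candidate(agent_slugify, checks)
if not accepted:
    # In a real workflow these failures would go back to the agent as the next prompt.
    for failure in failures:
        print("REJECTED:", failure)
```

Here the candidate is rejected because the double-space case fails. In practice the checks would be a project's existing test suite, and untrusted agent code would run in a sandbox rather than in-process.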

The Microsoft Quandary: Navigating Partnership and Competition

This potential move creates the most fascinating strategic wrinkle: OpenAI’s primary financial backer and cloud partner is Microsoft, the very owner of GitHub. While Microsoft has far-reaching agreements to use OpenAI’s models, a rival code host would pose a direct commercial challenge to one of Microsoft’s most valuable software franchises.

We must examine this through the lens of corporate strategy. Is OpenAI asserting its need for independence? Or is this a highly coordinated, though perhaps publicly opaque, strategy where Microsoft gains first access to the AI infrastructure layer while still profiting from Azure cloud usage that underpins it?

Analyses of **OpenAI’s governance and strategic independence** often highlight the company’s mission-driven nature, which sometimes conflicts with pure commercial strategy. Building a core piece of infrastructure like a code host would give OpenAI control over data standards, model deployment speeds, and, crucially, the developer experience: things that can be diluted when operating entirely within Microsoft’s broader ecosystem.

For Microsoft, this is a delicate balancing act. They have invested billions to secure an early lead in foundational models. Allowing their partner to build a superior developer platform—potentially undercutting GitHub’s value proposition—is a risk. However, if they block it, they risk alienating OpenAI and falling behind in the AI infrastructure race themselves.

Implications for Business Strategists: Understanding the Layering of the Stack

Businesses relying on the current tech stack must monitor this tension closely. If OpenAI builds a superior, AI-first code hosting platform, it forces a migration decision. Do you stay integrated with Microsoft's familiar tools, or migrate your IP to the environment that promises higher AI efficiency?

This signals that the platform layer—where code management occurs—is now seen as the next battleground for AI dominance, just as the cloud layer (AWS vs. Azure vs. GCP) was a decade ago. Control over the software supply chain is now synonymous with control over AI adoption velocity.

The Open Source Dilemma: Data, Licensing, and Trust

GitHub grew up around open source: the sharing of, and collaboration on, publicly accessible code. That shared body of public code is also a major source of training data for code-capable LLMs. A new OpenAI platform would immediately throw a spotlight on data provenance and licensing.

If OpenAI launches a platform, how will it handle the code uploaded there? Will it be aggressively used to train future iterations of its models, potentially without the same reciprocity offered by GitHub’s current approach? This directly feeds into existing **licensing debates regarding proprietary AI training**.

For the open-source community, this is a moment of reckoning. They must decide if they trust a platform owned by a for-profit AI research lab to manage their life’s work, especially when that lab is simultaneously competing with the incumbent steward of open source. The friction is palpable; if developers feel their contributions are being leveraged without sufficient return or control, they might hesitate to migrate sensitive or proprietary projects.

Tools from competitors like **Sourcegraph Cody** and **Tabnine** are already navigating this tightrope, trying to offer AI assistance while maintaining trust within the open-source ecosystem. OpenAI, with its massive influence, could either set a new, potentially restrictive standard or, conversely, establish a new model for compensating or acknowledging contributions used in training.

Societal Impact: Defining the Future of Shared Knowledge

The future of shared code is at stake. We are moving from an era where code was shared for mutual improvement to an era where code is shared for commercial model enhancement. Businesses need clear governance policies on what code can be placed on which platform, given the different intellectual property implications of each environment.

What This Means for the Future of AI and How It Will Be Used

If the reports hold, OpenAI’s pivot toward an AI-native development platform is not incremental; it is foundational. It points to three things about the near future of artificial intelligence:

- Vertical Integration is Inevitable: AI powerhouses realize that the true value is not just in the model, but in the proprietary workflow surrounding it. Controlling the platform where the model is deployed and refined creates a feedback loop that competitor tools cannot easily replicate.

- The SDLC Becomes Autonomous: The goal is not just AI-assisted development, but AI-managed development. A platform tailored by the model’s creators will offer deeper integration for planning, testing, debugging, and deployment, pushing human involvement toward high-level architecture and ethical oversight.

- Platform Wars Shift to Infrastructure: The major tech competition is no longer just about search engines or cloud storage; it is about owning the core infrastructure layers that support future digital creation. Code management is now recognized as a critical infrastructure layer, on par with operating systems or cloud compute.

Practical Implications and Actionable Takeaways

For enterprises and engineering leaders, the path forward requires proactive strategy, not reactive adoption:

- Audit Your AI Toolchain: Understand exactly where your code is interacting with third-party AI tools. Are you using Copilot today? What is the exit strategy if OpenAI’s platform becomes demonstrably superior for your specific workloads?

- Invest in Agent Understanding: Start training key engineering teams on how to interact with next-generation tools like Devin or whatever OpenAI releases. The interface for software creation is fundamentally changing from a text editor to a conversational agent interface.

- Develop Internal Data Strategy: If you are building highly proprietary software, you must decide whether to keep it entirely off platforms known for aggressive model training, or whether you can capture the efficiency gains of a dedicated AI platform by segmenting your repositories carefully.
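
The audit and segmentation steps above could be written down as an explicit placement policy. The sketch below is illustrative only: the sensitivity tiers, the platform names, and the `may_train_on_code` flag are all assumptions made up for this example, and the real values would have to come from each vendor's actual terms and your own legal review.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List


class Sensitivity(Enum):
    PUBLIC = 1      # open-source or already published code
    INTERNAL = 2    # ordinary business code
    RESTRICTED = 3  # core IP that should never leave infrastructure you control


@dataclass(frozen=True)
class Platform:
    name: str                # hypothetical platform label
    may_train_on_code: bool  # assumption: determined from the vendor's terms
    self_hosted: bool        # True when you operate the infrastructure yourself


@dataclass(frozen=True)
class Repo:
    name: str
    sensitivity: Sensitivity


def allowed_platforms(repo: Repo, platforms: List[Platform]) -> List[str]:
    """Return the names of platforms where this repository may be hosted."""
    if repo.sensitivity is Sensitivity.PUBLIC:
        return [p.name for p in platforms]
    if repo.sensitivity is Sensitivity.INTERNAL:
        return [p.name for p in platforms if not p.may_train_on_code]
    # RESTRICTED: only infrastructure you operate yourself.
    return [p.name for p in platforms if p.self_hosted]


# Hypothetical inventory for illustration.
platforms = [
    Platform("self-hosted-git", may_train_on_code=False, self_hosted=True),
    Platform("hosted-no-training", may_train_on_code=False, self_hosted=False),
    Platform("hosted-ai-native", may_train_on_code=True, self_hosted=False),
]
repos = [
    Repo("docs-site", Sensitivity.PUBLIC),
    Repo("billing-service", Sensitivity.INTERNAL),
    Repo("pricing-engine", Sensitivity.RESTRICTED),
]

plan: Dict[str, List[str]] = {r.name: allowed_platforms(r, platforms) for r in repos}
for repo_name, names in plan.items():
    print(f"{repo_name}: {', '.join(names)}")
```

Keeping the policy in code (or an equivalent config file) means it can be reviewed, versioned, and checked automatically whenever a new repository or platform is added.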

In conclusion, OpenAI’s reported move to build a GitHub competitor is a massive signal fire. It suggests that the next major competitive moat in the AI race will be built around the development environment itself. We are witnessing the transformation of the programmer’s toolkit from a set of productivity apps into an AI operating system. The companies that control that operating system, where the code is managed, tested, and deployed, will dictate the pace and shape of software innovation for the next decade.