The Great Copyright Divide: Why the Supreme Court Ruling on AI Authorship Solved Nothing for Generative Tech

The world of technology watched closely as the U.S. Supreme Court recently weighed in on the copyright eligibility of works created solely by artificial intelligence. The case, brought by computer scientist Stephen Thaler, asked a monumental question: Can a machine be legally recognized as the sole author of an image?

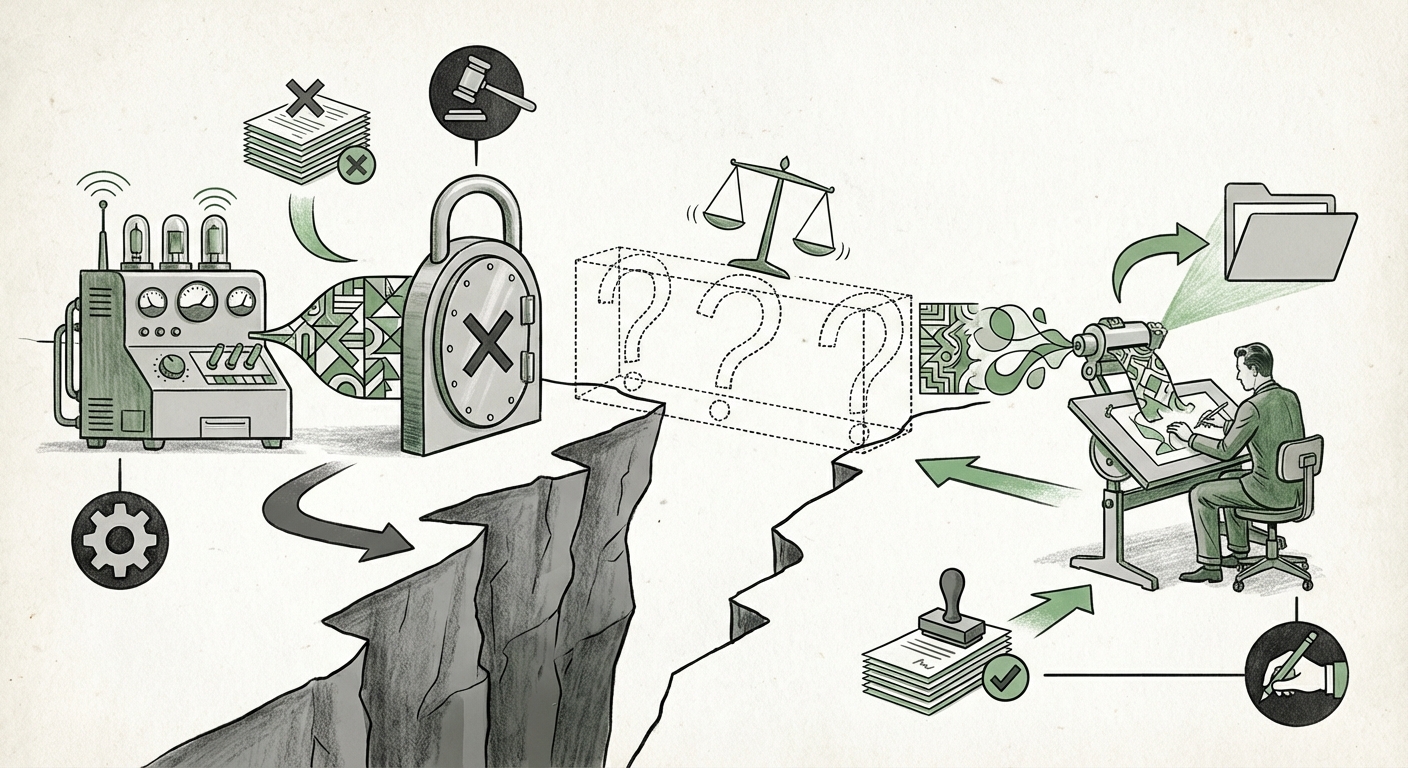

The answer, delivered by the Court, was a definitive "no." But here is the crucial pivot for everyone building, using, or investing in generative AI: This decision only settles the most extreme edge case. It confirms that current US copyright law—which requires a work to originate from a human mind—does not recognize a non-human entity as an author. What the ruling pointedly *does not* address is the explosion of creativity happening in the vast middle ground: works created by humans *with* the assistance of AI tools.

As an AI technology analyst, I see this narrow ruling not as a resolution, but as the starting gun for a much larger, more complex legal and technological race. The ambiguity left in the wake of this decision will define investment strategies, creative workflows, and platform liabilities for the next decade.

The Unsettled Frontier: Human-Assisted Creation

Imagine you are an artist or a developer today. You type a detailed prompt into Midjourney, or you use GitHub Copilot to suggest complex code blocks. You then spend hours refining, selecting, compositing, and editing the AI’s initial output. Under the current legal framework, who owns the copyright to the final piece? The Supreme Court offered no guidance.

The Threshold of Creativity

The key challenge now revolves around defining the "human authorship requirement." For years, copyright has protected the expression of human creativity—the small choices, the unique arrangements, the spark of originality. When an AI does 95% of the heavy lifting, how much human tinkering qualifies as "meaningful contribution"?

- Prompt Engineering: Is writing an incredibly complex, detailed prompt enough? Legal analyses suggest that mere instruction, like ordering a sandwich, isn't authorship.

- Selection and Arrangement: If a user generates 100 images and meticulously chooses the best three, adding final touches in Photoshop, that looks much closer to human creativity. Legal experts monitoring guidance from the U.S. Copyright Office often look for evidence of this level of human modification.

This ambiguity forces businesses relying on AI creation into a legal grey zone. They need clarity on whether their output is truly protectable IP, or if it exists in the public domain the moment it’s generated.

Global Divergence: The International Copyright Landscape

Copyright law is rarely uniform across borders. While the US maintains a staunchly human-centric view, other major economic blocs are charting different courses, creating potential friction points for global technology firms.

Watching the European Union

The EU AI Act, adopted in 2024, focuses on governance, transparency, and managing the risks of AI systems rather than on authorship definitions of the kind US law relies on. While the Act's primary focus is on high-risk applications, the EU’s approach to IP surrounding AI output may eventually diverge significantly from the US, potentially offering protection based on the investment made in developing the AI, rather than just the human input into the final prompt.

This difference is crucial. If the US maintains a high bar for human involvement, companies operating globally might choose to use EU frameworks (if applicable) to secure protection for outputs that the US might deem uncopyrightable. This creates regulatory arbitrage—where businesses structure activities to take advantage of the most favorable legal environment.

The Immediate Business Fallout for AI Platforms

For the major players—the platforms like OpenAI, Google, and Midjourney—this Supreme Court decision highlights significant risk in their current business models, which largely rely on granting users ownership of AI-generated output.

Terms of Service Under Scrutiny

Most generative platform Terms of Service (ToS) state that the user owns the content they create using the service. However, if a user cannot legally claim copyright on that output because the human contribution was deemed insufficient, the platform has essentially promised an asset (protected IP) that it cannot guarantee.

This forces two critical business shifts:

- Indemnification Demands: Businesses using AI are now demanding that platform providers indemnify them—meaning the platform takes on the legal risk if the AI-generated work is later found to be unprotectable or infringing.

- Work-for-Hire Models: We may see platforms transition toward more formal licensing or "work-for-hire" style agreements. Instead of saying, "You own it," they might say, "We grant you an exclusive, perpetual license to use this output, provided it meets our internal threshold for human input."

For investors, and venture capitalists in particular, this means that any company whose primary value proposition relies on defensible IP generated by AI needs a much clearer legal strategy than simply trusting current ToS agreements.

Legislative Momentum: The Ball is in Congress’s Court

Since the judicial branch declined to update the definition of "author," the legislative branch, Congress, is now under immense pressure to act. This is where the true technological and societal implications will be hammered out.

Searching for Legislative Clarity

The current state of affairs is unsustainable for large industries like publishing, film, and software development. These sectors require clear rules on ownership and training data usage. Therefore, monitoring proposed legislation aimed at generative AI copyright reform is essential for future planning.

Congress must decide whether to:

- Create a new, unique category of intellectual property specifically for AI-assisted works, perhaps with a shorter protection term.

- Amend existing copyright law to precisely quantify the required level of human intervention (e.g., requiring X hours of editing or Y percentage of non-AI input).

- Leave the issue alone, relying on existing case law, which would maintain the current state of uncertainty.

Whichever path Congress chooses will send powerful signals about the value society places on human creativity versus technological efficiency.

Actionable Insights for the AI-Driven Future

As analysts looking forward, we must move beyond the courtroom drama to focus on operational reality. What do businesses and creators need to do now?

For Creative Professionals and Teams: Document Everything

If you use AI tools in your workflow, treat the process like a scientific experiment where documentation is key. Keep detailed logs:

- Save all prompts used.

- Record the time spent selecting, refining, and editing AI output.

- Use version control to show the step-by-step progression from raw AI generation to final product.

This paper trail is your strongest evidence should a future court case challenge your claim of authorship. You must be able to show that the final expression originated in your mind, even if the tool was digital.
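The logging habits above can be sketched in a few lines of Python. This is a minimal illustration, not an established tool or a legally vetted format: the `ProvenanceEntry` and `ProvenanceLog` names, the step labels, and the idea of storing a SHA-256 fingerprint of each intermediate file are all assumptions made for the example. Whether a log like this would carry weight in a dispute is an open question.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class ProvenanceEntry:
    """One step in creating a work. All field names are hypothetical."""
    step: str             # e.g. "prompt", "selection", "manual_edit"
    description: str      # what the human did at this step
    minutes_spent: float  # self-reported time spent on the step
    artifact_sha256: str  # fingerprint of the text or file the step produced
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class ProvenanceLog:
    """In-memory, append-only list of creation steps."""

    def __init__(self) -> None:
        self.entries: list[ProvenanceEntry] = []

    def record(self, step: str, description: str,
               minutes_spent: float, content: bytes) -> ProvenanceEntry:
        # Hash the content so the log can later be matched to a saved file.
        digest = hashlib.sha256(content).hexdigest()
        entry = ProvenanceEntry(step, description, minutes_spent, digest)
        self.entries.append(entry)
        return entry

    def human_minutes(self) -> float:
        # Time on every step except writing the prompt itself.
        return sum(e.minutes_spent for e in self.entries if e.step != "prompt")

    def to_jsonl(self) -> str:
        # One JSON object per line, suitable for committing to version control.
        return "\n".join(json.dumps(asdict(e)) for e in self.entries)
```

A typical session would call `log.record("prompt", ...)` when generating, `log.record("selection", ...)` when choosing among candidates, and `log.record("manual_edit", ...)` after each round of editing, then commit the output of `log.to_jsonl()` alongside the working files so the log and the version history corroborate each other.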

For Corporate Legal and Technology Officers: Review Contracts Now

Audit all vendor contracts and internal policies regarding AI usage. Ensure that ownership language clearly addresses the distinction between outputs created *without* AI and those created *with* AI. If your product relies on AI-generated content, you must assess your risk exposure against the possibility that your "assets" might not be legally defensible IP.

For AI Platform Builders: Embrace Transparency

Future regulatory success will likely favor platforms that are transparent about what they offer. Instead of vaguely promising "ownership," clearly define the scope of the license you provide for outputs, acknowledging the current legal ambiguities. Transparency builds user trust where legal certainty is currently lacking.

Conclusion: The Inevitable Evolution of Originality

The Supreme Court decision confirming that machines cannot be authors is both expected and profoundly unhelpful for the current wave of innovation. It simply locks in the past legal reality while ignoring the present technological reality.

The technology trend is clear: AI integration will become deeper, not shallower. The question is no longer *if* humans will use AI, but *how much* they can use it before their resulting work loses its legal shield. The future hinges on the coming legislative and case law battle to define the concept of meaningful human contribution. Until Congress or subsequent court rulings clarify this threshold, the digital creative economy will continue to operate under a cloud of fundamental, high-stakes uncertainty. This is the messy, exciting, and legally perilous frontier of the next stage of the AI revolution.