The AI Copyright Cliffhanger: Why the Supreme Court Ruling Settles Almost Nothing for Generative Creation

The narrative around technological breakthroughs often involves definitive legal clashes. When the US Supreme Court recently weighed in on the copyright claim filed by Stephen Thaler—who sought to have an AI system recognized as the sole author of an image—the resulting decision sounded sweeping. However, as observers quickly noted, the reality is far more nuanced: the ruling only definitively settled one extreme scenario.

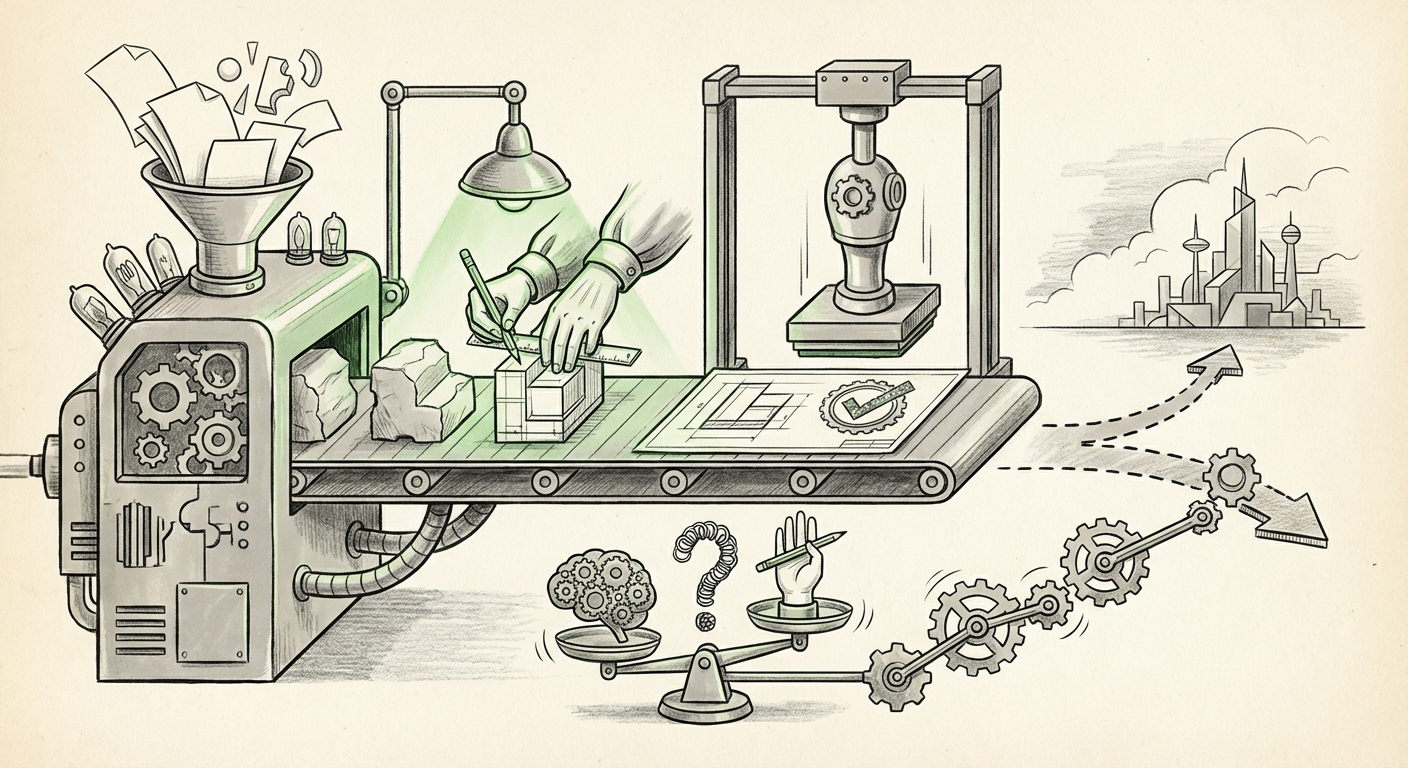

By rejecting the machine as the author, the Court reaffirmed the bedrock principle of US copyright law: creation requires a human mind. While this settles the question of whether an autonomous machine can hold IP rights, it completely ignores the far more prevalent, economically significant reality: **works created collaboratively between humans and sophisticated AI tools.**

This narrow focus creates a vast, undefined gray area that businesses, artists, developers, and policymakers must now navigate. To understand the true impact of this legal non-decision, we must look beyond the machine-as-author argument and examine the unresolved tensions surrounding human input, regulatory clarity, and the foundational data used to train these powerful models.

The Legal Truce: Upholding Human Authorship

The core function of the Supreme Court’s refusal was to maintain the status quo established in centuries of intellectual property jurisprudence. Copyright law is designed to reward and incentivize human creativity. When the Court confirmed that an AI system cannot be an author, the result was essentially an administrative affirmation rather than a revolutionary legal statement.

For the technical audience, this means that AI systems remain sophisticated *tools*, akin to a camera or a synthesizer, rather than independent creators. This aligns with the current directives from the US Copyright Office (USCO), which has consistently stated that works generated purely by machine are not eligible for protection.

The Unresolved Spectrum: Human Prompting vs. Machine Output

The decision avoids the million-dollar question: What level of human involvement—prompt engineering, curation, iteration—is sufficient to transform an AI output into a copyrightable human creation?

Consider the spectrum of AI involvement:

- Minimal Prompting: A user inputs "a futuristic cityscape at sunset." The output is largely determined by the model's training. Is this enough human authorship? Probably not, according to current guidance.

- Iterative Refinement: A user runs the prompt, dislikes the clouds, asks the AI to change them, merges two outputs, and edits the final product in Photoshop. Here, the human involvement is substantial.

- Custom Model Fine-Tuning: A developer fine-tunes an open-source model specifically on their existing proprietary artwork, then uses that unique model to generate new styles. Does the specialized training grant authorship rights to the developer?

As legal commentary on the human authorship requirement often points out, the clarity required for commercial use, especially for licensing and enforcement, is missing. Businesses need predictability. If a company commissions an AI-generated logo, it needs assurance that it actually owns rights it can enforce against infringers.

The Regulatory Crossroads: Where the Copyright Office Steps In

With the Supreme Court sidestepping the collaborative question, the immediate legislative and regulatory focus shifts squarely to the **US Copyright Office**. The USCO is now the de facto rule-maker for the vast majority of creators.

The Copyright Office’s guidance on AI-generated content is evolving and often cautious. The Office has signaled that human creative input (the selection, arrangement, or modification of AI-generated material) is what matters. It is attempting to draw a bright line where human control supersedes mere mechanical execution.

For product managers and developers, this means that the *process* of creation becomes as important as the *result*. Future AI tools might need built-in auditing features that log every human decision and revision applied to an AI draft, each with a timestamp, creating a verifiable record of human agency to support future registration applications.
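One way to picture such an auditing feature is a tamper-evident log in which each entry stores a hash of the entry before it. The sketch below is illustrative only: the class name `AuthorshipLog`, its fields, and the action labels are hypothetical and do not correspond to any existing tool or to any format the Copyright Office has asked for. A log like this shows that a record was not altered after the fact; it does not by itself establish that the logged human contributions are enough for protection.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Hash an entry body using a stable JSON serialization."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuthorshipLog:
    """Hypothetical append-only record of human decisions applied to an AI draft."""
    entries: list = field(default_factory=list)

    def record(self, actor: str, action: str, detail: str) -> dict:
        """Append one human decision, chained to the previous entry by hash."""
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {
            "actor": actor,
            "action": action,  # e.g. "prompt", "select", "edit" (assumed labels)
            "detail": detail,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Return True only if no entry was altered, removed, or reordered."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


# Example session: a prompt, a selection, and a manual edit by one person.
log = AuthorshipLog()
log.record("designer@example.com", "prompt", "a futuristic cityscape at sunset")
log.record("designer@example.com", "select", "kept candidate 3 of 50")
log.record("designer@example.com", "edit", "repainted clouds by hand, merged two outputs")
```

Calling `log.verify()` on the untouched log returns `True`; changing any stored field afterward makes it return `False`, because the recomputed hashes no longer match. A production system would also need trusted timestamps and protected storage, which this sketch leaves out.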

Practical Implications for Creative Industries

For artists, writers, designers, and musicians utilizing tools like Midjourney, Stable Diffusion, or advanced LLMs, the message is clear: use AI as a powerful brush, not as the painter.

- Documentation is Key: Creators must aggressively document their iterative work. If you generate 50 options and choose one, the 49 discarded options are likely unprotectable machine output, and the chosen one still needs to show significant human modification or creative selection and arrangement to have a chance at registration.

- Licensing Chaos: Companies that license AI-generated content face risk. If a third party challenges the copyrightability of the licensed asset, the licensee may find their legal foundation crumbling, leading to massive financial exposure.

The Future Landscape: Evolving IP Frameworks

The Thaler case serves as a powerful reminder that current law is based on an industrial-age concept of creation. In discussions of the future of copyright law in the age of generative AI, many experts argue that the existing framework is fundamentally inadequate.

As AI models become more capable—moving from sophisticated tools to genuine partners in ideation—the legal definitions of "creativity" and "authorship" will need serious re-evaluation. Will we see a *sui generis* (unique) category of IP rights created specifically for AI-assisted works, perhaps with shorter protection terms or different royalty structures?

This shift is not just academic. For venture capitalists funding deep-tech AI firms, the regulatory uncertainty surrounding IP ownership directly impacts valuation and market viability. If ownership is cloudy, investment slows.

The Unseen Legal Battlefield: Input vs. Output

Crucially, analyzing the output ownership debate without considering the **input** controversy paints an incomplete picture. The single greatest legal risk facing the generative AI industry today revolves around the data used for training.

Ongoing litigation over AI training data shows that copyright holders are challenging whether scraping billions of protected works from the internet to build a commercial AI model constitutes fair use. If courts rule against the AI developers in these large-scale lawsuits (like those involving Getty Images or various author/artist collectives), the developers could face crippling liabilities or be forced to pay for expensive licenses retroactively.

This reality underscores the fragility of the entire ecosystem. Even if a user successfully proves their AI-assisted output is copyrightable, the underlying technology that created it might be deemed built on a foundation of mass infringement. This parallel legal track is arguably more critical to the immediate future of AI deployment than the narrow Thaler decision.

Actionable Insights for Navigating the Gray Zone

For technology leaders and creative directors operating today, navigating this ambiguity requires a proactive, risk-mitigation strategy:

- Adopt a "High-Intent" Standard: For any asset intended for commercial protection, enforce workflows that maximize human input. Treat the AI as a high-powered brainstorming partner whose suggestions must be visibly selected, altered, or substantially guided by human expertise.

- Monitor USCO Registration Results: Keep meticulous records of which AI-assisted works are successfully registered by the Copyright Office. These accepted registrations set the practical, albeit unofficial, standard for what currently passes muster.

- Demand Data Lineage Transparency: When evaluating third-party AI tools, prioritize those that can offer clearer documentation or warranties regarding their training data provenance. While perfect transparency is unlikely, inquiring about it mitigates future liability exposure stemming from input disputes.

- Advocate for Legislative Clarity: Recognize that courts are ill-equipped to define these technological boundaries. Support industry efforts lobbying for legislative clarity that explicitly defines the necessary threshold of human involvement for copyright protection in the AI era.

Conclusion: The Era of Conditional Creativity

The Supreme Court’s recent ruling was not the grand finale of the AI copyright debate; it was merely the closing of the opening act. By definitively stating that a machine cannot author, the court preserved the human element at the center of IP law but left a massive operational vacuum in its wake.

We are now entering the era of conditional creativity. Copyright protection is no longer granted by mere creation; it is granted by demonstrating sufficient, documented, and intentional human intervention upon the creation offered by a non-sentient tool. The technology trend is clear—AI integration will only deepen. The challenge for the coming decade will be for our legal frameworks, guided by evolving regulatory action and informed by these foundational rulings, to catch up with the speed of innovation without stifling the collaboration that defines modern creative work.