Federal AI Shakeup: Why US Agencies Are Flipping LLM Vendors & What It Means for AI Adoption

The recent news that the U.S. State Department swapped one leading Large Language Model (LLM) provider for another, dropping Anthropic’s Claude in favor of OpenAI’s GPT-4, is far more than an internal IT footnote. It is a dramatic snapshot of the turbulent, high-stakes reality of deploying cutting-edge AI in one of the strictest environments there is: the U.S. federal government. The shift illuminates a critical lesson for every business aiming to adopt generative AI: model volatility is the new normal, and procurement agility is paramount.

As an AI technology analyst, I took this development as a prompt for a deeper look at the underlying drivers. Why drop Claude, a model often praised for its safety focus and sophisticated reasoning, for what the source described as an "aging" GPT-4? The answer lies at the intersection of compliance, real-world performance under pressure, and the brutal speed of technological iteration.

The Crucible of Government AI Procurement

For commercial enterprises, swapping an AI vendor might mean a dip in customer satisfaction scores or a delayed feature launch. For federal agencies like the State Department, the stakes of a poor AI choice are far higher, involving national security, diplomatic communications, and the handling of classified information. This environment acts as a high-pressure crucible that quickly separates vendor claims from operational reality.

The initial wave of federal AI adoption often favored models from newer players like Anthropic, whose strong alignment research and proactive safety narrative appealed to agencies. The pivot, however, suggests that the incumbent OpenAI ecosystem offered greater practical, deployable utility in the near term.

Corroborating the Trend: Beyond the State Department

Is this an isolated incident, or part of a wider federal recalibration? To gauge the significance of this "shakeup," we need corroborating evidence from the defense and intelligence sectors: other agencies **switching large language models**, or **shifts in Department of Defense (DoD) AI procurement**. If multiple agencies are going through similar evaluation cycles, moving from one vendor to another, that would signal a systemic pattern: initial pilot programs are ending, and models are now being tested against concrete mission requirements.

Such evidence would show whether the market is consolidating around proven frameworks or whether agencies are simply chasing the latest performance leader. For federal contractors, tracking these shifts is crucial: where the government spends its limited AI budget today dictates where future contracts will flow.

The Technical Tug-of-War: Performance Versus Maturity

The most contentious element of the swap is the description of GPT-4 as "aging." In the world of foundation models, six months can feel like an eternity. Newer models, like Claude 3 Opus or Gemini 1.5, consistently push the boundaries of context window size and benchmark scores.

So why revert to, or stick with, an older model? To answer that, we need to compare **how GPT-4 and Claude 3 Opus perform on government-relevant tasks**, not just on general leaderboards. Federal work often involves nuanced tasks: summarizing lengthy regulatory text, drafting precise diplomatic language, or synthesizing intelligence from disparate sources. A model might score higher on general knowledge tests such as MMLU yet fail in the specific, mission-critical areas that matter to the State Department.
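To see how a single leaderboard number can hide task-level differences, here is a minimal, hypothetical evaluation harness that scores each model per task category instead of in aggregate. The model functions are stubs standing in for vendor API calls, and the task names, prompts, and pass/fail checks are illustrative assumptions, not any agency's actual evaluation suite.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# A "model" is any function from prompt text to response text.
# In a real deployment this would wrap a vendor API client; here it is a stub.
ModelFn = Callable[[str], str]


@dataclass
class EvalCase:
    task: str                     # mission-specific task category (hypothetical labels)
    prompt: str                   # input sent to the model
    check: Callable[[str], bool]  # domain-specific pass/fail rule for the response


def evaluate(model: ModelFn, cases: List[EvalCase]) -> Dict[str, float]:
    """Return the pass rate for each task category, not one aggregate score."""
    passed: Dict[str, int] = {}
    total: Dict[str, int] = {}
    for case in cases:
        total[case.task] = total.get(case.task, 0) + 1
        if case.check(model(case.prompt)):
            passed[case.task] = passed.get(case.task, 0) + 1
    return {task: passed.get(task, 0) / n for task, n in total.items()}


# Hypothetical test cases: exact date extraction and diplomatic tone.
cases = [
    EvalCase("extract", "Date of note: 2024-03-01", lambda r: "2024-03-01" in r),
    EvalCase("extract", "Date of note: 2023-11-15", lambda r: "2023-11-15" in r),
    EvalCase("tone", "Draft a reply to the ministry", lambda r: "respectfully" in r.lower()),
]


def model_a(prompt: str) -> str:
    """Stub model that is good at extraction but ignores tone."""
    return prompt.split(": ")[-1] if ": " in prompt else "Thanks!"


def model_b(prompt: str) -> str:
    """Stub model that always writes formally but never extracts."""
    return "We respectfully acknowledge your note."


print(evaluate(model_a, cases))  # {'extract': 1.0, 'tone': 0.0}
print(evaluate(model_b, cases))  # {'extract': 0.0, 'tone': 1.0}
```

Here `model_a` passes more cases overall, yet it fails every tone case; if diplomatic drafting is the mission, the aggregate number points the wrong way.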

It’s possible that GPT-4, despite its age, is better suited to the specific API structures, tool-use capabilities, or integration points the State Department relies on. Furthermore, older, well-understood models often have more predictable latency and reliability, factors that matter immensely when staff depend on fast, accurate AI assistance in their daily work.

The Unseen Barrier: Security and Compliance

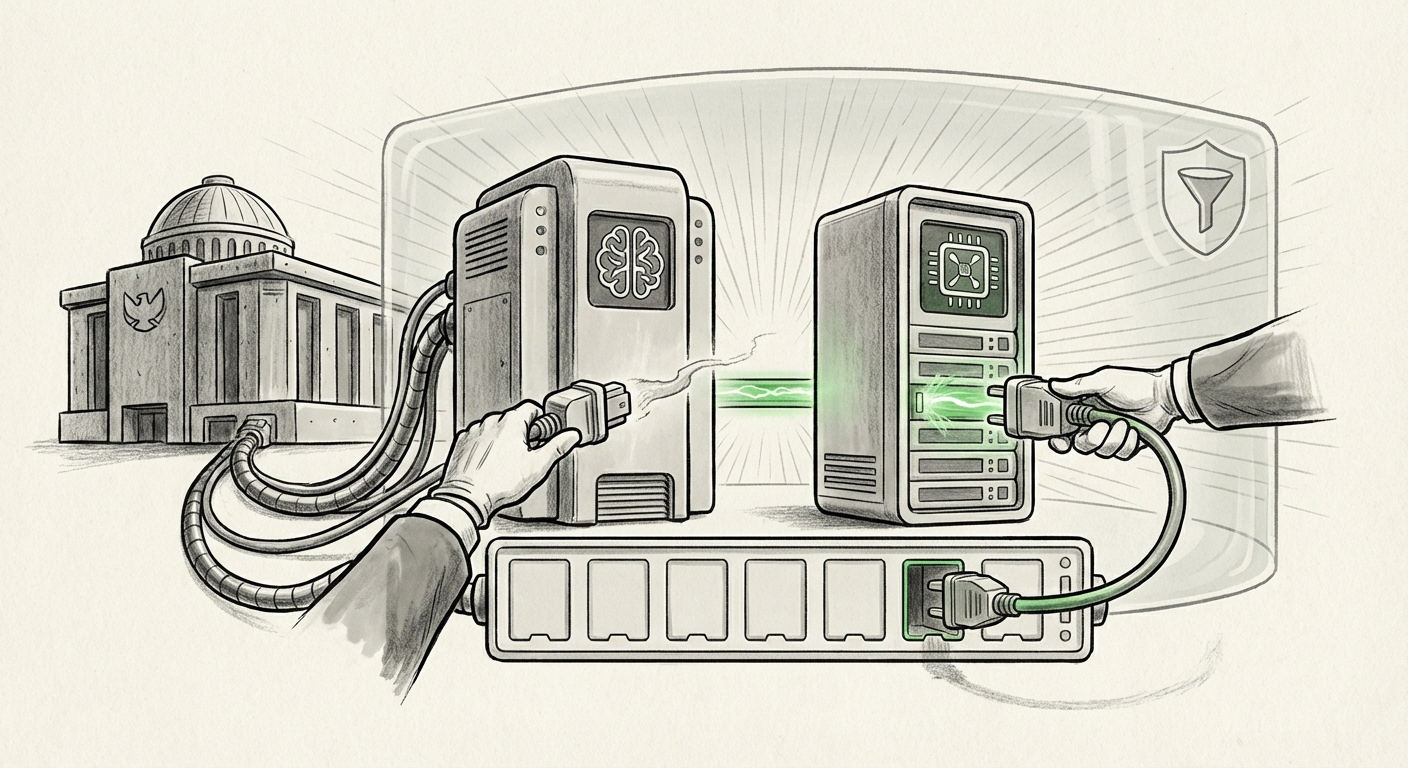

The greatest barrier to government AI adoption is not capability but compliance. That puts certification frameworks at the center of the story, and in particular the **FedRAMP authorization status of OpenAI’s and Anthropic’s offerings**.

FedRAMP (Federal Risk and Authorization Management Program) is the federal baseline for assessing and authorizing cloud services that handle government data. A model upgrade, even one with superior performance, can stall if the vendor has not secured the required authorization level (e.g., Moderate or High impact) for the specific operational environment. A switch might therefore indicate that OpenAI secured a necessary authorization faster, or that the architecture the State Department required was more readily available within the GPT-4 ecosystem.
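The gating effect of authorization can be expressed as a filter-then-rank step: only candidates authorized at the required impact level are eligible, and among those the best performer wins. This is a minimal sketch under stated assumptions: the offering names and scores are hypothetical, and treating a higher FedRAMP baseline as satisfying any lower requirement simplifies how authorizations are actually scoped to specific cloud offerings and environments.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import List, Optional


class ImpactLevel(IntEnum):
    """FedRAMP impact baselines, ordered from least to most stringent."""
    NONE = 0
    LOW = 1
    MODERATE = 2
    HIGH = 3


@dataclass
class Candidate:
    name: str                # hypothetical offering name
    authorized: ImpactLevel  # authorization held for the target environment
    task_score: float        # result of the agency's own evaluation (hypothetical)


def select_model(candidates: List[Candidate], required: ImpactLevel) -> Optional[Candidate]:
    """Return the best-scoring candidate authorized at or above `required`.

    Simplifying assumption: a higher baseline satisfies any lower requirement.
    Returns None when no candidate is eligible, i.e. deployment must wait.
    """
    eligible = [c for c in candidates if c.authorized >= required]
    if not eligible:
        return None
    return max(eligible, key=lambda c: c.task_score)


candidates = [
    Candidate("model-x", ImpactLevel.LOW, 0.91),   # stronger, authorized only at Low
    Candidate("model-y", ImpactLevel.HIGH, 0.84),  # weaker, authorized at High
]

print(select_model(candidates, ImpactLevel.LOW).name)       # model-x
print(select_model(candidates, ImpactLevel.MODERATE).name)  # model-y
```

The stronger model wins only where its authorization reaches; once the requirement rises to Moderate, the weaker but authorized model is the only eligible choice.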

This reveals a core tension: innovation constantly outpaces compliance timelines. Agencies are forced to choose between the *safest* technology that is currently authorized and the *most effective* technology that is still catching up on certification paperwork. CISA’s guidance on securing AI systems adds further pressure by emphasizing verifiable security practices.

Implications for the Future: Embracing Volatility

The State Department’s decision serves as a powerful warning: **The LLM landscape is not stable, and betting on a single champion is a liability.** This instability has profound implications for how businesses, both public and private, must architect their AI stacks.

Actionable Insight 1: Moving Beyond Single-Vendor Dependency

The most critical takeaway is the need for a **multi-vendor, multi-cloud AI strategy**, in federal agencies and beyond. Agencies and corporations can no longer afford vendor lock-in. If today’s best model is tomorrow’s legacy system, the architecture must be platform-agnostic where possible, using wrappers or abstraction layers that allow vendors to be swapped with minimal disruption.

For businesses, this means investing in orchestration tools that can route requests based on cost, latency, or specific task requirements. If Claude excels at creative writing and GPT-4 is better for structured data extraction, the system should dynamically assign the task to the best-fit model, mitigating the risk of any single provider failing to meet standards or experiencing outages.
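A minimal sketch of such an abstraction layer and router, assuming each vendor SDK is wrapped in an adapter exposing a common `complete(prompt)` call. The `Provider` and `Router` names, task labels, and prices are hypothetical; real adapters would call each vendor’s own client library. The routing rule shown (prefer healthy providers tagged for the task, then the cheapest) is one simple policy among many.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, List


@dataclass
class Provider:
    """Vendor-neutral adapter: callers never touch a vendor SDK directly."""
    name: str
    complete: Callable[[str], str]  # wraps the vendor's client call (stubbed here)
    strengths: FrozenSet[str]       # task types this provider is preferred for
    cost_per_1k_tokens: float       # hypothetical price used to break ties
    healthy: bool = True            # set to False during an outage


class Router:
    """Pick a provider per request based on health, task fit, and cost."""

    def __init__(self, providers: List[Provider]) -> None:
        self.providers = providers

    def route(self, task: str) -> Provider:
        available = [p for p in self.providers if p.healthy]
        if not available:
            raise RuntimeError("no healthy provider available")
        # Prefer providers tagged for this task; otherwise fall back to any healthy one.
        specialists = [p for p in available if task in p.strengths]
        pool = specialists or available
        return min(pool, key=lambda p: p.cost_per_1k_tokens)

    def run(self, task: str, prompt: str) -> str:
        return self.route(task).complete(prompt)


vendor_a = Provider("vendor-a", lambda p: f"[vendor-a] {p}", frozenset({"drafting"}), 0.03)
vendor_b = Provider("vendor-b", lambda p: f"[vendor-b] {p}", frozenset({"extraction"}), 0.01)
router = Router([vendor_a, vendor_b])

print(router.run("drafting", "Draft a reply"))  # [vendor-a] Draft a reply
print(router.route("extraction").name)          # vendor-b

vendor_b.healthy = False                        # simulate an outage
print(router.route("extraction").name)          # vendor-a (failover)
```

Because callers only see `Router.run`, replacing a vendor means changing one adapter entry rather than every call site.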

Actionable Insight 2: The Performance Gap vs. The Trust Gap

The analysis must differentiate between performance gaps and trust gaps. While benchmarks define the performance gap, the trust gap—the confidence an agency has in a vendor's security, commitment to the government market, and long-term support—often drives procurement decisions. Anthropic built significant trust through its explicit focus on safety alignment. OpenAI has built trust through sheer market dominance and rapid integration into existing enterprise systems.

When a switch occurs, it’s often because the trust relationship—encompassing support responsiveness, regulatory compliance pathways, and data governance—is weighted more heavily than a few percentage points difference in an MMLU score.
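This trade-off can be made explicit with a simple weighted scoring matrix. In the sketch below the criteria, weights, and vendor scores are all hypothetical; the point is only that when compliance, support, and governance carry most of the weight, a vendor a few points behind on a benchmark can still come out ahead.

```python
from typing import Dict

# Hypothetical criteria and weights; each criterion is scored from 0 to 1.
WEIGHTS: Dict[str, float] = {
    "benchmark": 0.20,   # general capability, e.g. a normalized MMLU-style score
    "compliance": 0.30,  # authorization status and pathway
    "support": 0.25,     # responsiveness and long-term commitment to the market
    "governance": 0.25,  # data handling, retention, and audit terms
}


def weighted_score(scores: Dict[str, float], weights: Dict[str, float] = WEIGHTS) -> float:
    """Weighted average of per-criterion scores."""
    total_weight = sum(weights.values())
    return sum(weights[k] * scores[k] for k in weights) / total_weight


# Hypothetical vendors: P leads on the benchmark, Q leads on the trust criteria.
vendor_p = {"benchmark": 0.88, "compliance": 0.60, "support": 0.70, "governance": 0.65}
vendor_q = {"benchmark": 0.85, "compliance": 0.90, "support": 0.85, "governance": 0.80}

print(round(weighted_score(vendor_p), 4))  # 0.6935
print(round(weighted_score(vendor_q), 4))  # 0.8525
```

Raising the benchmark weight far enough would flip the result, so the matrix does not make the decision; it forces the weighting behind the decision to be stated openly.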

Societal Impact: The Speed of Governance

This constant churn in government adoption creates friction for governance. Regulatory bodies struggle to issue guidance when the underlying technology is morphing monthly. If agencies are constantly testing new models, how can policymakers establish stable rules regarding data provenance, bias mitigation, and accountability? The legislative cycle is inherently slow, whereas the LLM release cycle is blindingly fast. This gap ensures that governance will often lag behind deployment, creating regulatory ambiguity for commercial firms attempting to follow the government's lead.

Conclusion: Agility as the Ultimate AI Strategy

The Federal AI shakeup, the State Department’s shift from Claude back to GPT-4, is a loud signal echoing across the technology sector. It reminds us that AI adoption is not a one-time integration; it is continuous competitive evaluation. The models that win today may be sidelined tomorrow, not necessarily because they became "bad," but because a new model better satisfied the complex matrix of security mandates, budgetary constraints, and real-world performance needs of a mission-critical user.

For organizations looking to maximize their AI investment, the lesson is clear: Treat LLMs as interchangeable utilities rather than monolithic partners. Invest heavily in the infrastructure layer that allows you to switch vendors instantly. In the fast-moving world of generative AI, the greatest competitive advantage is not having the best model today, but having the agility to deploy the best model tomorrow.