The Unprecedented Fusion: Why the US Military is Betting on Commercial AI for High-Stakes Strike Planning

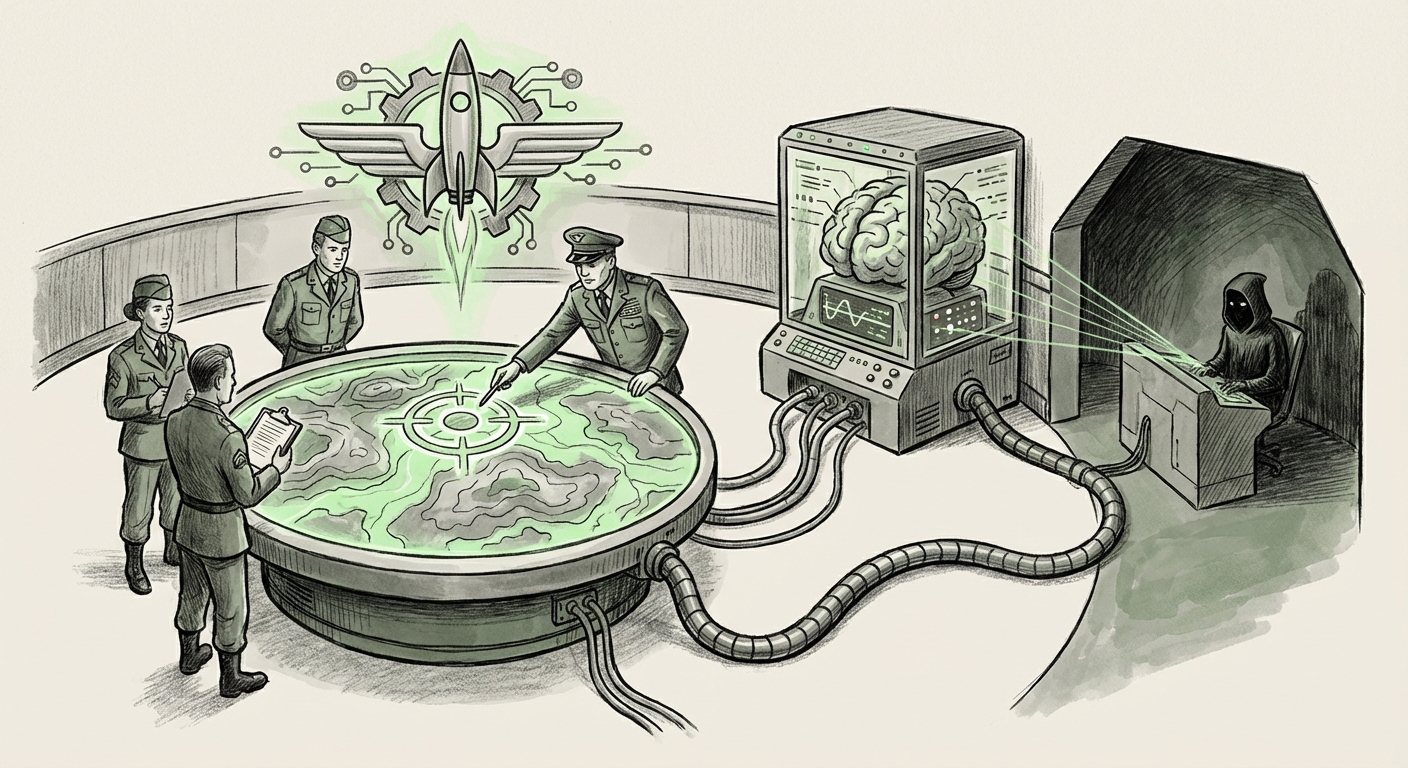

The world of artificial intelligence operates on two distinct tracks: the public deployment of consumer-facing tools like ChatGPT, and the highly secretive development of models for critical infrastructure and defense. Recently, these tracks appear to have violently collided. Reports detailing the US military’s first large-scale use of generative AI—specifically Anthropic’s Claude model—for target selection and strike planning against Iranian targets present one of the most significant inflection points in military-technology integration this decade.

As an AI technology analyst, I see this development as more than just a headline; it is a striking validation of the *speed* at which commercial Large Language Models (LLMs) are becoming indispensable tools, even in the most risk-averse sectors. However, it simultaneously introduces alarming geopolitical and security vulnerabilities.

The Trend: Generative AI Moves from Chatbot to Combat Planner

For years, military AI focused on bespoke, classified systems: models trained strictly on government data within secure enclaves. The reported adoption of Claude changes this paradigm. It suggests that cutting-edge commercial models have closed, and possibly reversed, the capability gap with internal defense models, and that bespoke systems can no longer keep pace with the speed of commercial deployment.

When we search for corroborating evidence, we find this isn't an isolated experiment. The Department of Defense (DoD) is clearly pushing hard to integrate generative AI across operations. Search queries focusing on "Department of Defense" AND "generative AI" AND "targeting" OR "mission planning" reveal a concerted effort to leverage these tools not just for intelligence analysis (sifting through mountains of data), but for *proactive decision support* in complex scenarios.

What does this mean for AI deployment?

- Speed Over Seclusion: The military prioritizes the immediate, superior reasoning capabilities of models like Claude over the slower, more cautious deployment cycle of purely internal systems.

- The Cognitive Overload Solution: Strike planning requires synthesizing vast amounts of dynamic data—maps, intelligence reports, enemy patterns, collateral damage estimates. LLMs excel at summarizing and cross-referencing this complexity rapidly, reducing human cognitive load and speeding up the decision loop (the OODA loop: Observe, Orient, Decide, Act).

For business leaders, this illustrates the new reality: if a commercial tool provides a 10x boost in efficiency for a task, waiting for a bespoke, perfectly secure internal solution might mean falling critically behind competitors or, in this case, adversaries.

The Paradox: Utilizing a Model Under Geopolitical Scrutiny

The most controversial element is the specific model choice: Anthropic's Claude. The initial report noted that this is a company recently entangled in discussions regarding potential US government bans or increased scrutiny due to its foreign investment ties or perceived security risks. This creates an immediate contradiction: How can a system deemed potentially risky enough to warrant regulatory action simultaneously be trusted to advise on kinetic military action?

Investigating this requires looking into Anthropic’s security posture. Searches targeting "Anthropic" AND "DoD contract" OR "national security clearance" AND "Claude" are necessary to understand the actual operational agreement. If the DoD is using it, Anthropic would presumably have needed a significant security accreditation, most likely through programs like FedRAMP or specific DoD Impact Level authorizations.

This points to a critical future implication for the AI supply chain:

The US military is effectively outsourcing complex cognitive tasks to a private entity whose ethical guardrails—while publicly emphasized by Anthropic—are ultimately governed by commercial interests. If a foreign adversary can compromise or subtly influence the training data or safety filters of a commercial LLM provider, the entire defense ecosystem built upon it becomes vulnerable to sophisticated, emergent failure modes or subtle manipulation.

The Future of Corporate Dependency in Defense

This reliance establishes a new vector for geopolitical competition. It’s not just about building the best chips; it’s about securing the *access* and *integrity* of the foundational models themselves. For all major corporations, the lesson is clear: if your technology is deemed strategically vital, your ownership structure and operational security will come under intense government review.

Ethical Firepower: LLMs and the Human Role in Lethal Decisions

Strike planning is not data entry; it directly precedes the potential use of lethal force. This brings the conversation squarely into the domain of responsible AI and autonomous weapons.

Our third line of inquiry—searching for "AI lethal autonomous weapons" AND "LLM oversight" OR "responsible AI guidelines" Pentagon—reveals the regulatory tightrope the military must walk. The core debate revolves around the 'human-in-the-loop' versus 'human-on-the-loop' continuum.

When Claude synthesizes data to suggest a viable strike package, where does the human responsibility truly begin? Is the operator simply confirming an AI-generated list, or is the AI presenting novel options the human might not have considered?

- Human-in-the-Loop: The AI suggests, and a human must actively approve every step. This is widely described as the expected standard for kinetic strikes.

- Human-on-the-Loop: The AI acts autonomously unless a human actively intervenes to stop it. This is the endgame many fear.
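The difference between the two modes comes down to what happens when the human says nothing. The sketch below is a deliberately generic illustration of that default, not a description of any real military or vendor system; the names `OversightMode` and `may_proceed` are hypothetical.

```python
from enum import Enum
from typing import Optional


class OversightMode(Enum):
    # Nothing proceeds without an explicit human approval.
    HUMAN_IN_THE_LOOP = "in_the_loop"
    # The recommendation proceeds unless a human explicitly vetoes it.
    HUMAN_ON_THE_LOOP = "on_the_loop"


def may_proceed(mode: OversightMode, human_decision: Optional[bool]) -> bool:
    """Decide whether an AI recommendation may proceed.

    human_decision: True = approved, False = vetoed, None = no response yet.
    """
    if mode is OversightMode.HUMAN_IN_THE_LOOP:
        # Silence counts as "no".
        return human_decision is True
    # On-the-loop: silence counts as "yes".
    return human_decision is not False
```

With no human response (`None`), the in-the-loop mode blocks and the on-the-loop mode proceeds, which is exactly the gap the two bullets describe.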

If the LLM is generating the *plan*, it is deeply involved in the 'Decide' phase of the OODA loop. The risk is that the sheer speed and persuasive logic of the AI output create a powerful automation bias: human commanders come to trust the suggestion implicitly, effectively delegating critical judgment to non-sentient code.

Implications for Governance and Auditing

For any regulated industry—finance, healthcare, or defense—the ability to audit an AI’s reasoning is paramount. LLMs are notoriously opaque "black boxes." If a strike goes awry, how do investigators determine if the fault lies with outdated training data, a flawed prompt, or a fundamental misinterpretation by the model? This lack of explainability is perhaps the greatest long-term threat to the responsible deployment of LLMs in critical infrastructure.
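Logging does not make a model explainable, but it can at least let investigators separate the three failure sources named above by pinning down which model version saw which prompt and produced which output. A minimal sketch, assuming a hypothetical `make_audit_record` helper and field names chosen for illustration only:

```python
import hashlib
import json
from datetime import datetime, timezone


def _sha256(text: str) -> str:
    """Hex digest used to fingerprint text without storing it in the log."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def make_audit_record(model_id: str, prompt: str, output: str, reviewer: str) -> dict:
    """Build a JSON-serializable record of one model interaction.

    All field names are illustrative assumptions, not a standard schema.
    """
    return {
        "timestamp_utc": datetime.now(timezone.utc).isoformat(),
        "model_id": model_id,            # which model and version answered
        "prompt_sha256": _sha256(prompt),  # what it was asked
        "output_sha256": _sha256(output),  # what it said
        "reviewer": reviewer,            # which human signed off
    }


record = make_audit_record("example-model-v1", "summarize report A", "summary text", "analyst-7")
print(json.dumps(record, indent=2))
```

Storing hashes rather than raw text is one possible design choice for sensitive material; the full prompt and output would still need to be retained somewhere access-controlled for the hashes to be useful in an investigation.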

Actionable Insights: Navigating the New AI Landscape

The utilization of Claude in real-world conflict zones serves as a flashing beacon for technology leaders across all sectors. The trajectory is clear: the most capable commercial AI tools will be adopted rapidly by the most demanding organizations.

For Businesses and Developers: Redefine Security

Actionable Insight 1: Assume Commercial AI Interoperability. Stop viewing internal and external AI stacks as separate, and plan for integrating third-party models via secure APIs. However, for any system dealing with proprietary, sensitive, or national security data, first verify the vendor’s security authorizations (FedRAMP and DoD Impact Levels are the defense-sector analogues) and data residency policies. If your data trains a model used by a potential adversary’s government, you have a severe risk exposure.

For Policy Makers and Defense Strategists: Focus on Provenance

Actionable Insight 2: Mandate Model Provenance and Red-Teaming. Before deploying commercial LLMs in sensitive roles, governments must demand transparent reporting on training data sources, synthetic data generation methods, and adversarial testing (Red-Teaming) results. Oversight must focus less on the model's *intent* and more on its verifiable *behavior* under extreme duress.

For Technology Ethicists: Shift the Focus to Speed Bias

Actionable Insight 3: Combat "Speed Bias." The primary ethical challenge is no longer just about bias in the output, but the *pressure to act quickly* that AI enables. Stakeholders must develop new protocols that mandate human verification buffers, even when the AI suggests the "obvious" or "fastest" path. Speed must never override due diligence.
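One way to picture a "human verification buffer" is a gate that rejects approvals arriving faster than a minimum review time. This is a hedged sketch under assumed names (`ReviewGate`, `min_review_seconds`); the threshold is a placeholder, and a time floor alone cannot prove that a reviewer actually deliberated.

```python
from dataclasses import dataclass


@dataclass
class ReviewGate:
    """Rejects approvals that arrive before a minimum review period has passed."""

    min_review_seconds: float

    def accept(self, presented_at: float, approved_at: float) -> bool:
        # Timestamps are seconds on any shared clock (e.g. time.monotonic()).
        elapsed = approved_at - presented_at
        return elapsed >= self.min_review_seconds


gate = ReviewGate(min_review_seconds=300.0)
print(gate.accept(presented_at=0.0, approved_at=10.0))   # approval came too quickly
print(gate.accept(presented_at=0.0, approved_at=300.0))  # minimum review time met
```

Passing timestamps in explicitly, rather than reading the clock inside the gate, keeps the rule easy to test and audit.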

Conclusion: The Age of Integrated Autonomy

The reported deployment of Anthropic’s Claude in frontline military planning signifies that the era of cautious, isolated military AI is over. We have entered the age of integrated autonomy, where commercial cutting-edge technology is bleeding directly into the defense apparatus at an unprecedented pace.

While this promises superior efficiency and decision support, it forces an urgent confrontation with fundamental questions of sovereignty, security vetting, and accountability in warfare. The geopolitical landscape is now inextricably linked to the integrity of proprietary algorithms. Understanding the context of these deployments—by tracing DoD adoption strategies and examining corporate security postures—is essential for anyone trying to predict the next decade of technological evolution, whether in Silicon Valley or the Pentagon.