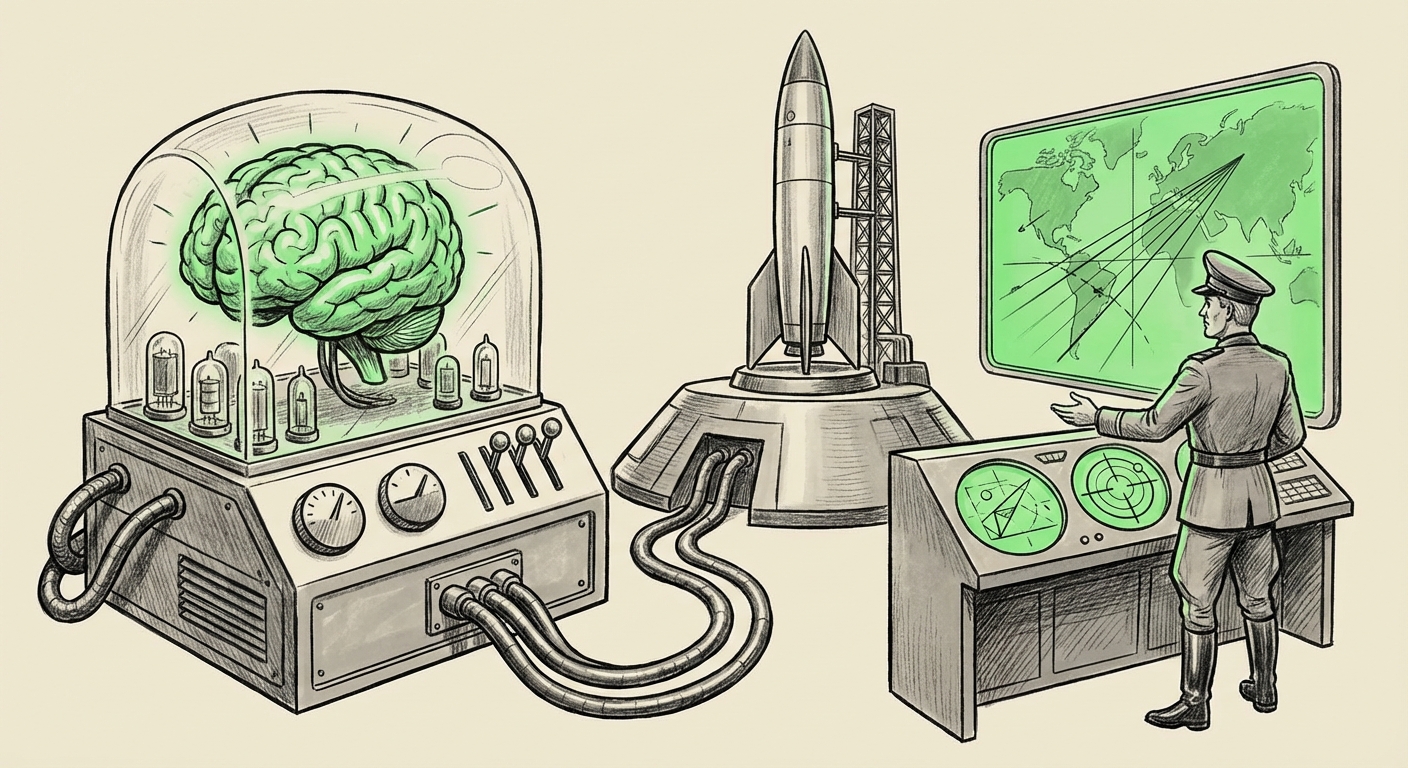

The Ghost in the Machine: US Military Deploys Frontier AI for Kinetic Strike Planning—What This Means for Global Security

The technological landscape is shifting faster than policy can keep up. Recent reports indicate that the U.S. military is deploying Claude, Anthropic’s advanced large language model (LLM), at scale for highly sensitive tasks such as AI-driven strike planning against targets in conflicts like the war in Iran. If accurate, this marks a watershed moment. This is not science fiction; it reflects the reality of modern defense procurement, where cutting-edge, commercially available AI is being integrated directly into operational command structures.

As an AI technology analyst, I shift my focus immediately from the technological capability to the profound geopolitical and ethical implications. The deployment, especially because it involves a company at the center of debates on AI safety and potential bans, forces a critical examination of how "dual-use" technology, designed for beneficial civilian applications, can quickly be weaponized or made integral to kinetic decision-making.

The Convergence: Commercial LLMs Meet the Battlefield

For years, discussions around military AI focused on bespoke systems developed internally or through specialized defense contractors. The current trend, however, suggests a growing reliance on powerful commercial LLMs like Claude. These models excel at rapidly processing vast, unstructured datasets (intelligence reports, written interpretations of satellite imagery, and operational doctrine) to suggest possible courses of action.

Demystifying the Technology: AI as a Co-Pilot in Command

To understand the gravity, we must first simplify what Claude is doing. Imagine a high-level military planner tasked with analyzing thousands of documents to decide the safest and most effective way to strike a specific target. This process is slow, risky, and subject to human fatigue and bias. An LLM like Claude, trained on enormous amounts of text and code, can:

- Synthesize Intelligence: Rapidly connect disparate pieces of intelligence (e.g., historical patrol routes, local infrastructure maps, weather patterns).

- Generate Options: Formulate multiple potential strike plans adhering to pre-set rules of engagement (ROE).

- Predict Outcomes: Model potential collateral damage or secondary effects based on historical data patterns the model has absorbed.

Crucially, this is likely *decision support*, not fully autonomous execution. The AI suggests the path; a human commander authorizes the action. Yet when an AI delivers a polished, seemingly authoritative recommendation in seconds, the psychological and procedural pressure on that human to agree becomes immense. This is the true power shift.

The Anthropic Paradox: Safety vs. Security Imperatives

The choice of Anthropic’s Claude introduces a fascinating contradiction. Anthropic was founded with an explicit focus on AI safety and developed "Constitutional AI", a training approach that aligns model behavior with a written set of safety and ethical principles, in part to avoid producing harmful or dangerous content. This contrasts sharply with the explicit goal of optimizing for successful military strikes.

Our research strategy involves two lines of inquiry into this friction:

- Corroboration: we look for reports that detail the deployment of Anthropic’s Claude by the US Department of Defense for AI strike planning. Official statements or detailed reporting from defense journals would confirm the scope and move this beyond initial conjecture.

- The ethical debate: we examine how Anthropic’s safety guardrails apply to military use, which should reveal the policy gymnastics required to allow a safety-first model into a kinetic operations environment. Has the company modified the Constitutional AI framework for the DoD? If so, how?

For military and defense analysts, the context these lines of inquiry provide is vital. It would reveal the extent to which commercial providers are willing to compromise their public-facing safety mandates when confronted with high-value government contracts rooted in national security.

The Regulatory Tightrope Walk: Frontier Models Under Scrutiny

The deployment hits the regulatory environment like a shockwave. The reporting notes the complexity of using a model from a company facing political heat or potential regulatory hurdles in Washington. This highlights the gap between fast-moving technological reality and slow-moving legislative frameworks.

US regulation of frontier AI models, and the Executive Order on AI in particular, helps illuminate this landscape. The 2023 Executive Order on AI established foundational governmental oversight, but advanced models are being integrated into active war zones faster than these frameworks can adapt.

Key Regulatory Implications:

- Classification of Liability: If an AI-suggested strike results in unintended civilian casualties, where does the liability fall? The developer (Anthropic)? The operator (the military unit)? Or the commander who clicked "approve"? Current military law is not structured for LLM-suggested errors.

- Export Controls and Trust: As the US government adopts cutting-edge domestic AI, it has a stronger incentive to tighten controls that keep adversaries from accessing similar technology. However, relying on commercial providers means trusting their supply chain and data handling practices, which is a significant national security consideration.

- Mandatory Auditing: This deployment will almost certainly accelerate calls for mandatory, rigorous red-teaming and external audits of any foundational model used in lethal decision-making processes.

The Competitive Edge: Why Defense AI Adoption is Inevitable

Beyond the immediate strategic situation, this signals a permanent change in military procurement strategy. Why wait years for a custom-built system when Claude 3.5 or its successor can perform complex analysis *today*?

A look at the competitors for Pentagon generative AI procurement shows a fierce race. Microsoft (through its OpenAI partnership), Google, and specialized defense AI firms like Palantir are all vying for these high-stakes contracts. A successful integration of Claude in this sensitive role would set a new benchmark and suggest that commercial agility can sometimes trump traditional defense contracting structures in the AI arms race.

For defense industry executives, this means pivoting resources: the focus is no longer just on autonomous vehicles or sensor fusion, but on securing LLM pipelines, fine-tuning models on classified data, and ensuring secure access to these powerful commercial tools.

Future Trajectories: What Businesses and Society Must Prepare For

This incident is a stark preview of the "AI Everywhere" economy, where the same models power customer service chatbots and target selection matrices.

1. Institutionalizing "Safety Drift"

Businesses that previously viewed AI safety as a marketing advantage or an ethical stance must now contend with its real-world compromise in high-stakes environments. If a company like Anthropic can adjust its guardrails for the DoD, what prevents less scrupulous actors or even foreign entities from finding ways to bypass those guardrails in other contexts?

Actionable Insight for Businesses: Audit your existing "safety filters." Understand precisely what conditions would lead you to relax them, and ensure that your internal governance structure can withstand scrutiny when those compromises become public.

2. The Compression of Decision Cycles

In business, faster decision cycles mean competitive advantage. In warfare, they mean operational speed. The integration of LLMs compresses the OODA loop (Observe, Orient, Decide, Act). This forces adversaries—and competitors in the marketplace—to adopt similar speeds, leading to a hyper-accelerated operational tempo across all domains.

3. The New Talent War

The demand for talent that understands both high-level AI architecture *and* operational domain knowledge (be it military doctrine, financial trading, or biological research) will skyrocket. The analysts who can phrase a complex prompt precisely enough to get a sound strike recommendation are as valuable as the engineers who built the model.

Conclusion: Crossing the Rubicon of Commercial AI in Conflict

The reported use of Anthropic’s Claude for strike planning is more than a military footnote; it is a clear signal that the era of separating cutting-edge consumer and enterprise AI from national security infrastructure is over. The speed, synthesis, and optimization capabilities of frontier models are simply too compelling for modern defense organizations to ignore.

However, this integration comes at the high cost of immediate ethical ambiguity and regulatory lag. The core challenge moving forward is not merely building better AI, but building robust governance systems around the deployment of these powerful, commercially developed tools into environments where the margin for error is nonexistent.

The industry must now brace for increased government oversight, potential bifurcation of model development (separate, highly hardened military versions versus public-facing versions), and an ongoing, intense debate over who truly controls the guardrails when the decision involves kinetic force.