The AI Infrastructure Revolution: How Modular Control Planes and Function Calling are Rewriting Deployment

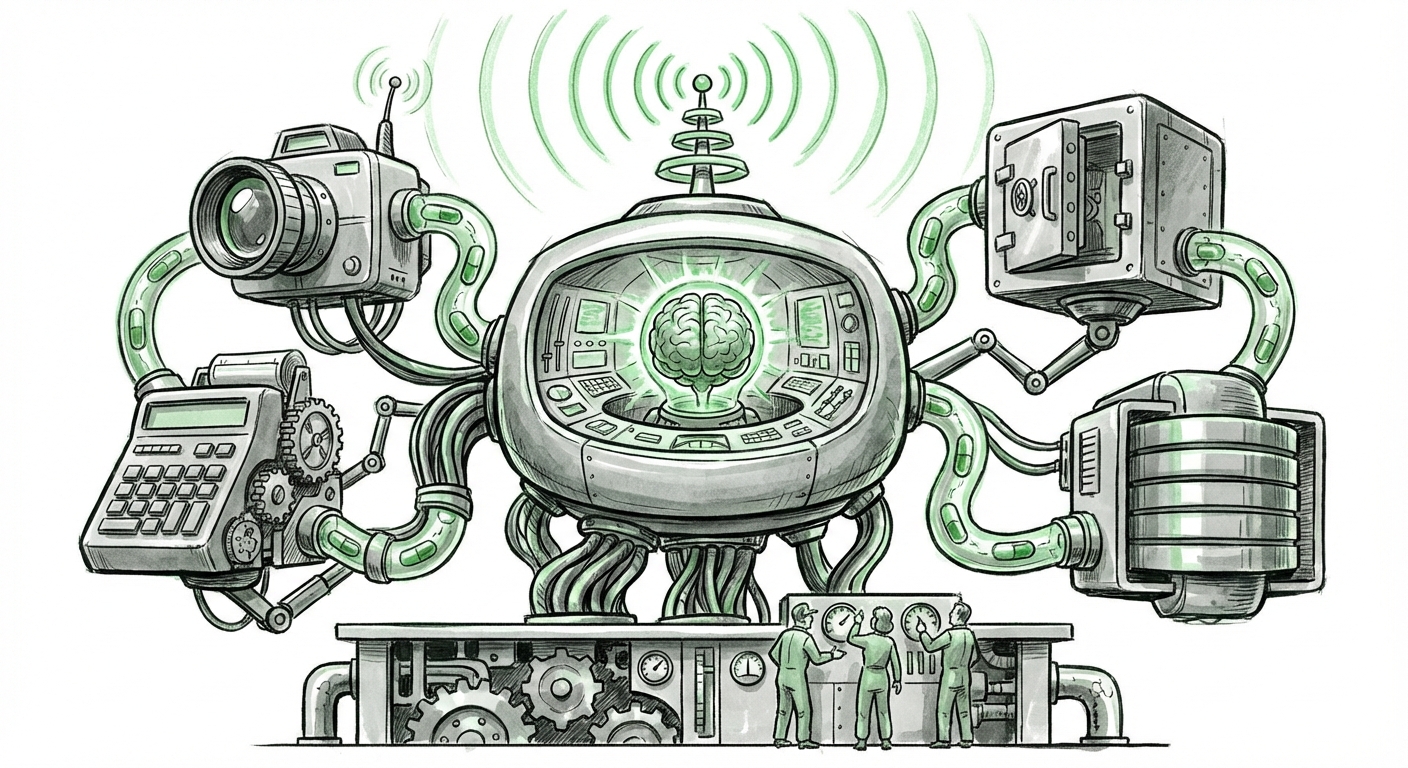

The world of Artificial Intelligence is moving beyond the hype cycle of just building bigger models. The current, more profound shift is happening in the engine room: **infrastructure**. How we package, deploy, manage, and connect these powerful systems is evolving rapidly from experimental setups into industrial-grade pipelines. A recent focus on Modular Control Plane (MCP) Architecture, where specialized servers are deployed as API endpoints and connected via standardized function calling, signals that AI is entering its maturity phase.

This development isn't just a technical footnote; it represents the industrialization and modularization of AI, essential for moving foundation models from interesting demos to reliable enterprise solutions. To understand the full scope of this revolution, we must look at the foundational standards, the management layer required, and the economic necessity driving this complexity.

The Rise of the AI ‘Toolbox’: Function Calling as the Universal Connector

Imagine you have a very smart assistant (the Large Language Model, or LLM). This assistant is great at talking and reasoning, but it can’t actually book a flight or check the current stock price itself. It needs tools. This is where Function Calling—or Tool Use—comes in. It has rapidly become the standardized language for allowing an LLM to interact with the outside world.

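In essence, Function Calling lets the LLM answer a user's prompt not with prose but with a structured instruction, usually a JSON object naming a function and the arguments to pass to it, that follows a schema the application declared in advance. The model never runs the function itself. Its output tells the calling application: "Hey, execute this external function using these specific parameters," and the application sends the result back for the model to use.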
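Here is a minimal Python sketch of that round trip. The tool name `get_stock_price`, its schema, the fake price data, and the hard-coded model output are all hypothetical. Every provider wraps tool calls in its own request and response format, so read this as an illustration of the pattern, not any vendor's API. The model emits JSON naming a function and its arguments, and the application, not the model, executes it.

```python
import json

# Hypothetical tool definition in the JSON Schema style most function-calling
# APIs accept; this is what would be sent to the model alongside the prompt.
TOOLS = {
    "get_stock_price": {
        "description": "Return the latest price for a ticker symbol.",
        "parameters": {
            "type": "object",
            "properties": {"ticker": {"type": "string"}},
            "required": ["ticker"],
        },
    }
}

def get_stock_price(ticker: str) -> dict:
    # Stand-in implementation; a real tool would call a market-data service.
    fake_prices = {"ACME": 123.45}
    return {"ticker": ticker, "price": fake_prices.get(ticker)}

# Maps tool names the model may emit to local Python callables.
HANDLERS = {"get_stock_price": get_stock_price}

def execute_tool_call(raw_model_output: str) -> dict:
    """Parse the model's JSON tool call and run the matching function."""
    call = json.loads(raw_model_output)
    name = call["name"]
    if name not in HANDLERS:
        raise ValueError(f"unknown tool: {name}")
    return HANDLERS[name](**call["arguments"])

# What a model might emit after the user asks "What is ACME trading at?"
model_output = '{"name": "get_stock_price", "arguments": {"ticker": "ACME"}}'
print(execute_tool_call(model_output))  # {'ticker': 'ACME', 'price': 123.45}
```

In a real loop, the returned dictionary would be serialized and sent back to the model so it can compose its final answer.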

This technical standard is crucial because it decouples intelligence from execution:

- Modularity: The core reasoning engine (the LLM) doesn't need to store every piece of current knowledge or every possible business process. It simply needs to know *how to ask* for that information.

- Specialization: External tools can be highly specialized—perhaps one endpoint handles rapid image recognition (a visual MCP server) and another handles complex SQL database querying. The LLM acts as the central conductor.

The concept of deploying "Public MCP servers as an API endpoint" relies directly on this functionality. The MCP *is* the external tool. Its entire purpose is to provide specialized, scalable services (like multimodal processing or complex reasoning) that the primary LLM workflow calls upon request. This trend suggests that the future of sophisticated AI isn't one massive model doing everything, but many cooperating specialized models managed by a centralized intelligence layer.

To understand the technical bedrock of this, it helps to study the specifications that make this communication possible. The major model providers describe tools in largely similar ways, typically as a function name, a natural-language description, and a JSON Schema for the parameters, which is why this pattern has become the accepted path toward interoperability. (For developers and architects, understanding the JSON Schema behind these calls is paramount for integration.)
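Because a model's output is just text, an application should check a tool call's arguments against the declared schema before executing anything. The sketch below hand-rolls a deliberately small subset of JSON Schema (required keys, undeclared keys, and primitive types) so it runs with only the standard library. The function name `validate_arguments` is hypothetical, and production code would more likely use a complete validator such as the third-party `jsonschema` package.

```python
# Maps the JSON Schema primitive type names handled here to Python types.
_TYPE_MAP = {
    "string": str,
    "integer": int,
    "number": (int, float),
    "boolean": bool,
}

def validate_arguments(args: dict, schema: dict) -> list[str]:
    """Return a list of problems; an empty list means the arguments pass.

    Checks only a small subset of JSON Schema: required keys, primitive
    types of top-level properties, and undeclared keys (treated as errors,
    i.e. stricter than JSON Schema's default, like additionalProperties: false).
    """
    errors = []
    props = schema.get("properties", {})
    for key in schema.get("required", []):
        if key not in args:
            errors.append(f"missing required argument: {key}")
    for key, value in args.items():
        if key not in props:
            errors.append(f"unexpected argument: {key}")
            continue
        declared = props[key].get("type")
        expected = _TYPE_MAP.get(declared)
        # bool is a subclass of int in Python, so reject it for numeric types.
        wrong_type = expected is not None and not isinstance(value, expected)
        bool_as_number = isinstance(value, bool) and declared in ("integer", "number")
        if wrong_type or bool_as_number:
            errors.append(f"{key}: expected {declared}")
    return errors

schema = {
    "type": "object",
    "properties": {"ticker": {"type": "string"}, "limit": {"type": "integer"}},
    "required": ["ticker"],
}
print(validate_arguments({"ticker": "ACME", "limit": 5}, schema))  # []
print(validate_arguments({"limit": "5"}, schema))
# ['missing required argument: ticker', 'limit: expected integer']
```

Returning every problem at once, rather than raising on the first, makes it easy to hand the full error list back to the model so it can correct its call.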

The Control Plane Mandate: Why MLOps Needs Dedicated Infrastructure

If AI is becoming modular—a collection of specialized, interconnected services—someone needs to manage the complexity. This management layer is the Control Plane, often referred to in the context of sophisticated MLOps (Machine Learning Operations).

For infrastructure teams, the challenge is scaling inference for these highly demanding models. Running a standard web server is one thing; running a server optimized for the massively parallel matrix multiplication a multimodal model needs is another. This necessity fuels the push for dedicated AI deployment platforms.

These dedicated platforms—the practical realization of the MCP concept—address critical enterprise needs that standard cloud VMs often struggle with:

- Latency and Throughput: Specialized hardware (like specific GPU configurations) and optimized serving software are needed to maintain low latency, especially when complex, multimodal inputs are involved.

- Governance and Versioning: When an LLM calls an external tool, that tool must be secure, auditable, and version-controlled. The MCP serves as the gatekeeper, ensuring that the "tool" being called hasn't been silently updated in a way that breaks the main workflow.

- Cost Optimization: At higher volumes, dedicated, high-utilization infrastructure often becomes cheaper than paying the per-call prices of public APIs, and private hosting may be required regardless when data sovereignty or compliance rules apply.
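The versioning concern in the governance point can be sketched as a registry that fingerprints each deployed tool definition, lets a workflow pin the version it was tested against, and records every authorization decision in an audit log. `ToolRegistry` and `fingerprint` are hypothetical names; real platforms typically pin container image digests or model-registry versions instead, but the idea is the same.

```python
import hashlib
import json
from dataclasses import dataclass, field

def fingerprint(definition: dict) -> str:
    """Stable short hash of a tool definition (sorted keys, so order doesn't matter)."""
    canonical = json.dumps(definition, sort_keys=True).encode()
    return hashlib.sha256(canonical).hexdigest()[:12]

@dataclass
class ToolRegistry:
    # name -> (definition, fingerprint) as currently deployed
    deployed: dict = field(default_factory=dict)
    # name -> fingerprint the calling workflow was tested against
    pinned: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)

    def deploy(self, name: str, definition: dict) -> None:
        self.deployed[name] = (definition, fingerprint(definition))

    def pin(self, name: str) -> None:
        self.pinned[name] = self.deployed[name][1]

    def authorize(self, name: str, caller: str) -> bool:
        """Allow the call only if the deployed tool matches the pinned version."""
        current = self.deployed[name][1]
        allowed = self.pinned.get(name) == current
        self.audit_log.append(
            {"tool": name, "caller": caller, "version": current, "allowed": allowed}
        )
        return allowed

registry = ToolRegistry()
registry.deploy("sql_query", {"parameters": {"query": "string"}})
registry.pin("sql_query")
print(registry.authorize("sql_query", caller="report-agent"))  # True
# A silent change to the tool's contract no longer matches the pinned fingerprint.
registry.deploy("sql_query", {"parameters": {"query": "string", "dry_run": "boolean"}})
print(registry.authorize("sql_query", caller="report-agent"))  # False
```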

This shift mirrors historical trends in software engineering, where managing databases or caching layers required specialized, tightly controlled services rather than simple virtualization. The Control Plane handles the "how" (deployment, scaling, security), allowing the core LLM to focus on the "what" (reasoning and decision-making). (For CTOs and decision-makers, this signals a necessary investment in specialized MLOps tooling that integrates deeply with existing enterprise security frameworks.)

The Great De-Monolithization: Composable AI Architectures

The move toward modular MCPs connected via function calling is the physical manifestation of a philosophical shift in AI design: Composable AI. The idea that a single, trillion-parameter model can solve every problem reasonably well is fading in favor of systems built from interconnected, specialized components.

Why compose? Accuracy, efficiency, and speed of development:

- Accuracy: A small, highly trained model focused only on identifying anomalies in X-rays will often outperform a generalist model asked to do the same task.

- Efficiency: You only pay the compute cost for the specific component needed. If a user just asks for the current weather, you don't invoke the massive visual processing MCP; you call a small, inexpensive weather tool.

- Speed of Development: Teams can swap out individual components—upgrading the text reasoning model or deploying a better image recognition MCP—without rebuilding the entire AI application stack.
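The efficiency argument amounts to a routing decision. The sketch below is hypothetical: it assumes the orchestrator has already worked out which capability a request needs (in practice, often the LLM's own job) and then picks the cheapest registered tool that offers it. The tool names and per-call costs are invented for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Tool:
    name: str
    capabilities: frozenset
    cost_per_call: float  # hypothetical unit cost

# Hypothetical catalog of specialized endpoints plus a generalist fallback.
CATALOG = [
    Tool("weather_api", frozenset({"weather"}), 0.0001),
    Tool("vision_mcp", frozenset({"image_analysis"}), 0.02),
    Tool("general_llm", frozenset({"weather", "summarize"}), 0.01),
]

def cheapest_tool_for(capability: str, catalog=CATALOG) -> Tool:
    """Pick the lowest-cost tool that advertises the needed capability."""
    candidates = [t for t in catalog if capability in t.capabilities]
    if not candidates:
        raise LookupError(f"no tool offers {capability!r}")
    return min(candidates, key=lambda t: t.cost_per_call)

print(cheapest_tool_for("weather").name)         # weather_api
print(cheapest_tool_for("image_analysis").name)  # vision_mcp
```

A weather question never touches the expensive vision endpoint, and swapping in a better or cheaper component is just a catalog change.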

This architecture creates intelligent 'Agent' systems. The central LLM serves as the agent’s brain, observing the task, formulating a plan, and then sequentially "calling functions" (i.e., invoking specific MCP endpoints) to execute that plan. This orchestration layer is perhaps the most complex and exciting part of modern AI infrastructure.
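The plan-and-call cycle can be sketched as a loop: the model sees the results so far and chooses either another tool call or a final answer, while the orchestrator executes each call and feeds the result back. To keep it runnable, a scripted function stands in for the LLM, and the flight tools are hypothetical stand-ins for MCP endpoints.

```python
from typing import Callable

# Hypothetical tools standing in for MCP endpoints.
def lookup_flight(origin: str, dest: str) -> dict:
    return {"flight": "XY123", "origin": origin, "dest": dest}

def book_flight(flight: str) -> dict:
    return {"confirmation": f"CONF-{flight}"}

TOOLS: dict[str, Callable[..., dict]] = {
    "lookup_flight": lookup_flight,
    "book_flight": book_flight,
}

def scripted_model(history: list[dict]) -> dict:
    """Stand-in for the LLM: picks the next step from the results seen so far."""
    results = [h["result"] for h in history]
    if not results:
        return {"tool": "lookup_flight", "args": {"origin": "SFO", "dest": "JFK"}}
    if len(results) == 1:
        return {"tool": "book_flight", "args": {"flight": results[0]["flight"]}}
    return {"final": f"Booked, confirmation {results[-1]['confirmation']}"}

def run_agent(model, tools, max_steps: int = 5) -> str:
    history: list[dict] = []
    for _ in range(max_steps):
        step = model(history)
        if "final" in step:
            return step["final"]
        result = tools[step["tool"]](**step["args"])  # invoke the endpoint
        history.append({"tool": step["tool"], "result": result})  # feed back to the model
    raise RuntimeError("agent did not finish within max_steps")

print(run_agent(scripted_model, TOOLS))  # Booked, confirmation CONF-XY123
```

The `max_steps` guard matters in practice: a real model can loop or stall, and the orchestrator, not the model, has to enforce a stopping point.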

Industry experience suggests that success in complex, multi-step reasoning tasks now depends heavily on the quality of this orchestration, not just the size of the underlying model. The ability to chain diverse models together reliably points to a future in which robust AI applications are network-based rather than monolithic.

The Business Drivers: Economics, Latency, and Sovereignty

While the technical elegance of MCP architecture is clear, the adoption is ultimately driven by tangible business realities. Deploying models via dedicated, managed APIs addresses three core pressures facing large organizations:

1. Cost Scalability

For high-volume use cases (imagine millions of customer service interactions or continuous automated content moderation), the per-request cost of querying a public, general-purpose API quickly adds up to a prohibitive total. Deploying proprietary or highly optimized MCPs allows businesses to benefit from bulk infrastructure purchasing and high utilization rates, leading to significant cost reductions over time. (For finance and infrastructure managers, the figure to watch is the break-even volume: the point at which fixed investment in optimized infrastructure yields better long-term ROI than variable public usage fees.)
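The break-even point is simple arithmetic. With a fixed monthly cost F for dedicated infrastructure, a public-API price p per request, and a self-hosted marginal cost c per request, self-hosting is cheaper once monthly volume N exceeds F / (p - c). The figures in this Python sketch are hypothetical placeholders, not quoted prices.

```python
def break_even_requests(fixed_monthly_cost: float,
                        api_price_per_request: float,
                        self_hosted_cost_per_request: float) -> float:
    """Monthly volume above which self-hosting beats a per-call API.

    Solves F + c*N = p*N for N, giving N = F / (p - c).
    """
    margin = api_price_per_request - self_hosted_cost_per_request
    if margin <= 0:
        return float("inf")  # self-hosting never pays off per request
    return fixed_monthly_cost / margin

# Hypothetical figures: $20,000/month for reserved GPUs and operations,
# $0.004 per public-API call, $0.0015 marginal cost per self-hosted call.
n = break_even_requests(20_000, 0.004, 0.0015)
print(f"{n:,.0f} requests/month")  # 8,000,000 requests/month
```

Below that volume the variable public fees are the cheaper option; above it, every additional request widens the savings.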

2. Latency Guarantees

In real-time applications, such as autonomous control, live translation, or high-frequency trading analysis, a few extra milliseconds of latency from a distant, shared cloud endpoint can be catastrophic. Deploying a dedicated MCP geographically closer to the application, or even on-premise, removes much of that network delay and makes it practical to offer the performance guarantees that mission-critical applications require.
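A latency guarantee is usually stated as a tail percentile (for example, 99% of requests under a budget) rather than an average. The sketch below applies the nearest-rank percentile method to hypothetical measurements to show how an endpoint can look acceptable on average yet miss its budget, which is the gap a closer, dedicated deployment is meant to close.

```python
import math

def percentile(samples_ms: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample covering pct% of all samples."""
    ordered = sorted(samples_ms)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]

def meets_budget(samples_ms: list[float], budget_ms: float, pct: float = 99.0) -> bool:
    """Check the tail percentile, not the mean, against the latency budget."""
    return percentile(samples_ms, pct) <= budget_ms

# Hypothetical measurements: a shared remote endpoint with a slow tail,
# and a nearby dedicated endpoint.
remote = [40.0] * 95 + [250.0] * 5
local = [12.0] * 99 + [30.0]
print(sum(remote) / len(remote), percentile(remote, 99))   # 50.5 250.0
print(meets_budget(remote, 100), meets_budget(local, 100))  # False True
```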

3. Data Sovereignty and Compliance

Many regulated industries (finance, healthcare, government) cannot send sensitive data to general-purpose third-party endpoints for processing. The MCP architecture allows organizations to host the specialized inference engines securely within their own compliance perimeter (private cloud or on-premise), while still leveraging the abstract, standardized interface of function calling.
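The sovereignty point can be sketched as a routing rule: the tool call itself stays the same, and only the endpoint that executes it depends on how sensitive the data is. The classification levels and both URLs below are hypothetical placeholders.

```python
from enum import Enum

class DataClass(Enum):
    PUBLIC = "public"
    INTERNAL = "internal"
    REGULATED = "regulated"  # e.g. patient or cardholder data

# Hypothetical endpoints: one inside the compliance perimeter, one external.
PRIVATE_ENDPOINT = "https://mcp.internal.example/vision"
PUBLIC_ENDPOINT = "https://api.vendor.example/vision"

def choose_endpoint(data_class: DataClass, allow_external: bool = True) -> str:
    """Keep anything above PUBLIC inside the perimeter.

    The tool call (function name plus JSON arguments) is identical either
    way; only the endpoint that executes it changes.
    """
    if data_class is DataClass.PUBLIC and allow_external:
        return PUBLIC_ENDPOINT
    return PRIVATE_ENDPOINT

print(choose_endpoint(DataClass.PUBLIC))     # https://api.vendor.example/vision
print(choose_endpoint(DataClass.REGULATED))  # https://mcp.internal.example/vision
```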

What This Means for the Future of AI Workflows

The convergence of standardized function calling and specialized, managed infrastructure (MCPs) points toward an AI ecosystem that is fundamentally more robust, reliable, and scalable than what we see today:

For Developers: Your job shifts from being a model fine-tuner to being a workflow designer. Success will depend on knowing which specialized tool (MCP) to call, when to call it, and how to stitch the outputs back together using LLM reasoning. Expect orchestration frameworks such as LangChain or Semantic Kernel, or their equivalents, to become standard operating procedure.

For Infrastructure Teams: The focus moves from standard VM provisioning to designing and managing high-density, specialized AI workloads. Understanding accelerators (GPUs, TPUs, ASICs) and optimized serving frameworks (like vLLM or TensorRT) becomes a core competency, since these teams are the engineers running these critical MCP servers.

For Business Leaders: AI initiatives become less risky. By adopting a modular architecture, you gain the agility to switch underlying models or specialized services without rewriting the entire application. This layered approach mitigates vendor lock-in and future-proofs investment against the next wave of foundational model breakthroughs.

In summary, the trend toward Modular Control Planes and standardized function calling is the key to the next era of AI adoption. It is the necessary bridge that transforms bleeding-edge research into dependable, industrial-strength software components.