The Great Pivot: Why Meta's New Applied AI Division Signals the End of AI Research in Isolation

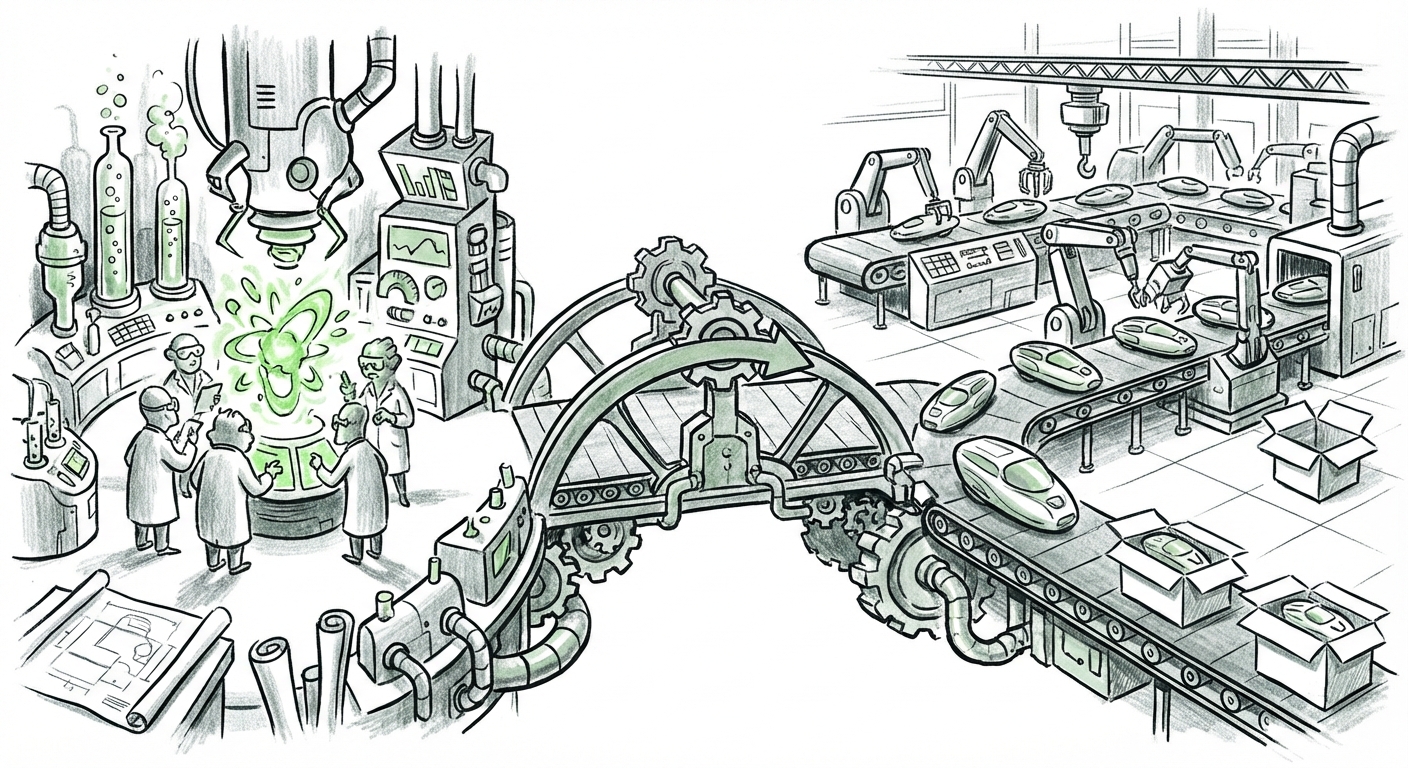

The world of Artificial Intelligence often feels divided. On one side, we have the glittering laboratories—the research centers where brilliant minds create foundation models capable of astonishing feats. On the other side, we have the messy, demanding reality of product development, where those models must be shrunk, optimized, and deployed to billions of users without crashing servers or bankrupting the company.

Recently, Meta (formerly Facebook) made a significant organizational move that blurs this line: the creation of a dedicated **Applied AI Engineering division**, as reported by the Wall Street Journal. This isn't just a title change; it's a strategic declaration that the most important work in AI today is not *discovery*, but *deployment*.

For tech strategists, engineers, and even curious consumers, this move by Meta provides a crucial lens through which to view the next wave of AI innovation. It tells us that the bottleneck has shifted. We have the powerful engines (the LLMs); now, we desperately need the world-class mechanics to put them into the cars that people actually drive every day.

The Shift: From Lab Bench to Production Line

For years, large tech firms maintained separate spheres. Research labs like Meta’s FAIR (Fundamental AI Research) focused on pushing the boundaries of what AI *could* do. Product teams then tried to take those breakthroughs and integrate them. This often led to slow, painful handoffs, in which a model that performed well in a controlled environment could fail badly when faced with the chaotic, high-volume traffic of Instagram or WhatsApp.

The new Applied AI Engineering division suggests Meta is collapsing this distance and bringing the two worlds closer together. It is a direct response to the industry's evolving needs, and outside analysts have noted the same trend: companies are recognizing an "engineering gap" between models that impress in the lab and systems that hold up in production.

What does 'Applied' really mean here? Imagine building the world's fastest race car engine (the research model). Applied AI Engineering is responsible for turning that engine into a reliable sedan that starts every morning, handles heavy traffic jams (high user load), and has a great infotainment system (a seamless user experience). It’s the discipline of making the theoretically possible practically viable.

The Engineering Imperative: Why Research Isn't Enough

Powerful models like Meta’s Llama series are excellent for setting performance benchmarks, but they are often too large, too slow, or too expensive to run constantly for everyone. This is where the new division steps in. Its core mission will likely center on:

- Optimization and Quantization: Making large models smaller and faster (for example, by storing their weights at lower numerical precision) so they can run efficiently on Meta’s massive infrastructure, drastically cutting computational costs (inference costs).

- Integration Layering: Building the secure, stable pipelines needed to connect raw AI output to specific product interfaces—whether that’s improving content recommendations on Facebook or generating instant translations in Messenger.

- Reliability and Safety at Scale: Ensuring that when an AI feature is live for a billion users, it adheres strictly to safety guidelines and doesn't suddenly generate problematic or nonsensical content.
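To make the quantization idea in the first bullet concrete, here is a minimal Python sketch of symmetric int8 quantization: each weight is divided by one shared scale factor and rounded to an integer in [-127, 127], so it fits in one byte instead of the four bytes of a 32-bit float. This is a textbook simplification chosen for illustration, not Meta's actual method; the function names are hypothetical, and production systems typically use per-channel or per-group scales, calibration data, and optimized kernels.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map float weights to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # The scale maps the largest-magnitude weight onto 127; guard against all-zero input.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer, then clamp in case of floating-point overshoot.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the int8 codes."""
    return [v * scale for v in q]


weights = [0.5, -1.27, 0.003, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
print(q, scale)
```

The trade-off shows up in the tiny weight 0.003, which rounds to zero: lower precision saves memory and bandwidth at the cost of some accuracy, which is why quantized models are typically re-evaluated before they are deployed.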

The Competitive Landscape: Following the Giants

Meta’s move did not happen in a vacuum. It is a necessary countermeasure in the ongoing AI arms race against Google and Microsoft. If competitors are already embedding their generative AI capabilities deeply into their core offerings, Meta cannot afford to lag in deployment speed.

Microsoft’s Copilot Strategy: Microsoft has shown one blueprint for rapid deployment by deeply integrating OpenAI's technologies (via Copilot) across Windows, Office, and Azure. Its organizational structure had to evolve rapidly to support these real-time integrations. Meta is likely modeling its new division to achieve similar integration velocity across its social graph.

Google’s Gemini Push: Similarly, Google is working aggressively to integrate Gemini across Search, Android, and Workspace. These companies understand that the user experience is now defined by the seamlessness of AI tools. The separation between a research team that produces a cutting-edge multimodal model and a product team that implements it is proving to be too slow for this pace of competition.

By establishing a dedicated Applied AI Engineering unit, Meta is signaling to the market and its own engineers that deployment speed is now a top metric for success, on par with achieving SOTA (State-of-the-Art) results in research papers.

The Herculean Task: Operationalizing LLMs in the Real World

The challenges facing this new division are not theoretical; they are intensely practical and expensive. Serving Large Language Models (LLMs) on a global platform like Meta’s is perhaps the greatest computational challenge facing the tech industry today, and it is where long-term AI ambitions collide with present-day engineering limits.

The Cost of Conversation

Running a single complex AI query might cost pennies. Multiply that by billions of daily interactions from billions of users, and those pennies quickly become unsustainable operating costs. An applied engineering team is tasked with delivering large, sustained gains in inference efficiency.
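A back-of-the-envelope calculation shows how quickly this adds up. The figures below are purely hypothetical (one cent per query and one billion queries per day) and are not Meta's numbers:

```latex
\begin{aligned}
\text{daily cost}  &= \$0.01/\text{query} \times 10^{9}\ \text{queries/day} = \$10^{7}/\text{day} \\
\text{annual cost} &= \$10^{7}/\text{day} \times 365\ \text{days} = \$3.65 \times 10^{9}/\text{year}
\end{aligned}
```

Under the same assumptions, halving the per-query cost through optimization would save roughly 1.8 billion dollars a year, which is why small efficiency gains matter so much at this scale.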

To put it simply: if an AI feature requires the entire system to "think hard" every time a user scrolls, the electricity bill alone would cripple the service. The applied engineers must find clever ways to precompute answers, run the largest models only when absolutely necessary, and use highly efficient custom hardware optimized for Meta’s own models (such as its in-house MTIA, or Meta Training and Inference Accelerator, chips).
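The idea of reusing precomputed answers and reaching for the expensive model only when necessary is often implemented as a model cascade. The sketch below is a hypothetical illustration, not a description of Meta's systems: `CascadeRouter`, the stand-in `small` and `large` model functions, and the 0.8 confidence threshold are all invented for the example. It checks a cache first, then a cheap model, and escalates to the expensive model only when the cheap model reports low confidence.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical model interface: takes a prompt, returns (answer, confidence in [0, 1]).
ModelFn = Callable[[str], tuple[str, float]]


@dataclass
class CascadeRouter:
    """Serve from cache, then a cheap model, escalating to an expensive model only if needed."""
    small_model: ModelFn
    large_model: ModelFn
    threshold: float = 0.8  # illustrative confidence cutoff, not a tuned value
    cache: dict[str, str] = field(default_factory=dict)
    stats: dict[str, int] = field(
        default_factory=lambda: {"cache": 0, "small": 0, "large": 0}
    )

    def answer(self, prompt: str) -> str:
        # 1. Cheapest path: reuse a previously computed answer.
        if prompt in self.cache:
            self.stats["cache"] += 1
            return self.cache[prompt]
        # 2. Try the small model first.
        text, confidence = self.small_model(prompt)
        if confidence >= self.threshold:
            self.stats["small"] += 1
        else:
            # 3. Escalate to the expensive model only when the small one is unsure.
            text, _ = self.large_model(prompt)
            self.stats["large"] += 1
        self.cache[prompt] = text
        return text


# Stand-in models for the example: the "small" one is only confident on short prompts.
def small(prompt: str) -> tuple[str, float]:
    return "short answer", (0.9 if len(prompt) < 20 else 0.3)


def large(prompt: str) -> tuple[str, float]:
    return "detailed answer", 0.99


router = CascadeRouter(small, large)
router.answer("hi")                                     # served by the small model
router.answer("hi")                                     # served from the cache
router.answer("explain quantum chromodynamics please")  # escalated to the large model
print(router.stats)
```

The unbounded dictionary cache is a simplification; a real deployment would need eviction and expiry, and care not to share personalized answers between users.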

This focus on optimization is critical for the future. If AI tools are too costly to operate widely, they will remain niche or locked behind high subscription paywalls. By focusing on applied engineering, Meta appears to be aiming to make advanced AI features accessible and affordable across its entire, free-to-use platform.

Bridging the Reality Gap: The Metaverse Connection

Perhaps the most compelling reason for Meta to double down on *applied* engineering right now lies in its long-term bet: Reality Labs and the Metaverse. The future vision of the Metaverse requires AI that can understand complex 3D environments, generate convincing virtual avatars, and manage real-time, naturalistic conversational agents.

These applications (virtual customer service agents, dynamic world-building tools, AI companions in VR) require AI systems to respond with very low latency. A delay of even a few hundred milliseconds in a virtual reality conversation can break the immersion. This specialized, high-stakes requirement justifies a dedicated, highly focused engineering division that can tailor models specifically for real-time, spatial computing.
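One way engineers reason about such a requirement is an end-to-end latency budget split across the stages of a single conversational turn. The sketch below is illustrative only: the stage names, their millisecond figures, the 300 ms budget, and the `budget_report` helper are all assumptions, not measurements from Meta.

```python
# Hypothetical per-stage latencies (ms) for one spoken turn with a VR assistant.
# These numbers are illustrative assumptions, not measurements.
STAGE_LATENCY_MS = {
    "speech_recognition": 70.0,
    "model_first_token": 110.0,
    "speech_synthesis_first_audio": 60.0,
    "network_round_trip": 40.0,
}


def budget_report(stages: dict[str, float], budget_ms: float) -> tuple[float, str]:
    """Return (headroom in ms, slowest stage); negative headroom means over budget."""
    total = sum(stages.values())
    slowest = max(stages, key=stages.get)
    return budget_ms - total, slowest


headroom, slowest = budget_report(STAGE_LATENCY_MS, budget_ms=300.0)
print(f"headroom: {headroom:.0f} ms, optimize first: {slowest}")
```

With these assumed numbers there are only 20 ms to spare, so a model that responds 50 ms more slowly would push the whole turn over budget; that is why model-level optimization matters so much for real-time experiences.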

Actionable Insights for Business and Technology Leaders

Meta's organizational shift is a bellwether for the entire technology sector. What lessons can other businesses draw from this strategic realignment?

1. If You Don't Have an Applied AI Team, Build One Now

If your company has successfully experimented with AI models in a sandbox environment but struggles to make them work reliably in production, you have hit the "Implementation Wall." The solution is usually organizational, not technical. You need dedicated teams whose KPIs (Key Performance Indicators) are tied directly to product uptime, latency, and cost-per-inference, not just model accuracy scores.
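As a rough illustration of what production-facing KPIs can look like, the sketch below computes a success rate (used here as a crude proxy for uptime), p95 latency, and cost per inference from a batch of request records. The `RequestRecord` schema, the per-request cost field, and the synthetic data are hypothetical; real teams would pull these figures from their own monitoring and billing systems.

```python
from dataclasses import dataclass


@dataclass
class RequestRecord:
    """One served inference request (hypothetical log schema)."""
    latency_ms: float
    succeeded: bool
    cost_usd: float


def production_kpis(records: list[RequestRecord]) -> dict[str, float]:
    """Compute production KPIs from a batch of request records."""
    if not records:
        raise ValueError("need at least one request record")
    n = len(records)
    latencies = sorted(r.latency_ms for r in records)
    # Nearest-rank p95: the value at 1-based rank ceil(0.95 * n), using integer arithmetic.
    rank = -(-95 * n // 100)
    return {
        # Share of successful requests, a simple stand-in for uptime.
        "success_rate": sum(r.succeeded for r in records) / n,
        "p95_latency_ms": latencies[rank - 1],
        "cost_per_inference_usd": sum(r.cost_usd for r in records) / n,
    }


# Twenty synthetic requests: latencies 10..200 ms, one failure, flat hypothetical cost.
records = [RequestRecord(10.0 * i, i != 7, 0.002) for i in range(1, 21)]
print(production_kpis(records))
```

Note that model accuracy does not appear in this dictionary at all; that absence is the point of tying an applied team's goals to how the system behaves in production.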

2. Talent Acquisition Must Evolve

The demand for pure AI researchers will remain high, but some of the most valuable hires going forward will be MLOps Engineers, Applied Scientists, and AI Infrastructure Specialists: the people who know how to containerize, monitor, secure, and scale models built by others.

3. Expect Faster Feature Rollouts

For users of Meta products (Facebook, Instagram, etc.), this organizational change means we should expect to see experimental AI features transition into stable, everyday features much faster than before. The friction between breakthrough and mainstream adoption is being systematically removed.

For society, this rapid productization raises important ethical considerations that this new applied team must handle. Speed is critical, but speed without guardrails is dangerous. The division will likely also be responsible for implementing and enforcing those guardrails at an unprecedented scale.

Conclusion: The Age of Engineering Dominance

The decision by Meta to formalize its Applied AI Engineering division is perhaps the clearest signal yet that the technological center of gravity in AI has moved. We are exiting the "Research Hype Cycle" and entering the "Engineering Deployment Cycle."

Building the foundational models of the last few years was the first hard part. Now the hard part is the relentless, grueling, essential work of turning those breakthroughs into reliable, cost-effective, and deeply integrated products used by the world. Meta is reorganizing to win this deployment war, aiming to ensure that its vast user base feels the direct impact of the billions of dollars it has invested in AI. The future of AI isn't just about what AI *can* learn; it’s about what engineering teams can successfully make it *do* for everyone, everywhere, instantly.

Corroborating Context and Further Reading

The analysis above is grounded in current industry trends concerning the operationalization of AI. For deeper context on this organizational pivot and the technical challenges involved, the following areas are worth exploring:

- The Industry Pivot: Understanding the broader move from pure research to engineering focus, often discussed in relation to bridging the gap between promising models and production viability.

- Competitive Alignment: Observing how key competitors like Microsoft and Google are structuring their teams to rapidly deploy foundational models like GPT and Gemini across their product suites.

- Scaling Hurdles: Researching the fundamental engineering problems—specifically LLM inference costs and latency—that dedicated Applied AI divisions are chartered to solve.

- Internal Strategy Tracking: Monitoring follow-up reports detailing the specific integration goals of this new unit, particularly concerning Meta's long-term vision for Reality Labs.