Qwen 3.5 and the Global AI Race: Decoding the Open-Weight Challenge to Proprietary Dominance

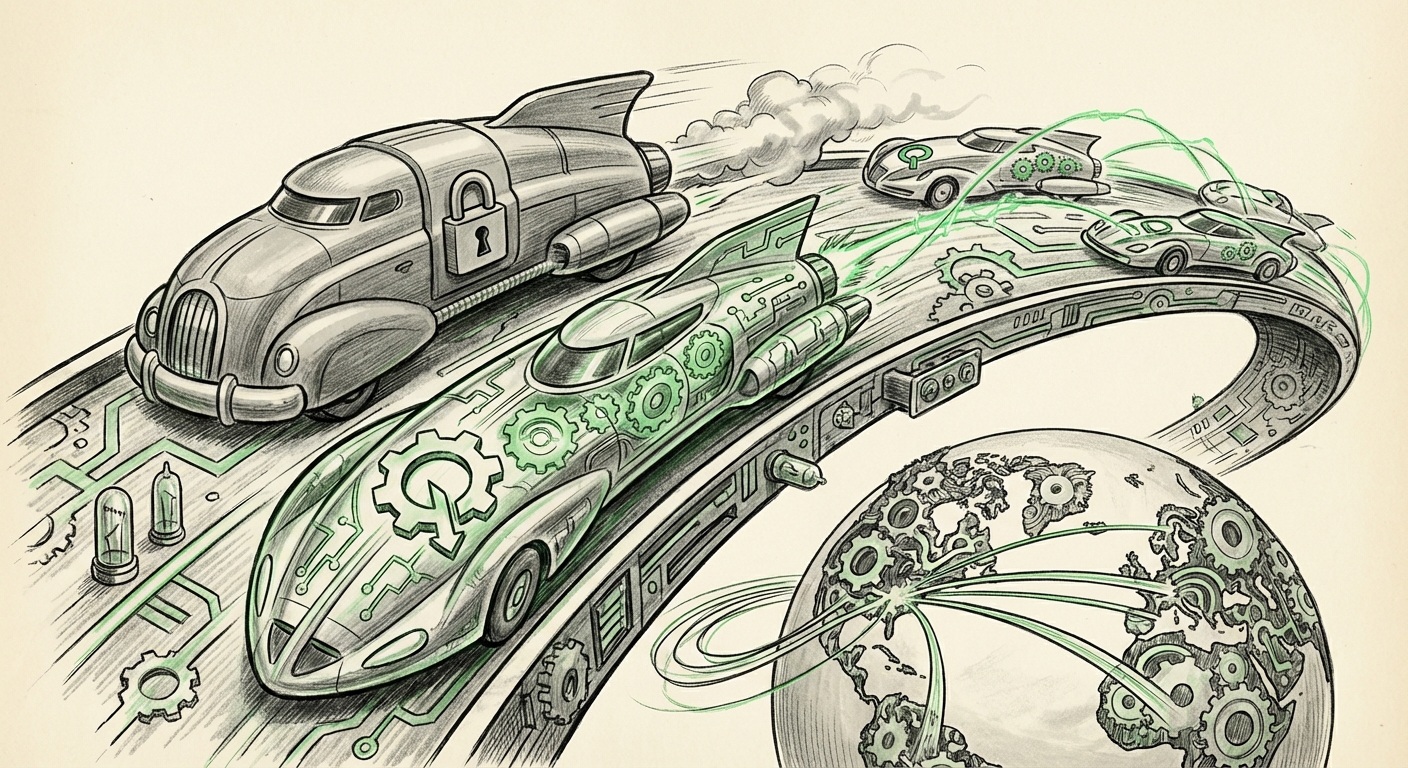

The artificial intelligence landscape is defined by speed. Just as established leaders seem to solidify their positions, a rapid iteration from another corner of the globe throws the entire hierarchy into question. The recent unveiling of Qwen 3.5 by the Alibaba team is precisely one of these seismic events.

For many observers, the conversation around frontier AI models has been dominated by a handful of US-based giants. However, Qwen 3.5 serves as a loud, clear signal: the global AI race is fierce, multilateral, and accelerating, driven significantly by powerful, often open-weight, challengers.

For analysts tracking these shifts, it is crucial to move beyond the headline performance claims. We must contextualize Qwen 3.5 within the broader technological, commercial, and strategic ecosystem. What does this release actually mean for the future of AI development and deployment?

The New Global Equilibrium: Performance Meets Accessibility

The core tension in today's AI market is the trade-off between absolute performance (usually found in closed models like GPT-4 or Claude 3) and accessibility (found in open-weight models like Llama or Mistral). Qwen 3.5 aggressively targets the middle ground, claiming parity or near-parity with proprietary models on standard benchmarks while keeping its weights open or readily available.

1. Validating the Performance Claims: The Benchmark Battleground

When a new model launches, the first question for any developer or researcher is: Can it actually do what they say it can? This is where rigorous, objective evaluation becomes non-negotiable. The technical viability of Qwen 3.5 must be verified against established standards.

We look to community-driven leaderboards to place Qwen 3.5 in context. These platforms aggregate performance scores across dozens of standardized tests, providing a comparatively neutral view of reasoning, coding, and knowledge recall capabilities. If Qwen 3.5 ranks highly here, it supports the claim that non-US labs are mastering the scale and training required for state-of-the-art (SOTA) performance. (See the ongoing comparisons found on the Hugging Face Open LLM Leaderboard for context on where such models sit.)

For the Engineer: A top score makes this model a credible candidate for production deployment, pending evaluation on your own workload. It weakens the justification for relying exclusively on expensive API calls to closed providers.

2. The Open-Weight Revolution: Unlocking Enterprise Agility

Perhaps the most significant implication of Qwen’s strategy is its commitment to open or open-weight distribution. While "open source" is a complex term in the LLM world, releasing weights—the foundational parameters of the model—allows enterprises to take control of their AI destiny.

For CTOs and Enterprise Architects, the move toward open-weight models is less about ideology and more about risk mitigation, cost control, and data sovereignty. Relying solely on a few proprietary APIs creates a single point of failure and subjects businesses to unpredictable price changes or sudden policy shifts.

Companies are increasingly pursuing hybrid strategies that combine proprietary APIs with self-managed models. The availability of a model like Qwen 3.5 means organizations can:

- Self-Host: Run the model securely within their private cloud or on-premises infrastructure, critical for highly regulated industries (finance, healthcare).

- Fine-Tune Aggressively: Customize the model deeply on proprietary data without sending that data to a third-party vendor.

- Optimize Costs: Once deployed, inference costs are often significantly lower than paying per-token for API access, especially at high volume.
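The cost argument in the last point can be checked with simple arithmetic. The sketch below compares a per-token API bill with a fixed self-hosting bill and finds the monthly volume at which the two are equal. Every function name and every number in it is a hypothetical placeholder, not a quoted price for Qwen 3.5 or any provider, and the model ignores real-world factors such as GPU utilization and engineering time.

```python
def api_monthly_cost(tokens_per_month: float, price_per_million_tokens: float) -> float:
    """Cost of sending all traffic to a pay-per-token API."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def self_host_monthly_cost(
    gpu_hourly_rate: float,
    gpu_count: int,
    fixed_ops_cost: float,
    hours_per_month: float = 730.0,
) -> float:
    """Cost of keeping a fixed GPU fleet running all month, plus a flat ops overhead."""
    return gpu_hourly_rate * gpu_count * hours_per_month + fixed_ops_cost


def break_even_tokens(price_per_million_tokens: float, self_host_cost: float) -> float:
    """Monthly token volume at which the API bill equals the self-hosting bill."""
    return self_host_cost / price_per_million_tokens * 1_000_000


if __name__ == "__main__":
    # Hypothetical inputs: replace with your own quotes and measurements.
    hosting = self_host_monthly_cost(gpu_hourly_rate=2.0, gpu_count=4, fixed_ops_cost=1000.0)
    threshold = break_even_tokens(price_per_million_tokens=5.0, self_host_cost=hosting)
    print(f"Self-hosting: ${hosting:,.0f} per month")
    print(f"Break-even volume: {threshold:,.0f} tokens per month")
```

Below the break-even volume the API is cheaper; above it, the fixed cost of self-hosting is spread over enough tokens to win. This is why the savings show up "especially at high volume".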

This competitive pressure forces the entire market to become more accessible, lowering the barrier to entry for sophisticated AI application development.

Future Implications: Architecture, Modality, and Geopolitics

The emergence of highly competent models like Qwen 3.5 is not just about better chatbots; it is about the evolution of the underlying technology and the shifting balance of global technological power.

3. The Architecture Race: Pushing Technical Frontiers

To leapfrog competitors, these teams are not just scaling up existing transformer models; they are innovating on efficiency and capability. We must look beneath the surface to understand the architectural secrets driving these performance gains.

Modern SOTA models leverage complex concepts like Mixture-of-Experts (MoE) architectures or novel attention mechanisms to achieve performance gains without a proportional increase in training cost or inference latency. When Qwen announces a major upgrade, it implicitly suggests they have either adopted or invented a breakthrough in these areas. (Investigating recent research summaries on SOTA architecture, such as those often found on platforms like Towards Data Science, helps decode *how* these models are being built.)
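To make the MoE idea concrete, here is a minimal, generic sketch of top-k expert routing in plain Python. It is an illustration of the general technique only, not a description of how Qwen 3.5 is built: the experts are toy scalar functions, the gate scores are passed in directly rather than learned, and all names are hypothetical.

```python
import math
from typing import Callable, List, Sequence, Tuple


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over the gate scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(gate_logits: Sequence[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and renormalize their weights to sum to 1."""
    probs = softmax(gate_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in chosen)
    return [(i, probs[i] / norm) for i in chosen]


def moe_forward(
    x: float,
    experts: Sequence[Callable[[float], float]],
    gate_logits: Sequence[float],
    k: int = 2,
) -> float:
    """Run only the selected experts and combine their outputs by gate weight."""
    return sum(weight * experts[i](x) for i, weight in top_k_route(gate_logits, k))


if __name__ == "__main__":
    # Four toy experts; only two of them execute for this input.
    toy_experts = [lambda v: v, lambda v: 2 * v, lambda v: 3 * v, lambda v: 4 * v]
    print(top_k_route([0.0, 2.0, 1.0, -1.0], k=2))
    print(moe_forward(3.0, toy_experts, [0.0, 2.0, 1.0, -1.0], k=2))
```

The point of the design is visible in `moe_forward`: the model holds four experts' worth of parameters, but each input pays the compute cost of only `k` of them. That is how total capacity can grow without a proportional increase in inference cost.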

Furthermore, the future is inherently multimodal. For Qwen 3.5 to be competitive, it must integrate vision, potentially audio, and complex data processing natively. This capability dictates its future use cases—moving beyond text generation into complex data analysis, diagnostics, and advanced robotics integration.

4. Geopolitical Undercurrents in the Cloud Wars

The strategic origin of Qwen—Alibaba—cannot be ignored. This is not just a tech story; it is an economic and geopolitical one. In a world increasingly focused on technological self-sufficiency, the development of world-class foundational models by non-Western entities ensures a diversified supply chain for critical AI infrastructure.

Alibaba Cloud uses models like Qwen as key differentiators against global competitors like AWS and Azure. For investors and geopolitical analysts, tracking Qwen’s progress reveals the investment priorities and strategic depth of major Asian technology players in securing leadership positions in the next computing paradigm. These models become tools not only for internal development but for securing global market share in cloud services and enterprise AI integration. (Reporting from major financial news outlets on Alibaba’s cloud strategy confirms this commercial thrust.)

Actionable Insights for Tomorrow’s AI Leader

What must technical leaders and business strategists do now that the competition is this dynamic?

For AI Engineers and Developers: Diversify Your Toolkit

Insight: Avoid vendor lock-in at all costs. Stop assuming that only one or two proprietary APIs cover your needs. Treat models like Qwen 3.5, alongside the latest from Llama or Mistral, as first-class citizens in your R&D pipeline. Benchmarking should always be model-agnostic.

Actionable Step: Dedicate 20% of your current model testing budget to evaluating open-weight alternatives that match your specific task (e.g., code generation vs. creative writing). If Qwen 3.5 excels in your niche, begin planning a transition path that keeps the model self-contained.
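One way to keep benchmarking model-agnostic is to treat every model as a plain function from prompt to completion, so the same test cases run against an open-weight deployment and a proprietary API alike. The sketch below assumes that framing. The `Model` type, the exact-match metric, and the stub models are all hypothetical stand-ins; in practice each entry would wrap a real client for whichever model you are testing, and the metric would match your task.

```python
from typing import Callable, Dict, List, Tuple

# Any model is just "prompt in, completion out", regardless of vendor or hosting.
Model = Callable[[str], str]
Case = Tuple[str, str]  # (prompt, expected answer)


def exact_match_accuracy(model: Model, cases: List[Case]) -> float:
    """Fraction of cases where the model's answer matches the expected one."""
    if not cases:
        raise ValueError("at least one test case is required")
    hits = sum(
        1
        for prompt, expected in cases
        if model(prompt).strip().lower() == expected.strip().lower()
    )
    return hits / len(cases)


def rank_models(models: Dict[str, Model], cases: List[Case]) -> List[Tuple[str, float]]:
    """Score every model on the same cases and sort best-first."""
    scores = {name: exact_match_accuracy(fn, cases) for name, fn in models.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Stub models standing in for real clients (hypothetical names and behavior).
    answers = {"2+2": "4", "capital of France": "Paris"}
    candidates: Dict[str, Model] = {
        "stub_open_weight": lambda prompt: answers.get(prompt, ""),
        "stub_proprietary_api": lambda prompt: "4",
    }
    task_cases = [("2+2", "4"), ("capital of France", "Paris")]
    for name, score in rank_models(candidates, task_cases):
        print(f"{name}: {score:.0%}")
```

Because the harness never inspects where a model comes from, adding a new candidate means registering one more function, and the ranking reflects your own task rather than a public leaderboard.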

For CTOs and Business Strategists: Embrace Hybrid Deployment

Insight: Data governance and cost efficiency are now achievable with leading performance. The gap between closed and open models is closing faster than projected, making proprietary dependence a potentially costly strategic error.

Actionable Step: Develop an internal "AI Core Policy" that mandates testing and potential adoption of open-weight models for all non-sensitive internal applications within the next two quarters. This hedges against future vendor price shocks and ensures compliance flexibility.

For Investors and Analysts: Look Beyond the Horizon

Insight: The investment focus is shifting from *who trained the biggest model* to *who can most efficiently deploy the best model*. The success of open-weight models like Qwen creates massive opportunity in the infrastructure layer (GPU optimization, model serving platforms, specialized fine-tuning services).

Actionable Step: Evaluate companies based on their multi-model compatibility. Firms that can seamlessly integrate and optimize models from different providers (US, Europe, China) will exhibit greater resilience and adaptability in the long term.

Conclusion: The Age of Inevitable Competition

The arrival of Qwen 3.5 is more than just another weekly update; it is a clear marker in the maturation of the global AI ecosystem. It tells us three things unequivocally:

- Performance is Decentralizing: The technical barriers to creating world-class LLMs are falling, spreading capability across continents and institutions.

- Openness is a Strategic Weapon: Open-weight releases democratize access but also force proprietary leaders to justify their closed ecosystems based on innovation speed or unique data moats, not just raw performance.

- The Pace Will Not Slow: The expectation for yearly, or even quarterly, leaps in capability is now the baseline for operating in the AI industry.

The future of AI deployment will not be monolithic. It will be a mosaic of specialized, high-performing, and often self-managed models, with Qwen 3.5 standing as a powerful testament to this new, intensely competitive, and ultimately more accessible, era.