Supreme Court AI Copyright Decision: Why the Fight Over Human Authorship Is Just Beginning

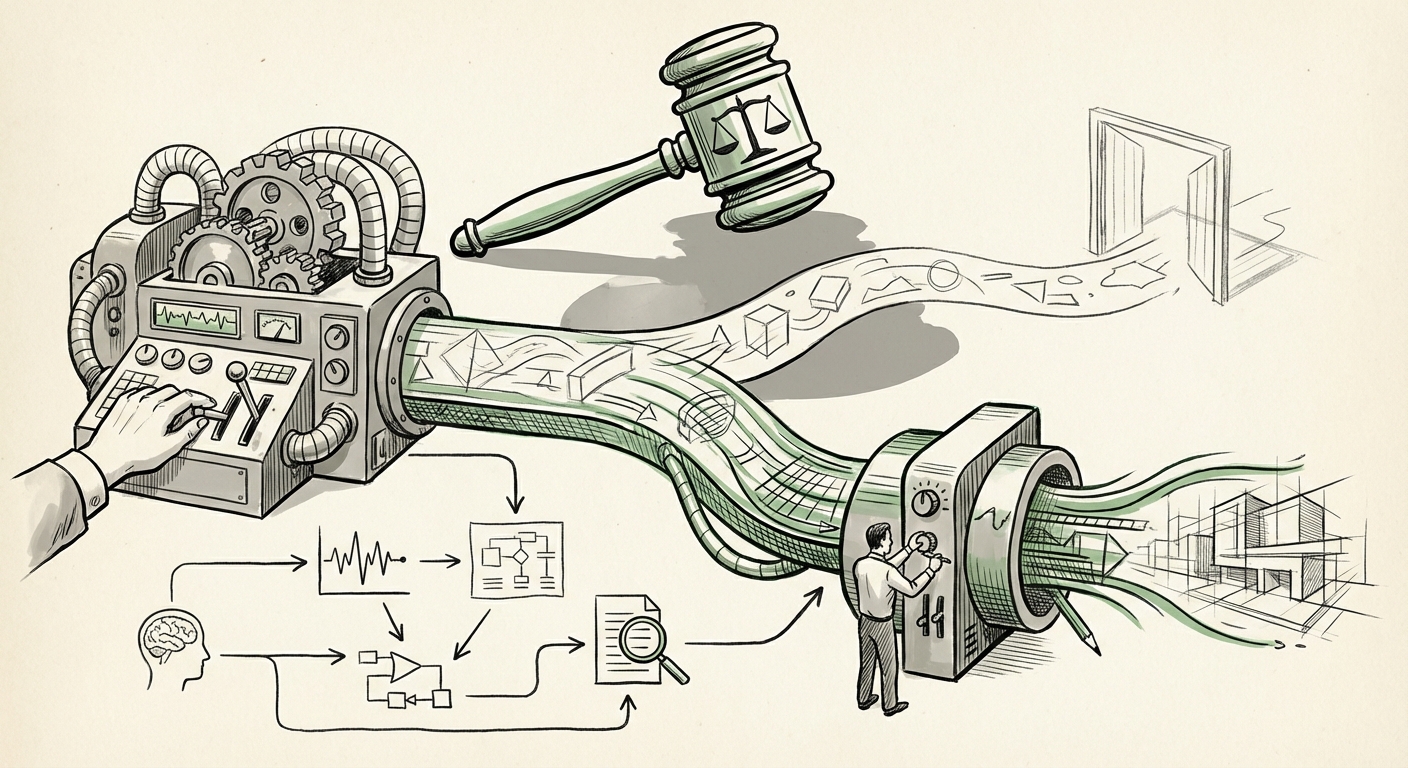

The recent refusal by the US Supreme Court to recognize an artificial intelligence system working without human guidance as the sole author of a creative work sounds like a landmark decision. After all, if a machine cannot own its work, doesn't that clarify the messy landscape of intellectual property in the age of generative AI? As an analyst, I must report that the reality is far more nuanced: this ruling settled the most extreme case but deliberately sidestepped the most pressing real-world questions facing creators and tech companies today.

This decision, which affirmed the long-standing principle that copyright requires human authorship, effectively closes the door on the concept of "AI as author." However, by refusing to elaborate on what constitutes sufficient human contribution when an AI tool is used, the court has left a massive regulatory vacuum. This vacuum doesn't just leave creators guessing; it freezes investment, complicates licensing agreements, and sets the stage for years of subsequent, detailed litigation.

The Narrow Scope: Why This Ruling Settles Very Little

The case involved Stephen Thaler, who sought copyright protection for an image, "A Recent Entrance to Paradise," generated autonomously by his AI system, the "Creativity Machine." The court’s stance was firm: the US Copyright Act reserves protection for the "fruits of intellectual labor" that "are founded in the creative powers of the mind." A machine, lacking a mind in the human sense, cannot meet this threshold. This confirms the Copyright Office's existing policy.

But for the vast majority of AI-generated content produced today—the images created via detailed prompts, the code assisted by GitHub Copilot, the marketing copy refined by large language models (LLMs)—the legal status remains ambiguous. Consider the spectrum of creation:

- Pure AI Output (The Thaler Case): No human input beyond turning the machine on. Result: No copyright.

- AI-Assisted Output (The Current Reality): A user spends hours refining prompts, selecting outputs, editing images, and structuring text. Is the human effort—the selection, arrangement, and refinement—enough to secure the copyright?

Legal analysts studying the implications of this ruling suggest that future litigation will immediately pivot to testing the boundaries of that second category. We are moving from a debate about machine autonomy to a debate about human ingenuity in orchestration. The critical question, and the focus of much legal commentary on the "human authorship requirement," is where the "spark of creativity" ignites: in the prompt itself, or in the subsequent modifications?

The Legal Fault Line: Prompt Engineering as Authorship

For the creative economy, the core threat is the inability to secure ownership over one's work. If a marketing agency uses an LLM to draft a major campaign and the resulting content is deemed uncopyrightable because the human input wasn't sufficiently "creative," the agency loses its primary asset—its intellectual property.

This uncertainty directly impacts the valuation of AI startups and the licensing models of stock content providers. As experts analyze the fallout, they point to the growing need for regulatory clarity on **prompt engineering**. Is a highly specific, iterative prompt structure akin to an architect’s detailed blueprint, or is it merely an instruction? If the latter, then vast amounts of commercially produced content may technically reside in the public domain the moment they are created.

This regulatory ambiguity forces businesses into defensive postures. They must now prioritize internal documentation, logging the exact iterative steps taken between human and machine, to defend any future claim. According to observers tracking the ruling's impact on the creative industries, this lack of clear guidance is already causing friction in licensing negotiations, where ownership certainty is paramount.
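To make the documentation requirement concrete, here is a minimal Python sketch of an iteration log that records who did what at each step. The class and field names (`WorkLog`, `IterationStep`, the `actor` and `action` values) are hypothetical illustrations, not an established schema; no court or copyright office has specified what such a record must contain.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class IterationStep:
    """One hypothetical log entry: who acted, what they did, and when."""
    actor: str   # "human" or "model"
    action: str  # e.g. "prompt", "generate", "select", "edit"
    detail: str  # prompt text, output identifiers, or a description of an edit
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


@dataclass
class WorkLog:
    """Ordered record of the human/machine iterations behind one work."""
    work_id: str
    steps: list[IterationStep] = field(default_factory=list)

    def record(self, actor: str, action: str, detail: str) -> None:
        if actor not in ("human", "model"):
            raise ValueError(f"unknown actor: {actor!r}")
        self.steps.append(IterationStep(actor, action, detail))

    def human_steps(self) -> list[IterationStep]:
        return [step for step in self.steps if step.actor == "human"]

    def to_json(self) -> str:
        # asdict() also converts the nested IterationStep objects.
        return json.dumps(asdict(self), indent=2)


# Example: one prompt, one generation, then human selection and editing.
log = WorkLog("campaign-draft-01")
log.record("human", "prompt", "Product shot, teal palette, low camera angle")
log.record("model", "generate", "4 candidates: out-01 to out-04")
log.record("human", "select", "Kept out-03 for its composition")
log.record("human", "edit", "Recolored background, cropped to 4:5, added logo")
print(len(log.human_steps()))  # 3
```

Logging the model's steps alongside the human ones is deliberate: a record of human actions alone would say little about how much the machine contributed.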

Global Divergence: The International Legal Patchwork

Technology does not respect national borders, but copyright law certainly does. When the US Supreme Court provides only a highly constrained ruling, the global technology sector must look elsewhere for direction. This comparative analysis is crucial for any company operating internationally.

While the US firmly anchors copyright to natural persons, other jurisdictions have explored different pathways. For instance, the United Kingdom has long provided for "computer-generated works": under section 9(3) of the Copyright, Designs and Patents Act 1988, the author of such a work is taken to be the person by whom the arrangements necessary for its creation are undertaken. Though this concept has not been fully tested in the context of modern deep learning models, it provides a potential legislative off-ramp that the US currently lacks.

Furthermore, the European Union’s comprehensive approach, particularly through the AI Act (adopted in 2024) and related IP discussions, signals a more structured, risk-based regulatory framework. If the US legal system remains mired in common law interpretation for years, we could see a significant divergence where AI innovation flows toward jurisdictions offering clearer paths to commercial IP protection, even if that protection is mediated rather than absolute. Comparing the US, UK, and EU approaches to copyright in AI-generated content is therefore essential for future technology scouting.

The AI Developer’s Dilemma: Input vs. Output

The public discourse often focuses on who owns the output. However, for the engineers and AI labs building the foundational models, the immediate concern often lies with the input.

While the Supreme Court protected human creators by denying AI authorship, it did nothing to resolve the massive class-action lawsuits alleging that current LLMs were trained unlawfully on billions of copyrighted works without permission or compensation. This is where developers' complaints that copyright law is hindering innovation tend to concentrate after the decision. If developers cannot establish legal certainty over their training data usage (fair use arguments notwithstanding), the pace of foundational model training could slow considerably due to litigation risk.

The current ruling is a double-edged sword for developers. On one hand, it simplifies the output ownership question (it defaults to "human"). On the other hand, it increases pressure to prove that the *process* used to create the output—the model itself—was legally sound from the start. Expect future technical roadmaps to heavily emphasize data provenance, synthetic data generation, and transparent opt-out mechanisms for rights holders, driven less by moral imperative and more by legal necessity.
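As an illustration of what a transparent opt-out mechanism might look like at the data-pipeline level, the Python sketch below drops training records whose source domain appears on an opt-out list and keeps the excluded records for audit. The registry `OPTED_OUT_DOMAINS` and the record format are assumptions for illustration; real opt-out signals take many forms, and matching on domains alone is a simplification.

```python
from urllib.parse import urlparse

# Hypothetical opt-out registry: domains whose rights holders have asked to be
# excluded from training data. Real opt-out signals vary and are not this simple.
OPTED_OUT_DOMAINS = {"photo-agency.example", "news.example.org"}


def is_opted_out(url: str, opted_out: set[str]) -> bool:
    """True if the URL's host is an opted-out domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in opted_out)


def filter_corpus(records: list[dict], opted_out: set[str]) -> tuple[list[dict], list[dict]]:
    """Split records into (kept, excluded); the excluded list supports auditing."""
    kept, excluded = [], []
    for record in records:
        if is_opted_out(record["source_url"], opted_out):
            excluded.append(record)
        else:
            kept.append(record)
    return kept, excluded


records = [
    {"id": 1, "source_url": "https://photo-agency.example/img/42"},
    {"id": 2, "source_url": "https://blog.example.net/post"},
    {"id": 3, "source_url": "https://cdn.news.example.org/a.jpg"},
]
kept, excluded = filter_corpus(records, OPTED_OUT_DOMAINS)
print([r["id"] for r in kept], [r["id"] for r in excluded])  # [2] [1, 3]
```

Returning the excluded records, rather than silently discarding them, is what turns the filter into evidence of provenance: it documents what was left out and why.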

Future Implications: The New Creative Contract

What does this regulatory environment mean for the next five years of technology and creativity? We are entering an era defined by "Co-Creation Contracts."

1. Redefining "Substantial Contribution"

Businesses must immediately establish internal standards for AI usage that emphasize human control. This isn't about writing a five-word prompt; it’s about documented creative direction. Contracts with freelance creators, software developers, and agencies must explicitly state what percentage of the final work must be demonstrably human-authored or human-modified to qualify for ownership transfer. Failing to define this internally risks forfeiting ownership claims altogether, leaving the work effectively in the public domain.
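One hypothetical way a contract could make "percentage human-modified" measurable for text is to compare the AI draft with the final version word by word. The sketch below uses Python's standard `difflib.SequenceMatcher` for this; the function name and the metric itself are assumptions, and neither the courts nor the Copyright Office have endorsed such a ratio. It also captures only edits, not the selection and arrangement the authorship debate turns on.

```python
import difflib


def human_modified_share(ai_draft: str, final_text: str) -> float:
    """Fraction of words in final_text not carried over verbatim from ai_draft.

    A crude, hypothetical proxy: it counts edits only and ignores selection,
    arrangement, and the creative weight of individual changes.
    """
    draft_words = ai_draft.split()
    final_words = final_text.split()
    if not final_words:
        return 0.0
    matcher = difflib.SequenceMatcher(a=draft_words, b=final_words, autojunk=False)
    # Matching blocks are in-order runs of words unchanged between the versions;
    # the last block difflib returns is a zero-size sentinel.
    carried_over = sum(block.size for block in matcher.get_matching_blocks())
    return 1 - carried_over / len(final_words)


share = human_modified_share("the quick brown fox", "the quick red fox jumps")
print(round(share, 2))  # 0.4
```

A contract might then set a threshold on a value like this, but choosing that threshold is a business and legal judgment the code cannot settle.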

2. The Rise of AI Auditing and Provenance Tools

If the law requires proof of human intervention, the market will supply tools to prove it. We will see an explosion in provenance tracking software—digital watermarking, metadata tagging, and blockchain-based ledgers that timestamp creative iterations between human input and machine generation. These tools will become as vital as encryption for securing IP in hybrid workflows.
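The core idea behind tamper-evident provenance ledgers can be sketched without a blockchain: each entry stores the SHA-256 hash of the previous entry, so altering any past record breaks the chain. The `ProvenanceLedger` class below is a hypothetical, single-machine illustration. It has no distributed consensus, and its timestamps come from the local clock, so on its own it would prove far less than an externally anchored record.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(entry: dict) -> str:
    # Canonical JSON (sorted keys, fixed separators) so equal entries hash equally.
    payload = json.dumps(entry, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class ProvenanceLedger:
    """Hypothetical append-only, hash-chained log of creative iterations."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, actor: str, content_hash: str, note: str) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {
            "index": len(self.entries),
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "content_hash": content_hash,  # hash of the artifact, not the artifact
            "note": note,
            "prev_hash": prev,
        }
        entry["hash"] = _digest(entry)  # computed before the "hash" key exists
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check each link to the previous entry."""
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


ledger = ProvenanceLedger()
ledger.append("human", sha256_hex(b"prompt v1"), "initial prompt")
ledger.append("model", sha256_hex(b"<generated image bytes>"), "candidate output")
ledger.append("human", sha256_hex(b"<retouched image bytes>"), "manual retouch")
print(ledger.verify())  # True

ledger.entries[1]["note"] = "rewritten after the fact"
print(ledger.verify())  # False
```

Storing hashes of the artifacts instead of the artifacts themselves keeps the ledger small and lets a creator prove a file existed at a given step without disclosing it.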

3. Investment in "Low-Autonomy" Models

Investors might shy away from fully autonomous creative AIs, as their output is commercially difficult to monetize without guaranteed IP. Instead, capital will flow to "co-pilot" technologies—tools that demonstrably augment human capacity, making the human operator measurably faster or more capable, thereby strengthening the human authorship claim over the final product.

4. Litigation as Standard Practice

The initial ruling, while definitive on the extreme, guarantees ongoing legal battles on the edges. Every major creative endeavor utilizing generative AI will likely face a challenge to its copyright validity until appellate courts or Congress step in to create a clear statutory framework. This means legal departments, not just R&D teams, will be central to AI strategy.

Actionable Insights for Technology Leaders

For CTOs, Chief Legal Officers, and creative directors, the message is clear: proactive governance beats reactive defense.

- Mandate Documentation Over Output: Do not reward sheer volume of AI generation. Reward the rigor of the human process. Create internal protocols requiring detailed logging of all prompts, iterations, filtering decisions, and post-generation edits for any work intended for commercial protection.

- Review Input Licensing Exposure: Engage with legal counsel to assess model dependency. If your core business relies on outputs from models trained on questionable datasets, begin establishing dual-track strategies that use proprietary, clean-data models where IP risk is highest.

- Engage with Policy Makers: The status quo is unsustainable for mass commercial deployment. Industry consortia must continue pushing legislative bodies to define what level of "creative control" over an AI output constitutes sufficient human authorship to satisfy the law, potentially offering a middle ground similar to the UK's approach but tailored for modern diffusion models.

- Invest in Human Skill Specialization: The value proposition shifts from *generating* content to *directing* generation. Training programs should focus less on basic model use and more on advanced prompt engineering, aesthetic curation, and complex post-production editing, transforming human operators into certified "AI Directors."

The Supreme Court has drawn a clear line in the sand: the machine does not enter the kingdom of authorship. But the vast, fertile territory stretching between that line and legitimate human endeavor remains unclaimed. Navigating this ambiguity will be the defining challenge for intellectual property in the generative AI decade. Success will belong not to those who leverage AI most aggressively, but to those who can most convincingly prove their indispensable human hand guided the machine along the way.