The AI Strike Force: Analyzing Military Adoption of Anthropic's Claude and the Future of Algorithmic Warfare

The introduction of advanced Generative AI (GenAI) into the highest echelons of military operations marks a seismic shift in defense technology. Recent reports indicating that the US military is leveraging Anthropic’s Claude LLM for AI-driven strike planning in active combat theaters, despite the controversy surrounding the platform, force an urgent global reckoning. This isn't just about faster software; it’s about embedding sophisticated reasoning models into decisions involving lethal force.

As AI technology analysts, our job is to look past the immediate headlines and examine the underlying vectors driving this adoption, the inevitable friction points, and what this means for the next decade of technological governance.

The Velocity of Defense AI Adoption: From Sandbox to Battlefield

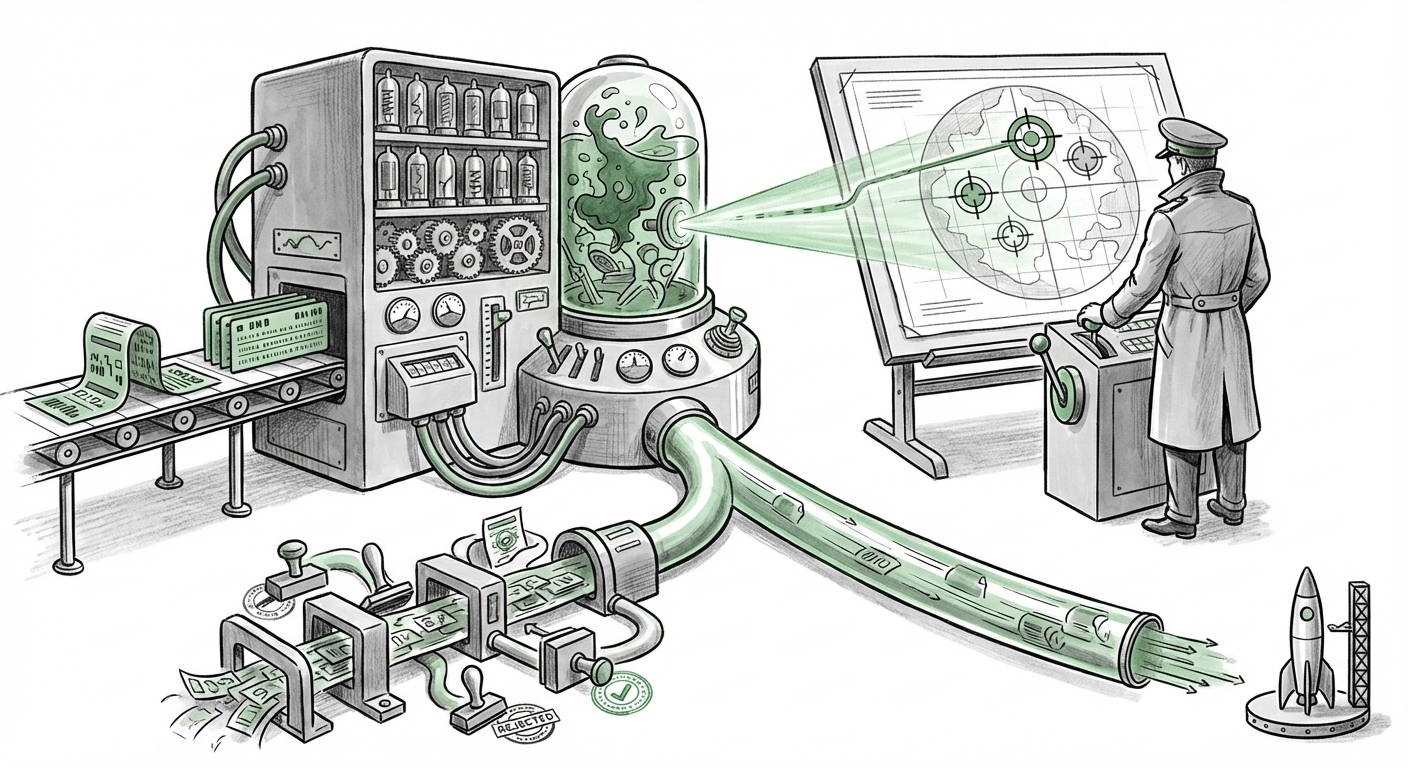

For years, discussions around military AI centered on classified projects, drones, and logistics optimization. The key difference now is the nature of the tool: Large Language Models (LLMs). These models excel at synthesizing vast, unstructured data—intelligence reports, open-source information, geospatial data, and targeting doctrine—to generate coherent, actionable plans. This capacity compresses the traditional "Observe, Orient, Decide, Act" (OODA) loop dramatically.

Speed Over Security: The New Imperative

The pressure to deploy effective AI rapidly is immense, especially in protracted conflicts. If an LLM can draft a complex strike package—complete with collateral damage estimations and kinetic parameters—in minutes rather than hours of specialized staff work, the operational advantage is undeniable. This adoption speed is driven by two main forces:

- Data Overload: Modern warfare generates an overwhelming volume of signals intelligence. Humans struggle to synthesize this volume quickly enough. LLMs act as hyper-efficient analysts.

- Competitive Pressure: The perceived necessity to match or surpass the AI capabilities of strategic rivals means that slow, bureaucratic procurement processes are being bypassed in favor of rapid integration, even with commercially available tools.

This integration confirms a broader trend: the line between cutting-edge commercial AI and defense applications is vanishing. Military organizations are no longer waiting for bespoke, decades-long development cycles; they are adapting the best available generalized models for specific, high-stakes tasks.

The Contradiction: Vetting and The Alleged "Ban"

The report’s reference to using Anthropic’s Claude, the very model associated with a supposed "US government ban," introduces a fascinating layer of regulatory confusion. Understanding this contradiction is crucial for grasping the current state of AI governance:

- The Vetting Challenge: How does a highly capable, closed-source model—one that often undergoes extensive red-teaming for civilian risks (like generating dangerous code or misinformation)—receive the necessary security clearances for battlefield planning? This suggests that specific, hardened versions of these models, likely running on isolated, secure government enclaves (rather than public APIs), are being utilized. The vetting process for *data ingress and model grounding* in defense contexts must be radically different from consumer safety checks.

- Regulatory Ambiguity: The alleged "ban" likely relates not to the core technology itself but to external regulatory friction, such as concerns over Anthropic’s significant foreign investment, export controls on underlying technology, or specific data handling compliance issues. If the DoD is actively using the technology, it signals either that the ban was temporary or mischaracterized, or that the national security imperative overrides immediate compliance concerns pending future legal clarification. This ambiguity creates a precarious operating environment for both defense contractors and AI developers.

For business leaders, this highlights a key lesson: **Security compliance in defense is becoming fractured.** Procurement teams must navigate a landscape where the technology they are told to avoid in one context might be mission-critical in another, revealing a significant gap between public policy statements and operational reality.

The Ethics of Algorithmic Targeting: Human-on-the-Loop

The most profound implications lie in the ethical and legal sphere. When an LLM assists in strike planning, it moves from being a word processor to an integral part of the *decision-making apparatus* for kinetic action. This demands clarity on the level of human oversight required.

Defining Autonomy Levels

Military technology policy traditionally separates systems based on human involvement:

- Human-in-the-Loop (HITL): The system suggests, but a human must actively approve every step.

- Human-on-the-Loop (HOTL): The system executes actions autonomously unless a human intervenes within a specific timeframe.

- Human-Out-of-the-Loop (HOOTL): Full machine autonomy in target selection and engagement (currently subject to heavy policy restrictions and ongoing international debate).
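The practical difference between these three levels comes down to what happens when the human says nothing. The sketch below is a generic illustration of that default behavior, not a description of any fielded system; the names `Oversight` and `may_proceed` are hypothetical and introduced only for this example.

```python
from enum import Enum
from typing import Optional


class Oversight(Enum):
    HITL = "human-in-the-loop"
    HOTL = "human-on-the-loop"
    HOOTL = "human-out-of-the-loop"


def may_proceed(mode: Oversight, human_decision: Optional[bool]) -> bool:
    """Return True if a proposed action may proceed under the given mode.

    human_decision: True = approved, False = vetoed,
    None = no response before the review window closed.
    """
    if mode is Oversight.HITL:
        # Silence is not consent: only an explicit approval counts.
        return human_decision is True
    if mode is Oversight.HOTL:
        # The action proceeds by default; only an explicit veto stops it.
        return human_decision is not False
    # HOOTL: no human input is consulted at all.
    return True
```

Under this framing, a missing human response blocks the action in HITL but lets it through in HOTL, which is why the choice of mode matters most when reviewers are overloaded or short on time.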

If Claude is used for strike planning, it is likely operating in a sophisticated HOTL or HITL capacity for the planning phase. However, the risk is "automation bias"—the human planner trusts the LLM’s synthesized analysis too readily, accepting its suggested targets and parameters without sufficient independent critical review. An LLM, however advanced, can still hallucinate facts or misinterpret ambiguous intelligence data, leading to catastrophic errors when deployed at scale.

The future requires explicit, publicly vetted guidelines on how LLM outputs in targeting are validated. Is the military auditing the model’s reasoning trace and cited sources (where these are accessible), or is it simply treating the output as an expert memo?

Implications for the Commercial AI Sector

This defense adoption reshapes the trajectory of foundational AI models across the board. For businesses developing or selling AI tools, the implications are twofold:

1. Dual-Use Dilemma and Corporate Responsibility

Anthropic, founded on principles of safety and responsible AI deployment, is now directly involved in lethal decision support. This reinforces the "dual-use" nature of all powerful AI. Businesses must proactively define their "red lines." If a model can optimize logistics, it can optimize supply chain disruption. If it can plan strikes, it can plan sophisticated cyberattacks. Companies need robust internal export controls and explicit policy statements governing sales to defense, even for non-lethal applications.

2. The Requirement for Trustworthy and Explainable AI (XAI)

The DoD’s reported reliance on Claude for actionable intelligence mirrors the commercial sector's growing demand for XAI. In business, explainability is needed for decisions such as loan approvals or medical diagnoses. In warfare, it is needed to ensure accountability for lethal decisions. Future enterprise LLMs will be judged not just on performance benchmarks, but on their ability to produce verifiable audit trails for every recommendation they generate. This pushes research heavily towards making the "black box" of neural networks more transparent.
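One modest, enterprise-side reading of "verifiable audit trail" is a tamper-evident log of what the model was asked, what it answered, which sources it was given, and who reviewed the result. The sketch below assumes a simple hash-chained list; the record fields and the function names `append_record` and `verify` are hypothetical. It makes the record of a recommendation checkable; it does not explain the model's internal reasoning.

```python
import hashlib
import json


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same body always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(log: list, prompt: str, output: str, sources: list, reviewer: str) -> dict:
    """Append one recommendation to the log, chained to the previous record."""
    prev_hash = log[-1]["hash"] if log else "0" * 64
    body = {
        "prompt": prompt,
        "output": output,
        "sources": sources,
        "reviewer": reviewer,
        "prev_hash": prev_hash,
    }
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def verify(log: list) -> bool:
    """Return True only if no record has been altered, removed, or reordered."""
    prev_hash = "0" * 64
    for record in log:
        body = {key: value for key, value in record.items() if key != "hash"}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True
```

Because each record embeds the hash of the one before it, editing an earlier entry after the fact causes `verify` to fail for the whole chain.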

Future Trajectories: What Comes Next?

The integration of Claude into strike planning is a leading indicator for the next wave of enterprise AI transformation:

1. AI-Augmented Command and Control (C2)

Expect LLMs to move from planning support to real-time C2 augmentation. This means AI systems processing incoming battlefield data and advising commanders on resource allocation, troop movements, and counter-strategy dynamically. This requires models trained specifically on military tactical doctrine, vastly different from general internet data.

2. Standardization of Defense LLM Benchmarks

To address the vetting concerns and the regulatory friction, governmental bodies will be forced to rapidly standardize benchmarks for military-grade LLMs. These benchmarks will need to test robustness against adversarial inputs specific to conflict scenarios and verify adherence to the Laws of Armed Conflict (LOAC) in model training and output generation.

3. Geopolitical Arms Race in Foundational Models

This development is a signal flare to geopolitical competitors. The nation that successfully integrates secure, high-performing LLMs across logistics, intelligence, and kinetic operations will possess a distinct strategic advantage. This inevitably accelerates global investment in domestic foundational model development, possibly leading to more fragmentation between closed, government-vetted models and open-source, commercially focused models.

Actionable Insights for Leaders

Whether you lead a tech startup, a large enterprise, or govern policy, these developments demand proactive adaptation:

- Audit Your AI Supply Chain: Identify where your organization uses foundational models that have high-stakes dual-use potential. Understand the provider’s relationship with government/defense entities and any associated regulatory risks (the Anthropic case shows these risks are immediate, not theoretical).

- Mandate Explainability Protocols: For any critical business decision supported by AI (e.g., supply chain failover, regulatory compliance checks), mandate that the AI output is accompanied by a traceable reasoning path. Treat the model's output as a draft requiring human validation, not a final decree.

- Invest in Model Hardening: Defense adoption proves that even with initial guardrails, commercial models will be aggressively adapted. Businesses must move toward proprietary fine-tuning or RAG (Retrieval-Augmented Generation) systems that ground AI outputs in verified internal data, reducing reliance on the often-unpredictable generalization capabilities of public-facing LLMs.
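To make the RAG recommendation concrete, here is a minimal sketch of the grounding step for an ordinary business query. It assumes a toy keyword-overlap retriever in place of a real embedding index, and the names `tokenize`, `retrieve`, and `build_prompt` are hypothetical. The resulting prompt would then be sent to whichever model the organization uses; that call is deliberately left out.

```python
import re


def tokenize(text: str) -> set:
    # Lowercase word and number tokens; punctuation is discarded.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: dict, k: int = 2) -> list:
    """Return up to k document ids ranked by token overlap with the query."""
    query_tokens = tokenize(query)
    scored = []
    for doc_id, text in documents.items():
        overlap = len(query_tokens & tokenize(text))
        if overlap > 0:
            scored.append((overlap, doc_id))
    # Highest overlap first; ties broken alphabetically by id.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for _, doc_id in scored[:k]]


def build_prompt(query: str, documents: dict, k: int = 2) -> str:
    """Build a prompt that restricts the model to the retrieved sources."""
    ids = retrieve(query, documents, k)
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in ids)
    return (
        "Answer using only the sources below and cite their ids. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )


docs = {
    "policy-7": "Failover to the backup supplier requires sign-off from the regional manager.",
    "memo-2": "The cafeteria menu changes every Monday.",
}
prompt = build_prompt("Who must sign off on supplier failover?", docs)
```

Citing source ids in the prompt also gives a human reviewer something specific to check, which ties the grounding step back to the explainability protocol above.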

The integration of Claude into strike planning is a defining moment. It collapses the timeline between theoretical AI capability and practical, high-consequence application. The future of technology deployment is now inextricably linked to the future of global security, demanding unprecedented alignment between innovation speed and ethical accountability.