The AI Power Bill: Why Tech Giants Pledging to Pay for Energy Marks a New Era of Infrastructure Accountability

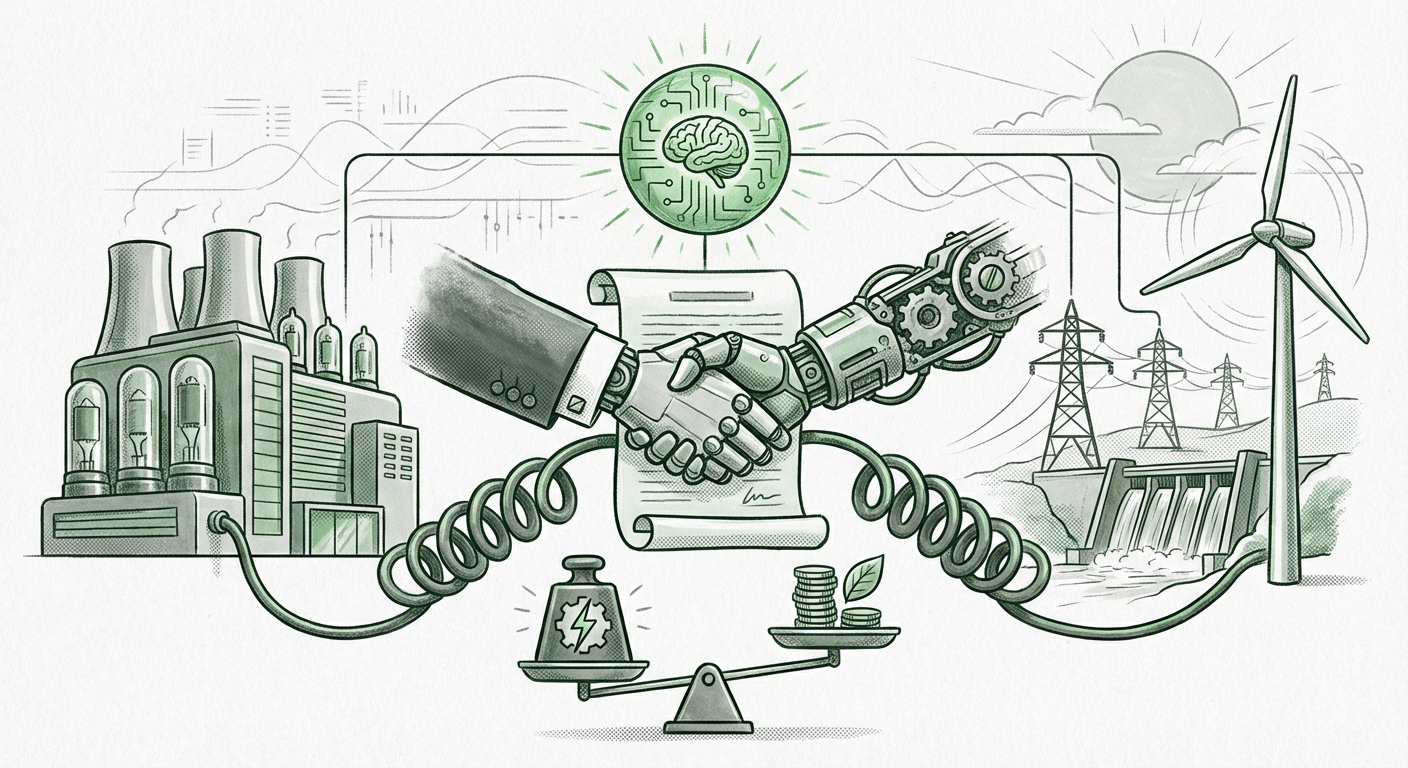

The artificial intelligence revolution is exhilarating, promising breakthroughs in every sector from medicine to manufacturing. Yet, beneath the surface of stunning demos and massive investment lies a looming, tangible cost: power. The computational resources required to train and run Large Language Models (LLMs) are unprecedented, turning data centers into industrial-scale power sinks. This reality was starkly underscored by a recent, voluntary pledge from tech titans—including Google, Microsoft, Meta, Amazon, and OpenAI—to cover the electricity costs of their own AI data centers following discussions with the White House.

While this pledge is *non-binding*, its significance is profound. It represents the moment when the physical infrastructure demands of AI moved from being an internal operational headache to a visible topic of national policy concern. For those tracking technology trends, this isn't just about who pays the utility bill; it's about the future viability, sustainability, and regulatory path of artificial intelligence itself.

The Scale of the Thirst: Quantifying AI's Energy Appetite

To understand the weight of this pledge, we must first grasp the sheer scale of the energy consumption. AI, particularly the generative AI that powers chatbots and complex image generators, demands significantly more electricity than traditional cloud computing. That demand arises in two main phases: training and inference.

Training a cutting-edge LLM can involve processing trillions of data points across thousands of specialized chips for months, consuming as much energy as hundreds of homes use in a year. Analyses of LLM energy consumption trends in 2024 often describe the projected growth as exponential. If AI adoption continues on its current trajectory, some analysts warn that data centers could consume a significant percentage of global electricity generation within the next decade. (This context helps explain why the White House stepped in: it is a major energy security issue, not just a corporate footnote.)
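To make the "hundreds of homes" comparison concrete, here is a back-of-envelope sketch. Every input is a hypothetical assumption chosen for illustration (2,000 chips at 700 W for 90 days, a facility overhead factor of 1.2, and a round 10.5 MWh per household per year); none of these figures comes from the pledge or from any specific training run, and the estimate ignores CPUs, networking, and storage.

```python
# Back-of-envelope estimate of training energy. Every input below is a
# hypothetical assumption chosen for illustration, not a measured value.

def training_energy_mwh(num_chips, watts_per_chip, days, pue=1.2):
    """Facility-level energy for a training run, in megawatt-hours.

    pue (power usage effectiveness) scales chip energy up to cover cooling
    and other facility overhead; 1.2 is an assumed value.
    """
    hours = days * 24
    chip_energy_wh = num_chips * watts_per_chip * hours
    return chip_energy_wh * pue / 1_000_000  # Wh -> MWh


def equivalent_homes(energy_mwh, home_mwh_per_year=10.5):
    """Number of homes whose annual electricity use equals energy_mwh.

    10.5 MWh/year is an assumed round figure for one household.
    """
    return energy_mwh / home_mwh_per_year


if __name__ == "__main__":
    energy = training_energy_mwh(num_chips=2000, watts_per_chip=700, days=90)
    print(f"Training energy: {energy:,.0f} MWh")
    print(f"Equivalent homes for a year: {equivalent_homes(energy):,.0f}")
```

Under these assumptions the run lands at a few thousand megawatt-hours, which is the annual usage of a few hundred homes. Changing any single input (more chips, a longer run, a less efficient facility) moves the result proportionally.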

For the average user or small business owner, this translates to a simple fact: the engine of tomorrow’s intelligence requires enormous fuel today. The pledge signals that the industry recognizes that these energy costs are no longer minor overhead; they are a primary input cost that must be budgeted for explicitly, moving them out of the realm of general operational expenditure and into a dedicated line item for AI expansion.

The Hardware Bottleneck: Why New Chips Demand More Power

The energy demand spike is inextricably linked to hardware innovation, particularly Graphics Processing Units (GPUs) and specialized AI accelerators. Companies like Nvidia have delivered chips, such as the H100 and the forthcoming Blackwell architecture, that offer breathtaking performance gains for AI tasks. However, this performance comes at a steep thermal and electrical price. (Analyses of how AI hardware such as Nvidia's H100 and Blackwell affects grid stability note that these chips can draw 700 to 1,000 watts *per chip*, significantly more than a traditional server CPU.)

For infrastructure planners, this means capacity can no longer be planned as if all servers were alike: each additional *AI-optimized* server strains the local power grid far more than a conventional one. This concentration of massive power draw in specific geographic zones, often near major fiber routes or existing power substations, creates immediate problems for the utility companies responsible for grid stability.
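A rough sketch of how per-chip draw adds up to a grid-scale load follows. The 700 W and 1,000 W per-chip figures come from the range quoted above; the eight accelerators per server, the 2,000 W of other server components, the 1,000-server cluster, and the 1.2 facility overhead factor are all hypothetical assumptions.

```python
# Rough sizing of an AI cluster's electrical load. Server layout, overhead,
# and cluster size are illustrative assumptions, not vendor specifications.

def server_power_kw(accelerators, watts_per_accelerator, other_watts):
    """Power of one server: accelerators plus CPUs, memory, fans, and so on."""
    return (accelerators * watts_per_accelerator + other_watts) / 1000


def facility_power_mw(num_servers, kw_per_server, pue=1.2):
    """Total facility draw in megawatts, including assumed cooling overhead."""
    return num_servers * kw_per_server * pue / 1000


if __name__ == "__main__":
    for chip_watts in (700, 1000):
        per_server = server_power_kw(8, chip_watts, other_watts=2000)
        total = facility_power_mw(1000, per_server)
        print(f"{chip_watts} W chips: {per_server:.1f} kW per server, "
              f"{total:.2f} MW for 1,000 servers")
```

Even with these modest assumptions, a thousand such servers draw on the order of ten megawatts continuously, which is why a single site can matter to a regional utility.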

From Paying the Bill to Sourcing the Power: The Sustainability Question

The pledge to "cover the electricity costs" is an acknowledgment of financial liability, but the next logical step, and the one scrutinized most by regulators and investors, is *how* that energy is sourced. Paying the bill for grid power generated by fossil fuels is vastly different, environmentally, than paying for dedicated, carbon-free electricity.

This leads us to the critical intersection of corporate pledges and environmental responsibility: the renewable energy commitments hyperscalers have made for their data center power usage. For years, tech giants have touted aggressive goals for 100% renewable energy matching. However, matching annual energy consumption with purchased Renewable Energy Credits (RECs) doesn't mean the AI data center is running on clean power 24/7.

The Shift to 24/7 Carbon-Free Energy (CFE)

The future expectation, driven by shareholder pressure and regulatory foresight, is a move toward 24/7 CFE. This requires building or contracting for new renewable energy capacity (solar, wind, geothermal) that directly serves the data center around the clock, rather than simply buying offsets from energy generated elsewhere at a different time. For the companies signing this pledge, covering the costs also means accelerating massive investments in long-term Power Purchase Agreements (PPAs) or even direct investment in localized clean energy generation.
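The gap between annual matching and 24/7 CFE can be illustrated with a small sketch. The load and generation profiles below are invented for the example (a flat data center load and a solar-only supply that produces only during eight daytime hours), and the two scoring functions are simplified stand-ins rather than any company's official accounting method.

```python
# Compare volume-based matching (the REC-style view) with hour-by-hour
# matching (the 24/7 CFE view). Profiles are made up for illustration.

def volume_match(load_mwh, clean_mwh):
    """Share of total load covered by total clean energy, capped at 100%."""
    return min(1.0, sum(clean_mwh) / sum(load_mwh))


def hourly_cfe_score(load_mwh, clean_mwh):
    """Share of load covered by clean energy produced in the same hour."""
    if len(load_mwh) != len(clean_mwh):
        raise ValueError("profiles must cover the same hours")
    matched = sum(min(load, clean) for load, clean in zip(load_mwh, clean_mwh))
    return matched / sum(load_mwh)


if __name__ == "__main__":
    load = [100] * 24                            # flat 100 MWh every hour
    solar = [0] * 8 + [300] * 8 + [0] * 8        # 300 MWh in daytime hours only
    print(f"Volume match:     {volume_match(load, solar):.0%}")
    print(f"Hourly CFE score: {hourly_cfe_score(load, solar):.0%}")
```

In this toy case the purchased clean energy equals the total load, so the volume-based figure is 100%, yet only a third of the load is actually met by clean power in the hour it is consumed. Closing that gap is what requires new capacity, storage, or firm sources such as geothermal.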

If a major tech firm is training a massive new model, it can no longer afford the reputational risk of running that model solely on peak-time, fossil-fuel-generated power. The pledge therefore implicitly pressures these firms to speed up their transition timelines, making sustainability a prerequisite for scaling AI infrastructure, not just a marketing add-on.

Implications for the Future of AI Development

This confluence of high cost, high power draw, and governmental attention sets the stage for dramatic shifts in how AI is developed and deployed.

1. The Rise of Energy-Aware Model Design

For software engineers and AI researchers, efficiency will become a primary metric, equal in importance to accuracy. We will see a greater focus on:

- Sparsity and Quantization: Techniques that allow models to run effectively using fewer calculations or lower precision numbers, drastically reducing the computational workload (and thus the power demand) during inference.

- Algorithmic Innovation: Research prioritizing models that achieve parity with larger, older models using dramatically less training data and compute time. The era of brute-force scaling might slow down as efficiency gains become more economically attractive.

- Optimized Hardware Co-Design: Tighter integration between the software (the model architecture) and the hardware (the custom silicon) to ensure no wasted cycles or energy consumption.
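As one illustration of the quantization idea in the list above, here is a minimal sketch of symmetric, per-tensor int8 quantization written in plain Python. It is a deliberately simple variant chosen for clarity; production systems typically use more elaborate schemes, and the function names are hypothetical rather than taken from any library.

```python
# Minimal symmetric int8 quantization sketch (per-tensor scale, plain Python).
# Function names are hypothetical; real frameworks use more refined schemes.

def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.82, -1.0, 0.031, 0.0, -0.47]
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print("int8 values:", q)
    print(f"worst-case rounding error: {worst:.5f}")
```

Each weight is stored in one byte instead of the four used by a 32-bit float, and the rounding error stays within half of one scale step. Smaller values to move and multiply is where the inference-time energy saving comes from, at the cost of a small loss in precision.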

2. Infrastructure Centralization and Geographic Strategy

The high power demands mean that data centers can no longer be placed haphazardly. Future locations will be determined less by proximity to tech hubs and more by access to abundant, reliable, and ideally, *new* clean energy sources. We might see a slowdown in expansion in power-constrained regions and an acceleration in areas with untapped geothermal, hydro, or vast solar/wind resources.

Furthermore, the cost burden might lead to a bifurcation: the largest, most resource-intensive foundational models will likely remain centralized in hyperscaler facilities that can negotiate massive energy deals. Smaller, more specialized, or application-specific models might be pushed toward edge computing or smaller, more localized, and inherently more power-efficient deployments.

3. The Regulatory Horizon is Darkening

While the current pledge is voluntary, governmental interest signals that mandatory regulation is likely forthcoming. Policymakers are increasingly concerned about AI’s impact on two fronts: grid resilience and climate goals. If the industry doesn't police its power usage effectively, governments are likely to step in with mandates.

These potential regulations could take several forms:

- Mandatory Disclosure: Requiring companies to publicly report the energy consumption (kWh) and carbon intensity of their training runs.

- Zoning and Permitting: Local utility commissions gaining more authority to approve or deny new data center construction based on demonstrated grid impact and clean energy sourcing plans.

- Energy Taxes or Tariffs: Penalties levied on facilities that draw heavily from carbon-intensive sources during peak demand times.
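To show what a disclosure of energy use and carbon intensity might involve, here is a hedged sketch that combines hourly energy readings with hourly grid carbon intensity. The field names, the four-hour sample, and the intensity values are all hypothetical; no actual reporting standard is implied.

```python
# Sketch of a training-run disclosure: energy (kWh) and emissions (tonnes CO2)
# from hourly readings. All sample values and field names are hypothetical.

def run_emissions_tonnes(hourly_kwh, hourly_g_per_kwh):
    """Emissions in tonnes of CO2, weighting each hour by its grid intensity."""
    if len(hourly_kwh) != len(hourly_g_per_kwh):
        raise ValueError("readings must cover the same hours")
    grams = sum(kwh * g for kwh, g in zip(hourly_kwh, hourly_g_per_kwh))
    return grams / 1_000_000  # grams -> tonnes


def disclosure_summary(hourly_kwh, hourly_g_per_kwh):
    """Totals a regulator might ask for, under the assumptions stated above."""
    total_kwh = sum(hourly_kwh)
    tonnes = run_emissions_tonnes(hourly_kwh, hourly_g_per_kwh)
    return {
        "energy_kwh": total_kwh,
        "emissions_tonnes_co2": tonnes,
        "avg_intensity_g_per_kwh": tonnes * 1_000_000 / total_kwh,
    }


if __name__ == "__main__":
    kwh = [1000, 1000, 1000, 1000]
    intensity = [200, 300, 500, 600]  # cleaner overnight, dirtier at peak
    print(disclosure_summary(kwh, intensity))
```

Weighting by hour matters for the third proposal as well: the same total energy drawn mostly during carbon-intensive peak hours would report higher emissions than if it were drawn when the grid is cleaner.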

The tech giants’ voluntary pledge is a preemptive move—an attempt to shape the regulatory environment before it becomes overly restrictive. They are showing willingness to internalize the cost and manage the risk, hoping to avoid heavier external mandates.

Actionable Insights for Businesses and Policymakers

For every stakeholder, the message from this energy pledge is clear: AI scaling is physically bounded, and the economics of power are changing how we build the future.

For Businesses Relying on AI:

Demand Transparency in Service Level Agreements (SLAs): When contracting with cloud providers, look beyond raw GPU hours. Ask for metrics on the energy efficiency (for example, FLOPS per watt) of the specific instance you are using and on the carbon intensity of the region where your workloads run. Choosing a more energy-efficient, slightly slower model may become the more responsible and fiscally prudent long-term choice.
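The trade-off between speed and efficiency can be sketched with simple arithmetic: energy per request is average power multiplied by time per request. The two model profiles below are hypothetical, and the sketch assumes one request is served at a time on one device, ignoring batching and idle power.

```python
# Energy per request = average power (W) x time per request (s).
# Both model profiles are invented; batching and idle draw are ignored.

JOULES_PER_KWH = 3_600_000


def joules_per_request(avg_power_watts, seconds_per_request):
    """Energy used to serve one request, in joules."""
    return avg_power_watts * seconds_per_request


def kwh_per_million_requests(joules):
    """Scale a per-request figure up to a million requests, in kWh."""
    return joules * 1_000_000 / JOULES_PER_KWH


if __name__ == "__main__":
    profiles = {
        "large, fast model": (700, 0.5),    # (watts, seconds per request)
        "small, slower model": (300, 0.8),
    }
    for name, (watts, seconds) in profiles.items():
        joules = joules_per_request(watts, seconds)
        print(f"{name}: {joules:.0f} J per request, "
              f"{kwh_per_million_requests(joules):.1f} kWh per million requests")
```

In this made-up comparison the slower model takes 60% longer per request yet uses roughly a third less energy, which is the kind of figure worth asking a provider to substantiate.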

For Utility Companies and Infrastructure Planners:

Prepare for Concentrated, High-Density Loads: Traditional load forecasting models focused on population density are obsolete for AI clusters. Planners must now collaborate directly with chip manufacturers and cloud builders to understand power requirements months or years in advance for these hyper-concentrated loads. Investing in grid hardening and localized energy storage solutions will be critical for accommodating this new wave of demand.

For Policymakers:

Incentivize Efficiency, Don't Just Restrict Growth: Instead of broad moratoriums, create targeted incentives for innovation in energy-efficient AI hardware and CFE implementation. Policies that favor data centers locating near geothermal or nuclear power plants (which offer high-density, constant baseload power) could steer development away from straining intermittent renewable sources or stressed regional grids.

Conclusion: Accountability in the Age of Intelligence

The tech giants agreeing to shoulder the AI power bill is more than a headline; it’s a tacit admission that the energy footprint of advanced computing has reached a magnitude that can no longer be ignored or outsourced politically. It marks a pivot point where the pursuit of raw computational power must mature alongside a profound commitment to infrastructural responsibility.

The next phase of the AI race will be won not just by the company with the biggest model, but by the company that can run the most powerful model with the lowest energy cost and the clearest path to carbon neutrality. The voluntary pledge has set the baseline for accountability; the market, technology, and inevitable regulation will define the pace of sustainable progress.