The Accountability Algorithm: Why Apple Music's AI Tags Are Forcing the Music Industry's Hand

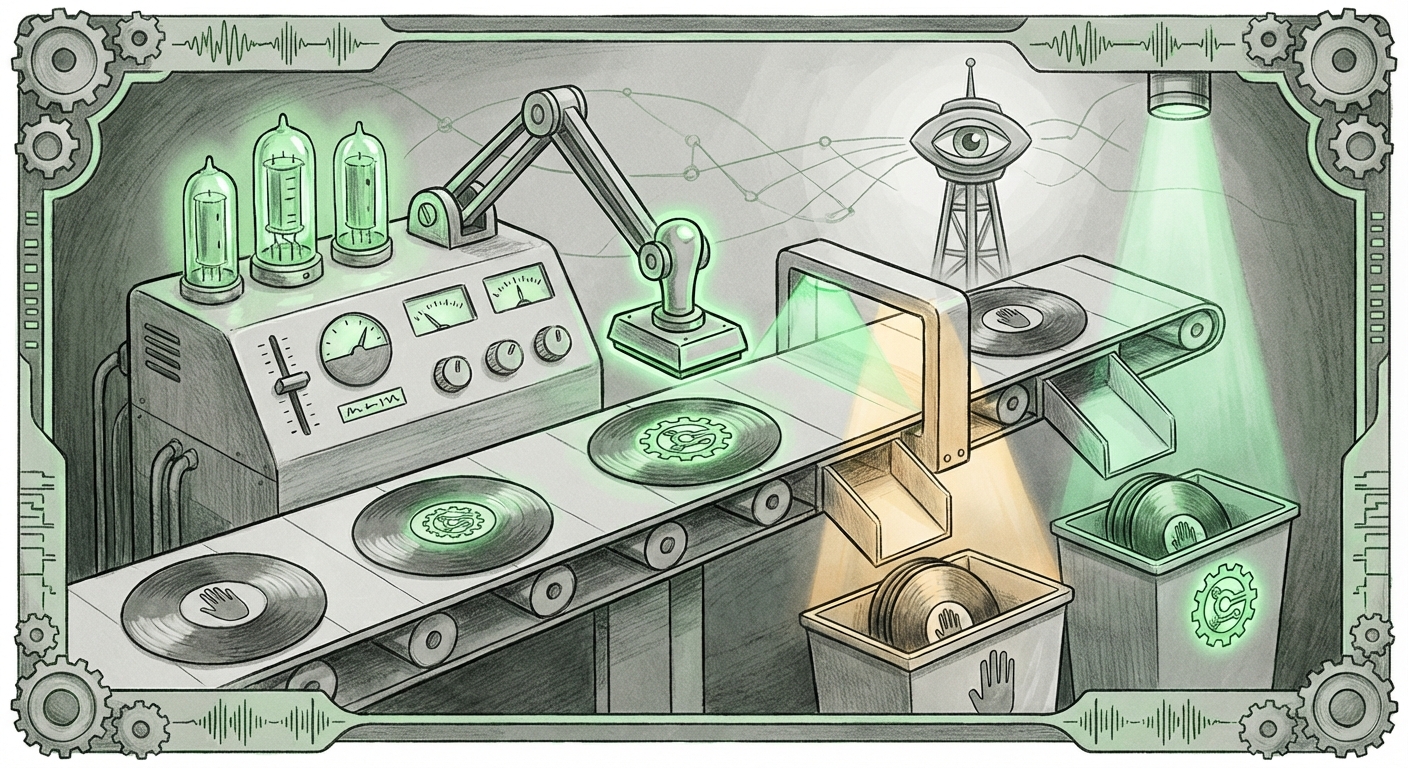

The collision between artificial intelligence and creative industries is no longer a theoretical debate; it is a logistical reality playing out on the world’s largest digital storefronts. Apple Music recently announced the rollout of Transparency Tags, a system requiring labels and distributors to flag AI-generated content across four specific categories: Artwork, Tracks, Compositions, and Music Videos. From an AI technology analyst's perspective, this move is more than a new UI feature; it is a clear signal that the era of unmonitored synthetic media distribution is ending. Crucially, Apple is not taking on the verification burden itself; instead, it is delegating accountability upstream to the content gatekeepers: the labels and distributors.

The Delegation Dilemma: Why Self-Reporting is the First Step

The core of Apple's strategy is delegated trust. Faced with the deluge of content generated by sophisticated tools like Sora, Suno, and Stable Diffusion, Apple cannot realistically employ legions of human reviewers or advanced forensic AI to check every single submission. Instead, it is leveraging the existing supply chain hierarchy: labels and distributors are already the entities responsible for vetting, clearing rights, and delivering content to the platform.

This places a significant, immediate burden on these intermediaries. They must now integrate AI detection and declaration workflows into their existing metadata pipelines. For business strategists, this means AI governance is moving from an R&D curiosity to a mandatory compliance checklist. If a distributor fails to correctly tag a fully AI-generated track, they risk contractual penalties with Apple—a powerful incentive to comply.

However, self-reporting is inherently fragile. As we investigate parallel industry movements, it becomes clear that this system might be a necessary, albeit temporary, placeholder until true technological authentication is widely adopted.

Contextualizing the Move: The Platform Wars of Transparency

Apple rarely sets the industry standard alone; it usually cements a standard already being tested elsewhere. To gauge the longevity of Apple’s tags, we must look at the competitive landscape, specifically the likely responses from platforms like Spotify.

If Spotify, the global streaming leader, adopts a similar, if not stricter, policy, we can confirm the emergence of a de facto industry standard. Conversely, if Spotify chooses a vastly different approach—perhaps focusing more heavily on banning music trained on copyrighted material rather than just labeling synthetic content—it creates fragmentation. This fragmentation complicates life for labels and distributors who must then tailor metadata delivery for multiple major partners, undermining the efficiency of the transparency initiative.

For the business audience, fragmented disclosure standards translate directly to increased operational complexity and higher compliance costs. The push for industry-wide consensus, perhaps driven by international bodies, becomes paramount.

The Need for Global Governance: IFPI and Standardization

Platform policies alone are not enough; true accountability requires industry-wide agreement on definitions and implementation. This is where international bodies like the International Federation of the Phonographic Industry (IFPI) come into play.

The IFPI's involvement signals that AI music disclosure is moving from a Silicon Valley issue to a global legal and commercial necessity. IFPI guidelines attempt to harmonize how "AI-generated," "AI-assisted," and "human-created" are defined across different territories and legal frameworks. This is vital for two reasons:

- Legal Certainty: Clear guidelines help labels navigate international copyright laws, especially concerning moral rights and derivative works.

- Interoperability: If the IFPI standardizes the required metadata fields, platforms like Apple, Spotify, and Amazon Music can all accept the same disclosure packet, simplifying distribution workflows immensely.
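To make the interoperability point concrete, here is a minimal sketch of what a shared disclosure packet could look like. The class name `DisclosurePacket`, the field names, and the three tag values are assumptions for illustration only; they are not an IFPI schema or Apple's actual delivery format. The four fields mirror the four categories Apple's tags cover.

```python
import json
from dataclasses import dataclass, asdict
from enum import Enum


class Origin(Enum):
    # Hypothetical vocabulary; real definitions would come from an industry standard.
    HUMAN_CREATED = "human-created"
    AI_ASSISTED = "ai-assisted"
    AI_GENERATED = "ai-generated"


@dataclass(frozen=True)
class DisclosurePacket:
    """One declaration per release, covering the four tagged categories."""
    release_id: str
    artwork: Origin
    track: Origin
    composition: Origin
    music_video: Origin

    def to_json(self) -> str:
        # Serialize enum members to their string values so that any platform
        # could parse the same payload without knowing this Python class.
        raw = asdict(self)
        plain = {k: (v.value if isinstance(v, Origin) else v) for k, v in raw.items()}
        return json.dumps(plain, sort_keys=True)


packet = DisclosurePacket(
    release_id="REL-001",  # made-up identifier
    artwork=Origin.AI_GENERATED,
    track=Origin.AI_ASSISTED,
    composition=Origin.HUMAN_CREATED,
    music_video=Origin.HUMAN_CREATED,
)
payload = packet.to_json()
```

The design choice worth noting is that the packet is declared once and serialized to a neutral format, so a distributor would not need a separate tagging step per platform if the platforms agreed on the fields.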

In the context of Apple’s tags, we are likely seeing the initial rollout of a policy that aligns, or will soon align, with emerging IFPI frameworks. This transition accelerates the maturation of the entire digital music ecosystem in the face of AI disruption.

The Technical Underbelly: Moving Beyond Trust to Proof

The most crucial future implication of this trend lies not in the declaration of the label, but in the technological verification available to the platform. Relying on self-reporting, even with contractual penalties, is a short-term measure. Advanced generative models are becoming adept at cloaking their origins.

This brings us to the technological frontier. For transparency to be robust, a track's origin needs to be indelibly recorded in the content itself, so that it can be proven regardless of who submits it.

The Promise of Watermarking and Provenance

Digital watermarking involves embedding hidden, machine-readable signals within the audio file itself. These signals can denote the creation method, the model used, or the date of generation. Standards bodies such as the Coalition for Content Provenance and Authenticity (C2PA) are actively developing provenance frameworks for media, built on cryptographically signed metadata rather than in-signal marks; for music, the two approaches would need to work together and hold up on complex, time-based audio signals.

The technical challenge is significant. Unlike an image, where a watermark might only need to survive minor compression, an audio watermark must withstand transcoding across various formats (MP3, AAC, FLAC), where lossy codecs discard part of the signal, as well as further signal processing, all without audibly degrading the listening experience. If platforms like Apple successfully integrate mandatory, robust watermarking standards in the near future, the "Transparency Tag" will evolve from a simple checkbox provided by the distributor to a verifiable, immutable proof enforced by the platform’s ingestion technology.
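As a rough illustration of the embed-then-detect idea, the sketch below implements a toy spread-spectrum watermark in pure Python: a key-seeded sequence of +1/-1 values is added to the samples at low amplitude, and detection correlates the audio against the same sequence. This is a textbook simplification under stated assumptions, not the scheme used by Apple, C2PA, or any production system; the function names, the `strength` value, and the added noise (a crude stand-in for lossy processing) are all hypothetical.

```python
import math
import random


def chip_sequence(key: int, n: int) -> list[float]:
    """Key-seeded pseudo-random sequence of +1.0 / -1.0 values."""
    rng = random.Random(key)
    return [rng.choice((-1.0, 1.0)) for _ in range(n)]


def embed(samples: list[float], key: int, strength: float = 0.05) -> list[float]:
    """Add the scaled chip sequence to the host signal."""
    chips = chip_sequence(key, len(samples))
    return [s + strength * c for s, c in zip(samples, chips)]


def detect(samples: list[float], key: int, strength: float = 0.05) -> bool:
    """Correlate with the chip sequence; a marked signal averages near `strength`."""
    chips = chip_sequence(key, len(samples))
    correlation = sum(s * c for s, c in zip(samples, chips)) / len(samples)
    return correlation > strength / 2


# One second of a 440 Hz tone at an 8 kHz sample rate as the host signal.
host = [0.5 * math.sin(2 * math.pi * 440 * t / 8000) for t in range(8000)]
marked = embed(host, key=1234)

# Crude stand-in for lossy processing: add low-level Gaussian noise.
noise = random.Random(99)
degraded = [s + noise.gauss(0.0, 0.01) for s in marked]

results = {
    "marked": detect(marked, key=1234),
    "degraded": detect(degraded, key=1234),
    "unmarked": detect(host, key=1234),
    "wrong_key": detect(marked, key=4321),
}
```

A real audio watermark would shape the embedded signal psychoacoustically so it stays inaudible and would be tested against actual codecs rather than added noise; the sketch only shows why a keyed correlation can survive mild degradation.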

This technological shift changes everything for AI development. It incentivizes developers to build provenance-aware models that inherently create traceable outputs, fostering a more trustworthy synthetic media environment.

The Human Element: Artist Rights in the Age of Disclosure

While technological and business logistics are critical, the ethical core of this development rests with the creators themselves. Transparency is moot if it does not translate into tangible protection for human artists.

Artists’ unions and guilds are rightfully concerned that transparency tags might inadvertently legitimize the commercial use of AI creations that heavily mimic existing human work, potentially flooding the market and depressing royalty rates for human-made music. Transparency tags help fans differentiate, but what do they do for compensation?

The key implication here is the creation of distinct royalty pools. If a track is flagged as 100% AI-generated, it theoretically should not trigger royalties earmarked for human writers, performers, or mechanical rights holders based on current agreements. However, tracks labeled "AI-Assisted" present a thorny legal landscape. How much assistance warrants a reduction in the human share? These negotiations, often spearheaded by groups like SAG-AFTRA, will be central to the next generation of streaming contracts.

For artists, the actionable insight is clear: engage actively with your unions and distributors. Ensure that your contractual agreements address how AI-assisted content (even content you generate yourself) is categorized and compensated relative to fully organic work.

Practical Implications for Businesses and the Future of AI Content

The Apple Music development marks a pivot point, moving AI adoption from the "Wild West" phase to a regulated, segmented market. Here are the concrete implications:

1. Segmentation of the Market

We are seeing the creation of two distinct tiers of music consumption:

- Tier 1: Verified Human Content: Premium value, higher trust, potentially commanding higher licensing fees for synchronization in film/TV due to IP clarity.

- Tier 2: Transparent Synthetic Content: Clearly identified, likely subject to different royalty splits, and perhaps prioritized for niche, background, or functional music playlists (e.g., ambient study tracks).

2. Enhanced Due Diligence for Distributors

Distributors must invest immediately in systems capable of analyzing metadata fields provided by their artists and flagging inconsistencies before submission. Compliance failure becomes a direct, measurable business risk associated with platform access.
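A pre-submission check of this kind could be sketched as follows. The submission layout (`ai_tags`, `credits`), the tag vocabulary, and the keyword list are assumptions made up for this example; a real distributor would validate against whatever schema the platform specifies. The tool names in the hint list are the ones mentioned earlier in this article.

```python
VALID_TAGS = {"human-created", "ai-assisted", "ai-generated"}  # hypothetical vocabulary
TAG_FIELDS = ("artwork", "track", "composition", "music_video")
AI_TOOL_HINTS = ("suno", "stable diffusion", "sora")  # naive keyword hints


def find_inconsistencies(submission: dict) -> list[str]:
    """Return human-readable problems found in an artist's metadata."""
    problems = []
    tags = submission.get("ai_tags", {})

    # Every category needs a tag drawn from the agreed vocabulary.
    for field in TAG_FIELDS:
        value = tags.get(field)
        if value is None:
            problems.append(f"{field}: missing AI tag")
        elif value not in VALID_TAGS:
            problems.append(f"{field}: unknown tag {value!r}")

    # Cross-check free-text credits against the declared tags.
    credits = " ".join(submission.get("credits", [])).lower()
    mentioned = [tool for tool in AI_TOOL_HINTS if tool in credits]
    if mentioned and all(tags.get(f) == "human-created" for f in TAG_FIELDS):
        problems.append(
            "credits mention " + ", ".join(mentioned)
            + " but every category is tagged human-created"
        )
    return problems


suspicious = {
    "ai_tags": {
        "artwork": "human-created",
        "track": "human-created",
        "composition": "human-created",
        "music_video": "human-created",
    },
    "credits": ["Vocals generated with Suno", "Mixed by J. Doe"],
}
incomplete = {"ai_tags": {"artwork": "ai-generated", "track": "synthetic"}, "credits": []}

suspicious_report = find_inconsistencies(suspicious)
incomplete_report = find_inconsistencies(incomplete)
```

Keyword matching like this produces false positives and misses undeclared use entirely, so it would serve only as a first filter that routes a submission to human review.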

3. The Evolving Role of the Platform

While Apple delegated initial responsibility, their long-term technological investment will determine the standard. We expect the next evolution to be automated verification. Platforms won't just rely on labels; they will use advanced AI (perhaps proprietary models trained specifically to detect other models) to audit the self-declared tags. This creates a powerful feedback loop where technological proof backs up administrative declaration.
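The audit loop described here can be reduced to a simple comparison between the declared tag and a detector's output. In the sketch below, `ai_score` stands for the output of a hypothetical detection model (0.0 meaning almost certainly human, 1.0 almost certainly synthetic); no such model is implemented, and the thresholds and tag names are illustrative assumptions rather than any platform's policy.

```python
def audit_tag(declared: str, ai_score: float, high: float = 0.9, low: float = 0.1) -> str:
    """Compare a self-declared tag with a detector score in the range 0.0 to 1.0."""
    if not 0.0 <= ai_score <= 1.0:
        raise ValueError("ai_score must be between 0.0 and 1.0")

    # Only strong disagreement is escalated; mid-range scores are treated as
    # inconclusive so that an uncertain detector does not override a declaration.
    if declared == "human-created" and ai_score >= high:
        return "review: declared human-created, detector suggests AI"
    if declared == "ai-generated" and ai_score <= low:
        return "review: declared ai-generated, detector suggests human"
    return "consistent"


outcomes = [
    audit_tag("human-created", 0.97),
    audit_tag("human-created", 0.40),
    audit_tag("ai-generated", 0.95),
    audit_tag("ai-assisted", 0.99),
]
```

Note that an "AI-Assisted" declaration is never contradicted in this sketch, which reflects how hard that middle category is to verify automatically.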

Actionable Insights for Navigating This Shift

For entities operating within the digital content creation space, proactive adaptation is essential:

- For Record Labels & Publishers: Immediately audit your content pipeline. Develop clear internal standards for what qualifies a track as "AI-Generated" versus "AI-Assisted," aligning these internal definitions with any emerging IFPI recommendations.

- For Independent Artists & Producers: If you use AI tools, begin tagging your own work *before* platforms mandate it. This builds a history of compliance and trust with your chosen distributor. Furthermore, start researching digital watermarking solutions for future projects.

- For Technology Providers: The greatest opportunity lies in building robust, interoperable digital provenance tools. Solutions that can reliably watermark audio and integrate seamlessly with metadata standards (like C2PA adaptations) will become essential infrastructure for the music tech stack.

- For Fans and Consumers: Look for the tags. Your engagement preferences will shape the market. Supporting clearly labeled human artistry will reinforce its economic viability against synthetic alternatives.

Conclusion: Building Trust in the Algorithmic Soundscape

Apple Music's implementation of Transparency Tags is a crucial, albeit imperfect, first move toward structuring the future of digital creativity. By mandating disclosure upstream, they are forcing transparency into the supply chain. This is not merely about tracking what is synthetic; it is about establishing the foundational trust required for massive-scale content distribution in the AI era.

The future of AI in music will be defined by three interconnected pillars: Accountability (through mandatory labeling), Verification (through evolving watermarking technology), and Standardization (through global standards like those sought by the IFPI). As platforms evolve from passive hosts to active validators of content origin, we are witnessing the creation of a new technological contract between creators, distributors, and consumers, a contract built on the principle that if AI creates, it must be clearly identifiable.