The Great AI Pivot: Why ChatGPT's Commerce Stumble Defines the Next Era of Online Shopping

The world of Artificial Intelligence moves at breakneck speed, and sometimes, that speed leads to sharp, instructive detours. The recent shift in OpenAI’s strategy regarding direct commerce within ChatGPT serves as one of the clearest lessons yet in the current limitations—and future potential—of Large Language Models (LLMs) in the consumer marketplace.

Reports indicate that OpenAI’s initial vision to turn ChatGPT into a one-stop shopping destination—where users could research and purchase items directly—has hit a significant roadblock. With only a handful of retailers participating and users hesitant to complete financial transactions within the interface, OpenAI is strategically handing over the final checkout steps to established partners like Instacart and Target. This isn't a failure of AI capability; it is a crucial realignment concerning consumer trust and transactional friction.

The Research-to-Purchase Gap: Where LLMs Excel and Stumble

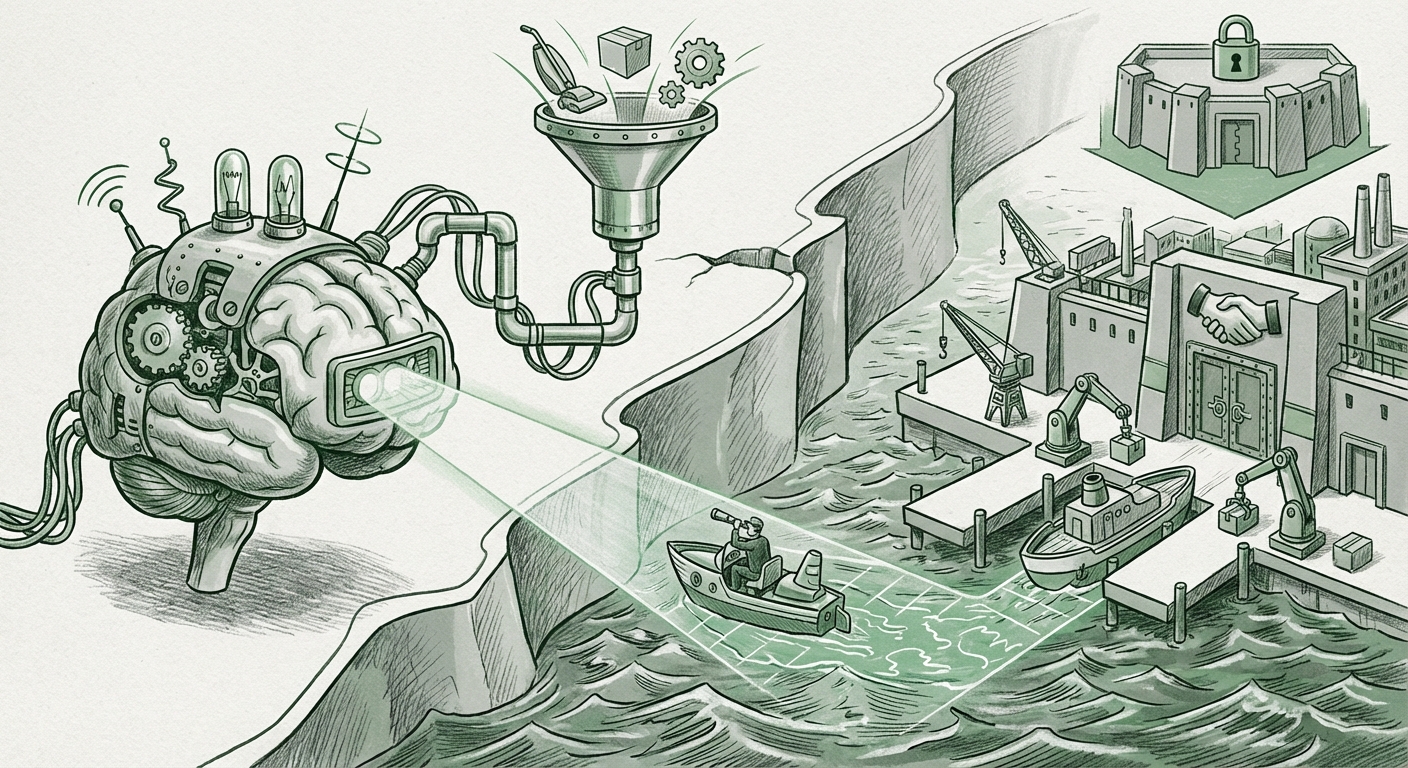

Generative AI shines brightest when it reduces cognitive overhead. ChatGPT excels at synthesizing vast amounts of product data: comparing features, summarizing reviews, and suggesting ideal options based on complex user prompts ("I need a durable, eco-friendly vacuum cleaner under $300 for a house with two shedding dogs"). This is the information aggregation phase, and it is where LLMs are at their strongest.

However, when the conversation shifts from "What should I buy?" to "Enter your 16-digit card number," the environment changes entirely. For many consumers, the immediate, frictionless checkout experience offered by known entities like Amazon or Target far outweighs the novelty of buying inside a chat window.

Corroborating the Pivot: The Trust Deficit

This behavior is not unique to OpenAI. Analysts of broader technology adoption suggest it points directly to a "Trust Gap" concerning high-value or sensitive purchases. Consumers are comfortable asking an AI for advice, but completing a financial transaction requires an established chain of accountability.

- Security Perception: Entering payment information into an application whose primary function is conversational AI feels inherently riskier than using a specialized, established payment gateway.

- Logistical Certainty: If a user buys a Target item via ChatGPT, who handles the return? Who manages shipping inquiries? Consumers naturally default to the platform with clear, easy-to-access policies: the retailer itself. This is also why AI has a stronger value proposition in post-purchase support than in mediating the initial sale.

The move to partner integrations validates the idea that LLMs are currently strongest as hyper-efficient research brokers, not transactional clerks. For now, AI's success in the commerce lifecycle is weighted heavily toward the top of the funnel.

The Future Framework: Agents vs. Integrated Features

OpenAI’s decision forces us to consider the future architecture of AI commerce. Is the winning model the LLM embedded deeply into every app (a feature model), or is it a sophisticated, standalone AI shopper (an agent model)?

The current market suggests users are waiting for the latter: true, autonomous AI shopping agents that can manage complex negotiations, compare cross-platform pricing in real time, and handle the entire lifecycle of a purchase natively, checkout included. By contrast, OpenAI’s implementation felt like a feature bolted onto a chatbot, not a fully realized purchasing agent.

This creates an exciting competitive landscape:

- Platform Players (Amazon, Google): These companies are building deep transactional rails and are integrating LLMs directly into those proven systems. Their advantage is inherent trust and existing user habits.

- LLM Builders (OpenAI): Their path forward likely involves maximizing their core competency—language processing and intelligence—and integrating via API hooks into the trusted platforms. They profit from the intelligence fueling the recommendation, not the final swipe of the credit card.

This strategic pivot away from direct monetization in difficult verticals suggests OpenAI is keenly focused on its B2B strengths, namely API licensing and enterprise integration, where the client carries the logistical overhead of consumer trust (as in Microsoft’s Copilot ecosystem).

Implications for Businesses: AI as the Co-Pilot, Not the Captain

What does this mean for e-commerce executives, marketers, and product managers watching this space?

1. Embrace the AI Research Funnel

Businesses must optimize their product information for consumption by LLMs. High-quality, easily parsable structured data, clear FAQs, and transparent feature matrices are now paramount because the LLM's answer is where the initial customer interaction will occur. If your product description is ambiguous, the LLM may well recommend a competitor whose documentation is clearer.
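
As one illustration of what "easily parsable structured data" can look like, the sketch below emits a product description as schema.org JSON-LD, a widely used vocabulary for machine-readable product pages. The function name `product_jsonld`, the sample product, and the choice of fields are assumptions made for this example; nothing here reflects how any particular LLM actually ingests product data.

```python
import json


def product_jsonld(name, description, price, currency, features):
    """Return a schema.org Product description as a JSON-LD string.

    `features` is a plain dict of feature name -> value; each entry becomes
    a PropertyValue so that a parser can read the feature matrix directly.
    """
    doc = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "additionalProperty": [
            {"@type": "PropertyValue", "name": key, "value": value}
            for key, value in features.items()
        ],
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",  # price as a fixed two-decimal string
            "priceCurrency": currency,
            "availability": "https://schema.org/InStock",
        },
    }
    return json.dumps(doc, indent=2)


if __name__ == "__main__":
    # Hypothetical product, echoing the vacuum-cleaner prompt earlier in the article.
    print(
        product_jsonld(
            name="Example Pet Vacuum",
            description="Bagless upright vacuum designed for homes with shedding pets.",
            price=279.0,
            currency="USD",
            features={"Filter type": "HEPA", "Pet hair attachment": "Included"},
        )
    )
```

The point of the explicit feature list is that an unambiguous key/value matrix is easier for any automated reader to compare across products than the same facts buried in marketing copy.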

2. Fortify the Trust Gateway

For retailers, the takeaway is clear: Your checkout is your moat. The friction point where the user leaves the generic AI environment and enters your branded, secure payment portal is now the most critical stage in the entire customer journey. Ensure that transition is seamless, reassuring, and lightning-fast.

3. Prioritize Post-Purchase Support Integration

The next frontier for generative AI in commerce won't be selling; it will be retention. Businesses should be aggressively testing LLM integration for customer service bots that can instantly access order history, process exchanges, and manage complex warranty claims. This leverages AI's strengths (data retrieval and contextual understanding) where user tolerance for error is higher than during the initial purchase.

Societal and Technological Shifts: The Decentralization of Transaction

On a broader scale, this development suggests a decentralized future for AI influence, rather than a centralized dominance.

If OpenAI were to succeed in hosting billions of transactions, that success would fundamentally change the regulatory landscape the company operates in, forcing it into the complex world of financial compliance, consumer protection laws, and global payment infrastructure. By stepping back, OpenAI preserves its focus on general intelligence advancement while allowing specialized industries (retailers) to manage their regulated domains.

This decentralization prevents a single AI entity from having undue control over the movement of physical goods and capital. It is a technological admission that while intelligence is centralized in foundation models, execution must remain distributed across proven, specialized platforms.

For the consumer, this maintains a familiar, comforting safety net. We get the benefit of AI-driven discovery without the anxiety of learning a brand-new, untested checkout system every time we ask a chatbot for gift ideas.

Actionable Insights for Navigating the AI Commerce Landscape

The message from the market is loud: AI is a powerful navigator, but established platforms still have to bring the ship into port.

- For Tech Developers: Focus on building robust, secure API bridges. The value is in the handoff protocol—ensuring data flows accurately and securely from the LLM recommendation engine to the partner checkout system (e.g., Instacart’s order API).

- For Retailers: Double down on your digital security posture and customer-facing guarantees. Your ability to reassure the customer during the final 30 seconds of checkout is now your key competitive advantage against the generalized AI interface.

- For Strategists: Stop viewing AI as a replacement for your entire sales stack. Reframe it as an unparalleled co-pilot for your marketing, inventory management, and customer service departments. The transactional layer remains the domain of specialized expertise.
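
To make the handoff idea from the developer bullet more concrete, here is a minimal sketch of one way a recommendation service could pass a cart to a partner checkout so that the partner can detect tampering. Everything in it is an assumption for illustration: the function names `sign_handoff` and `verify_handoff`, the cart fields, and the shared-secret arrangement are hypothetical, and it does not describe Instacart's or any other partner's real API. It uses an HMAC-SHA256 signature over a deterministic JSON serialization.

```python
import hashlib
import hmac
import json


def sign_handoff(cart, secret):
    """Serialize the cart deterministically and attach an HMAC-SHA256 signature.

    `secret` is a bytes key assumed to be shared between the recommending
    service and the partner checkout (hypothetical arrangement).
    """
    # sort_keys and fixed separators make the byte string reproducible.
    body = json.dumps(cart, sort_keys=True, separators=(",", ":"))
    signature = hmac.new(secret, body.encode("utf-8"), hashlib.sha256).hexdigest()
    return {"body": body, "signature": signature}


def verify_handoff(message, secret):
    """Return True only if the signature matches the received body."""
    expected = hmac.new(
        secret, message["body"].encode("utf-8"), hashlib.sha256
    ).hexdigest()
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, message["signature"])


if __name__ == "__main__":
    shared_secret = b"demo-secret-not-for-production"
    cart = {"items": [{"sku": "A1", "qty": 2}], "currency": "USD"}
    message = sign_handoff(cart, shared_secret)
    print(verify_handoff(message, shared_secret))
```

A signature like this only covers integrity of the handed-off cart. Payment details themselves never need to pass through the recommending side, which is consistent with the article's argument that checkout should stay with the retailer.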

The initial vision of a fully unified AI shopping experience, a truly single point of interaction from thought to delivery, remains a long-term ambition. But the stumble suggests the path to that ambition runs not through forcing users to abandon trust, but through intelligently linking AI's strengths to established utility.