From Links to Workspaces: How Google Canvas is Redefining Search & AI Productivity

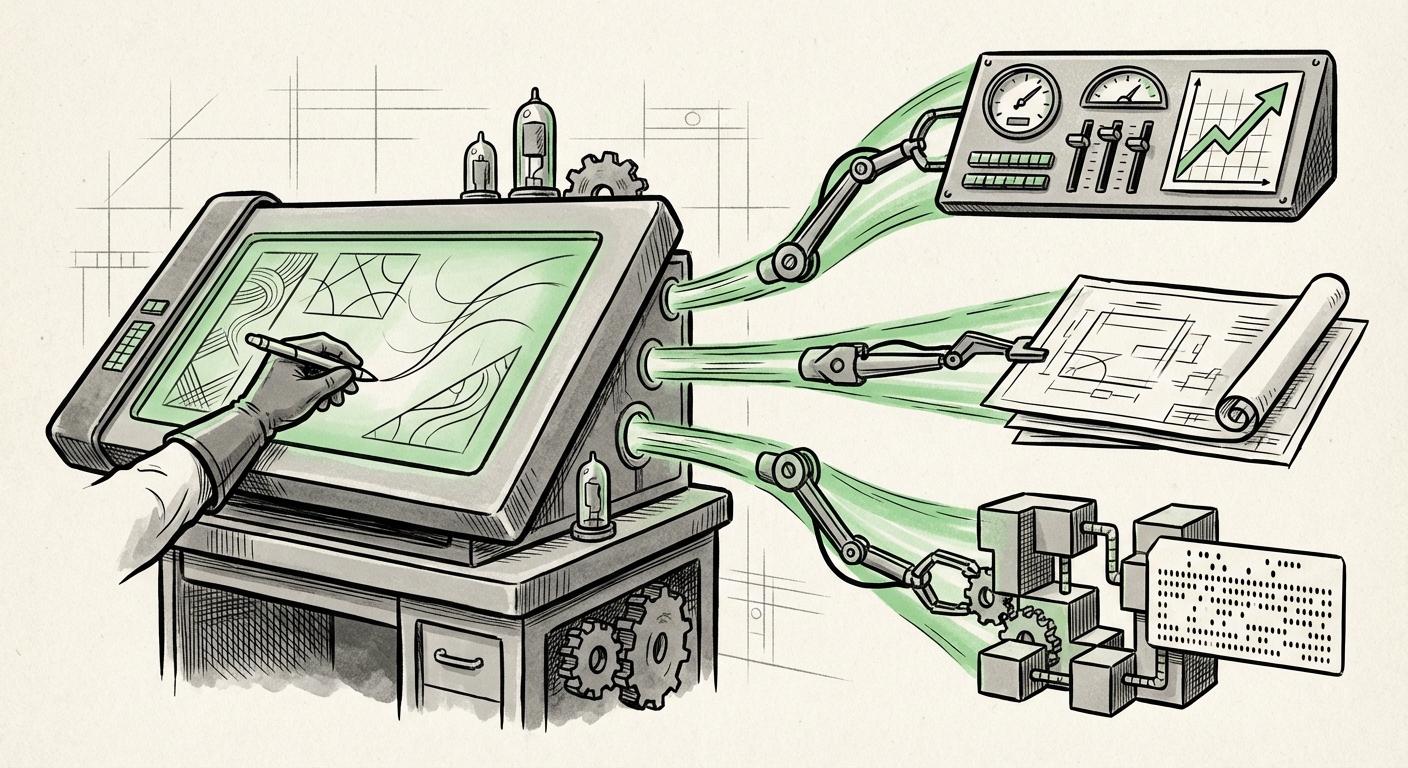

For decades, the search engine was a librarian: you asked a question, and it pointed you toward the right shelf (or in modern terms, the "ten blue links"). Today, that librarian is rapidly transforming into an active architect. The quiet launch of Google's "Canvas" feature for US users signals a monumental shift: search is no longer just about finding information; it is about generating actionable output directly within the search interface.

Canvas, which allows users to build interactive dashboards, draft documents, and even prototype code fragments inside the AI environment, raises the bar for what a search engine must accomplish. This is the evolution from passive information retrieval to an active, generative workspace. It forces us to re-examine the competitive landscape, the underlying technological capabilities, and the implications for how we work, learn, and build in the age of ambient AI.

The Great Convergence: Search Meets Productivity Suite

The most immediate implication of Canvas is the collision course it sets Google on with established workplace software. Why leave the search environment to open a new document editor or spreadsheet program if your AI assistant can generate a usable prototype right where the research concluded?

The AI Workspace Arms Race

This development places Google's generative capabilities in direct competition with the "Copilotization" of productivity suites, most notably Microsoft’s Copilot integration across Microsoft 365 (formerly Office 365). The battleground is shifting from who has the best large language model (LLM) to who can integrate that model most seamlessly into daily workflows.

For businesses, this means a critical decision point is emerging. Do they rely on AI embedded within their primary document creation tools (like Microsoft Word/Excel), or do they adopt an AI-first approach where the entry point—search—becomes the creation hub? Canvas suggests Google is betting on the latter, aiming to capture the initial moment of intent and turn it immediately into a tangible asset.

From Answering to Doing: The New Expectation of Search

Users increasingly expect search engines to move beyond traditional results. Many are growing impatient with receiving links that require several more clicks, downloads, and copy-paste operations to achieve the final goal. If a user searches for "Analyze Q3 sales trends and visualize the top three regions," they no longer want ten links to sales reports; they want a dashboard generated instantly.

Canvas satisfies this demand by making the search engine an Action Layer. This fundamental shift elevates user expectations across the board. If Google can build a functional dashboard from a prompt, users will soon expect this level of immediate, structured output from every AI interface they encounter.
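To make the Q3 sales prompt above concrete, here is a minimal Python sketch of the kind of aggregation a generated dashboard would need to perform behind the scenes. It is not how Canvas works internally. The sample records, the `top_regions_q3` helper, and the assumption that Q3 means calendar months July through September are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict

# Hypothetical sample records: (region, "YYYY-MM", sales amount).
# All values are invented for illustration.
SALES = [
    ("North", "2024-07", 120.0),
    ("North", "2024-08", 130.0),
    ("South", "2024-07", 90.0),
    ("South", "2024-09", 60.0),
    ("East", "2024-08", 200.0),
    ("West", "2024-09", 40.0),
    ("West", "2024-06", 500.0),  # June falls in Q2, so it is excluded
]

# Assumption: Q3 is the calendar quarter July-September.
# A fiscal calendar may define it differently.
Q3_MONTHS = {"07", "08", "09"}


def top_regions_q3(records, n=3):
    """Sum Q3 sales per region and return the n largest as (region, total) pairs."""
    totals = defaultdict(float)
    for region, month, amount in records:
        if month[-2:] in Q3_MONTHS:
            totals[region] += amount
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)[:n]


if __name__ == "__main__":
    for region, total in top_regions_q3(SALES):
        print(f"{region}: {total:.2f}")
```

A charting layer would then render these totals. What the user wants delivered is the ranked result, not a list of documents that might contain it.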

The Technical Underpinnings: Why Now?

The feasibility of Canvas hinges on advancements in the underlying AI models, specifically Google’s Gemini family. Creating a dashboard requires more than just coherent text; it requires structured output capable of rendering graphs, tables, and interactive elements.

Gemini’s Multimodality as the Engine

A feature like Canvas is a practical demonstration of *native multimodality*. It suggests that the model isn't just stringing together text responses; it is interpreting the intent to structure data visually and programmatically. For AI engineers and deep tech analysts, this indicates that Gemini can maintain context across different output forms (text for instructions, data structures for tables, and code logic for visualization). This ability to manage complexity within a single generative session is what turns a search box into a development environment.

Democratizing Creation via LLM Prototyping

The mention of generating "code prototypes" within search points directly to the rapid advancement in LLM-powered low-code/no-code development. For software developers and product managers, this is significant. It means the initial barrier to entry for creating proof-of-concepts—writing scaffolding code, setting up basic data models—is dissolving. Users can now iterate on product ideas or data analysis scripts simply by describing them, turning research into executable drafts almost instantly.

Put simply: imagine needing to create a simple inventory tracker for your small business. Previously, you needed to know what a spreadsheet formula was, or how to code a basic web page. Now, you ask the search engine, "Build me a simple inventory tracker dashboard where I can add item name, quantity, and alert me if quantity drops below 10." Canvas attempts to deliver a working, interactive template immediately.
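For readers curious what sits behind such a template, the inventory prompt above boils down to very little logic. The following is a minimal Python sketch under stated assumptions, not actual Canvas output: the `Inventory` class and its method names are hypothetical, and only the threshold of 10 comes from the example prompt.

```python
from dataclasses import dataclass, field

# From the example prompt: alert when quantity drops below 10.
LOW_STOCK_THRESHOLD = 10


@dataclass
class Inventory:
    """Hypothetical in-memory tracker mapping item name to quantity."""

    items: dict = field(default_factory=dict)

    def add_item(self, name: str, quantity: int) -> None:
        """Add a new item, or add stock to an existing one."""
        if quantity < 0:
            raise ValueError("quantity must be non-negative")
        self.items[name] = self.items.get(name, 0) + quantity

    def remove_stock(self, name: str, quantity: int) -> None:
        """Reduce stock for an existing item; reject overdrawing."""
        if name not in self.items:
            raise KeyError(name)
        if quantity < 0 or quantity > self.items[name]:
            raise ValueError("invalid quantity")
        self.items[name] -= quantity

    def low_stock(self) -> list:
        """Return item names whose quantity is below the threshold, sorted."""
        return sorted(
            name for name, qty in self.items.items() if qty < LOW_STOCK_THRESHOLD
        )


if __name__ == "__main__":
    inv = Inventory()
    inv.add_item("widgets", 25)
    inv.add_item("bolts", 8)
    print("Low stock:", inv.low_stock())
```

A generated dashboard would wrap logic like this in form fields and a highlighted alert list. The value of the tool is that the user never has to write or see this layer unless they want to.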

Practical Implications: Reshaping Work and Learning

The move to interactive, generative search has massive repercussions for efficiency, skill development, and business operations.

For the Modern Business: Productivity Multipliers

Businesses need to prepare for a workflow acceleration driven by ambient AI. The time saved in the early stages of any project—research, initial data structuring, drafting marketing copy, prototyping functionality—will be substantial.

- Faster Ideation Cycles: Teams can test more hypotheses in less time by rapidly generating interactive mock-ups (dashboards) based on real-time data interpretation from search.

- Data Accessibility: When data analysis tools are surfaced directly in the search bar, it lowers the technical hurdle for non-analysts to gain crucial insights, promoting data-driven decisions across all departments.

- Shifting Skill Requirements: The value shifts from the *mechanics* of software use (e.g., knowing every Excel function) to the *quality of the prompt* and the ability to critically evaluate the AI's output.

For Education and Skill Acquisition

Students and lifelong learners benefit tremendously. If a student is researching the causes of World War I, they can instruct Canvas to generate a comparative timeline visualization or a simple Q&A document based on the synthesized information. This turns passive reading into active learning through customization and interactivity.

Actionable Insights: Adapting to the Workspace Search

To capitalize on this evolution, both individuals and organizations must adopt forward-looking strategies:

- Mastering Prompt Engineering for Output: Focus training efforts not just on general knowledge queries, but on instructing the AI to produce structured, multi-format outputs (e.g., "Generate a summary in a bulleted list, a supporting data table, and a Python code snippet to process the data").

- Auditing Workflow Friction Points: Identify routine tasks that currently involve significant context-switching (e.g., searching for data, copying to Excel, formatting, then writing a summary). These are the immediate targets for Canvas-style integration and automation.

- Prioritizing Verification: As AI generates code and data prototypes instantly, the most critical human skill becomes verification. Businesses must establish clear protocols for validating AI-generated prototypes before deploying them in critical systems, especially those involving sensitive data or core business logic.
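The first and last points above can be combined into a simple habit: ask for structured output, then check it mechanically before anyone relies on it. The sketch below assumes the AI was asked to reply with a JSON object containing `summary`, `table`, and `code` keys. That response shape is a hypothetical convention for this example, not a documented Canvas or Gemini format. The checks are deliberately shallow: they confirm structure and that the Python snippet parses, without ever executing it.

```python
import ast
import json

# Hypothetical response shape requested in the prompt.
REQUIRED_KEYS = {"summary", "table", "code"}


def validate_response(raw: str) -> list:
    """Return a list of problems found; an empty list means the basic checks passed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]

    missing = REQUIRED_KEYS - set(data)
    if missing:
        return [f"missing keys: {sorted(missing)}"]

    problems = []

    summary = data["summary"]
    if not (isinstance(summary, list) and all(isinstance(s, str) for s in summary)):
        problems.append("summary is not a list of strings")

    table = data["table"]
    if not (
        isinstance(table, dict)
        and isinstance(table.get("columns"), list)
        and isinstance(table.get("rows"), list)
    ):
        problems.append("table needs 'columns' and 'rows' lists")
    else:
        width = len(table["columns"])
        bad = [
            i
            for i, row in enumerate(table["rows"])
            if not isinstance(row, list) or len(row) != width
        ]
        if bad:
            problems.append(f"rows with wrong width: {bad}")

    code = data["code"]
    if not isinstance(code, str):
        problems.append("code is not a string")
    else:
        try:
            ast.parse(code)  # parses only; the snippet is never run
        except (SyntaxError, ValueError) as exc:
            problems.append(f"code does not parse: {exc}")

    return problems
```

Passing these checks says nothing about whether the numbers are right or the code is safe to run. It only filters out malformed responses early, so that human review time goes to the substance.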

Conclusion: The Search Engine as Your Co-Creator

Google's Canvas is more than an incremental update to Search; it is a fundamental restructuring of how we interact with digital knowledge. It solidifies the trajectory where the primary interface for all digital tasks converges into a single, intelligent conversational layer. The competition heating up between major tech players is driving innovation that pushes the AI from being a helpful assistant into a genuine co-creator.

The era of the passive search engine is over. We are entering the age of the Actionable AI Hub—a space where research instantly begets creation. Understanding this shift now is essential for any professional aiming to remain ahead of the productivity curve in the rapidly evolving digital landscape.