The End of AGI? Why Yann LeCun's Superhuman Adaptable Intelligence (SAI) is the Next Frontier

The term Artificial General Intelligence (AGI) has long served as the holy grail of AI research. It paints a picture of a machine that can perform any intellectual task a human can. However, this goal—anchored deeply in replicating *human* intelligence—is starting to feel outdated, perhaps even restrictive, to some of the field’s most influential pioneers.

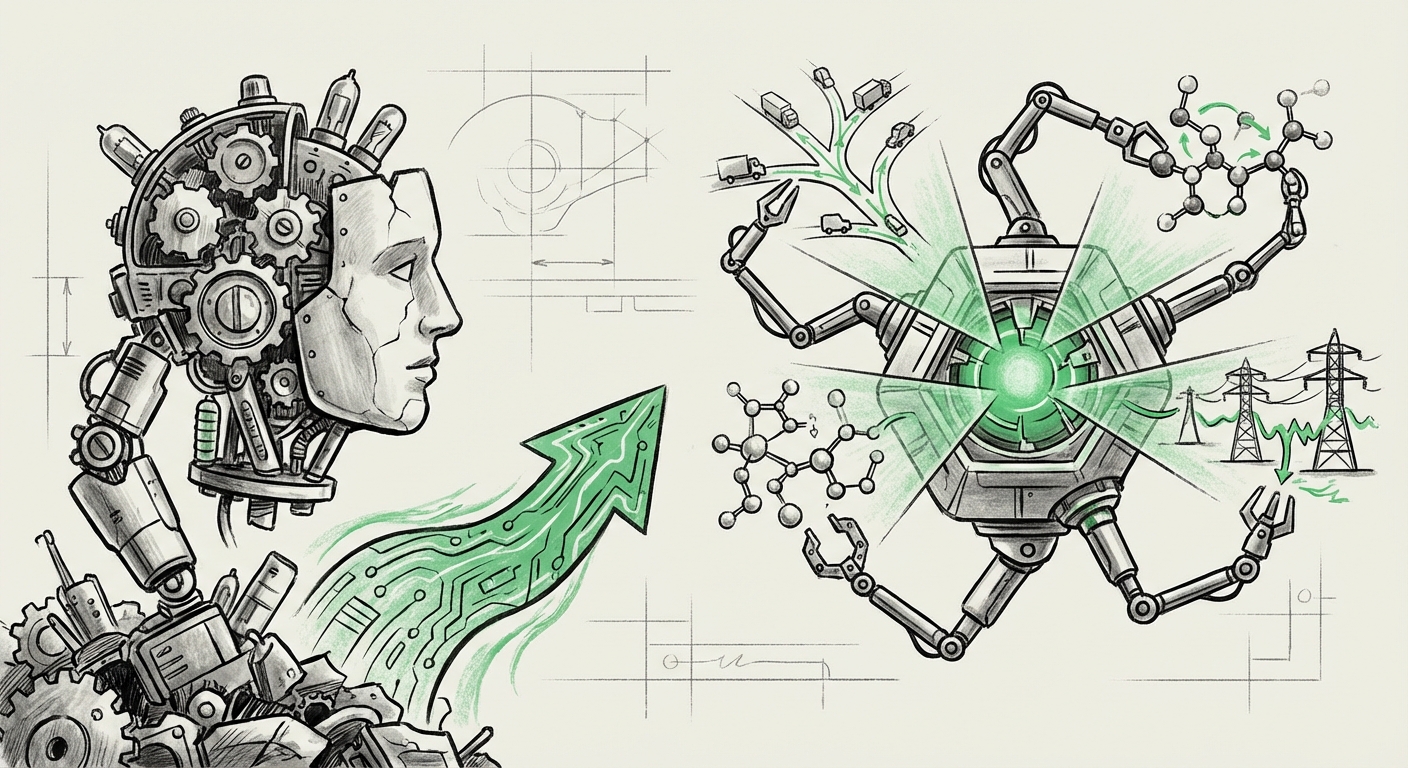

Yann LeCun, Chief AI Scientist at Meta and a Turing Award laureate, is leading a charge to retire AGI. In a recent proposal, he suggests we trade this familiar, yet fuzzy, concept for something more tangible and, frankly, more powerful: Superhuman Adaptable Intelligence (SAI). This isn't just a matter of semantics; it represents a fundamental philosophical shift in what we are building and what we should expect from advanced AI systems.

The Flaw in "General": Why Anthropomorphism Fails AI Goals

Why discard AGI? The core criticism lies in the word "General." Human intelligence isn't truly general; it's a highly specialized, evolutionarily optimized system developed for survival on Earth. We are excellent at certain tasks (social nuance, common sense) and terrible at others (perfect recall, complex physics calculation).

As critics of the AGI framing argue, chasing human-level generality pushes AI researchers toward systems that are constrained by human shortcomings. SAI flips this script. It suggests we should focus on creating intelligence that is:

- Superhuman: Faster, more accurate, and able to process far more data than any human could.

- Adaptable: Capable of learning new skills quickly in complex, changing environments without needing massive retraining.

This is intelligence designed to solve complex problems efficiently, not intelligence designed to pass the Turing Test by convincing a human judge that it is human.

The Engine of SAI: Building World Models for True Adaptability

The most crucial technical component underpinning SAI is the concept of "World Models." This is where the engineering focus shifts from raw next-token prediction (which large language models excel at) to modeling cause and effect: predicting how the state of the world changes over time in response to actions. Think of it this way:

- Current LLMs: Incredibly talented mimics. They predict the statistically most probable next token, and by extension the next sentence or action, based on vast amounts of training data.

- SAI Systems: Must possess an internal simulation of how the world works—a model that allows them to ask, "If I do X, what happens next?" without having seen that exact scenario before.
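The contrast above can be made concrete with a deliberately tiny sketch. It is not LeCun's architecture or any lab's method; it only illustrates the "If I do X, what happens next?" loop. The number-line environment, the action names, and the `WorldModel` and `plan` helpers are all hypothetical, invented for this example. The model learns what each action does from a handful of states, then plans in imagination from a state it never visited.

```python
from collections import Counter, defaultdict
from itertools import product

# Hypothetical toy environment: an agent on a number line.
# The true dynamics are hidden from the model; it only sees transitions.
TRUE_EFFECT = {"left": -1, "stay": 0, "right": 1, "jump": 3}


def env_step(state, action):
    """Ground-truth dynamics of the toy environment."""
    return state + TRUE_EFFECT[action]


class WorldModel:
    """Learns each action's effect as a displacement, not as a lookup of
    (state, action) pairs, so predictions carry over to unseen states."""

    def __init__(self):
        self.deltas = defaultdict(Counter)

    def observe(self, state, action, next_state):
        self.deltas[action][next_state - state] += 1

    def predict(self, state, action):
        # Use the most frequently observed displacement for this action.
        delta, _ = self.deltas[action].most_common(1)[0]
        return state + delta


def plan(model, start, goal, actions, horizon=4):
    """Try every action sequence in imagination and keep the one whose
    predicted end state lands closest to the goal."""
    best_cost, best_seq = None, None
    for seq in product(actions, repeat=horizon):
        state = start
        for action in seq:
            state = model.predict(state, action)
        cost = abs(goal - state)
        if best_cost is None or cost < best_cost:
            best_cost, best_seq = cost, seq
    return best_seq


# Experience is collected only around states 0..4.
model = WorldModel()
for s in range(5):
    for a in TRUE_EFFECT:
        model.observe(s, a, env_step(s, a))

# Planning starts from a state the model has never seen.
chosen = plan(model, start=100, goal=110, actions=list(TRUE_EFFECT))
print(chosen)
```

Exhaustive search is used here only because the action space is tiny; a realistic system would need a learned, approximate model and a far more efficient planner.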

Several leading labs are investing heavily in self-supervised learning techniques that push a model to capture underlying physical and logical regularities rather than surface statistics. This ability to robustly handle novel situations, in other words to adapt, is what truly separates a useful, superhuman tool from a sophisticated parlor trick.

For the Machine Learning Engineer, the pursuit of SAI means investing heavily in systems that learn robust representations of reality. This moves the field closer to embodied AI—robots or agents that must navigate the messy, unpredictable physical world, which demands adaptability far beyond chatbot interactions.

Industry Validation: The Market Moves Beyond AGI Hype

It is instructive to observe that the technological trajectory is already aligning with LeCun’s suggested terminology, even if the marketing departments are slow to catch up. Look at how technology leaders frame their roadmaps and a pattern emerges: the focus is on specialized, reliable performance, not vague generality.

Major players are not promising a single machine that can write poetry *and* perform surgery equally well. Instead, they are developing hyper-capable agents:

- AI that optimizes global supply chains faster than human teams ever could (Superhuman performance in logistics).

- AI that discovers novel drug candidates by simulating molecular interactions in ways previously impossible (Adaptability in scientific discovery).

For Venture Capitalists and strategists, this means the highest ROI will likely come from investing in systems that achieve Superhuman Utility in defined, high-value domains, rather than waiting for the elusive, all-knowing AGI. SAI provides a much clearer target for commercialization and demonstrable value creation.

The Superhuman Divide: Setting New Benchmarks for AI Capability

The inclusion of "Superhuman" is critical. It acknowledges that AI’s primary value proposition is not mimicry but *transcendence*. We are not trying to build a better human clerk; we are building an intelligence that breaks human cognitive barriers.

Evidence for this is abundant in complex tasks where AI performance already exceeds human benchmarks. Whether it's mastering intricate strategy games, designing novel protein structures, or managing energy grids with predictive accuracy far exceeding human intuition, AI is already operating beyond human capacity in narrow fields.

This realization has profound implications for Policy Makers and Ethicists. If an AI system is Superhuman in its ability to, say, detect financial fraud or optimize military targeting, the focus shifts from "Is it conscious?" to "Is it reliable, auditable, and aligned with our goals?" The risks associated with a system operating at speeds and scales beyond our immediate comprehension demand a regulatory structure built around capability and impact, not just intentionality.

Practical Implications: Engineering for Adaptability, Not Generality

What does this terminology shift mean for the immediate future of AI development and deployment?

For the Business Leader: Focus on Real-World Deployment

Businesses should stop waiting for AGI before deploying AI. The SAI paradigm encourages immediate investment in systems that show rapid adaptation in your specific operational environment. If your AI needs to manage rapidly changing inventory across diverse global warehouses, prioritize an architecture capable of incorporating new market data swiftly (Adaptable) over one that can theoretically write a novel (General).

For the Technologist: Mastering the Environment

Engineers must prioritize architectures that integrate memory, planning, and predictive modeling (World Models) deeply into the core learning loop. The focus moves away from simply scaling up (more parameters, more data, more compute) toward achieving architectural efficiency that allows for fast, zero-shot or few-shot adaptation to unforeseen circumstances.
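As a rough illustration of what few-shot adaptation through memory can look like, here is a minimal sketch of a prototype-based classifier: each category is stored as the mean of its example vectors, so a new category can be added from a few examples without retraining anything. The `PrototypeMemory` class, the labels, and the two-dimensional feature vectors are hypothetical, and a real system would classify learned embeddings rather than raw numbers.

```python
import math


class PrototypeMemory:
    """Stores one running mean (prototype) per label and classifies a
    vector by its nearest prototype in Euclidean distance."""

    def __init__(self):
        self.sums = {}
        self.counts = {}

    def add_examples(self, label, vectors):
        """Fold new examples into the label's prototype; no retraining step."""
        for vector in vectors:
            if label not in self.sums:
                self.sums[label] = [0.0] * len(vector)
                self.counts[label] = 0
            self.sums[label] = [s + x for s, x in zip(self.sums[label], vector)]
            self.counts[label] += 1

    def prototype(self, label):
        n = self.counts[label]
        return [s / n for s in self.sums[label]]

    def classify(self, vector):
        return min(
            self.sums,
            key=lambda label: math.dist(vector, self.prototype(label)),
        )


# Two situations known from the start (made-up feature vectors).
memory = PrototypeMemory()
memory.add_examples("routine", [[0.1, 0.2], [0.2, 0.1]])
memory.add_examples("surge", [[0.9, 0.8], [1.0, 0.9]])

# A new situation appears; three examples are enough to register it.
memory.add_examples("disruption", [[0.1, 0.9], [0.2, 1.0], [0.0, 0.8]])
print(memory.classify([0.15, 0.95]))
```

The design choice worth noting is that adaptation here is a memory write rather than a gradient update, which is one simple way to get fast adaptation without retraining.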

For Society: Redefining Human Roles

If intelligence becomes Superhuman and Adaptable, it means machines will solve problems that currently require complex, multidisciplinary human expertise. This accelerates the need for societal restructuring around education and work. Instead of worrying only about AI taking routine jobs, we must prepare for AI taking on complex, dynamic roles in science, engineering, and governance, which will require humans to master supervision, goal-setting, and the application of ethical constraints.

Conclusion: Embracing a More Powerful Vision

Yann LeCun’s call to adopt Superhuman Adaptable Intelligence is more than a semantic debate; it is a necessary recalibration of ambition. AGI, while inspirational, risks trapping researchers in the difficult, perhaps impossible, task of perfectly replicating the messy complexity of human cognition.

SAI offers a clear, powerful path forward: build intelligence that surpasses human capability in navigating complexity and adapting to novelty. It shifts the goal from imitation to innovation. The AI of the future won't just think like us; it will solve problems we couldn't even properly frame, leveraging its superhuman speed and its foundational understanding of the world’s rules. This is the intelligence that will truly redefine industry, science, and society in the coming decades.