The AI Employee Revolution: How Function Calling Turns LLMs into Actionable Agents

For years, Large Language Models (LLMs) like GPT-4 and Claude have impressed us with their ability to write, summarize, and reason. They were powerful digital assistants, but largely confined to the text box. They could *tell* you how to book a flight or analyze a database query, but they couldn't actually *do* it. This gap between knowing and doing has long been one of the main obstacles to putting AI to work as a genuine participant in the workplace.

A recent development highlighted by Clarifai’s **OpenClaw** framework signifies a monumental leap across this chasm. OpenClaw is not just another wrapper; it is a structural mechanism that turns these powerful LLMs into active participants in digital workflows by exposing them to external systems via standardized API endpoints. This is the genesis of the **Agentic AI** era.

From Digital Scribe to Digital Doer: The Rise of Agentic AI

What exactly does it mean to turn an LLM into an "AI employee"? Imagine your standard customer service bot suddenly having the ability to access inventory databases, process refunds through a payment gateway, and update a CRM—all without human intervention between the request and the action. This capability is powered by the concept of **function calling** (or "tool use").

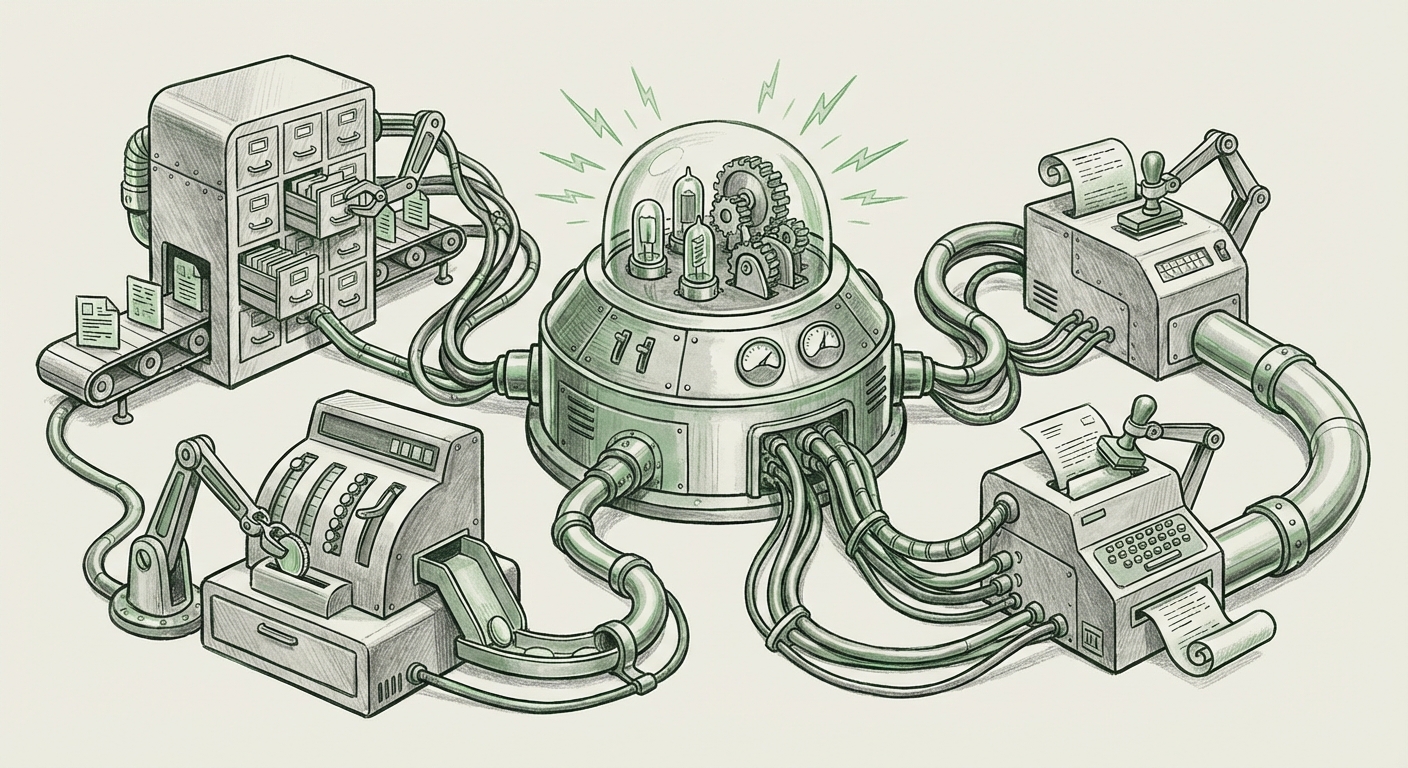

Frameworks like OpenClaw act as the critical middle layer (the management structure) that connects the LLM's decision-making brain to the company's digital body (the APIs). The LLM receives a complex task, reasons about which external tools it needs (e.g., a database lookup tool, an email sender tool), and formats the request in the exact structure the external system expects (often JSON). The framework then executes the call and hands the result back to the model. We refer to this process as an autonomous workflow.
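The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not OpenClaw's actual API: `fake_model`, `lookup_order`, `send_email`, and the message layout are all hypothetical stand-ins, and the model is replaced by a stub so the example runs on its own. The point it shows is the division of labor: the model only *requests* a call as JSON, and the surrounding runtime performs it.

```python
import json
from typing import Callable

# Hypothetical tools; in a real system these would wrap API calls.
def lookup_order(order_id: str) -> dict:
    return {"order_id": order_id, "status": "shipped"}

def send_email(to: str, body: str) -> dict:
    return {"sent": True, "to": to}

TOOLS: dict[str, Callable[..., dict]] = {
    "lookup_order": lookup_order,
    "send_email": send_email,
}

def fake_model(messages: list[dict]) -> dict:
    """Stub standing in for an LLM: asks for a tool first, then answers."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        arguments = json.dumps({"order_id": "A-17"})
        return {"tool_call": {"name": "lookup_order", "arguments": arguments}}
    status = json.loads(tool_results[-1]["content"])["status"]
    return {"content": f"Order A-17 is {status}."}

def run_agent(task: str, max_steps: int = 5) -> str:
    messages = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        reply = fake_model(messages)
        call = reply.get("tool_call")
        if call is None:
            return reply["content"]  # final answer, no further tools needed
        # The runtime, not the model, looks up and executes the function.
        func = TOOLS[call["name"]]
        result = func(**json.loads(call["arguments"]))
        messages.append(
            {"role": "tool", "name": call["name"], "content": json.dumps(result)}
        )
    raise RuntimeError("agent did not finish within max_steps")

answer = run_agent("Where is order A-17?")
```

The `max_steps` cap is a deliberate design choice: without it, a model that keeps requesting tools would loop forever.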

The Technical Cornerstone: Function Calling Standardization

The power behind this revolution is not magic; it is standardized engineering. The ability for an LLM to reliably output structured data to call an external function is the single most crucial technical enabler here.

To understand why this is so disruptive, we must examine the foundation that enables frameworks like OpenClaw. This groundwork has been laid by the major model providers themselves.

(Note: OpenAI's function calling guide is one real-world example. It shows developers how to describe their tools to the model using JSON Schema.)

When a developer defines a function, say `get_employee_roster(department_id)`, and provides that definition to the LLM, the model can do more than talk about it: it can request a call to it with well-formed arguments. No retraining is involved; the definition is supplied alongside the prompt. This mirrors how human employees are given specific software tools and shown how to use them. The LLM becomes proficient in the syntax of the external world. Frameworks like OpenClaw then take this capability and build robust deployment pipelines around it, allowing companies to expose internal microservices (such as proprietary MCP servers) securely as API endpoints for the LLM to consume.
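As a rough illustration, a definition for `get_employee_roster` might look like the following. The outer layout follows the `tools` format used by OpenAI's Chat Completions API as commonly documented; other providers use slightly different field names. The description text, the choice of a string `department_id`, and the `validate_arguments` helper are assumptions made for this sketch.

```python
import json

# Tool description handed to the model alongside the prompt.
get_employee_roster_tool = {
    "type": "function",
    "function": {
        "name": "get_employee_roster",
        "description": "Return the employees who belong to a department.",
        "parameters": {  # a JSON Schema object describing the arguments
            "type": "object",
            "properties": {
                "department_id": {
                    "type": "string",
                    "description": "Identifier of the department to look up.",
                },
            },
            "required": ["department_id"],
            "additionalProperties": False,
        },
    },
}

def validate_arguments(tool: dict, raw_arguments: str) -> dict:
    """Minimal check of model-produced arguments: required keys, no extras."""
    params = tool["function"]["parameters"]
    args = json.loads(raw_arguments)
    missing = [k for k in params["required"] if k not in args]
    extra = [k for k in args if k not in params["properties"]]
    if missing or extra:
        raise ValueError(f"bad arguments: missing={missing}, extra={extra}")
    return args

args = validate_arguments(get_employee_roster_tool, '{"department_id": "eng"}')
```

Checking the arguments before executing anything matters because the model's output is only text that is expected to match the schema; a production system would likely use a full JSON Schema validator rather than this hand-rolled check.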

Contextualizing the Trend: The Ecosystem of Agent Orchestration

OpenClaw is an important instantiation of a much larger trend: the creation of sophisticated LLM agent frameworks. These tools are racing to solve the architectural problems inherent in chaining together multiple model calls and external actions reliably.

Analysis of "The Rise of LLM Agent Orchestration" shows that developers are actively seeking solutions for multi-step reasoning, error correction, and state management across complex tasks.
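To make those three concerns concrete, here is a small sketch of a step runner that carries shared state between steps and retries a failing step. It is not taken from any particular framework; `WorkflowState`, `run_steps`, and the example steps are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class WorkflowState:
    results: dict[str, object] = field(default_factory=dict)  # output per step
    errors: list[str] = field(default_factory=list)           # failed attempts

Step = Callable[[WorkflowState], object]

def run_steps(steps: list[tuple[str, Step]], max_attempts: int = 2) -> WorkflowState:
    state = WorkflowState()
    for name, step in steps:
        for attempt in range(1, max_attempts + 1):
            try:
                state.results[name] = step(state)  # later steps can read this
                break
            except Exception as exc:
                state.errors.append(f"{name} attempt {attempt}: {exc}")
        else:
            # Reached only when every attempt raised.
            raise RuntimeError(f"step {name!r} failed after {max_attempts} attempts")
    return state

# Usage: the first fetch fails once, then succeeds on retry.
calls = {"n": 0}

def flaky_fetch(state: WorkflowState) -> list[int]:
    calls["n"] += 1
    if calls["n"] == 1:
        raise TimeoutError("upstream timed out")
    return [3, 4]

def total(state: WorkflowState) -> int:
    return sum(state.results["fetch"])

final = run_steps([("fetch", flaky_fetch), ("total", total)])
```

Real orchestration layers add persistence, backoff, and branching on top of this, but the core idea is the same: state lives outside the model, and failures are recorded rather than silently swallowed.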

For businesses, the challenge is no longer "Can we connect the AI to the system?" but "How do we manage hundreds of AI agents operating across our systems?" This transition moves the focus from raw model performance (which is getting cheaper) to the orchestration layer: the software that manages the agents.

The Middle Layer Battleground

This dynamic fosters a fascinating competition. If the foundation models (GPT-5, Claude 4) are the raw engine, the orchestration framework (LangChain, AutoGen, OpenClaw) is the vehicle. It dictates speed, safety, and integration capacity.

Industry watchers are observing "The Middle Layer Battle," where the proprietary advantage might soon lie not in training the next trillion-parameter model, but in building the most reliable, scalable, and secure agent framework that can effectively utilize *any* available model.

This means developers are gaining powerful, democratized tooling to build specialized "AI employees" tailored to niche corporate functions. An LLM paired with OpenClaw might become your dedicated financial reconciliation agent today, and tomorrow, with slightly different API definitions, it becomes your complex logistics planner.

The Implications: Productivity, Risk, and the Future Workforce

The emergence of highly capable, API-driven AI agents has profound implications spanning productivity boosts, necessary security upgrades, and the fundamental nature of white-collar work.

Productivity Through Autonomy

For technical teams and product managers, this is the dawn of true automation. Tasks that required sequential human steps—validate user request, query DB A, transform data, post to API B, send confirmation email C—can now be compressed into a single, coherent agentic task. This moves AI from being an augmentation tool to an actual autonomous worker capable of complex throughput.

This capability lowers the barrier to entry for advanced automation. Instead of requiring a specialized robotic process automation (RPA) engineer to code every single step, a domain expert can simply define the available tools (APIs) and instruct the LLM on the desired outcome.

The Non-Negotiable Need for Governance

However, granting an LLM access to company infrastructure introduces immense risk. If an LLM has the "function" to delete records or modify deployment configurations, a single hallucination or a poorly constructed prompt could lead to catastrophic outcomes. This reality forces businesses to mature their AI governance strategies rapidly.

Expert analysis on "Governing the Autonomous Workforce" stresses the critical need for Policy-as-Code and strict sandboxing, ensuring that LLM actions are auditable, reversible, and strictly confined by enterprise security policies.

The AI employee must operate within a heavily monitored cage. Future frameworks will need to incorporate sophisticated authorization layers (who can the agent talk to?), constraint layers (what actions are forbidden?), and detailed logging that ties every API call executed back to the model output that triggered it. This level of security auditing is mandatory before any large enterprise will entrust critical infrastructure management to an autonomous agent.
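One way to picture those layers is a thin wrapper that every tool call must pass through. The sketch below is an assumption-laden illustration, not a feature of any named framework: `GuardedToolbox`, `PolicyViolation`, and the example tools are hypothetical, and a real deployment would load its rules from a policy engine rather than in-memory sets.

```python
from dataclasses import dataclass, field
from typing import Callable

class PolicyViolation(Exception):
    """Raised when an agent requests a call that policy does not permit."""

@dataclass
class GuardedToolbox:
    tools: dict[str, Callable[..., object]]
    allowed: dict[str, set[str]]   # authorization: agent id -> callable tools
    forbidden: set[str]            # constraints: tools no agent may call
    audit_log: list[dict] = field(default_factory=list)

    def call(self, agent_id: str, tool_name: str, **kwargs) -> object:
        entry = {"agent": agent_id, "tool": tool_name, "args": kwargs, "allowed": False}
        self.audit_log.append(entry)  # record the attempt before deciding
        if tool_name in self.forbidden:
            raise PolicyViolation(f"{tool_name} is forbidden")
        if tool_name not in self.allowed.get(agent_id, set()):
            raise PolicyViolation(f"{agent_id} may not call {tool_name}")
        entry["allowed"] = True
        return self.tools[tool_name](**kwargs)

box = GuardedToolbox(
    tools={
        "read_record": lambda record_id: {"id": record_id},
        "delete_record": lambda record_id: None,
    },
    allowed={"support-bot": {"read_record", "delete_record"}},
    forbidden={"delete_record"},
)
record = box.call("support-bot", "read_record", record_id=7)
```

Note that the forbidden list wins even when an agent's allow list includes the tool, and that denied attempts are logged too, since a refused call is often the most interesting line in an audit.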

Actionable Insights for the Road Ahead

For organizations looking to harness the power of agentic AI, a clear strategy must be adopted that prioritizes safety alongside innovation:

1. Start with Internal, Low-Stakes Tooling

Do not immediately connect your flagship customer API to your agent. Begin by defining functions for non-critical, internal data retrieval, or simple dashboard updates. This allows your engineers to become proficient in defining reliable function schemas and understanding the LLM’s reasoning paths without risking production integrity.

2. Invest in Orchestration Maturity

Evaluate agent frameworks based on their ability to manage complexity. Does the framework handle multi-step dependency chains well? How easy is it to swap out GPT for a more cost-effective open-source model when the task permits? The framework you choose today dictates your scalability tomorrow.
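The model-swapping question can be tested with a very small abstraction. The following sketch assumes a hypothetical `ChatModel` interface with a single `complete` method; `HostedModel` and `LocalModel` are stubs standing in for real clients, and the routing rule in `pick_model` is invented for illustration.

```python
from typing import Protocol

class ChatModel(Protocol):
    def complete(self, prompt: str) -> str: ...

class HostedModel:
    """Stub for a hosted commercial model client."""
    def complete(self, prompt: str) -> str:
        return f"[hosted] {prompt}"

class LocalModel:
    """Stub for a cheaper open-source model served in-house."""
    def complete(self, prompt: str) -> str:
        return f"[local] {prompt}"

def pick_model(task_complexity: str) -> ChatModel:
    # Route only demanding tasks to the more expensive model.
    return HostedModel() if task_complexity == "high" else LocalModel()

def summarize(model: ChatModel, text: str) -> str:
    # Agent code depends on the interface, never on a specific vendor.
    return model.complete(f"Summarize: {text}")

cheap = summarize(pick_model("low"), "quarterly report")
costly = summarize(pick_model("high"), "quarterly report")
```

If swapping a model in your chosen framework requires touching more than the equivalent of `pick_model`, that is a signal about how tightly the framework couples you to one provider.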

3. Embrace the Audit Trail

Security posture must evolve. Assume everything the AI does will eventually need to be explained to a compliance officer. Build logging systems now that capture the LLM’s internal monologue—the prompt, the tool selection, the input parameters, and the output response—for every single transaction.
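A minimal version of such a log is one structured record per transaction, written as a line of JSON. The field names below mirror the four items listed above; `AuditRecord`, `write_audit`, the `process_refund` tool, and the sample values are hypothetical and chosen only for this sketch.

```python
import io
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import TextIO

@dataclass
class AuditRecord:
    transaction_id: str
    prompt: str        # what the model was asked
    tool: str          # which tool it selected
    parameters: dict   # the input parameters it produced
    response: dict     # what the tool returned
    timestamp: str     # UTC, ISO 8601

def write_audit(stream: TextIO, transaction_id: str, prompt: str,
                tool: str, parameters: dict, response: dict) -> AuditRecord:
    record = AuditRecord(
        transaction_id=transaction_id,
        prompt=prompt,
        tool=tool,
        parameters=parameters,
        response=response,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    # One JSON object per line (JSON Lines) keeps the log easy to search.
    stream.write(json.dumps(asdict(record), sort_keys=True) + "\n")
    return record

log = io.StringIO()  # stands in for a file or log shipper
write_audit(
    log,
    transaction_id="txn-001",
    prompt="Refund order A-17",
    tool="process_refund",
    parameters={"order_id": "A-17", "amount": 25.0},
    response={"status": "ok"},
)
parsed = json.loads(log.getvalue())
```

Writing to any `TextIO` keeps the sketch testable in memory; in practice the stream would be an append-only file or a logging pipeline with retention rules set by your compliance team.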

4. Redefine Roles, Not Just Tasks

The AI employee won't just replace a single task; it will absorb an entire *role* composed of many sequential steps. Focus hiring and training efforts on roles that manage and supervise AI fleets: the AI Ethicists, the Prompt Engineers who manage tool definitions, and the AI Infrastructure Architects.

Conclusion: Building the Digital Organization of Tomorrow

The momentum is undeniable. The integration of LLMs with external systems via standardized function calling, as demonstrated by platforms like OpenClaw, signifies a fundamental shift from conversational AI to operational AI. We are moving toward a future where the majority of routine, API-driven digital labor will be executed by autonomous agents.

This isn't science fiction; it’s the next iteration of enterprise software deployment, driven by sophisticated orchestration frameworks built upon the reasoning power of the world’s best foundation models. While the technological capability is accelerating at breakneck speed, the real test for business leaders will be mastering the accompanying governance and security structures. The organization that masters the safe deployment of its digital "AI employees" will define the next decade of industrial efficiency.