The LLM Arms Race: Benchmarks, Agents, and Global Power Dynamics Post-M2.5 vs GPT-5.2

The pace of Large Language Model (LLM) development has never been faster. Every few months, a new benchmark surfaces, claiming superior performance, often pitting established titans like OpenAI (GPT), Google (Gemini), and Anthropic (Claude) against rapidly emerging challengers, such as MiniMax. A recent comparative analysis featuring models like MiniMax M2.5, GPT-5.2, Claude Opus 4.6, and Gemini 3.1 Pro highlights not just incremental performance gains, but a fundamental shift in *how* these models are being used and evaluated.

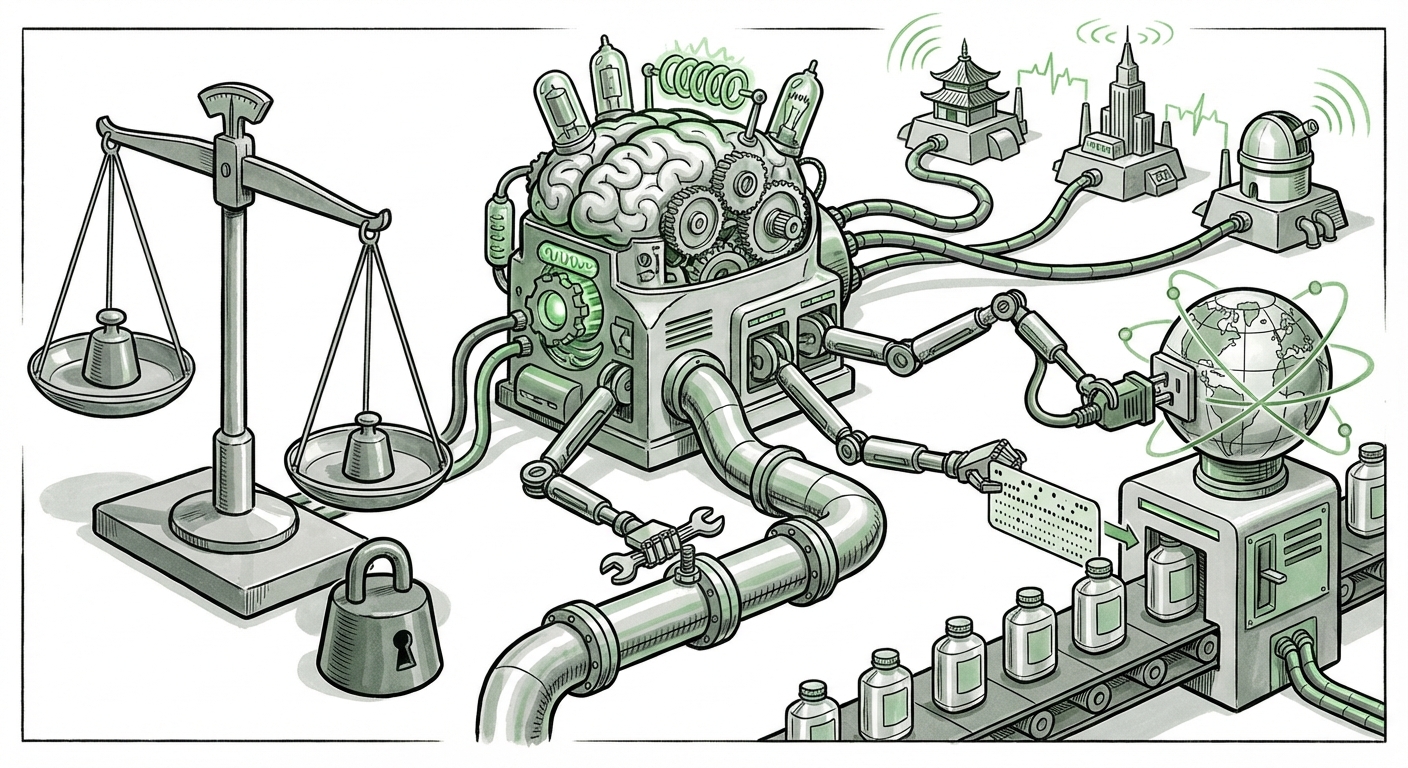

The real story isn't just *which* number is higher on a static test. It’s about **actionability**—the ability of these models to interact with the real world via tools—and the **global distribution** of AI power. To truly understand the future trajectory of AI, we must look beyond the raw scores and explore three critical pillars: the evolution of evaluation, the explosion of agentic workflows, and the geopolitical spread of capability.

The Mirage of Static Benchmarks: Why We Need Smarter Evaluations

When we see headlines comparing Model A scoring 88% on a test versus Model B scoring 87%, it feels concrete. However, this traditional approach to benchmarking, which relies on static datasets like MMLU or HumanEval, is rapidly losing its usefulness. Why? Because public test items can leak into training data, so top-tier models may be partly 'memorizing' or overfitting to these tests rather than demonstrating general capability.

The Saturation Point

For technical audiences, this is the 'law of diminishing returns' applied to evaluation. When the best models cluster within a single percentage point on a fixed test, the difference becomes noise, not signal. For a general audience, think of it like this: If every student takes the same spelling test, eventually everyone gets 100%. That doesn't mean they can suddenly write a novel or argue a complex legal case.
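To make the "noise, not signal" point concrete, here is a rough sketch of how one might check whether a one-point gap is statistically meaningful. It assumes each test item is an independent pass/fail trial and uses a simple unpaired two-proportion z-score; the function name and the item counts are illustrative assumptions, not figures from any benchmark discussed here.

```python
import math

def accuracy_gap_z(acc_a: float, acc_b: float, n_items: int) -> float:
    """z-score for the gap between two accuracies, each measured on n_items.

    Assumption: every item is an independent Bernoulli trial, and the two
    scores are treated as unpaired samples (a simplification).
    """
    var_a = acc_a * (1 - acc_a) / n_items
    var_b = acc_b * (1 - acc_b) / n_items
    return (acc_a - acc_b) / math.sqrt(var_a + var_b)

# Hypothetical test sizes: the same 88% vs 87% gap on a small and a large test.
z_small = accuracy_gap_z(0.88, 0.87, 1_000)     # roughly 0.68
z_large = accuracy_gap_z(0.88, 0.87, 100_000)   # roughly 6.8

# |z| below about 1.96 means the gap is not significant at the usual 5% level.
print(f"1,000 items:   z = {z_small:.2f}")
print(f"100,000 items: z = {z_large:.2f}")
```

Under these assumptions, a one-point lead on a thousand-item test is well inside the noise, while the same lead would only become convincing on a far larger test.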

This has spurred a necessary pivot. As corroborated by industry analysis exploring **"new standards for AI evaluation beyond MMLU,"** the industry is now demanding **agentic benchmarks**. These new tests don't just ask if the model knows facts; they ask if the model can plan, execute steps, use tools (like a calculator, a search engine, or a database), correct its own mistakes, and ultimately achieve a complex goal. This shift directly validates the importance of the technological capability highlighted in the benchmark battle: function calling.
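As a minimal sketch of what "agentic" scoring could look like, the toy harness below grades an agent on the final state of an environment rather than on the text it produces. Everything here (the `Task` structure, the dictionary environment, the scripted agent) is a hypothetical illustration, not the design of any published benchmark.

```python
from dataclasses import dataclass
from typing import Callable

Env = dict  # toy environment: a mutable dict standing in for a real system

@dataclass
class Task:
    name: str
    setup: Callable[[], Env]         # builds a fresh environment for the task
    goal_met: Callable[[Env], bool]  # inspects the final state, not the text

def success_rate(agent: Callable[[str, Env], None], tasks: list[Task]) -> float:
    """Fraction of tasks whose goal check passes after the agent has acted."""
    passed = 0
    for task in tasks:
        env = task.setup()
        try:
            agent(task.name, env)
        except Exception:
            continue  # a crash counts as a failed task
        if task.goal_met(env):
            passed += 1
    return passed / len(tasks)

def scripted_agent(task_name: str, env: Env) -> None:
    """Stand-in for a real agent: it only knows how to add a user."""
    if task_name == "add_user":
        env["users"].append("alice")

tasks = [
    Task("add_user", lambda: {"users": []}, lambda e: "alice" in e["users"]),
    Task("close_ticket", lambda: {"ticket_open": True}, lambda e: not e["ticket_open"]),
]
rate = success_rate(scripted_agent, tasks)
print(rate)  # 0.5: one of the two goals was reached
```

The design choice worth noting is that the grader never reads the agent's prose; only the outcome in the environment counts.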

The Engine of Agency: Function Calling and API Integration

The most profound technical trend demonstrated by the comparison is the universal emphasis on **Function Calling**. The ability of an LLM to call external APIs—to "use tools"—is the key differentiator between a sophisticated chatbot and a true autonomous agent.

Imagine the LLM as the brain and the external tools (for example, public MCP servers deployed as API endpoints) as the hands and feet. The brain can read, write, and reason, but it cannot physically book a flight or update a database entry without its hands. Function calling provides those hands.
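The brain-and-hands picture can be sketched in a few lines. The example below assumes a generic, vendor-neutral format: the tool is described to the model with a JSON-Schema-style specification, and the model replies with a JSON object naming the tool and its arguments. The `book_flight` function, the spec layout, and the sample model output are all hypothetical.

```python
import json

def book_flight(origin: str, destination: str) -> dict:
    """Hypothetical tool; a real one would call an airline or travel API."""
    return {"status": "booked", "origin": origin, "destination": destination}

# JSON-Schema-style description that would be shown to the model (assumed format).
TOOL_SPECS = [{
    "name": "book_flight",
    "description": "Book a flight between two airports.",
    "parameters": {
        "type": "object",
        "properties": {
            "origin": {"type": "string"},
            "destination": {"type": "string"},
        },
        "required": ["origin", "destination"],
    },
}]

# Maps tool names the model may emit to the Python callables behind them.
TOOL_REGISTRY = {"book_flight": book_flight}

def dispatch(tool_call_json: str) -> dict:
    """Parse the model's tool call and run the matching function."""
    call = json.loads(tool_call_json)
    func = TOOL_REGISTRY[call["name"]]
    return func(**call["arguments"])

# Example of what a model might emit when it decides to use the tool.
model_output = '{"name": "book_flight", "arguments": {"origin": "LIS", "destination": "SFO"}}'
result = dispatch(model_output)
print(result)
```

The model never executes anything itself; it only produces the structured request, and the surrounding application decides whether and how to run it.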

From Prompting to Orchestration

For developers and IT leaders, this signals the end of purely 'prompt-in, text-out' workflows. The future demands **orchestration**. We need frameworks that manage the LLM's decision-making process as it decides when to call a tool, which tool to use, and how to process the result it gets back.
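A minimal orchestration loop might look like the sketch below. It uses a scripted `fake_model` in place of a real LLM so the example runs on its own; the message format, the `tool_call`/`answer` decision types, and the `add` tool are assumptions made for illustration rather than any framework's actual API.

```python
from typing import Callable

def add(a: float, b: float) -> float:
    return a + b

TOOLS: dict[str, Callable] = {"add": add}

def fake_model(messages: list[dict]) -> dict:
    """Scripted stand-in for an LLM: requests a tool once, then answers."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"type": "answer", "content": f"The total is {last['content']}."}
    return {"type": "tool_call", "name": "add", "args": {"a": 2, "b": 3}}

def run_agent(model: Callable[[list[dict]], dict], user_prompt: str, max_steps: int = 5) -> str:
    """Loop: ask the model, run any tool it requests, feed the result back."""
    messages = [{"role": "user", "content": user_prompt}]
    for _ in range(max_steps):
        decision = model(messages)
        if decision["type"] == "answer":
            return decision["content"]
        tool = TOOLS[decision["name"]]      # which tool to use
        result = tool(**decision["args"])   # execute it
        # Hand the result back so the model can decide what to do next.
        messages.append({"role": "tool", "name": decision["name"], "content": result})
    raise RuntimeError("agent did not finish within max_steps")

final = run_agent(fake_model, "What is 2 + 3?")
print(final)  # The total is 5.
```

The `max_steps` cap is a deliberate design choice: without it, a model that keeps requesting tools would loop forever.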

Sources discussing the **"LLM function calling API standardization trends"** show that this capability is maturing rapidly. It’s moving from an experimental feature to a core, expected component of enterprise AI deployment. Whether it's scheduling meetings, executing complex financial transactions, or managing cloud infrastructure, the LLM’s value skyrockets when it can reliably interact with live, external systems.

Actionable Insight for Business: Start auditing your core business processes for tasks that require interacting with existing enterprise software. These are the ideal targets for immediate integration via LLM function calling agents. The ROI will come not from better text generation, but from automated process execution.

A Multipolar AI World: The Rise of Regional Powerhouses

The inclusion of a model like MiniMax alongside the familiar American giants is a critical indicator of the shifting balance of power in AI development. It suggests that cutting-edge capability is no longer developed exclusively within the confines of Silicon Valley or Seattle.

Beyond the Western Narrative

The competitive analysis involving MiniMax forces us to look at the **"Geopolitical and Commercial Landscape of Specialized LLMs."** Regional players often have distinct advantages:

- Data Access: Their models are often trained on large, locally relevant datasets that Western models might miss, which can lead to stronger performance in specific languages, cultural contexts, or regulatory environments.

- Deployment Speed: Competition is fierce domestically, pushing deployment cycles faster than might be seen in markets where one or two players dominate.

- Strategic Alignment: These models are often strategically positioned to serve massive domestic or regional enterprise needs directly.

This fragmentation is good news for the overall technology ecosystem. When multiple major players compete fiercely on functionality (benchmarks) and capability (tool use), innovation accelerates. It means businesses are no longer forced into a single vendor ecosystem for their most advanced AI needs.

The Implications of Model Specialization

We are moving away from the idea of one 'master model' that does everything adequately. Instead, the future is a mosaic of specialized models. A top-tier generalist model might still win on creative writing, but a focused regional model like MiniMax might offer superior performance in specific coding tasks or high-volume enterprise data processing within its native environment.

This mirrors the evolution of cloud computing: initially dominated by one or two giants, now thriving with specialized providers. AI is following suit. Developers will soon select their foundation model not just by general capability score, but by its specific fluency in API integration and its familiarity with niche data domains.

The Future Landscape: What This Means for You

Synthesizing these three trends—the maturing of evaluation, the adoption of agency, and the globalization of development—paints a clear picture of the next 18 months in AI.

For Developers and Engineers: Master the Orchestrator

The most valuable skills will pivot from simple prompt engineering to **agent orchestration**. Understanding how to securely expose internal APIs to an LLM, define clear function schemas, and implement robust error handling when an LLM calls a tool incorrectly will become standard practice. Frameworks that simplify this integration will see massive adoption, as they manage the messy middle ground between the model and the operational endpoint.
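One way to handle a model that calls a tool incorrectly is to validate the call before executing it and return a structured error the model can read and retry against. The sketch below uses only the standard library; the `update_record` tool, its simplified schema, and the error payload shape are hypothetical choices for illustration.

```python
import json

# Simplified, hypothetical schema: required argument names and their types.
SCHEMA = {
    "name": "update_record",
    "required": {"record_id": int, "status": str},
}

def update_record(record_id: int, status: str) -> dict:
    """Hypothetical tool; a real one would write to a database."""
    return {"record_id": record_id, "status": status}

def safe_call(raw_call: str) -> dict:
    """Validate a model-issued call; return an error payload instead of raising."""
    try:
        call = json.loads(raw_call)
    except json.JSONDecodeError as exc:
        return {"ok": False, "error": f"invalid JSON: {exc.msg}"}
    if not isinstance(call, dict) or call.get("name") != SCHEMA["name"]:
        return {"ok": False, "error": "unknown tool"}
    args = call.get("arguments")
    if not isinstance(args, dict):
        return {"ok": False, "error": "arguments must be an object"}
    for field, expected in SCHEMA["required"].items():
        if field not in args:
            return {"ok": False, "error": f"missing argument: {field}"}
        if not isinstance(args[field], expected):
            return {"ok": False, "error": f"{field} must be {expected.__name__}"}
    extra = set(args) - set(SCHEMA["required"])
    if extra:
        return {"ok": False, "error": f"unexpected arguments: {sorted(extra)}"}
    return {"ok": True, "result": update_record(**args)}

good = safe_call('{"name": "update_record", "arguments": {"record_id": 7, "status": "closed"}}')
bad = safe_call('{"name": "update_record", "arguments": {"record_id": "7", "status": "closed"}}')
print(good)
print(bad)
```

Returning the error as data, rather than raising, lets an orchestration loop pass the message back to the model so it can correct the call on the next step.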

For Businesses and Strategy Leaders: Embrace the Hybrid Model

The era of betting exclusively on one vendor is over. Companies must adopt a multi-model strategy. You might use GPT-5.2 for high-stakes strategic analysis, Claude Opus 4.6 for complex contractual summaries, and a powerful regional model like MiniMax for localized, high-throughput customer service automation where data residency or specific language nuance is key.

Furthermore, the focus must shift from ROI on *content generation* to ROI on *process automation* enabled by function calling. This requires rigorous measurement against agentic benchmarks, not just language fluency scores.
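The multi-model strategy described above can be expressed as a small routing table. This is only a sketch: the task categories mirror the examples in the text, while the model strings are placeholder labels (not real API model identifiers) and the `needs_local_residency` flag is an assumed way to encode the data-residency concern.

```python
# Placeholder labels for illustration; not actual vendor model IDs.
ROUTES = {
    "strategic_analysis": "gpt-5.2",
    "contract_summary": "claude-opus-4.6",
    "regional_support": "minimax-m2.5",
}
DEFAULT_MODEL = "gpt-5.2"

def pick_model(task_type: str, needs_local_residency: bool = False) -> str:
    """Choose a model label for a task; residency requirements take priority."""
    if needs_local_residency:
        return ROUTES["regional_support"]
    return ROUTES.get(task_type, DEFAULT_MODEL)

print(pick_model("contract_summary"))
print(pick_model("customer_chat", needs_local_residency=True))
```

Keeping the routing in data rather than scattered through application code makes it easier to swap a model when measured results on your own tasks change.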

For Society: Navigating Trust and Scale

As models become agents capable of executing actions, the stakes for reliability rise sharply. If a chatbot merely gives a wrong answer, that is an error. If an agentic system, powered by function calling, incorrectly executes a financial trade or modifies a critical server configuration, the consequences are immediate and severe. This reinforces the need for transparent, robust safety layers and sophisticated, agent-specific testing protocols, which is the same evolution we see in the demand for better benchmarks.
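One simple form a safety layer could take is an approval gate that blocks high-risk tools unless a human has signed off. The sketch below is illustrative only: the tool names, the `HIGH_RISK` set, and the `approved` flag are assumptions, and a production system would need auditing, authentication, and much more.

```python
class ApprovalRequired(Exception):
    """Raised when a high-risk action is attempted without sign-off."""

# Hypothetical tool names considered too risky to run automatically.
HIGH_RISK = {"execute_trade", "modify_server_config"}

def guarded_call(name: str, args: dict, tools: dict, approved: bool = False):
    """Run a tool, but refuse high-risk ones unless explicitly approved."""
    if name not in tools:
        raise KeyError(f"unknown tool: {name}")
    if name in HIGH_RISK and not approved:
        raise ApprovalRequired(f"{name} needs human approval")
    return tools[name](**args)

# Stand-in tools so the example runs on its own.
tools = {
    "get_quote": lambda symbol: {"symbol": symbol, "price": 100.0},
    "execute_trade": lambda symbol, qty: {"symbol": symbol, "qty": qty, "filled": True},
}

quote = guarded_call("get_quote", {"symbol": "ABC"}, tools)  # low risk: runs

try:
    guarded_call("execute_trade", {"symbol": "ABC", "qty": 10}, tools)
    blocked = False
except ApprovalRequired:
    blocked = True  # high risk without approval: refused

trade = guarded_call("execute_trade", {"symbol": "ABC", "qty": 10}, tools, approved=True)
print(quote, blocked, trade)
```

The gate sits outside the model, so a mistaken or manipulated tool call cannot bypass it simply by being phrased convincingly.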

Conclusion: Action Over Accuracy

The latest skirmishes in the LLM benchmark wars—MiniMax M2.5 battling GPT-5.2 and its peers—reveal that the technology has matured beyond raw intellectual horsepower. While the raw computational capability keeps climbing, the defining feature of the next generation of AI value is **autonomy through connection.**

The future belongs to the models and platforms that can best translate high-level reasoning into reliable, real-world action via standardized API integration. Performance is no longer just about what the model *knows*, but what it can *do*. This transition from knowledge engine to action engine is the defining technological narrative of this era.