The Agent Revolution: How OpenClaw and Function Calling Unlock Dynamic AI Workflows

For years, Large Language Models (LLMs) were brilliant but isolated—they could generate code, summarize text, and answer complex questions, but they couldn't do things in the real world beyond generating the right text sequence. That era is rapidly concluding. We are witnessing the birth of the truly dynamic AI Agent, and a recent development, exemplified by initiatives like OpenClaw, signals a crucial engineering pivot toward making these agents functional, networked, and scalable.

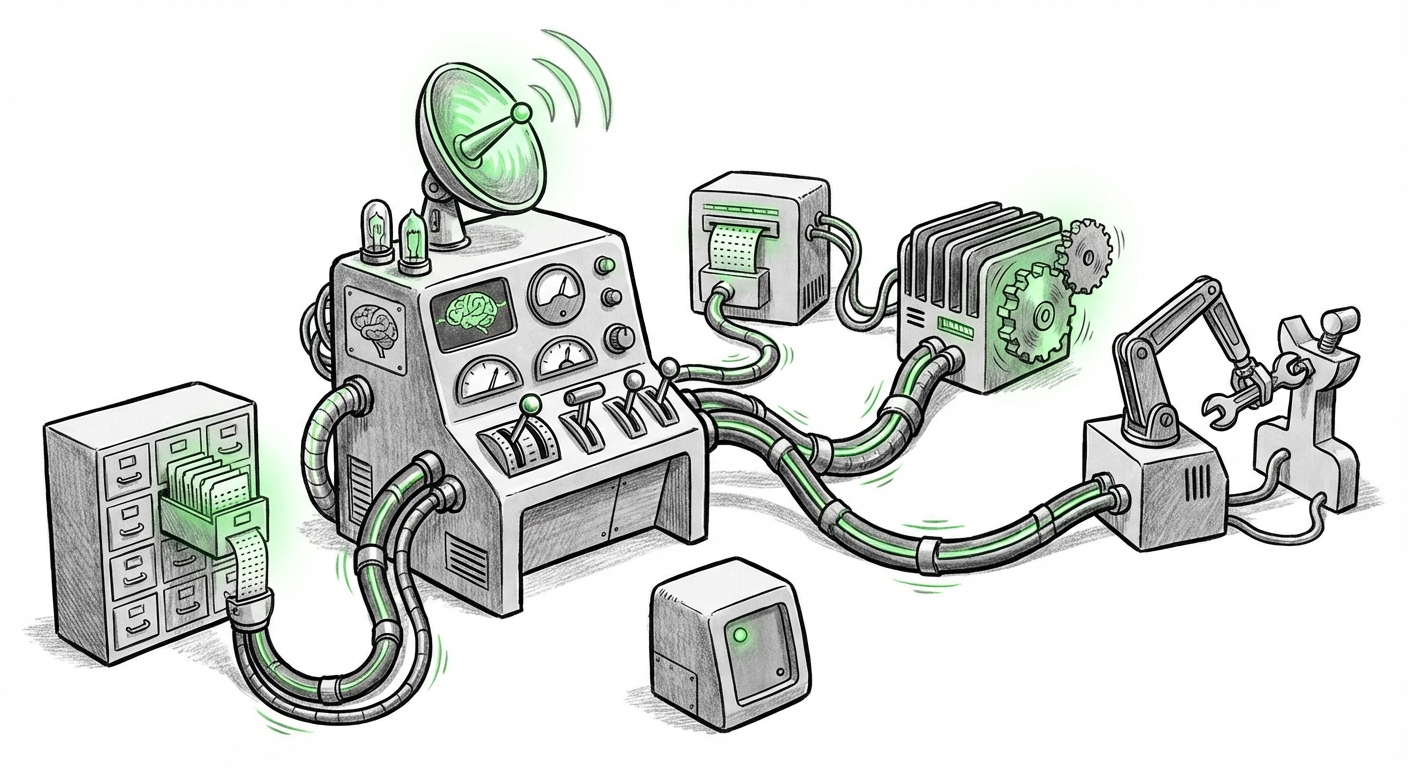

OpenClaw suggests a potent mechanism: deploying public Model Context Protocol (MCP) servers as accessible API endpoints, which LLMs can then integrate into their workflows using function calling. This isn't just an incremental improvement; it's a fundamental shift from AI as a static knowledge base to AI as an active, tool-wielding orchestrator. To understand the magnitude of this change, we must look beyond the specific announcement and analyze the supporting trends in agent frameworks, LLM capabilities, infrastructure standardization, and the security risks that come with them.

The Evolution: From Chatbot to Action-Taker

The initial wave of generative AI focused on mastering language (the "Language" in LLM). The next wave is focused on mastery of action. An AI Agent is essentially an LLM wrapped in a control loop that allows it to reason, plan, select a tool, execute the tool, observe the result, and replan its next step. Acting on feedback in this way, rather than generating text alone, is what separates an agent from a chatbot.
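The control loop described above can be sketched in a few lines. This is a minimal illustration, not any framework's actual implementation: `scripted_model` is a hypothetical stand-in for an LLM, `lookup_price` is a made-up tool, and the price is placeholder data.

```python
from typing import Callable

# Hypothetical tool registry: tool name -> plain Python callable.
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_price": lambda ticker: {"AAPL": "189.50"}.get(ticker, "unknown"),
}

def scripted_model(history: list[str]) -> dict:
    """Stand-in for an LLM: request a tool first, answer once a result is visible."""
    observations = [h for h in history if h.startswith("observation:")]
    if not observations:
        return {"action": "tool", "tool": "lookup_price", "input": "AAPL"}
    price = observations[-1].split(": ", 1)[1]
    return {"action": "finish", "answer": f"AAPL last traded at {price}"}

def run_agent(goal: str, model: Callable[[list[str]], dict], max_steps: int = 5) -> str:
    history = [f"goal: {goal}"]
    for _ in range(max_steps):
        decision = model(history)                   # reason and plan
        if decision["action"] == "finish":
            return decision["answer"]
        tool = TOOLS[decision["tool"]]              # select the tool
        result = tool(decision["input"])            # execute it
        history.append(f"observation: {result}")    # observe; the next pass replans
    return "stopped: step budget exhausted"

print(run_agent("What is AAPL trading at?", scripted_model))
```

The `max_steps` budget is a design choice worth keeping in any real loop: without it, a model that never decides to finish would run indefinitely.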

The key mechanism enabling this action is function calling (or tool use). Instead of answering from its training data alone, the agent can decide, "I need the current stock price for AAPL," and the LLM emits a structured command (usually JSON) that names a specific external function, such as a real-time data API, along with its arguments. The environment, not the model, executes the API call and feeds the result back to the LLM, which then uses that factual data to answer the user.
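To make the round trip concrete, here is a minimal sketch of the host-side half of function calling. The tool name `get_stock_price`, its schema, and the price data are assumptions made for illustration; real providers each wrap the call in their own envelope, but the pattern of "parse JSON, look up handler, return JSON" is the same.

```python
import json

# Hypothetical tool description of the kind sent to a model alongside the prompt.
GET_STOCK_PRICE_SPEC = {
    "name": "get_stock_price",
    "description": "Return the latest trade price for a ticker symbol.",
    "parameters": {
        "type": "object",
        "properties": {"ticker": {"type": "string"}},
        "required": ["ticker"],
    },
}

def get_stock_price(ticker: str) -> dict:
    # Placeholder data; a real handler would query a market-data API.
    prices = {"AAPL": 189.50}
    return {"ticker": ticker, "price": prices.get(ticker)}

HANDLERS = {"get_stock_price": get_stock_price}

def dispatch(model_output: str) -> str:
    """Parse the model's JSON tool call, run the handler, return a JSON result."""
    call = json.loads(model_output)
    handler = HANDLERS.get(call["name"])
    if handler is None:
        return json.dumps({"error": f"unknown tool: {call['name']}"})
    return json.dumps(handler(**call["arguments"]))

# What a model might emit when asked for the AAPL price.
model_output = '{"name": "get_stock_price", "arguments": {"ticker": "AAPL"}}'
print(dispatch(model_output))
```

The string returned by `dispatch` is what gets appended to the conversation so the model can phrase its final answer.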

Contextualizing the Framework Landscape

OpenClaw’s approach must be viewed against the backdrop of established agentic frameworks. If we examine the competitive landscape of building these agents—looking at comparisons between major libraries like LangChain, AutoGen, or specialized corporate tools—we see a consistent theme: the complexity lies in managing the state and the successful orchestration of heterogeneous tools.

Developer enthusiasm for these frameworks stems from the promise of reducing boilerplate code. Instead of painstakingly writing the decision logic for when and how to use a database query versus a search engine, the LLM handles the choice. OpenClaw seems to be offering a standardized way to plug into a network of pre-existing computational capabilities (the MCP servers). This suggests a future where building complex workflows requires less custom integration code and more sophisticated prompt design and schema definition.

For AI Engineers and Architects: The real value proposition here is standardization. If the industry coalesces around a few robust methods for tool registration and execution—whether through MCP or another standard—it dramatically lowers the barrier to creating complex, multi-step autonomous applications.

The Foundation: LLM Maturity in Tool Selection

An agent is only as good as its ability to correctly delegate tasks. The entire dynamic agent ecosystem hinges on the LLM’s proficiency at function calling accuracy.

Early models were notoriously unreliable, often hallucinating tool names or generating malformed arguments. Today, top-tier models like GPT-4o or Claude 3 Opus exhibit vastly improved reliability when supplied with clear, machine-readable function schemas (like OpenAPI specifications). Technical deep dives and benchmarks consistently show that while error rates drop significantly with larger models, they are never zero.

This means that while OpenClaw aims to use the LLM to route requests to public services, the operational risk remains tied to the reliability of the underlying model. A CTO concerned with production reliability must budget for a residual failure rate (illustratively, 0.5% or 1% of calls) in which the model sends the wrong parameter or chooses the wrong tool entirely.
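A small per-call failure rate compounds quickly in multi-step workflows. The sketch below assumes tool calls fail independently, which is a simplification, and uses the illustrative 0.5% and 1% rates from the text rather than measured figures.

```python
def workflow_success_probability(per_call_failure: float, steps: int) -> float:
    """Probability that every one of `steps` independent tool calls succeeds."""
    return (1.0 - per_call_failure) ** steps

for p in (0.005, 0.01):
    for n in (1, 10, 50):
        chance = workflow_success_probability(p, n)
        print(f"failure rate {p:.1%}, {n:>2} calls: {chance:.1%} succeed end to end")
```

Under these assumptions, a 1% per-call failure rate leaves only about a 60% chance that a 50-call workflow completes without a single bad tool call, which is why retries and validation matter more as workflows get longer.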

For ML Engineers: This ongoing refinement means prompt engineering is evolving into schema engineering. Successfully deploying an agent requires meticulous definition of the tools the LLM has access to, with descriptions unambiguous enough that the model cannot misinterpret each tool's purpose or arguments.
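One way to picture schema engineering is a tool definition with tight constraints plus a check that runs before anything executes. The sketch below is a deliberately small, hand-written validator covering only required fields, basic types, and enums; the `get_ticket_status` tool and its fields are hypothetical, and a production system would more likely use a full JSON Schema validator.

```python
# Hypothetical tool schema: narrow types and an enum leave little room for guessing.
TICKET_STATUS_SCHEMA = {
    "type": "object",
    "properties": {
        "ticket_id": {"type": "integer", "description": "Numeric ID, e.g. 4821."},
        "detail": {"type": "string", "enum": ["summary", "full"]},
    },
    "required": ["ticket_id"],
}

TYPE_MAP = {"string": str, "integer": int, "number": (int, float), "boolean": bool}

def validate_arguments(schema: dict, arguments: dict) -> list[str]:
    """Return a list of problems; an empty list means the call may proceed."""
    errors = []
    props = schema.get("properties", {})
    for name in schema.get("required", []):
        if name not in arguments:
            errors.append(f"missing required argument: {name}")
    for name, value in arguments.items():
        if name not in props:
            errors.append(f"unexpected argument: {name}")
            continue
        spec = props[name]
        expected = TYPE_MAP.get(spec.get("type"))
        if expected is not None:
            # bool is a subclass of int in Python, so reject it for non-boolean fields.
            is_stray_bool = isinstance(value, bool) and spec.get("type") != "boolean"
            if not isinstance(value, expected) or is_stray_bool:
                errors.append(f"{name}: expected {spec['type']}")
                continue
        if "enum" in spec and value not in spec["enum"]:
            errors.append(f"{name}: must be one of {spec['enum']}")
    return errors

print(validate_arguments(TICKET_STATUS_SCHEMA, {"ticket_id": "4821", "detail": "verbose"}))
```

Returning the error list to the model, rather than raising, gives it a chance to correct the call on the next turn.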

The Infrastructure Layer: Standardization Through Protocols

The most forward-looking element of the OpenClaw concept is the use of the **Model Context Protocol (MCP)** as the standardized access layer. This echoes historical shifts in computing where interoperability became paramount.

Think about the transition from proprietary networking standards to TCP/IP, or from disparate in-house communication methods to ubiquitous REST or gRPC APIs. The development community is hungry for a similar standardization for accessing distributed computational resources—especially specialized hardware, large data stores, or legacy enterprise systems—all orchestrated by AI.

If MCP emerges as a viable, low-latency, open standard for exposing these resources to LLMs, it solves a massive headache: every time a developer builds a new agent, they currently have to custom-wire it to whatever tool they need. A standardized protocol allows the LLM to treat an external cluster as just another "function" defined by a public contract, making integration seamless and instantly scalable across different providers.
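What "a function defined by a public contract" looks like on the wire can be sketched as follows. This assumes the JSON-RPC 2.0 framing and the `tools/call` method name used by the Model Context Protocol; the tool name `run_cluster_job` and its arguments are hypothetical, and no network transport is shown.

```python
import itertools
import json

_request_ids = itertools.count(1)

def build_tool_call(name: str, arguments: dict) -> str:
    """Wrap a tool invocation in a JSON-RPC 2.0 request envelope."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    })

def parse_response(raw: str) -> dict:
    """Return the result payload, or raise if the server reported an error."""
    message = json.loads(raw)
    if "error" in message:
        raise RuntimeError(f"tool call failed: {message['error'].get('message')}")
    return message["result"]

request = build_tool_call("run_cluster_job", {"job": "reindex", "priority": "low"})
print(request)

# Simulated server reply; a real client would read this from its transport.
reply = '{"jsonrpc": "2.0", "id": 1, "result": {"status": "queued"}}'
print(parse_response(reply))
```

Because the envelope is the same for every tool, the client code does not change when a new provider or capability is added; only the tool name and argument schema do.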

This is the 'WebAssembly for AI' argument in infrastructure. It suggests a future where computational services are decoupled from the models that call them, leading to unprecedented modularity and innovation in how AI services are chained together.

The Elephant in the Room: Security and Trust

The ability for an LLM to execute arbitrary external functions introduces profound security challenges. When an AI moves from generating text to executing code or making API calls, the attack surface grows to include every tool the agent can reach and every input that can influence its decisions. This is arguably the single biggest hurdle for enterprise adoption, and it must be addressed with rigor.

The primary concern revolves around Prompt Injection. An attacker may craft a seemingly innocent query that, when processed by the LLM, manipulates the agent into issuing malicious commands to the external tool. For instance, if the LLM has access to a database deletion tool, a clever prompt could convince it to delete tables by masking the command within a seemingly benign data request.

Security analysis of current agent systems highlights the critical need for robust sandboxing, stringent access control (least privilege), and input validation on the tool execution side. Just because the LLM *wants* to call the `delete_user_data` function doesn't mean it should be allowed without verifying the user's intent and authorization.
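A least-privilege check on the execution side might look like the sketch below. The policy table, role names, and the `get_user_profile` tool are assumptions for illustration; `delete_user_data` is the example from the text. The key point is that the decision depends on the human user's role and explicit confirmation, never on what the model asked for.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ToolPolicy:
    allowed_roles: frozenset
    destructive: bool = False

# Hypothetical allowlist: any tool not listed here can never be executed.
POLICIES = {
    "get_user_profile": ToolPolicy(frozenset({"support", "admin"})),
    "delete_user_data": ToolPolicy(frozenset({"admin"}), destructive=True),
}

class ToolCallDenied(Exception):
    pass

def authorize(tool_name: str, user_role: str, user_confirmed: bool = False) -> None:
    """Raise ToolCallDenied unless the requesting user may run this tool."""
    policy = POLICIES.get(tool_name)
    if policy is None:
        raise ToolCallDenied(f"{tool_name}: not on the allowlist")
    if user_role not in policy.allowed_roles:
        raise ToolCallDenied(f"{tool_name}: role '{user_role}' is not permitted")
    if policy.destructive and not user_confirmed:
        raise ToolCallDenied(f"{tool_name}: requires explicit user confirmation")

def is_allowed(tool_name: str, user_role: str, user_confirmed: bool = False) -> bool:
    try:
        authorize(tool_name, user_role, user_confirmed)
        return True
    except ToolCallDenied:
        return False

print(is_allowed("delete_user_data", "support"))
print(is_allowed("delete_user_data", "admin"))
print(is_allowed("delete_user_data", "admin", user_confirmed=True))
```

The confirmation flag should come from a channel the model cannot write to, such as a UI button, so that injected text cannot set it.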

For Security Analysts and Compliance Officers: Any deployment of agent technology like OpenClaw requires treating the LLM not as a trusted entity, but as a potentially compromised communication broker. Security must be built into the API gateway that serves the MCP endpoints, ensuring that the LLM's generated parameters are sanitized before execution.

Future Implications: The Rise of the Composable Enterprise

What do these trends—advanced agents, reliable function calling, and infrastructural standardization—mean for the future?

- Hyper-Automation of Business Processes: The static LLM digitized documents; the Agent will digitize entire workflows. Imagine an accounting department where an agent can handle invoice processing: ingest the invoice (OCR/Vision tool), match it against purchase orders (Database tool), flag discrepancies (Reasoning tool), and initiate payment (Financial API tool)—all with minimal human oversight beyond initial validation.

- Democratization of Complex Software Development: Agents capable of using tools can transition from writing isolated code snippets to managing entire projects. They will use project management APIs, source control APIs, testing environments, and deployment tools in sequence, effectively becoming junior software engineers capable of completing well-defined feature requests.

- The Interoperable AI Layer: If standards like MCP gain traction, we move toward an "AI Internet" where specialized, optimized models (one for vision, one for planning, one for language) can communicate seamlessly through standardized protocols, rather than monolithic models trying to do everything poorly.
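The invoice-processing workflow from the first bullet can be sketched as a short chain of tool calls with a review gate. Everything here is hypothetical: the purchase-order data is a placeholder for the database tool, and the payment step only returns a message instead of calling a financial API.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Invoice:
    po_number: str
    amount: float

# Placeholder purchase-order amounts standing in for the "Database tool".
PURCHASE_ORDERS = {"PO-1001": 250.00, "PO-1002": 980.00}

def match_purchase_order(invoice: Invoice) -> Optional[float]:
    return PURCHASE_ORDERS.get(invoice.po_number)

def find_discrepancy(invoice: Invoice, po_amount: Optional[float],
                     tolerance: float = 0.01) -> Optional[str]:
    if po_amount is None:
        return "no matching purchase order"
    if abs(invoice.amount - po_amount) > tolerance:
        return f"amount {invoice.amount:.2f} differs from PO amount {po_amount:.2f}"
    return None

def process_invoice(invoice: Invoice) -> str:
    po_amount = match_purchase_order(invoice)          # database tool
    problem = find_discrepancy(invoice, po_amount)     # reasoning step
    if problem:
        return f"needs review: {problem}"              # hand off to a human
    return f"payment initiated: {invoice.amount:.2f} against {invoice.po_number}"

print(process_invoice(Invoice("PO-1001", 250.00)))
print(process_invoice(Invoice("PO-1002", 1080.00)))
```

Routing every discrepancy to a person keeps the irreversible step, payment, behind a deterministic check rather than a model judgment.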

Actionable Insights for Today’s Innovators

For organizations looking to leverage the power demonstrated by OpenClaw, several immediate actions are necessary:

1. Audit Your Tool Inventory

Identify the critical, well-defined, read-only or low-risk API endpoints within your organization that an LLM might benefit from using (e.g., fetching internal documentation, checking ticket statuses). Design clear, unambiguous JSON schemas for these tools first. Do not give agents access to high-risk APIs until security guarantees are firmly established.
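A first pass at such an audit can be as simple as a table of endpoints with a human-assigned risk level, filtered down to what an agent may see. The endpoint names and risk labels below are hypothetical examples.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ToolEntry:
    name: str
    http_method: str
    risk: str  # "low", "medium", or "high", assigned by a human reviewer

# Hypothetical inventory of internal endpoints.
INVENTORY = [
    ToolEntry("search_internal_docs", "GET", "low"),
    ToolEntry("get_ticket_status", "GET", "low"),
    ToolEntry("update_ticket", "PATCH", "medium"),
    ToolEntry("delete_customer", "DELETE", "high"),
]

READ_ONLY_METHODS = {"GET", "HEAD"}

def agent_safe_tools(inventory: list) -> list:
    """Keep only endpoints that are both read-only and reviewed as low risk."""
    return [
        entry.name
        for entry in inventory
        if entry.http_method in READ_ONLY_METHODS and entry.risk == "low"
    ]

print(agent_safe_tools(INVENTORY))
```

Requiring both conditions is intentional: a `GET` endpoint can still leak sensitive data, so the HTTP method alone is not a sufficient signal.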

2. Invest in Agent Orchestration Maturity

Do not treat function calling as a simple feature; treat it as the core of your application logic. Explore frameworks that offer mature state management, error recovery, and human-in-the-loop feedback mechanisms for when the LLM fails its tool selection.
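Error recovery with a human fallback can be sketched as a bounded retry loop. The names here (`call_with_recovery`, `ToolSelectionError`, the flaky stand-in model) are all hypothetical; the pattern is to feed earlier errors back to the model and escalate once the retry budget runs out.

```python
from typing import Callable

class ToolSelectionError(Exception):
    """Raised when the model picks a missing tool or sends invalid arguments."""

def call_with_recovery(
    attempt: Callable[[str], str],
    request: str,
    escalate: Callable[[str, list], str],
    max_attempts: int = 3,
) -> str:
    errors: list = []
    for _ in range(max_attempts):
        # Include earlier errors so the model can correct itself on the retry.
        prompt = request if not errors else f"{request}\nPrevious errors: {errors}"
        try:
            return attempt(prompt)
        except ToolSelectionError as exc:
            errors.append(str(exc))
    return escalate(request, errors)  # human-in-the-loop fallback

def make_flaky(failures: int) -> Callable[[str], str]:
    """Stand-in for a model-driven step that fails a fixed number of times."""
    state = {"left": failures}
    def attempt(prompt: str) -> str:
        if state["left"] > 0:
            state["left"] -= 1
            raise ToolSelectionError("unknown tool: get_tickt_status")
        return "ticket 42 is open"
    return attempt

def escalate_to_human(request: str, errors: list) -> str:
    return f"escalated to human after {len(errors)} failed attempts"

print(call_with_recovery(make_flaky(2), "What is the status of ticket 42?", escalate_to_human))
print(call_with_recovery(make_flaky(5), "What is the status of ticket 42?", escalate_to_human))
```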

3. Standardize Communication Internally

If your company relies heavily on legacy systems, begin architecting a modern API gateway layer that translates legacy calls into standardized, LLM-friendly function descriptions. This future-proofs your infrastructure against the next generation of AI orchestrators, regardless of which specific framework wins the tooling war.
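One lightweight way to start such a translation layer is to derive function descriptions from the signatures of existing wrapper code. The sketch below uses Python's standard `inspect` module; `legacy_inventory_lookup` is a hypothetical wrapper around a legacy system, and the type mapping covers only a few primitive types.

```python
import inspect

PY_TO_JSON = {str: "string", int: "integer", float: "number", bool: "boolean"}

def describe_as_tool(func) -> dict:
    """Build an LLM-friendly function description from a Python signature."""
    properties, required = {}, []
    for name, param in inspect.signature(func).parameters.items():
        # Unannotated or unrecognized types fall back to "string".
        properties[name] = {"type": PY_TO_JSON.get(param.annotation, "string")}
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "parameters": {"type": "object", "properties": properties, "required": required},
    }

def legacy_inventory_lookup(sku: str, warehouse_id: int, include_reserved: bool = False) -> str:
    """Look up stock for a SKU in one warehouse."""
    # Placeholder result; a real wrapper would call the legacy system here.
    return f"{sku}@{warehouse_id}: 12 units"

print(describe_as_tool(legacy_inventory_lookup))
```

Generated descriptions are only a starting point: the docstrings and parameter names still need a human pass so the model gets unambiguous guidance.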