The AI Capital Crunch: Why Tech Giants Are Cutting Jobs While Building Data Centers

The narrative of the artificial intelligence boom has largely focused on software innovation: the stunning capabilities of large language models (LLMs) and the promise of automation. However, beneath every intelligent query and synthesized image lies a mountain of physical hardware that requires staggering amounts of money to build and power. A recent report that Oracle is planning significant job cuts to manage the costs of its AI data center expansion is a stark wake-up call: the AI revolution is not just an innovation challenge; it is a colossal financial one.

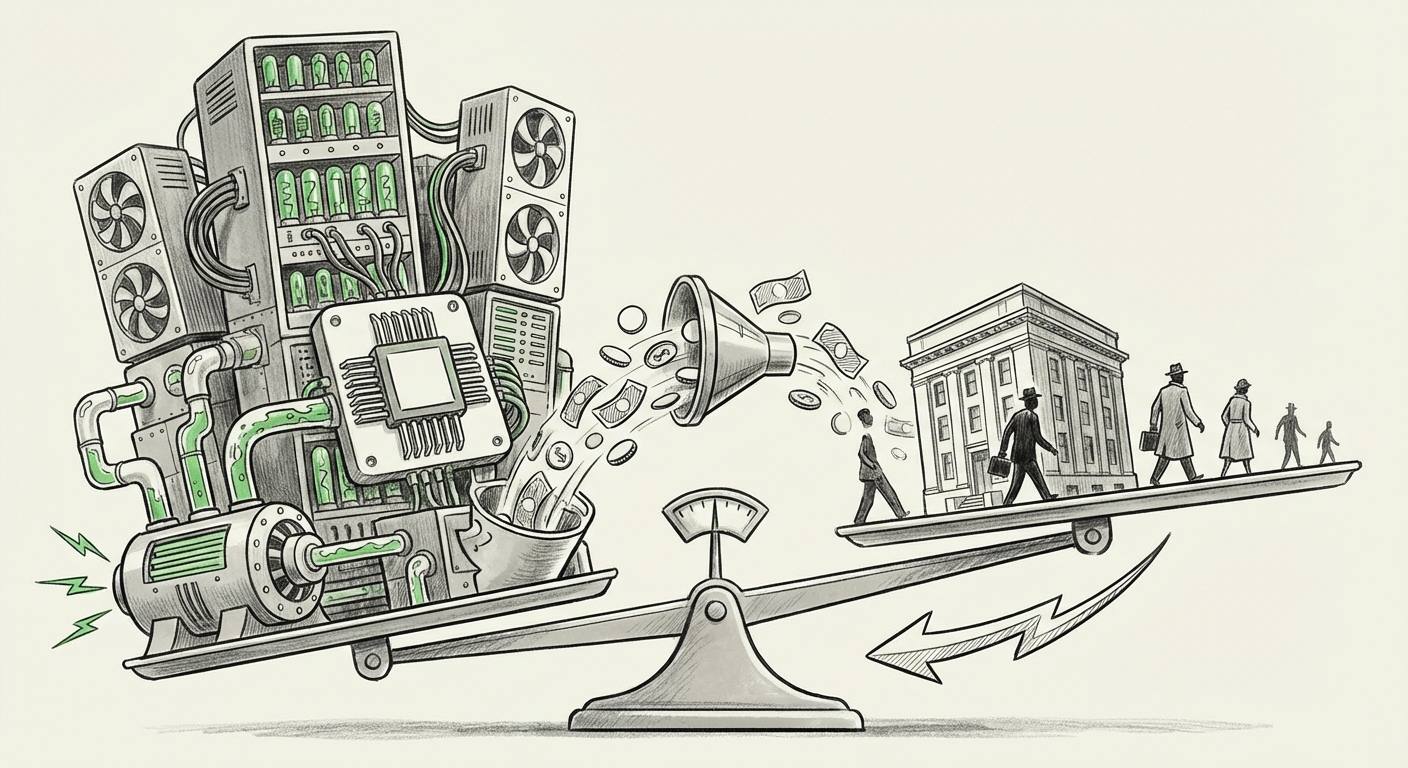

For an AI technology analyst, this dynamic, in which companies shed existing roles to fund future infrastructure, marks a critical inflection point in the technology sector. We are witnessing the Great Reallocation of Capital and Labor, driven largely by the insatiable demand for advanced compute.

The Unseen Cost of Intelligence: AI Infrastructure Spending

To understand why Oracle, or any major cloud provider, would resort to workforce reduction, we must first quantify the underlying expense. Building an AI factory—a modern data center optimized for training massive models—is fundamentally different from building a traditional server farm. It requires specialized, cutting-edge components.

The primary driver of this cost surge is the Graphics Processing Unit (GPU), particularly those designed by Nvidia. These specialized chips are the engines of generative AI, and they are both incredibly powerful and incredibly expensive. Furthermore, these GPUs generate immense heat, necessitating entirely new, costly cooling solutions (like liquid cooling) and massive upgrades to power grid access. This high-CapEx environment is industry-wide, not unique to one company.

Industry reporting on hyperscaler CapEx and AI data center expansion costs in 2024 confirms that the entire industry is prioritizing infrastructure. Microsoft, Google, and Amazon are spending tens of billions of dollars annually just to acquire the necessary chips and build the physical spaces to house them. For Oracle, whose Oracle Cloud Infrastructure (OCI) is playing aggressive catch-up against these titans, the financial strain of securing top-tier compute capacity is acute.

In simple terms, think of it like building a Formula 1 race team. You can have the best drivers (AI models), but if you don't spend the money on the best engine (the GPUs) and the fastest racetrack (the data center), you won't win the race. Oracle is investing heavily to build the track.

The Great Reallocation: Workforce Transformation Under the AI Mandate

If the infrastructure costs are rising, where does the money come from? This is where the second part of the development becomes critical: workforce restructuring. The strategy appears to be a painful, but calculated, shift from maintaining legacy operations to funding specialized AI development and infrastructure expansion.

Coverage of tech layoffs and AI-driven restructuring in 2024 reveals a pattern emerging across the tech ecosystem. Layoffs are not always a sign of failure; sometimes they are a sign of a strategic pivot. When a company decides that its future profitability hinges on offering cutting-edge AI services, it must ruthlessly cut costs in areas deemed non-essential or duplicative.

Automation and Consolidation: The Efficiency Play

For a massive enterprise vendor like Oracle, job cuts often target areas where efficiency can be gained immediately to fund long-term growth:

- Legacy Support and Sales: As workloads migrate to the cloud (and especially AI workloads), traditional, on-premises hardware sales and support roles become less critical.

- Redundant Roles Post-Acquisition: As reporting on job cuts around Oracle's integration of Cerner suggests, absorbing a vast acquisition creates immediate redundancies in HR, finance, and general operations, which can be streamlined using new, cheaper technologies, perhaps even AI itself.

- Operational Consolidation: Investing in automated data center management systems means fewer human operators are needed to run the expanded physical footprint. The new data centers are designed to be lean and efficient.

This trend suggests that the next phase of the AI transformation involves internal automation. The talent being hired is high-end, specialized AI researchers, chip architects, and cloud networking experts. The talent being reduced often falls into roles that are either aging out of relevance or are easily managed by the very software being developed.

Implications for the Future of Enterprise Technology

The Oracle situation serves as a high-profile case study for what the next decade of enterprise technology procurement will look like. The implications are profound:

1. Compute as the Ultimate Scarcity

For the foreseeable future, access to high-end compute (GPUs) will be the primary bottleneck for innovation. Companies that secure access—through large capital outlays or strategic partnerships—will dominate. This further entrenches the power of the hyperscalers (AWS, Azure, GCP, OCI) who can afford these investments.

2. The Bifurcation of the Tech Workforce

We are moving toward a "barbell" labor market within tech. On one end, you have the ultra-elite, highly compensated engineers who build, train, and manage the AI stack. On the other end, you have roles focused on prompt engineering, AI integration, and data curation. The middle ground—generalist IT support, legacy maintenance, and standardized sales roles—faces significant contraction pressure.

3. The Necessity of Strategic IT Investment

For CIOs and business leaders, the message is clear: AI is not a software upgrade; it is an infrastructure re-platforming project. Budgets must shift dramatically away from maintaining older systems and toward compute, whether as capital expenditure (CapEx) on owned hardware or as operational expenditure (OpEx) on rented cloud capacity. If a company cannot afford the infrastructure, it cannot afford to play the generative AI game effectively.
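The trade-off between paying up front for compute and renting it can be sketched as a simple break-even calculation. This is a minimal illustration only: the function name and every dollar figure below are hypothetical assumptions, not numbers from Oracle or any other provider, and a real budget model would also account for depreciation, utilization, and financing costs.

```python
from typing import Optional


def breakeven_months(
    capex_upfront: float,
    owned_monthly_cost: float,
    rented_monthly_cost: float,
) -> Optional[float]:
    """Return the number of months after which owning is cheaper than renting.

    Returns None when renting is never more expensive per month than owning,
    in which case the upfront purchase is never recovered.
    """
    monthly_saving = rented_monthly_cost - owned_monthly_cost
    if monthly_saving <= 0:
        return None
    return capex_upfront / monthly_saving


# Hypothetical figures, chosen only to make the arithmetic easy to follow.
months = breakeven_months(
    capex_upfront=1_200_000,     # assumed purchase price of an accelerator cluster
    owned_monthly_cost=20_000,   # assumed power, cooling, and staffing per month
    rented_monthly_cost=70_000,  # assumed cloud bill for equivalent capacity
)
print(months)  # 24.0
```

Under these assumed inputs, ownership pays for itself after two years; a shorter planning horizon or lower utilization would tilt the decision toward renting.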

Actionable Insights for Businesses and Stakeholders

How should businesses and employees navigate this tension between massive spending and cost-cutting?

For Technology Leaders (CIOs/CTOs):

Audit for AI Readiness, Not Just AI Adoption: Don't just look at which AI tools you are using. Look at your infrastructure budget. Are you prepared to handle the data egress and compute demands that advanced models create? If you rely heavily on providers that have been slow to invest in new accelerators (Oracle’s need to invest aggressively now illustrates the catch-up cost), you risk higher long-term costs or inferior performance.

Embrace "Compute-Aware" Architecture: Design systems that are conscious of their computational needs. Moving to a model that allows for workload elasticity—shifting burst tasks to external providers while keeping core, predictable data local—can help manage the initial, brutal upfront CapEx requirements.
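The elasticity idea can be sketched in a few lines: serve demand from local capacity first and send only the overflow to an external provider. This is a simplified illustration under stated assumptions, not a production scheduler. The names `Placement`, `place_workload`, `plan`, and `burst_share` are hypothetical, and "compute units" is an abstract measure introduced here for the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Placement:
    local_units: float  # compute units served by owned, predictable capacity
    burst_units: float  # compute units overflowed to an external provider


def place_workload(demand_units: float, local_capacity_units: float) -> Placement:
    """Fill local capacity first; send anything above it to an external provider."""
    if demand_units < 0 or local_capacity_units < 0:
        raise ValueError("demand and capacity must be non-negative")
    local = min(demand_units, local_capacity_units)
    return Placement(local_units=local, burst_units=demand_units - local)


def plan(demand_per_period: List[float], local_capacity_units: float) -> List[Placement]:
    """Apply the same placement rule independently to each period's demand."""
    return [place_workload(d, local_capacity_units) for d in demand_per_period]


def burst_share(placements: List[Placement]) -> float:
    """Fraction of total demand that was sent to the external provider."""
    total = sum(p.local_units + p.burst_units for p in placements)
    burst = sum(p.burst_units for p in placements)
    return burst / total if total else 0.0


# Hypothetical demand profile: two quiet periods and one spike.
placements = plan([40, 60, 150], local_capacity_units=100)
print(placements[2])            # Placement(local_units=100, burst_units=50)
print(burst_share(placements))  # 0.2
```

Sizing local capacity for the predictable baseline rather than the peak is what limits upfront spending in this sketch; the burst share then indicates how much of the bill moves to pay-as-you-go capacity.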

For Employees and Talent:

Become a Specialist in Automation Vectors: Focus your upskilling on roles that directly enhance the efficiency of the new infrastructure. This means mastering cloud architecture, specialized data pipelines, and MLOps (Machine Learning Operations) rather than general IT management.

Understand the P&L Narrative: If your current role supports a legacy revenue stream that AI directly threatens (e.g., managing aging on-prem hardware), proactively seek roles in emerging areas like cloud migration or AI integration within your current organization. Understand the balance sheet story your employer is telling.

Conclusion: A Necessary, Painful Evolution

Oracle’s reported maneuver of slashing jobs to fuel its data center expansion is not an isolated incident; it is the leading edge of a fundamental economic reality defining the AI era. The cost of delivering artificial intelligence at scale is monumental, and it demands immediate capital investment. This creates a necessary, though socially challenging, dynamic in which savings must be painfully extracted from existing operations to fund tomorrow’s computational might.

The future of AI is built on silicon and electricity, not just clever code. Those organizations that successfully navigate this Great Reallocation—prioritizing the right infrastructure investments while transforming their workforce to manage that infrastructure—will be the ones setting the pace for the next decade of technological progress.