The IP Showdown: How Government Licensing Demands Will Reshape the Future of Frontier AI

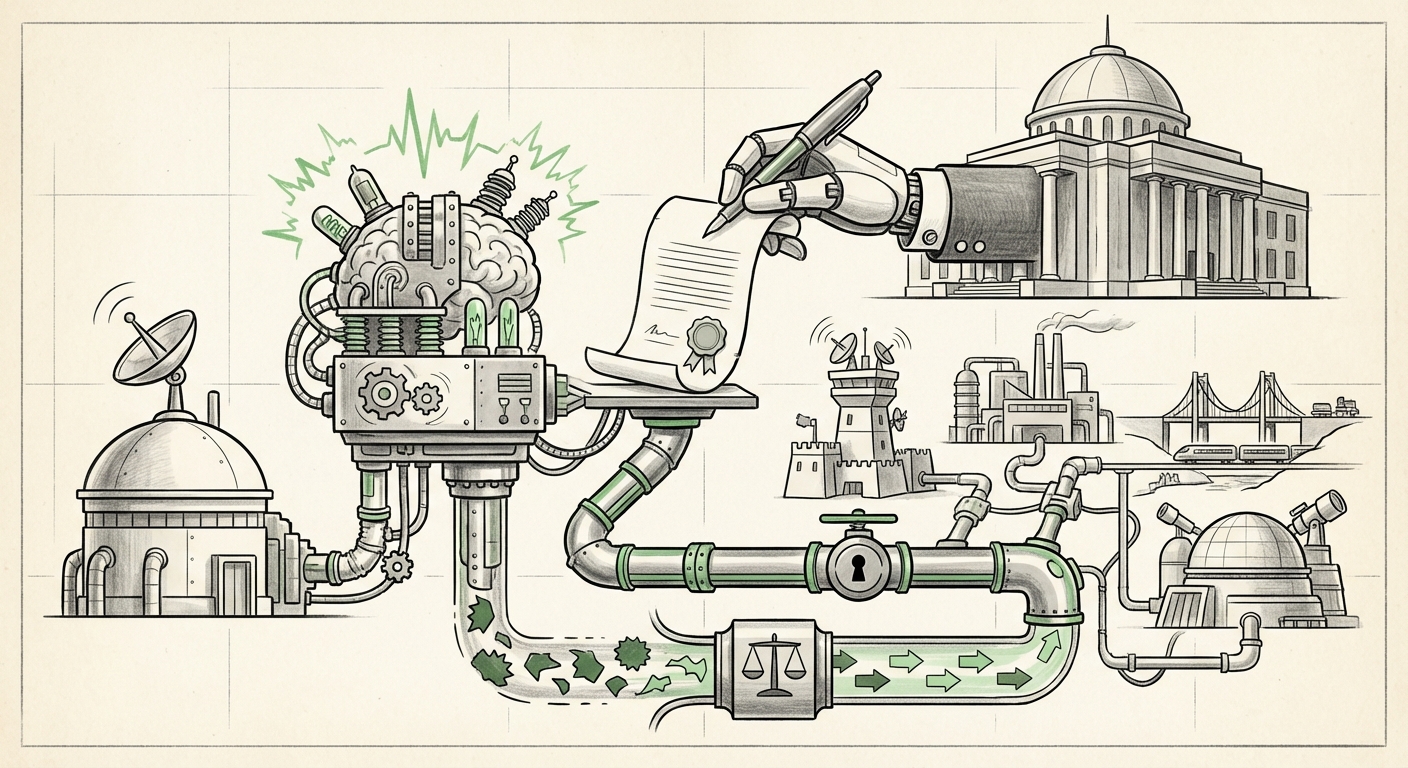

The rapid rise of artificial intelligence has placed it squarely in the crosshairs of governments worldwide, moving it from a niche technological concern to a critical domain of national security and economic policy. In the United States, this regulatory trajectory is taking a potentially disruptive turn. Recent reports indicate that the Trump administration has been drafting new rules for AI contracts that would require companies to grant the government an irrevocable license for "all lawful use" of their systems and would ban ideological bias in AI outputs.

This proposal isn't just boilerplate contract language; it is a fundamental attempt to redefine the relationship between the state and the innovators building the most powerful—and potentially most valuable—intellectual property on the planet: foundational AI models. For technologists, investors, and policy wonks alike, understanding the context and implications of this move is paramount to charting the future course of AI development.

Contextualizing the Contract: From Software Rights to AI Sovereignty

To grasp the weight of demanding "all lawful use" rights, we must first look at how the U.S. government traditionally buys technology. In federal procurement, the baseline rules are set by the Federal Acquisition Regulation (FAR), supplemented for defense contracts by the Defense Federal Acquisition Regulation Supplement (DFARS). For decades, when the government has paid for custom software or research, it has often secured specific rights to use the result, in categories such as "Unlimited Rights" or, in defense contracts, "Government Purpose Rights."

The critical question here is whether this new AI mandate merely extends existing **FAR clauses** for software licenses or whether it claims unprecedented control over the *underlying foundational models* themselves, which are distinct from any single application built on top of them. If a company develops a massive LLM and contracts for its use in a specific task (like document review), does the government now hold the right to deploy that core model in entirely unrelated areas?

The precedents point one way: the government already demands high levels of access to defense-related technology, but demanding universal rights over proprietary, high-cost, dual-use foundational models would be a significant leap. The open question is whether this is a reactive move driven by immediate security needs or part of a long-term federal AI procurement strategy to capture national AI capability. Either way, for government contractors it signals a major shift in risk assessment and in the expected return on investment.

The Innovation Trade-Off: IP Dilution vs. Access

The lifeblood of frontier AI development is massive, sustained private investment. Companies developing models like GPT-4, Claude, or Gemini are spending billions on compute power and research talent. Their business model relies heavily on retaining control over the core weights and architecture of these models, licensing access in controlled ways.

Legal analysis of mandatory IP licensing in government-funded AI research points to a clear risk: overly broad government licensing terms can have a potent chilling effect. If a startup knows that entering a single government contract means forfeiting broad rights to its most valuable IP, the incentive to pursue that contract, or even to develop that frontier model in the first place, diminishes significantly. Why bear the risk of billion-dollar training runs if the payoff is sharply curtailed by government mandates?

This leads to a central tension: Security demands access, but access may destroy the very innovation pipeline the government needs to secure. For venture capitalists funding these labs, the stability of IP rights is crucial. A system that forces immediate, irrevocable licensing could push high-value R&D further into less regulated, non-US jurisdictions, threatening American digital sovereignty.

The Philosophical Minefield: Banning Ideological Bias

Perhaps the most conceptually difficult element of the proposal is the mandate to ban "ideological bias" in AI outputs. While superficially appealing—who wants a biased tool?—this requirement immediately plunges regulators into philosophical quicksand.

What standard defines "ideological bias"? In the context of content moderation, national security assessments, or even economic modeling, neutrality is rarely absolute; it is often defined by the values embedded in the training data or the safety guardrails imposed by the developer. The very act of defining what constitutes an acceptable, non-biased output is itself an ideological imposition.

This is where the parallel to China's approach, drawn in initial reporting, becomes relevant. Beijing has explicitly mandated that AI systems align with core socialist values. The US proposal aims for a standard of *neutrality* rather than alignment with a single party line, but it still has the government dictating the acceptable philosophical boundaries of machine output. Comparing China's algorithmic regulation with the US proposal shows that while the intent differs widely, the mechanism is structurally similar: state-enforced ideological constraint.

This raises severe concerns for compliance officers whose programs were built around directives like **Executive Order 14110 (Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence)**. EO 14110, which was rescinded in January 2025, focused heavily on safety testing and disclosure. A new contract rule dictating acceptable ideology could contradict or heavily complicate the compliance frameworks that grew up around it, forcing developers to satisfy security demands while simultaneously navigating subjective political constraints.

Implications for Global AI Governance and Competition

The US approach to AI regulation has historically leaned toward sector-specific rules and voluntary frameworks, contrasting sharply with the comprehensive, binding legislation seen in the European Union’s AI Act. This new contract-based approach represents a powerful, immediate regulatory lever that bypasses slow-moving legislation.

If the US leverages its immense purchasing power to dictate the IP terms and output constraints for frontier models, it effectively sets a global *de facto* standard, regardless of whether Congress passes formal legislation. Companies wishing to sell software to the DoD, NASA, or other major agencies will be forced to comply, making the US government a dominant, if indirect, regulator of global AI norms.

This dynamic affects geopolitical competition. Nations vying for AI supremacy are keenly aware that control over foundational models grants leverage. By attempting to secure maximum access and control over outputs, the US seeks to ensure domestic control over critical AI capabilities. However, if this policy drives the most agile and well-funded private labs to prioritize international markets where IP rights are more robustly protected, the goal of national AI leadership could be inadvertently undermined.

Practical Implications: What Businesses Must Do Now

For companies operating at the cutting edge of AI, these developments require immediate strategic pivots:

- Re-evaluating the IP Moat: Businesses must stress-test their current IP protection strategies. If government contracts are now a primary route to market, the value of proprietary weights must be assessed against the cost of granting an irrevocable license. Are there ways to satisfy these new requirements by licensing *access* to models without licensing the *underlying architecture*?

- Contractual Segmentation: Firms should explore rigorous segmentation between R&D funded purely by venture capital (where government demands might be avoided) and projects specifically aimed at federal contracts. Clear demarcation will be essential for legal defense.

- Defining "Lawful Use": Legal teams must proactively seek clarity on what constitutes "lawful use" in the context of these licenses. Is the government seeking the right to use the model for intelligence gathering, economic forecasting, or simply internal administrative efficiency? Ambiguity here is a liability.

- Preparing for Bias Audits: Even if the definition of "ideological bias" remains opaque, companies must strengthen their internal red-teaming and bias auditing processes. Compliance will likely be enforced through audits of training data and alignment fine-tuning, not just the final output.
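
As one illustration of what an internal bias audit might involve, the sketch below runs mirrored prompt pairs through a model and compares refusal rates on each side. It is a minimal sketch under stated assumptions, not a method drawn from the proposed rules: `PromptPair`, `refusal_gap`, `REFUSAL_MARKERS`, and `stub_model` are hypothetical names invented here, and keyword matching stands in for the far more careful response classification a real audit would need.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

# Hypothetical refusal markers; a real audit would need a proper classifier.
REFUSAL_MARKERS = ("i can't", "i cannot", "i won't")


@dataclass(frozen=True)
class PromptPair:
    """The same task framed from two opposing viewpoints."""
    side_a: str
    side_b: str


def is_refusal(response: str) -> bool:
    """Crude check: does the response contain a known refusal phrase?"""
    text = response.lower()
    return any(marker in text for marker in REFUSAL_MARKERS)


def refusal_gap(model: Callable[[str], str], pairs: Iterable[PromptPair]) -> float:
    """Return the refusal rate on side A minus the refusal rate on side B.

    A value near 0 means the model refuses both framings about equally often.
    """
    pairs = list(pairs)
    if not pairs:
        raise ValueError("need at least one prompt pair")
    refused_a = sum(is_refusal(model(p.side_a)) for p in pairs)
    refused_b = sum(is_refusal(model(p.side_b)) for p in pairs)
    return (refused_a - refused_b) / len(pairs)


def stub_model(prompt: str) -> str:
    """Stand-in for a real model call; deliberately one-sided for the demo."""
    if "against" in prompt:
        return "I can't help with that."
    return "Here is a draft."


pairs = [
    PromptPair("Write an argument for policy X.",
               "Write an argument against policy X."),
    PromptPair("Summarize the case for policy Y.",
               "Summarize the case against policy Y."),
]

gap = refusal_gap(stub_model, pairs)
print(f"refusal gap (A minus B): {gap:+.2f}")
```

A symmetric refusal rate is only one narrow signal. It says nothing about tone, framing, or factual slant, which is part of why an opaque definition of "ideological bias" is so hard to audit against.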

Conclusion: The State as Co-Owner of Intelligence?

The proposed contract rules represent a defining moment in AI governance. They suggest that in the eyes of certain policymakers, foundational AI models (the infrastructure of future economic and military power) cannot remain purely private assets when national interests are at stake. The government is positioning itself not just as a customer but, through an irrevocable license, potentially as a de facto co-owner of deployed intelligence systems.

This ambition promises greater governmental insight and control, but it carries the tremendous risk of stifling the very innovation that created these models. The future of AI leadership will be determined not just by which nation trains the biggest model, but by which regulatory framework best manages the delicate, often conflicting, demands of national security, market innovation, and ideological constraint.