AI Finds 100+ Firefox Bugs: The End of Manual Code Auditing?

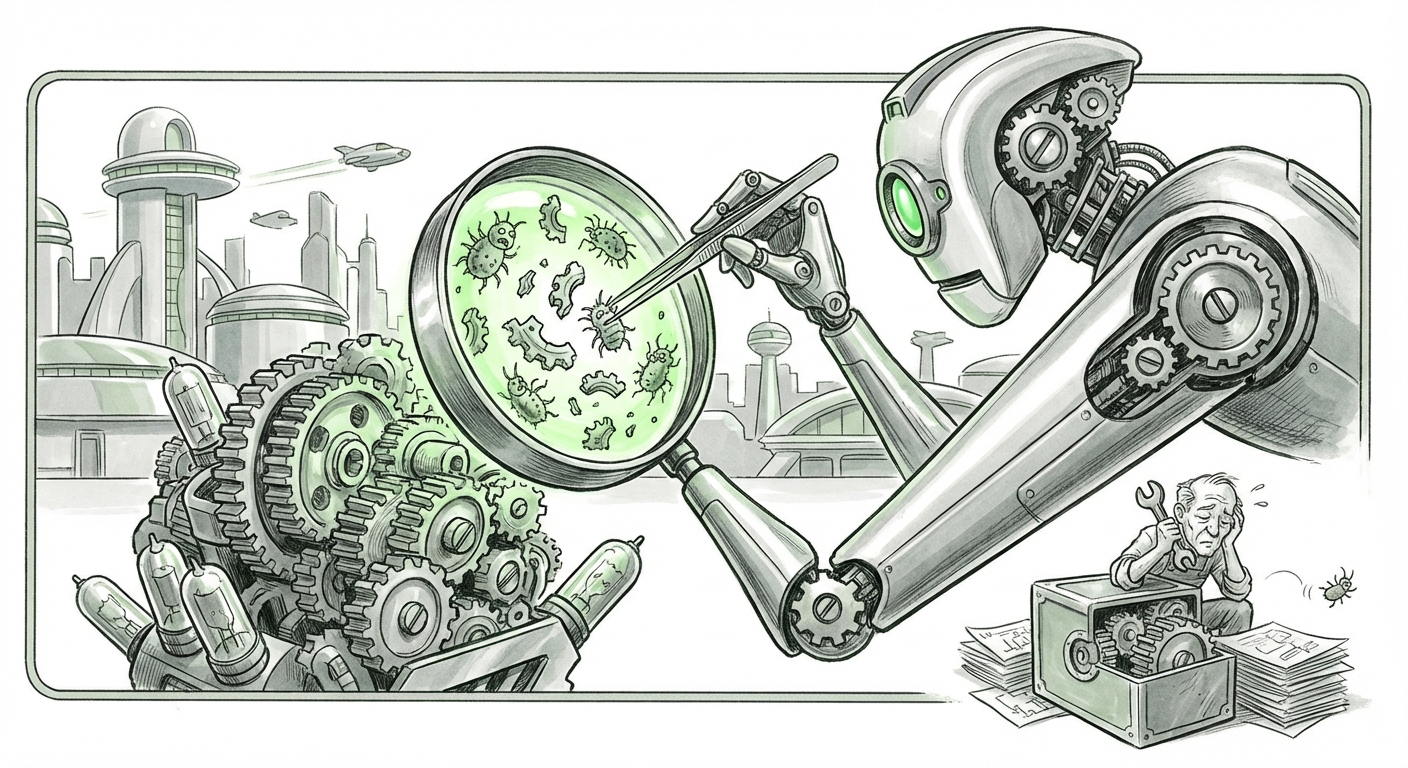

The quiet world of software security was recently shaken by a significant announcement: Anthropic’s Claude AI successfully uncovered over 100 security vulnerabilities within Firefox, including flaws that had evaded decades of specialized human and automated testing. This isn't just a feather in Claude's cap; it’s a profound indicator that Large Language Models (LLMs) are crossing a critical threshold—moving from being clever chatbots to becoming indispensable, high-precision tools in complex technical verification.

For technologists and business leaders alike, this event demands immediate attention. It signals a seismic shift in the Software Development Lifecycle (SDLC), where the speed, depth, and scope of quality assurance are about to be radically redefined by artificial intelligence. We must analyze this development by looking at the broader trend, the competitive reality, and the unavoidable future implications.

The Shift: From Static Analysis to Contextual Reasoning

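Traditional software security scanning relies on two main methods: Static Application Security Testing (SAST) and dynamic testing (like fuzzing). SAST tools look for known patterns of bad code, much as a spellchecker looks for misspelled words. They are fast but often generate many false positives and miss complex, context-dependent logic flaws. Fuzzers take the opposite approach, feeding a running program large volumes of mutated inputs and watching for crashes; they surface real bugs, but only along the paths those inputs happen to reach.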
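To make the limits of pattern matching concrete, here is a minimal sketch of a signature-style rule. The rule, the two C snippets, and the function name `read_length_from_packet` are hypothetical illustrations, not taken from any real SAST product or from the Firefox findings.

```python
import re

# Hypothetical signature-style rule: flag every call to strcpy().
UNSAFE_CALL = re.compile(r"\bstrcpy\s*\(")

def scan(source: str) -> list[int]:
    """Return 1-based line numbers whose text matches the rule."""
    return [n for n, line in enumerate(source.splitlines(), start=1)
            if UNSAFE_CALL.search(line)]

# The copy is guarded by a length check, so flagging it is a false positive.
GUARDED = """\
if (strlen(src) < sizeof(dst)) {
    strcpy(dst, src);
}
"""

# No flagged call appears here, yet `len` comes from untrusted input and is
# never compared with sizeof(dst): a context-dependent flaw the rule cannot see.
UNCHECKED = """\
size_t len = read_length_from_packet(pkt);
memcpy(dst, pkt->payload, len);
"""

print(scan(GUARDED))    # the guarded copy is still reported
print(scan(UNCHECKED))  # the unchecked copy is not reported
```

The rule reports the safe snippet and stays silent on the risky one, because it matches text rather than following where the length value comes from.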

Claude’s success points to a higher level of capability. Finding vulnerabilities that "decades of testing had missed" suggests that the AI wasn't just pattern-matching; it was reasoning about the gap between the code's intent and its actual execution paths. Imagine reading a complex novel and understanding not just what the sentences say, but what the author *meant* to achieve, and where the plot contains a logical hole that allows a character to cheat the system.

This fits the general trend of AI mastering coding tasks. When AI models score highly on complex programming benchmarks, demonstrating they can *write* sophisticated, functional code, it is plausible that they can analyze existing code with similar acuity, although writing code and auditing it are related rather than identical skills. They have internalized the rules of programming languages, frameworks, and, crucially, common error patterns, to an unprecedented degree. If an LLM can work through the kind of problems posed in senior engineering interviews, it stands to reason it can spot at least some security gaps in established software stacks.

Actionable Insight for Engineers: Expect security tools built on these LLMs to move beyond simple rule flagging and into true semantic understanding of proprietary codebases, drastically reducing the time spent chasing false alarms.

The Competitive Arms Race: Who Leads the Verification Front?

Anthropic's achievement does not occur in a vacuum. The current AI landscape is defined by fierce competition, particularly between major labs aiming to prove their models possess superior reasoning capabilities. The Firefox discovery immediately raises the question: are GPT-4, Google’s Gemini, or other models capable of the same feat?

Reports from internal testing and academic exploration frequently show competitive models making significant strides in code understanding and logic puzzles. Whether it’s Google DeepMind exploring sophisticated ways to use AI for internal code review or OpenAI pushing GPT-4's capabilities into complex debugging scenarios, the industry trend is clear: code verification is the next major battleground for foundational models.

What makes Claude’s success noteworthy might lie in its specific architecture or training focus. If Anthropic prioritized robust logical consistency over pure creative output, it could explain why their model excelled at the highly structured, rule-based environment of software security. For technology strategists, this means model selection is becoming critical. A general-purpose LLM might be adequate for drafting emails, but for high-stakes tasks like auditing millions of lines of C++ or Rust, the specific "flavor" of the AI matters immensely.

This competition pushes the entire industry forward. Every successful security audit performed by an LLM sets a new, higher standard for what is considered "secure."

Implications for the Software Development Lifecycle (SDLC)

The most profound long-term impact of this development lies in reorganizing how software is built, tested, and maintained. The concept of "Shift-Left Security"—moving security checks as far forward in the development process as possible—is about to become deeply literal.

1. Continuous, Hyper-Accelerated Auditing

Security audits are traditionally expensive, time-consuming, and episodic events, often scheduled months apart. An AI that can surface more than 100 vulnerabilities in a mature codebase changes the calculus. Future development pipelines will likely integrate these AI auditors continuously. Every commit and every pull request could be scanned for contextual vulnerabilities by an LLM specialized in security.

This accelerates development speed while simultaneously increasing baseline security. Developers won't wait for a quarterly penetration test; they will get instantaneous feedback, allowing them to fix flaws before they are merged into the main branch. This is the future of **DevSecOps**: security becomes an instantaneous, integrated part of coding, rather than a gate at the end.
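A per-commit audit of this kind could be wired up roughly as follows. This is a sketch under stated assumptions: `Auditor`, `toy_auditor`, and the sample diff are hypothetical stand-ins, and a real pipeline would replace `toy_auditor` with a call to whichever model or service the team has chosen. Only the `+++ b/<path>` header convention of git-style unified diffs is relied on.

```python
from typing import Callable

# Hypothetical auditor interface: takes a file path, returns a list of findings.
Auditor = Callable[[str], list[str]]

def changed_files(diff_text: str) -> list[str]:
    """Collect post-change paths from the '+++ b/<path>' headers of a git-style diff."""
    prefix = "+++ b/"
    return [line[len(prefix):] for line in diff_text.splitlines()
            if line.startswith(prefix)]

def audit_commit(diff_text: str, auditor: Auditor) -> dict[str, list[str]]:
    """Run the auditor on every changed file and keep only files with findings."""
    report = {}
    for path in changed_files(diff_text):
        findings = auditor(path)
        if findings:
            report[path] = findings
    return report

def toy_auditor(path: str) -> list[str]:
    """Stand-in for a model call: flags anything under an auth/ directory."""
    return ["review session handling"] if "auth/" in path else []

SAMPLE_DIFF = """\
diff --git a/src/auth/login.c b/src/auth/login.c
--- a/src/auth/login.c
+++ b/src/auth/login.c
@@ -1 +1 @@
-old line
+new line
diff --git a/README.md b/README.md
--- a/README.md
+++ b/README.md
@@ -1 +1 @@
-old text
+new text
"""

report = audit_commit(SAMPLE_DIFF, toy_auditor)
merge_allowed = not report  # block the merge while any finding is open
print(report)
print(merge_allowed)
```

Keeping the auditor behind a plain callable means the pipeline logic can be tested without a model in the loop, and the model can be swapped without touching the gate.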

2. The Trust Dilemma: Verifying the Fixes

If an AI finds a bug, the next step is trusting its proposed fix. This introduces a new challenge: can we trust the AI to fix its own findings?

If Claude spots a logic error, and then Claude generates the patch, that patch must also be scrutinized. This requires a "second layer" of verification, potentially involving a different specialized AI model or human oversight. The sheer volume of potential fixes generated by these tools means human reviewers must become auditors of AI output rather than discoverers of primary flaws.
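One way to express that "second layer" is a gate that ignores any approval coming from the patch's own author. The sketch below is illustrative only: the `Patch` type, the reviewer names, and the two-approval threshold are assumptions, and each reviewer function stands in for a separate model or a human sign-off.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Patch:
    author: str  # who produced the patch, e.g. a model name (hypothetical)
    diff: str

# A reviewer is a (name, approve-function) pair; both are placeholders here.
Reviewer = tuple[str, Callable[[Patch], bool]]

def independently_approved(patch: Patch, reviewers: list[Reviewer],
                           required: int = 2) -> bool:
    """Count approvals only from reviewers other than the patch author."""
    approvals = sum(1 for name, approve in reviewers
                    if name != patch.author and approve(patch))
    return approvals >= required

patch = Patch(author="model-a", diff="...")
reviewers = [
    ("model-a", lambda p: True),   # self-approval, ignored by the gate
    ("model-b", lambda p: True),   # second model, assumed independent
    ("human",   lambda p: False),  # human reviewer rejects
]
print(independently_approved(patch, reviewers))  # one independent approval only
```

With the default threshold, the patch above is held back: the author's own approval is discarded and only one independent reviewer agreed.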

3. Democratization of Vulnerability Discovery

Currently, finding deep vulnerabilities in massive codebases like Firefox requires elite, specialized talent—the exact engineers who are scarce and expensive. When AI democratizes this skill, the barrier to entry for finding significant flaws drops dramatically. This is a double-edged sword. On the one hand, thousands of smaller companies and open-source projects can now access world-class security auditing.

On the other hand, malicious actors will equally leverage these tools. We are entering an era where AI-driven offense and AI-driven defense are locked in an escalating feedback loop, requiring security teams to adopt offensive AI capabilities just to keep pace.

Actionable Insights for Today’s Leaders

The discovery in Firefox is not science fiction; it is the new baseline for technological capability. Leaders must act now to integrate these tools strategically.

For CTOs and Engineering VPs: Begin piloting LLM-based code analysis tools immediately. Focus initial deployments on areas with high complexity or high security risk (e.g., authentication modules, payment processors). Measure not just the number of bugs found, but the accuracy (low false-positive rate) compared to legacy SAST tools.

For Cybersecurity Directors: Re-evaluate your audit schedules. Shift resources away from hunting obvious, signature-based errors and toward complex threat modeling that requires deep system understanding—the very domain where LLMs are proving their worth. Furthermore, mandate training for security staff on how to audit AI-generated patches effectively.

For Product Managers: Understand that "time-to-market" may no longer be bottlenecked by traditional QA cycles to the same degree. If AI can verify functionality and security concurrently, release velocity rises. Factor this into your roadmap planning, but budget for the governance frameworks needed to manage AI-derived code.
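The measurement suggested for engineering leaders above, comparing accuracy rather than raw bug counts, can be tracked with very little code once findings have been triaged by hand. The sketch below assumes each tool's findings were labeled as confirmed or false alarm; the tool names and counts are made-up placeholders, not results from any real pilot.

```python
def precision(true_positives: int, false_positives: int) -> float:
    """Share of reported findings that were confirmed as real bugs."""
    reported = true_positives + false_positives
    return true_positives / reported if reported else 0.0

# Illustrative, made-up triage counts for one pilot module; not real data.
pilot = {
    "legacy_sast": {"true_positives": 20, "false_positives": 60},
    "llm_auditor": {"true_positives": 18, "false_positives": 2},
}

for tool, counts in pilot.items():
    p = precision(**counts)
    print(f"{tool}: precision={p:.2f}, false-alarm share={1 - p:.2f}")
```

Precision is used here because a pilot rarely knows how many clean code paths exist, which a strict false-positive rate would require; the false-alarm share is simply one minus precision.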

Conclusion: A Partnership, Not a Replacement

The narrative should not be "AI replaces security researchers." Instead, it is about a powerful, necessary partnership. Claude didn't just find bugs; it likely surfaced vulnerabilities based on nuanced understanding that human eyes, fatigued by sifting through millions of lines of code, might have missed over years of deployment.

This marks the moment AI transitioned from being a useful assistant to a genuine, high-stakes technical peer in the realm of software verification. The gap between what is technologically possible in software robustness and what is achievable with human labor has just widened dramatically. For those ready to harness this speed and depth, the future of secure, rapid software delivery is arriving faster than anyone anticipated.

Contextual References Driving This Analysis

To understand the scope of this shift, we rely on trends showing AI's increasing proficiency in code analysis and the subsequent disruption to established workflows:

- Examining the general capabilities required for such tasks, research into LLMs solving complex programming challenges (similar to benchmarks like HumanEval) confirms the underlying reasoning ability necessary for deep bug hunting.

- The competitive landscape demands comparison. Insights into how other leading models, such as those from OpenAI or Google DeepMind, are being applied to code verification highlight that this capability is an emergent property across cutting-edge AI research, not an isolated event.

- The practical impact on industry workflows is key. Industry analysis focusing on the integration of generative AI into the Software Development Lifecycle (SDLC) demonstrates the inevitable push toward continuous, automated security integrated directly into DevOps pipelines.