The $5,000 AI Problem: Decoding the True Cost of Cutting-Edge Coding Tools and Why Subsidies Rule the Market

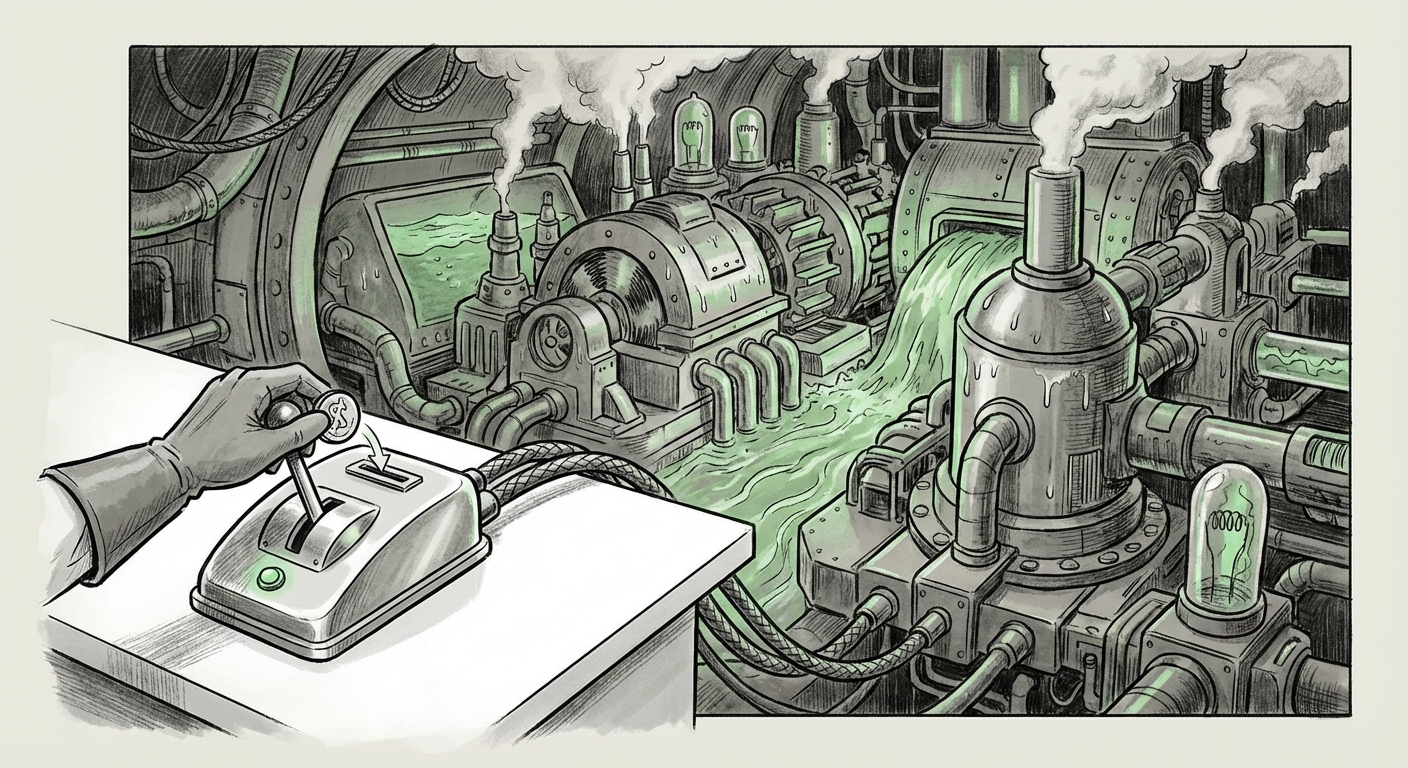

The artificial intelligence landscape is moving at breakneck speed, characterized by leaps in capability and astonishing feats of automation. Yet, beneath the surface of these technological marvels lies a harsh economic reality: **running the most powerful AI models is incredibly expensive.** A recent internal analysis involving Anthropic's Claude Code subscription—reportedly costing the provider up to $5,000 in monthly compute per user while charging the subscriber only $200—has pulled back the curtain on this hidden financial structure. This staggering 25:1 discrepancy is not an anomaly; it is a strategic gambit that defines the current battle for AI dominance.

The Economic Shock: Subsidies as a Market Weapon

For the non-technical observer, the concept of a $5,000 monthly bill for a $200 service sounds like a catastrophic business model. However, in the high-stakes world of generative AI infrastructure, this is often interpreted as the cost of securing an early advantage. This dynamic is fundamentally different from traditional SaaS (Software as a Service) pricing, where the marginal cost of adding one more user is often near zero.
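The arithmetic behind the headline figures can be sketched in a few lines. The snippet uses only the two reported numbers (up to $5,000 in compute against a $200 price); the function name `subsidy_summary` is illustrative, and the $5,000 figure is treated as a worst-case upper bound rather than an average.

```python
def subsidy_summary(compute_cost: float, price: float) -> dict:
    """Return the cost-to-price ratio and the monthly shortfall per user."""
    if price <= 0 or compute_cost <= 0:
        raise ValueError("compute_cost and price must be positive")
    shortfall = compute_cost - price
    return {
        "ratio": compute_cost / price,                # cost per dollar of revenue
        "monthly_shortfall": shortfall,               # absorbed by the provider
        "share_subsidized": shortfall / compute_cost, # fraction of cost not paid by the user
    }


# Reported worst case: up to $5,000 of compute on a $200 plan.
summary = subsidy_summary(compute_cost=5_000, price=200)
print(f"ratio: {summary['ratio']:.0f}:1")
print(f"monthly shortfall per user: ${summary['monthly_shortfall']:,.0f}")
print(f"share of cost subsidized: {summary['share_subsidized']:.0%}")
```

In a near-zero-marginal-cost SaaS product the same calculation would show a ratio well below 1, which is why the comparison with traditional subscription pricing breaks down here.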

In AI inference (the process of running the model to generate an answer), the cost is directly tied to computational demand. Every complex coding query, every long context window analyzed by Claude, burns GPU cycles on expensive, highly specialized hardware. If the reported figures hold, Anthropic is absorbing this deficit on Claude Code itself, while integration partners such as Cursor, which build on Claude, face the same underlying inference costs. That points toward a clear strategy:

- Adoption Over Immediate Profit: The primary goal is to make Claude the default, indispensable tool for developers. Once developers integrate a superior AI assistant into their daily workflow, switching costs become prohibitively high, regardless of future price adjustments.

- Data Feedback Loop: Early, heavy usage provides invaluable data on how the most sophisticated users stress-test the model, enabling rapid iteration and fine-tuning that competitors might not access as quickly.

To truly grasp the magnitude of this financial pressure, we must look at the underlying infrastructure economics. A search for **"Generative AI infrastructure cost breakdown GPT-4 vs Claude"** is likely to turn up analyses making the same point: top-tier models require immense parallel processing, often on cutting-edge NVIDIA GPUs, and so carry substantial operational expenditure (OpEx). Because the $5,000 figure is a reported upper bound, it most plausibly describes a user running the model almost constantly for deep problem-solving, which places significant strain on the provider's cluster capacity.

Unit Economics: Why Inference Costs Are So High

When discussing AI costs, it’s helpful to break them down simply. Think of it like a complex, high-speed printer:

- Training Cost (The Printer Purchase): This is the enormous, largely up-front cost of creating the model, which is amortized over years.

- Inference Cost (The Ink and Paper): This is the cost every single time the user asks a question. This is the running expense.

For specialized coding models, the "ink and paper" are far more expensive than for general chatbots. Code generation often requires:

- Larger Context Windows: The model must hold thousands of lines of existing code, documentation, and error messages in its short-term memory to provide an accurate suggestion.

- Complex Reasoning: Generating functional, secure code requires several layers of reasoning and verification, so the model generates many more tokens, and therefore consumes more compute, per answer.
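A rough way to see why these two factors dominate is to price a single request by the tokens it reads and the tokens it generates. The sketch below is a simplified model, not any provider's actual billing: the `TokenPrices` class, the `request_cost` function, and the per-million-token rates are all hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrices:
    """Hypothetical provider-side cost per million tokens, in USD."""
    input_per_million: float
    output_per_million: float


def request_cost(input_tokens: int, output_tokens: int, prices: TokenPrices) -> float:
    """Cost of one request: tokens read plus tokens generated, each at its own rate."""
    return (
        input_tokens * prices.input_per_million
        + output_tokens * prices.output_per_million
    ) / 1_000_000


# Placeholder rates, chosen only to make the arithmetic easy to follow.
prices = TokenPrices(input_per_million=2.0, output_per_million=10.0)

# A short chat question versus a coding request that loads a large codebase
# into context and generates a long, reasoned answer.
chat_cost = request_cost(input_tokens=500, output_tokens=300, prices=prices)
coding_cost = request_cost(input_tokens=100_000, output_tokens=4_000, prices=prices)

print(f"chat request:   ${chat_cost:.4f}")
print(f"coding request: ${coding_cost:.4f}")
print(f"coding / chat:  {coding_cost / chat_cost:.0f}x")
```

Under these placeholder rates the coding request costs roughly 60 times the chat request, and an agentic coding session repeats requests like this many times an hour.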

If we examine comparable market data through searches like **"LLM serving cost vs subscription pricing strategies,"** we see that existing products like GitHub Copilot (often powered by OpenAI models) rely heavily on volume. They may run at lower, but still subsidized, margins initially. The reported gap between Anthropic's compute cost and its subscription price appears dramatically wider, suggesting either a superior coding model that demands more resources or a commitment to securing the developer segment at any immediate cost.

The Future of Pricing: Inevitable Adjustments and the AI Tax

This era of deep subsidy cannot last forever. Investors demand a pathway to profitability, and the infrastructure buildout required to support millions of high-usage users is staggering. This leads to crucial implications for businesses and developers:

For Developers and Small Teams: Enjoy It While It Lasts

Developers benefiting from the $200 rate are essentially receiving a massive, temporary productivity boost at a fraction of its true cost. However, they must prepare for price volatility. If a tool becomes mission-critical, the team will have to absorb whatever comes next, whether that is a price hike or a shift to less predictable usage-based billing.
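To make the usage-based scenario concrete, the sketch below compares the $200 flat price with a metered bill at different usage levels. The per-request price of $0.25 and the function names are hypothetical; the point is only that a metered bill scales with usage while a flat plan does not.

```python
FLAT_PRICE = 200.0        # monthly subscription price cited in the article
COST_PER_REQUEST = 0.25   # hypothetical metered price per request, in USD


def usage_based_bill(requests_per_month: int, cost_per_request: float) -> float:
    """Monthly bill if every request is metered at a fixed price."""
    return requests_per_month * cost_per_request


def break_even_requests(flat_price: float, cost_per_request: float) -> float:
    """Usage level at which metered billing costs the same as the flat plan."""
    return flat_price / cost_per_request


for requests in (400, 2_000, 20_000):
    bill = usage_based_bill(requests, COST_PER_REQUEST)
    print(f"{requests:>6} requests/month -> ${bill:,.2f} metered vs ${FLAT_PRICE:,.2f} flat")

print(f"break-even: {break_even_requests(FLAT_PRICE, COST_PER_REQUEST):.0f} requests/month")
```

A light user would pay less under metering, but the heaviest users, the ones the flat plan currently subsidizes most, would see the largest increase.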

The search query **"Impact of AI developer tools subsidies on long-term pricing"** often surfaces discussions about this "AI Tax." Once incumbent tools are deeply embedded, the economic leverage shifts dramatically toward the provider. Businesses should look at current subsidized rates as introductory offers, not long-term benchmarks.

For Enterprise Buyers: De-Risking Dependencies

Large enterprises should use this period to rigorously test and benchmark these subsidized tools. However, their procurement strategy must account for the inevitable price corrections. Relying too heavily on one subsidized provider before understanding the true, non-subsidized cost of service presents a major vendor lock-in risk.

Furthermore, the integration layer itself—as seen with Cursor in this scenario—becomes a point of strategic focus. Analyzing **"Cursor AI client pricing model analysis"** helps understand how the downstream integration partner is managing the risk. Are they locking in users with proprietary features built atop Claude, or are they maintaining a flexible architecture that allows switching to future, potentially cheaper, models?
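One way to picture a "flexible architecture" is a thin interface between the application and whichever model sits behind it. The sketch below is purely illustrative: `CodeAssistant`, the two stub providers, and `build_assistant` are hypothetical names, and the stubs return canned strings rather than calling any real vendor API.

```python
from typing import Callable, Dict, Protocol


class CodeAssistant(Protocol):
    """Minimal interface the rest of the toolchain depends on."""

    def complete(self, prompt: str) -> str:
        ...


class StubProviderA:
    """Stand-in for one vendor's client; a real one would call that vendor's API."""

    def complete(self, prompt: str) -> str:
        return f"[provider-a] {prompt}"


class StubProviderB:
    """Stand-in for an alternative vendor or a self-hosted model."""

    def complete(self, prompt: str) -> str:
        return f"[provider-b] {prompt}"


# Registry keyed by a configuration value, so switching is a config change.
PROVIDERS: Dict[str, Callable[[], CodeAssistant]] = {
    "provider-a": StubProviderA,
    "provider-b": StubProviderB,
}


def build_assistant(name: str) -> CodeAssistant:
    """Instantiate the configured provider, failing loudly on unknown names."""
    try:
        return PROVIDERS[name]()
    except KeyError:
        raise ValueError(f"unknown provider: {name!r}") from None


assistant = build_assistant("provider-a")
print(assistant.complete("Refactor this function"))
```

Because callers depend only on `complete`, swapping providers after a price change touches one configuration value instead of every call site. Proprietary features built directly on one model's behavior are what erode this flexibility.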

Actionable Insights for Navigating the Subsidy Era

As AI tools evolve from novelties to essential utilities, navigating the uneven economic playing field requires foresight. Here are three actionable insights for stakeholders:

- Benchmark True Performance, Not Just Price: Do not select a coding tool based solely on the introductory subscription. Test its performance (accuracy, security, speed) against your most complex, real-world workloads. If Claude Code saves a senior engineer 10 hours of work per week, even a $1,000 monthly fee might be justifiable if it prevents future hiring costs.

- Plan for Cost Escalation: Budget for AI OpEx to become a significant line item within the next 18-24 months. When contracts are signed or toolchains are established, negotiate clear caps or transparent frameworks for how inference costs will be calculated post-subsidy.

- Diversify Early: Avoid placing 100% of your mission-critical AI dependency on a single model or provider whose business model is currently running at a significant loss. Maintain active evaluations of alternative models (e.g., models from OpenAI, Google, or open-source leaders) to ensure you have options when market pricing inevitably shifts.
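The first insight's example (10 hours saved per week against a $1,000 monthly fee) reduces to a break-even calculation. In the sketch below, the hours saved and the fee come from the text, while the $100 hourly cost of an engineer and the function names are assumptions for illustration only.

```python
WEEKS_PER_MONTH = 52 / 12  # average number of weeks in a month


def monthly_value_of_time_saved(hours_saved_per_week: float, hourly_cost: float) -> float:
    """Dollar value of the engineering time a tool frees up each month."""
    return hours_saved_per_week * WEEKS_PER_MONTH * hourly_cost


def break_even_hourly_cost(monthly_fee: float, hours_saved_per_week: float) -> float:
    """Hourly engineer cost above which the tool pays for itself."""
    return monthly_fee / (hours_saved_per_week * WEEKS_PER_MONTH)


HOURS_SAVED_PER_WEEK = 10   # from the example in the text
MONTHLY_FEE = 1_000.0       # from the example in the text
HOURLY_COST = 100.0         # hypothetical fully loaded cost of a senior engineer

value = monthly_value_of_time_saved(HOURS_SAVED_PER_WEEK, HOURLY_COST)
threshold = break_even_hourly_cost(MONTHLY_FEE, HOURS_SAVED_PER_WEEK)

print(f"value of time saved: ${value:,.2f}/month")
print(f"net benefit at ${MONTHLY_FEE:,.0f}/month: ${value - MONTHLY_FEE:,.2f}")
print(f"break-even engineer cost: ${threshold:,.2f}/hour")
```

Rerunning the same calculation with a post-subsidy fee and a measured, rather than assumed, number of hours saved gives a defensible ceiling to carry into contract negotiations.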

Conclusion: Building on Sand or Bedrock?

The $5,000 compute cost versus the $200 price tag is a powerful illustration of the current AI arms race. Providers are investing billions in infrastructure and accepting immense losses today to secure the dominant position tomorrow. This strategy is incredibly effective for rapid adoption, particularly in developer-focused tools where efficiency gains translate directly to higher output.

For those building the future—the software engineers, product managers, and investors—this revelation serves as a crucial warning. The revolutionary performance we enjoy today is being underwritten by today’s venture capital and aggressive operational strategies. The next phase of AI commercialization will be defined by how successfully these providers transition users from subsidized adoption to sustainable, profitable relationships. The challenge for all of us is to build our future workflows on bedrock, not on the temporary, subsidized sand of introductory offers.