The Rise of Autonomous Agents: Why Anthropic Turning Claude Code into a Background Worker Changes Software Development Forever

AI tools have long operated on a simple model: you ask a question, you get an answer. Generative AI assistants, from ChatGPT to early versions of coding tools, have been powerful but fundamentally reactive. They wait for your explicit command.

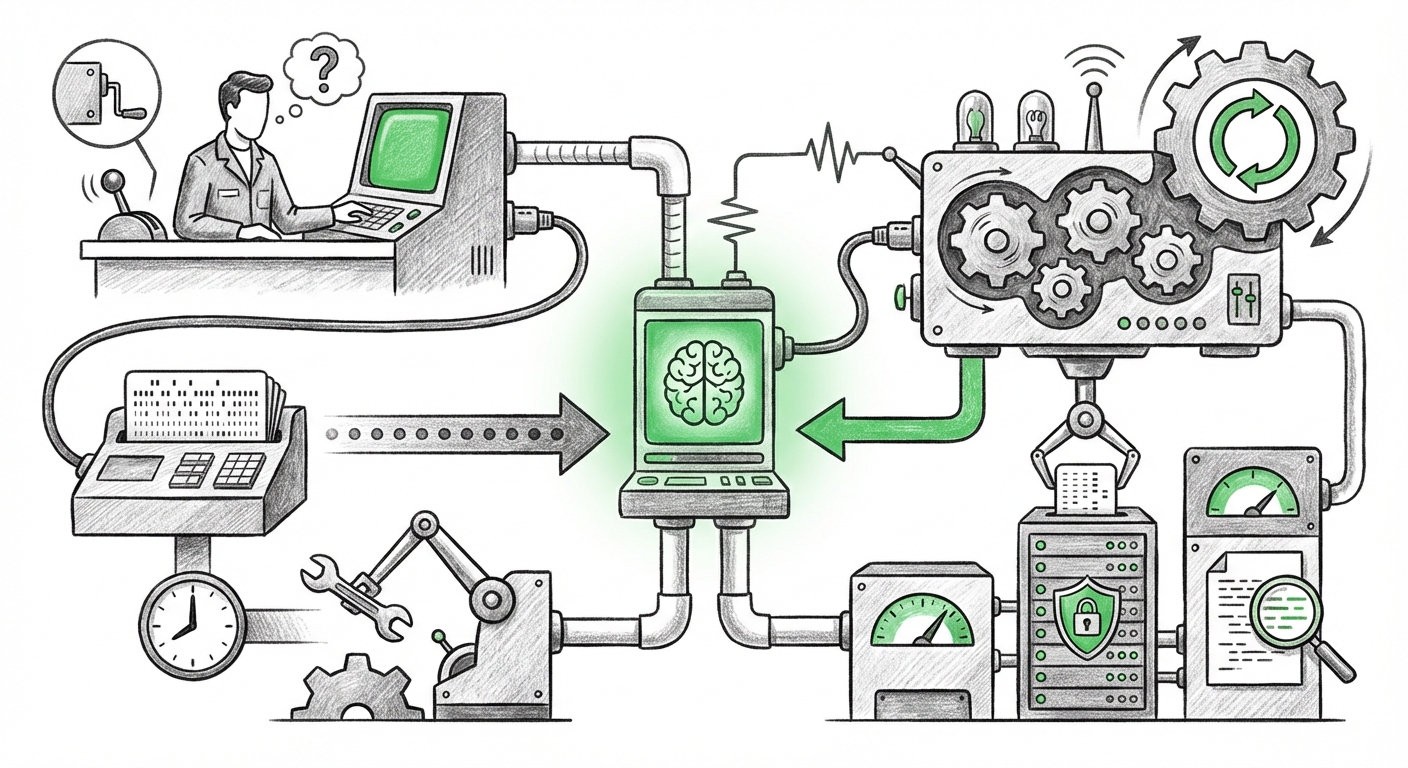

Anthropic’s recent announcement that Claude Code Desktop lets users schedule recurring, automatic tasks breaks with this reactive model. By turning Claude Code into a background worker that can run tasks such as routine error log checks or generating pull requests for identified bugs, the feature marks a shift from AI assistant to AI agent.

This development signals that AI is no longer just a co-pilot sitting next to the programmer; it is becoming an autonomous, persistent member of the engineering team, working even when the human team is asleep. To understand the true impact, we must look beyond the feature itself and examine the underlying trends in agent architecture, the competitive landscape, and the critical security considerations inherent in local autonomy.

From Prompt to Persistence: The Architecture of AI Agents

The ability to schedule tasks moves AI from being a simple tool to an integrated system component. This capability is at the heart of what researchers call Agent Architecture. A true AI agent must possess three key traits that scheduling unlocks:

- Persistence: The ability to remain active and monitor environments over long periods.

- Autonomy: The capacity to decide *when* to act based on pre-set conditions (like a recurring schedule).

- Tool Use: The capability to interact with the environment (e.g., checking logs, creating code changes).

The move to scheduled tasks fits a growing industry view that the next wave of AI value will come from these autonomous processes. When an AI system can patrol development artifacts on a schedule (running diagnostics every three hours, for example), it shortens feedback loops that previously required manual triggers. This aligns with the broader trend toward autonomous software engineering, where LLMs are tasked with managing entire development lifecycles, not just writing snippets.
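
To make the three traits concrete, here is a minimal sketch of a recurring worker, assuming a simple in-process scheduler. The names `ScheduledTask`, `run_pending`, and `check_logs` are hypothetical illustrations and are not part of Claude Code or any Anthropic API. The stored `last_run` stands in for persistence, the interval check for autonomy, and the `action` callable for tool use.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Callable, List, Optional


@dataclass
class ScheduledTask:
    """A hypothetical recurring task; not part of any Claude Code API."""
    name: str
    interval: timedelta
    action: Callable[[], str]            # the "tool use" step
    last_run: Optional[datetime] = None  # state kept between runs

    def is_due(self, now: datetime) -> bool:
        # A task that has never run is due immediately.
        return self.last_run is None or now - self.last_run >= self.interval


def run_pending(tasks: List[ScheduledTask], now: datetime) -> List[str]:
    """Run every due task once and return the results of those that ran."""
    results = []
    for task in tasks:
        if task.is_due(now):
            results.append(task.action())
            task.last_run = now
    return results


def check_logs() -> str:
    # Placeholder for a real diagnostic; here it only reports that it ran.
    return "diagnostics complete"


task = ScheduledTask("log-check", timedelta(hours=3), check_logs)
start = datetime(2025, 1, 1, 0, 0)
first = run_pending([task], start)                        # runs: never run before
second = run_pending([task], start + timedelta(hours=1))  # skipped: only 1h elapsed
third = run_pending([task], start + timedelta(hours=3))   # runs: 3h elapsed
```

Passing `now` in explicitly, instead of reading the clock inside the function, keeps the scheduling decision easy to test without waiting for real time to pass.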

For executives and strategists, this means AI investment must now shift from focusing solely on better LLM response quality to building robust execution frameworks around those models.

The DevOps Revolution: AI as the Automated SRE

The specific tasks Anthropic highlights—checking error logs and creating fix PRs—are cornerstones of modern DevOps and Site Reliability Engineering (SRE). This is where the practical implications hit hardest, promising massive gains in efficiency and code quality.

Traditionally, triaging errors is time-consuming. A developer might only look at error logs during a scheduled check-in or when an alert fires. If Claude Code can run this analysis automatically and, crucially, suggest the **first draft of the fix** as a ready-to-review pull request, the benefits are transformative:

- Reduced MTTR (Mean Time To Resolution): Bugs that might linger for hours waiting for developer attention can be flagged almost immediately, with a draft patch already waiting for review.

- Proactive Maintenance: The system acts as a constant, tireless reviewer, catching subtle regressions that human eyes might miss during routine sprints.

- Democratization of AIOps: Complex log analysis, usually reserved for specialized SRE teams, becomes accessible via automated scheduling across all development projects.
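
As a rough illustration of the triage step described above, the sketch below groups error lines by a normalized signature and ranks them by frequency. The log format (`<timestamp> <LEVEL> <message>`) and the helper names `error_signature` and `triage` are assumptions made for this example; real logs and real agents will differ.

```python
import re
from collections import Counter
from typing import List, Tuple

# Assumed log format: "<timestamp> <LEVEL> <message>"; real logs will differ.
LINE_RE = re.compile(r"^\S+ (?P<level>[A-Z]+) (?P<message>.*)$")


def error_signature(message: str) -> str:
    """Collapse variable parts (numbers) so similar errors group together."""
    return re.sub(r"\d+", "<n>", message)


def triage(lines: List[str]) -> List[Tuple[str, int]]:
    """Return error signatures ordered from most to least frequent."""
    counts: Counter = Counter()
    for line in lines:
        match = LINE_RE.match(line)
        if match and match.group("level") == "ERROR":
            counts[error_signature(match.group("message"))] += 1
    return counts.most_common()


sample = [
    "2025-01-01T00:00:01Z ERROR timeout after 30 ms on shard 4",
    "2025-01-01T00:00:02Z INFO request served",
    "2025-01-01T00:00:03Z ERROR timeout after 45 ms on shard 7",
    "2025-01-01T00:00:04Z ERROR missing config key",
]
ranked = triage(sample)
```

In this sample the two timeout lines collapse into one signature, so a reviewer (human or agent) sees the most frequent failure first instead of scanning every line.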

This trend shows AI is moving deep into the software supply chain. We are seeing the initial stages of AI managing the "plumbing" of software—monitoring, patching, and maintaining—freeing up human engineers to focus on complex, creative problem-solving.

The Competitive Landscape: Autonomy as the New Frontier

Anthropic is making a bold play in the coding agent space. While competitors like GitHub Copilot have dominated the immediate, in-line coding experience, the introduction of persistent, scheduled autonomy sets a new benchmark.

To the extent that market leaders like Microsoft/GitHub have focused Copilot on contextual suggestions within the IDE rather than on persistent background scheduling, Anthropic is defining the next competitive battleground: Who can build the most reliable, persistent digital teammate? The emergence of specialized agents like Devin, designed for end-to-end task completion, further validates the trajectory toward full autonomy. The race is now on to see which platform can deliver the most seamless, low-friction automation of routine engineering tasks.

For engineering leadership, the decision pivots: Do you optimize for the best *in-the-moment* coding helper (the current strength of many assistants), or do you invest in the system that works continuously in the background, managing tedious upkeep?

The Critical Pivot: Local Execution and Security Governance

Perhaps the most nuanced aspect of Anthropic’s move is the emphasis on local execution of these scheduled tasks. Running an autonomous agent that can read logs, decide on a course of action, and generate code requires deep access to the local development environment, the network, and potentially sensitive code repositories.

This introduces significant security trade-offs that IT governance teams must immediately address. While local execution often promises enhanced data privacy (code doesn't leave the machine), it simultaneously increases the attack surface and privilege level granted to the AI.

The Local Agent Security Dilemma

If an AI agent is scheduled to run every few hours, it must have the permissions to interact with the system constantly. This means it needs access credentials that traditionally required human oversight. If that locally running agent is compromised, or if the scheduling logic contains an unexpected vulnerability, the resulting autonomous activity could be malicious or deeply disruptive.

Future adoption hinges on developing robust security layers around these local agents. We need industry standards for:

- Least Privilege for Agents: Defining precisely which files, network endpoints, and command-line tools the scheduled worker is allowed to touch.

- Audit Trails: Ensuring every autonomous action is logged immutably, so human supervisors can trace the *reason* the AI generated a specific PR or modified a file.

- Mandatory Human Gateways: For high-risk actions (like pushing to the main branch), even an autonomous system must pause and demand explicit human confirmation, regardless of the schedule.
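
The three controls above can be combined in a single authorization gate. The following is only a sketch under stated assumptions: `AgentPolicy`, its fields, and the command names are hypothetical, and the in-memory list stands in for what would need to be a tamper-resistant, append-only audit store in practice.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, List


@dataclass
class AgentPolicy:
    """Hypothetical policy object; not an API of any real agent product."""
    allowed_commands: FrozenSet[str]       # least privilege: explicit allowlist
    needs_human_approval: FrozenSet[str]   # human gateway for high-risk actions
    audit_log: List[str] = field(default_factory=list)  # stand-in for immutable storage

    def authorize(self, command: str, reason: str,
                  approved_by_human: bool = False) -> bool:
        if command not in self.allowed_commands:
            decision = "denied"
        elif command in self.needs_human_approval and not approved_by_human:
            decision = "pending-approval"
        else:
            decision = "allowed"
        # Record every decision, including refusals, together with the stated reason.
        self.audit_log.append(f"{command} | {decision} | {reason}")
        return decision == "allowed"


policy = AgentPolicy(
    allowed_commands=frozenset({"read_logs", "open_pr", "push_main"}),
    needs_human_approval=frozenset({"push_main"}),
)
can_read = policy.authorize("read_logs", "scheduled log check")
can_push = policy.authorize("push_main", "hotfix for failing build")
can_push_approved = policy.authorize("push_main", "hotfix for failing build",
                                     approved_by_human=True)
can_delete = policy.authorize("delete_repo", "cleanup")
```

Logging denied and pending requests alongside allowed ones is a deliberate choice here: a supervisor reviewing the trail can see what the agent attempted, not just what it was permitted to do.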

This shift requires cybersecurity strategies to expand from protecting against external threats to also rigorously governing the behavior of trusted internal tools.

Actionable Insights for the Future

The background worker capability is the starting gun for a new era of integrated AI. Here is what technology leaders must consider now to prepare:

For Engineering Managers and CTOs:

- Redefine the Developer Role: Begin training engineers not just on how to write code, but how to supervise and correct AI-generated maintenance tasks. The future developer spends less time on routine bug fixing and more time on architectural design and complex system integration.

- Pilot AIOps Integrations: Identify the most repetitive, low-risk tasks (like checking dependency updates or applying standard linting fixes) and pilot scheduled agents there first. Measure the time savings against the administrative overhead of monitoring the agent.

For Cybersecurity and IT Leaders:

- Establish Agent Governance Policies: Before large-scale rollout, define strict sandboxing protocols for any persistent, locally running LLM. Treat the scheduled agent with the same scrutiny as a new third-party integration service.

- Focus on Output Validation: Since the agent will be creating code, invest in stronger automated testing suites (unit, integration, and security scans) that run *after* the AI generates its PRs, serving as the final safety net before deployment.

Conclusion: The Dawn of Unsupervised Software Maintenance

Anthropic’s move to embed scheduled, autonomous tasks within Claude Code is more than a product feature; it is a concrete manifestation of the industry’s pursuit of general-purpose AI agents capable of unsupervised work. We are crossing the threshold where AI transitions from being a helpful tool to an active, persistent force within the operational machinery of software development.

The implications are vast: unparalleled efficiency in maintenance, a fundamental shift in developer focus, and an immediate call to action for cybersecurity teams to govern these powerful new entities. The future development cycle will not be measured in sprint increments, but in continuous, AI-driven feedback loops that operate 24 hours a day, ensuring our complex software systems remain resilient, even when we step away from the keyboard.