The Real-Time Video Revolution: How Bytedance's Helios Unlocks Next-Gen AI Content Creation

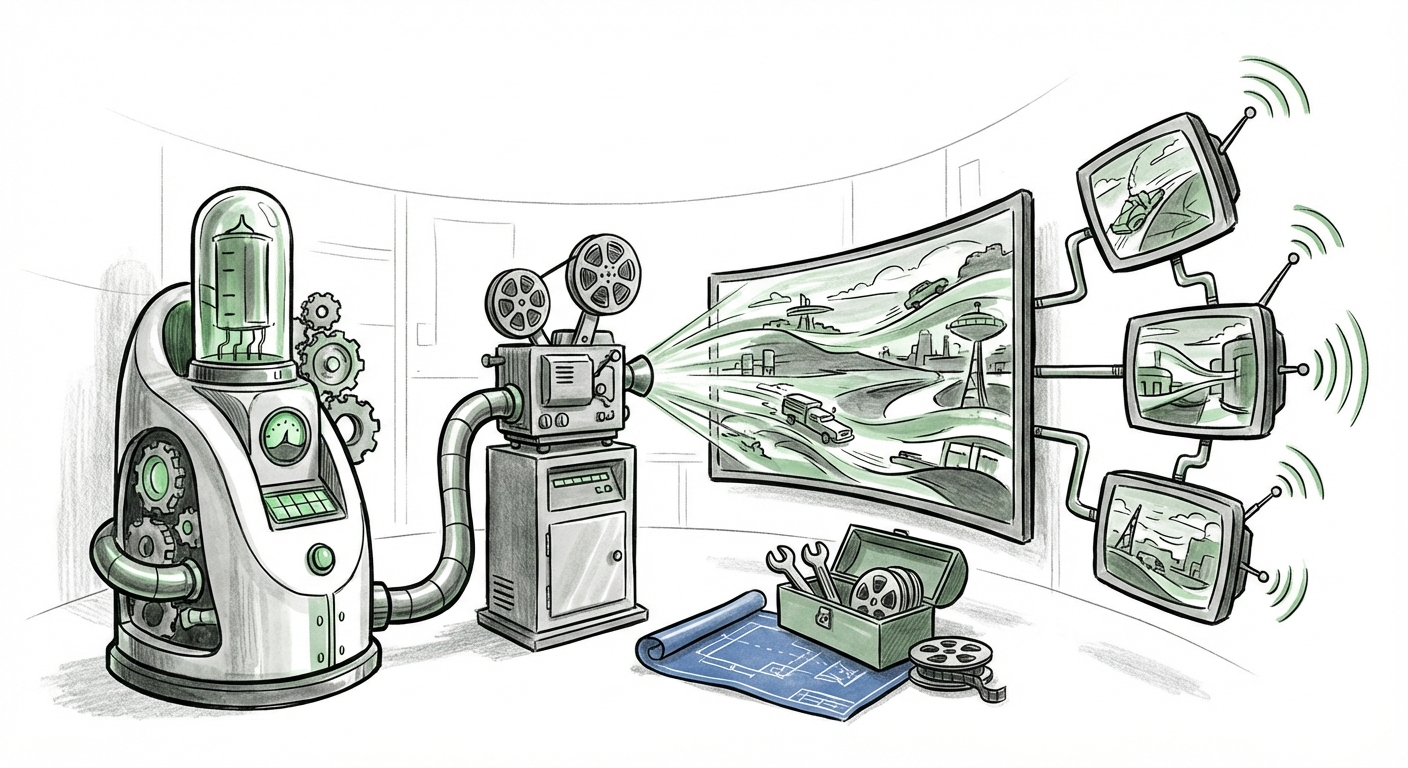

The world of generative AI has been captivated by the promise of high-fidelity video creation: models capable of turning simple text prompts into stunning, coherent motion pictures. However, this promise has always been constrained by a harsh reality: immense computational cost. Generating even a few seconds of high-quality video often requires vast, specialized server farms, making creative iteration slow and expensive.

That reality just got a serious challenge. Bytedance, the powerhouse behind TikTok, has released details on its **Helios model**, marking a pivotal moment. Researchers claim Helios is the first 14-billion-parameter video generation model to achieve **19.5 Frames Per Second (FPS)** while rendering surprisingly long clips—up to a minute in duration—on a **single GPU**. Crucially, the model's code and weights are publicly available. This is not just an incremental update; it signals an inflection point driven by three simultaneous shifts: radical efficiency, aggressive open-sourcing, and shifting competitive dynamics.

The Efficiency Barrier Shattered: Near Real-Time Generation

To understand the significance of 19.5 FPS, we must first appreciate the context of video generation efficiency. Standard cinema plays back at 24 FPS, so a generator that approaches that throughput produces video almost as fast as it can be watched. When proprietary models like OpenAI’s Sora or Google’s Veo showcase their power, they do so under conditions of extreme, specialized computation. Their pipelines are not public, but they are generally understood to rely on massive parallel processing, often yielding beautiful results while demanding infrastructure that only the largest tech giants can afford.

Helios changes the math. At near real-time speed (19.5 FPS) on *one* GPU, a minute-long scene could be generated in roughly a minute (about 74 seconds if the clip plays back at 24 FPS), rather than the hours that less optimized architectures can require. For AI engineers and hardware analysts, this speaks directly to breakthroughs in **inference optimization**.
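The arithmetic behind that estimate can be sketched in a few lines. The 24 FPS playback rate is an assumption borrowed from the cinema figure mentioned earlier; the native frame rate of Helios output is not stated here, and the function names are purely illustrative. If the clip's playback rate equals the generation rate, generation takes exactly as long as the clip; at 24 FPS playback it takes somewhat longer.

```python
def generation_seconds(clip_seconds: float, playback_fps: float, generation_fps: float) -> float:
    """Wall-clock seconds needed to generate a clip of the given length."""
    total_frames = clip_seconds * playback_fps  # frames the clip contains
    return total_frames / generation_fps        # time to produce them


def real_time_factor(playback_fps: float, generation_fps: float) -> float:
    """Values >= 1.0 mean frames are generated at least as fast as they play back."""
    return generation_fps / playback_fps


if __name__ == "__main__":
    # Assumed: 60-second clip, 24 FPS playback, 19.5 FPS generation throughput.
    seconds = generation_seconds(60, 24, 19.5)
    factor = real_time_factor(24, 19.5)
    print(f"generation time: {seconds:.1f} s, real-time factor: {factor:.2f}")
```

Under these assumptions the model runs at a bit over 80% of playback speed, which is why "near real-time" is the right description rather than "real-time".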

This optimization likely stems from advancements in how the model handles temporal consistency—ensuring objects look the same from one frame to the next without flickering or suddenly changing shape. If Bytedance has managed to bake this consistency directly into a highly efficient decoding schedule for their diffusion process, it means they have sidestepped the massive overhead associated with sequential rendering.

What this means for the future: This move immediately lowers the barrier for high-quality video production. We are moving from a world where generating video is a specialized batch process to one where it can become an interactive tool, much like current image generation. This hints at future applications where video is generated instantly alongside voice or text input, moving AI creation closer to a conversation.

The Open-Weight Gambit: Democratizing the Video Frontier

Perhaps more disruptive than the speed is the decision to release the **code and weights publicly**. For years, the most advanced generative models (like the initial DALL-E or Sora) have been kept "closed," accessible only via controlled APIs. This allows the owning company to maintain competitive advantage and control safety protocols.

Helios is following the path paved by Meta’s Llama family—the open-weight strategy. This is a declaration that Bytedance believes rapid iteration and community adoption are more valuable than exclusive control over the core technology. For the AI strategist and developer communities, this is a major inflection point.

- Accelerated Innovation: Thousands of independent developers and smaller labs can now download, inspect, modify, and fine-tune Helios. They can test novel applications or specialize the model for specific domains (e.g., architectural visualization, specialized animation styles) far faster than a single corporate team can.

- Standardization of Benchmarks: When a high-performing model is open, it becomes a de facto standard against which all future, efficiency-focused models must be judged.

- Trust and Transparency: Open weights allow external researchers to audit the model for bias, safety flaws, or unexpected emergent behaviors, building broader industry trust.

This strategy directly contrasts with the closed ecosystems being built by competitors. While closed models might offer polished, user-facing products (like a sleek Sora interface), the open-weight approach ensures that the foundational *technology* propagates widely, often leading to unexpected and disruptive applications.

The Competitive Landscape: Bytedance vs. The Closed Ecosystems

Bytedance’s involvement is not accidental; it is deeply strategic. As the owner of TikTok, the company sits at the epicenter of short-form video consumption globally. Releasing a state-of-the-art, open video model serves several critical business functions, positioning it powerfully against rivals like Google and Meta.

Analyst reports often frame AI competition as a race for talent and foundational technology. By releasing Helios, Bytedance is positioning itself as a leader in *efficient* and *accessible* AI infrastructure, directly appealing to the broader developer community. This can act as a magnet for top engineering talent who prefer contributing to open projects rather than closed silos.

If Helios can be easily integrated into community workflows, it creates an environment where video creation—and subsequent distribution—is heavily favored on platforms that support these open standards, indirectly benefiting Bytedance’s core platforms. This is a clear competitive salvo, asserting that high-end generative capabilities do not need to remain locked behind the walls of Silicon Valley’s largest incumbents.

Future Implications: What Helios Means for Business and Society

The convergence of high fidelity, fast rendering, and accessibility heralds profound changes across several sectors:

1. The Explosion of Personalized Content

For businesses, especially those focused on digital marketing and e-commerce, the implications are immediate. Imagine generating 60-second product demonstration videos tailored specifically to a user’s past purchasing behavior—all generated on demand, rather than being painstakingly pre-rendered.

If the iteration time drops from hours to seconds, creative teams can experiment wildly. Marketers can A/B test dozens of video narratives in the time it previously took to produce one. This speed fundamentally changes the economics of digital content creation, making mass customization viable.

2. Redefining Entertainment Production Workflows

Independent filmmakers and small animation studios will gain access to tools that were previously reserved for massive studios. While Helios may not match the photorealism of the most computationally expensive proprietary models, its accessibility and speed mean it could cover a large share of everyday creative needs with very little waiting.

This democratization shifts power away from centralized rendering farms toward individual creative professionals equipped with powerful consumer or prosumer hardware.

3. Ethical and Societal Challenges Magnified

Speed and accessibility are double-edged swords. The easier it is to generate high-quality, minute-long video content cheaply, the more difficult it becomes to discern authenticity. The challenge of deepfakes and misinformation, already pressing, will escalate dramatically.

When complex video generation moves from a cloud API to a downloadable package running locally on a high-end PC, provenance tracking becomes much harder, because there is no central service left to log or label what was generated. Societal efforts to develop robust watermarking and verification technologies must keep pace with Bytedance’s (and others’) inference breakthroughs.

Actionable Insights for Navigating the New Video AI Landscape

For organizations looking to capitalize on this shift, mere observation is insufficient. Action is required across three fronts:

- Invest in Optimization Expertise: If your business relies on future video pipelines, understanding the specific optimization techniques used in Helios is paramount. Knowledge of efficient diffusion scheduling, quantization, or novel attention mechanisms will be critical for building proprietary solutions on top of, or alongside, open-source foundations.

- Establish Open-Source Governance Policies: For enterprise adoption, understand the licensing implications of using open-weight models like Helios. Does the license permit commercial use? How will internal data be used to fine-tune the weights? Creating clear policies for engaging with open models ensures compliance and maximizes creative freedom.

- Benchmark Against Single-GPU Latency: Stop viewing AI video generation solely through the lens of total training cost or maximum output resolution. Start prioritizing *inference latency*. The winner in real-world deployment will be the model that can deliver the required quality fastest on the most accessible hardware. Helios sets a new, high bar for this benchmark.
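As a starting point for the latency benchmarking suggested above, here is a minimal sketch of a timing harness. It is not Helios's API: `benchmark_fps` and `fake_generate` are hypothetical names, and the stand-in generator simply sleeps so the script runs anywhere. With a real GPU model, the callable would wrap the actual inference call and would need to wait for the device to finish (for example via `torch.cuda.synchronize()` in PyTorch) before the clock is read.

```python
import statistics
import time
from typing import Callable, Dict


def benchmark_fps(generate: Callable[[int], object], num_frames: int,
                  warmup: int = 1, runs: int = 3) -> Dict[str, float]:
    """Time a frame-generating callable and report median latency and throughput."""
    for _ in range(warmup):
        generate(num_frames)  # warm-up runs are excluded from the timings

    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        generate(num_frames)
        latencies.append(time.perf_counter() - start)

    median = statistics.median(latencies)
    return {
        "median_latency_s": median,
        "frames_per_second": num_frames / median,
    }


def fake_generate(num_frames: int) -> list:
    """Hypothetical stand-in for a model call: sleeps about 1 ms per frame."""
    time.sleep(0.001 * num_frames)
    return [None] * num_frames


if __name__ == "__main__":
    print(benchmark_fps(fake_generate, num_frames=20))
```

Reporting the median rather than the mean keeps a single slow run (a cold cache, a background process) from distorting the comparison between models or hardware.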

Bytedance’s Helios is more than just a research paper achievement; it is a declaration that the era of slow, centralized video AI is ending. The combination of 14 billion parameters, near real-time speed, and open availability suggests that the next wave of digital content creation will be faster, cheaper, and profoundly more decentralized than we previously anticipated.