The LLM Operational Frontier: Why Function Calling and API Deployment Define the Next AI Era

For the last few years, the conversation around Large Language Models (LLMs) focused almost exclusively on raw intelligence: How smart is the next model? Can it reason better? This has led to a breathtaking parade of benchmark scores. However, recent industry analysis, such as the comparison between theoretical heavyweights like MiniMax M2.5, GPT-5.2, Claude Opus 4.6, and Gemini 3.1 Pro, signals a profound pivot. The new battleground is not just intelligence, but operational utility.

The core takeaway from analyzing these top-tier models in a practical deployment context, specifically using public Model Context Protocol (MCP) servers as API endpoints and mastering function calling, is clear: the future of AI isn't just about better chatbots; it's about building robust, reliable, and automated AI systems.

The Move from Lab Benchmarks to Production Readiness

When we compare models, we often turn to standardized tests (benchmarks) to gauge overall capacity. These tests, such as MMLU or the HELM evaluation suite, measure general knowledge, common sense, and complex reasoning. Such standardized measures remain vital for understanding the "ceiling" of a model's intelligence.

However, an incredibly smart model trapped in a slow or expensive service is of little use for high-volume enterprise tasks. Analyzing hypothetical models like GPT-5.2 or Claude Opus 4.6 operating over public APIs forces us to consider three practical realities:

- Latency: How fast does the API respond?

- Cost: What is the price per token for complex, multi-step requests?

- Reliability: Can the endpoint handle enterprise-level traffic spikes?

This is why API deployment latency and cost analysis are becoming central to IT strategy. For a business automating customer service or running complex data pipelines, an extra half second per API response, multiplied across thousands of requests, can add up to frustrated customers and wasted processing time.
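One way to make the latency question concrete is to time an endpoint repeatedly and look at the median and the tail rather than a single request. The sketch below assumes nothing about any particular provider: `fake_endpoint` is a hypothetical stand-in for a real API call, and the nearest-rank p95 is just one common way to summarize tail latency.

```python
import statistics
import time
from typing import Callable, Dict, List


def measure_latencies(call: Callable[[], object], runs: int = 20) -> List[float]:
    """Time `call` repeatedly and return per-request latencies in seconds."""
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        latencies.append(time.perf_counter() - start)
    return latencies


def summarize(latencies: List[float]) -> Dict[str, float]:
    """Return the median (p50) and nearest-rank 95th percentile (p95)."""
    ordered = sorted(latencies)
    # Nearest-rank percentile: rank = ceil(0.95 * n), done in integer math.
    rank = max(1, (95 * len(ordered) + 99) // 100)
    return {"p50": statistics.median(ordered), "p95": ordered[rank - 1]}


def fake_endpoint() -> str:
    """Hypothetical stand-in for a real API request."""
    time.sleep(0.01)
    return "ok"


if __name__ == "__main__":
    print(summarize(measure_latencies(fake_endpoint)))
```

Tracking p95 alongside the median matters because a service can look fast on average while a small share of slow requests still dominates the user experience.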

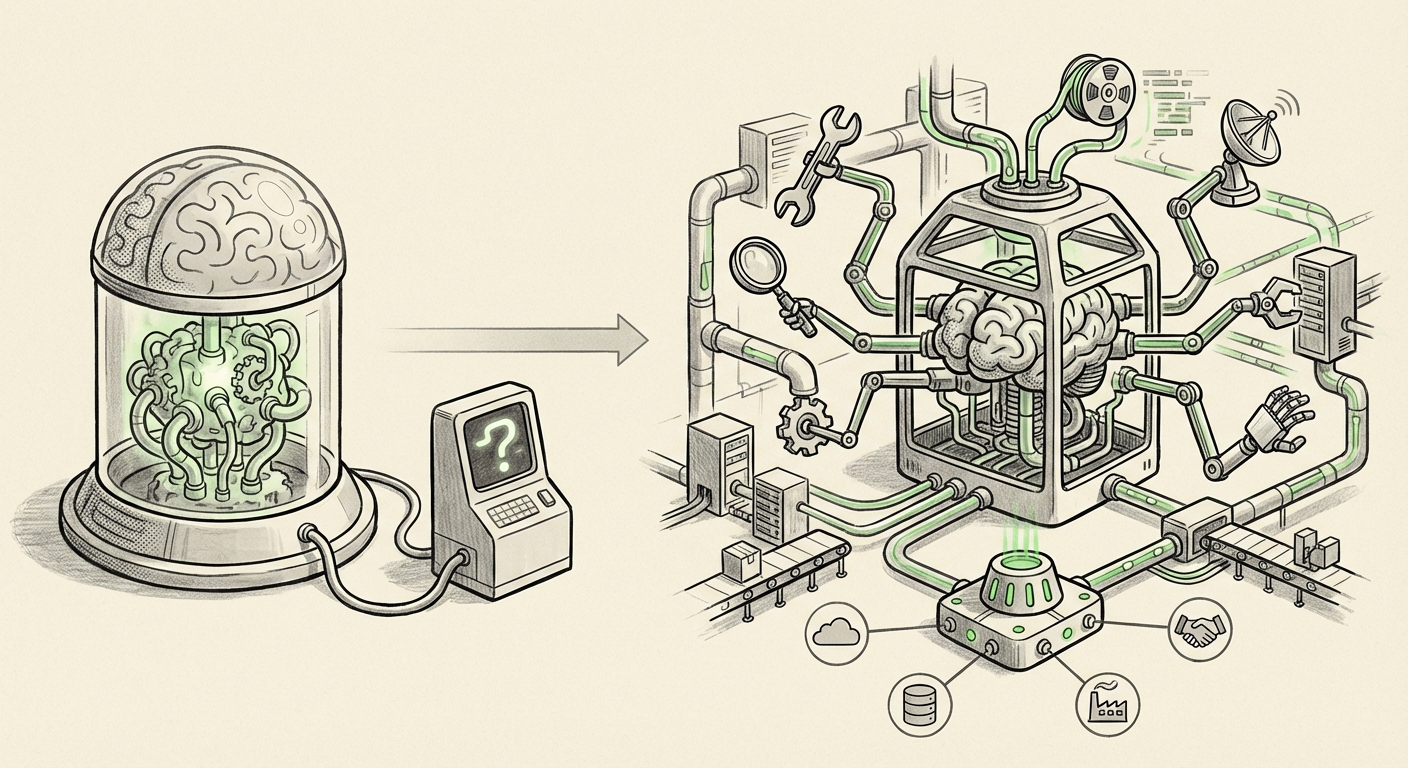

The Rise of Function Calling: Giving LLMs Hands and Feet

The most significant feature highlighted in the modern LLM comparison is **function calling** (or tool use). To put it simply, raw LLMs are fantastic at generating text, but they are fundamentally limited to the knowledge they were trained on. They cannot, on their own, check the current stock price, book a flight, or query a proprietary internal database.

Function calling changes this equation. It allows developers to describe external tools—functions, APIs, or databases—to the LLM in a structured format. When the user asks a question requiring external data (e.g., "What is the weather in London and draft an email summarizing the meeting notes from yesterday?"), the LLM doesn't guess. Instead, it correctly formats a request (a "function call") telling the hosting platform, "Execute the `get_weather('London')` function and the `read_file('meeting_notes.txt')` function."
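A minimal sketch of that exchange follows. The field names in `TOOLS` follow a JSON-Schema-like layout that many providers use in some form, but the exact format here is an assumption, as are the stubbed `get_weather` and `read_file` implementations and the shape of the model's emitted call.

```python
import json
from typing import Any, Callable, Dict

# Tool descriptions the application would send to the model.
# The layout is illustrative; real providers each define their own format.
TOOLS = [
    {
        "name": "get_weather",
        "description": "Return the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
    {
        "name": "read_file",
        "description": "Return the contents of a text file.",
        "parameters": {
            "type": "object",
            "properties": {"path": {"type": "string"}},
            "required": ["path"],
        },
    },
]


def get_weather(city: str) -> str:
    """Stub: a real implementation would query a weather service."""
    return f"Sunny, 22C in {city}"


def read_file(path: str) -> str:
    """Stub: a real implementation would read from storage."""
    return f"(contents of {path})"


# Maps tool names to the local functions that implement them.
REGISTRY: Dict[str, Callable[..., Any]] = {
    "get_weather": get_weather,
    "read_file": read_file,
}


def execute_call(raw_call: str) -> Any:
    """Parse a model-emitted function call (JSON text) and run it."""
    call = json.loads(raw_call)
    name = call["name"]
    if name not in REGISTRY:
        raise ValueError(f"Model requested unknown tool: {name}")
    return REGISTRY[name](**call["arguments"])


if __name__ == "__main__":
    # What a model's structured output might look like for the weather request.
    print(execute_call('{"name": "get_weather", "arguments": {"city": "London"}}'))
```

The key design point is that the model only proposes the call; the hosting application decides whether to run it and is the only party that touches real systems.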

Why This Feature is the New Benchmark

The complexity of modern AI demands more than just text completion. It requires action. This is the foundation of **Agentic AI workflows and external tool orchestration**. Success in this area depends on three capabilities of the model:

- Intent Recognition: Correctly deciding when a tool is needed.

- Parameter Generation: Filling in the correct arguments for the tool (e.g., knowing the city code for London).

- Output Interpretation: Taking the result from the external tool (e.g., "Sunny, 22C") and seamlessly weaving it back into a coherent, natural language answer.
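The three steps above can be sketched as a single round trip. In this illustration a simple rule-based function, `decide_tool_call`, stands in for the model's decision making; it is a hypothetical placeholder, as are `KNOWN_CITIES` and the stubbed weather result, and a real system would delegate steps 1 to 3 to the LLM itself.

```python
from typing import Any, Dict, Optional

KNOWN_CITIES = ("London", "Paris", "Tokyo")  # illustrative only


def decide_tool_call(message: str) -> Optional[Dict[str, Any]]:
    """Stand-in for the model: steps 1 (intent) and 2 (parameters)."""
    lowered = message.lower()
    # Step 1, intent recognition: is a tool needed at all?
    if "weather" not in lowered:
        return None
    # Step 2, parameter generation: fill in the tool's arguments.
    for city in KNOWN_CITIES:
        if city.lower() in lowered:
            return {"name": "get_weather", "arguments": {"city": city}}
    return None


def get_weather(city: str) -> str:
    """Stubbed external tool."""
    return "Sunny, 22C"


def interpret(call: Dict[str, Any], result: str) -> str:
    """Step 3, output interpretation: fold the result into a reply."""
    city = call["arguments"]["city"]
    return f"The weather in {city} is currently {result}."


def answer(message: str) -> str:
    call = decide_tool_call(message)
    if call is None:
        return "No tool needed; answer directly from the model."
    result = get_weather(**call["arguments"])
    return interpret(call, result)


if __name__ == "__main__":
    print(answer("What is the weather in London?"))
```

Each step is a separate failure point: a model can pick the right tool with the wrong argument, or call the tool correctly and then misreport the result, which is why tool-use evaluations tend to score the steps individually.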

Independent LLM function calling benchmarks are emerging precisely because the industry recognizes that a model that excels at this orchestration is far more valuable than one that simply scores higher on a general-knowledge test. The ability to reliably chain tools together, from checking the weather to updating CRM records, is the difference between a novelty and a mission-critical application.

The Deployment Dilemma: Public MCP Servers

When models are exposed through "public MCP servers as an API endpoint," businesses access models and tools hosted by major cloud providers or specialized platform providers, such as the one cited in the comparison, rather than running them in-house. This approach has major implications:

Pros: Speed to Market and Scalability

For most companies, hosting a model that is nearing the scale of GPT-5.2 locally (on-premise or self-managed) is prohibitively expensive and technically demanding. Using a public API endpoint abstracts away all the hardware management, GPU cluster maintenance, and complex serving infrastructure.

This allows developers to focus solely on the application logic—the clever prompts and the tool integration—rather than the MLOps nightmare of running cutting-edge infrastructure.

Cons: Control, Cost, and Data Sovereignty

Relying on public endpoints introduces vendor lock-in and costs that must be meticulously managed. The cost structure shifts from capital expenditure (buying hardware) to operational expenditure (paying per query). For high-throughput use cases, this OpEx can balloon quickly if the model is not performing efficiently, leading developers to search for models that offer equivalent performance at a fraction of the operational cost.
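A back-of-the-envelope calculation shows how per-query pricing accumulates. Every figure below (prices, token counts, calls per task, monthly volume) is invented for illustration, and `ModelProfile` is a hypothetical helper, not part of any vendor SDK.

```python
from dataclasses import dataclass


@dataclass
class ModelProfile:
    name: str
    price_per_1k_tokens: float  # USD, hypothetical
    tokens_per_call: int        # average prompt + completion tokens
    calls_per_task: float       # average calls needed to finish one task

    def cost_per_task(self) -> float:
        """Cost of one completed task: price x tokens x calls."""
        per_call = self.price_per_1k_tokens * self.tokens_per_call / 1000
        return per_call * self.calls_per_task


def monthly_opex(profile: ModelProfile, tasks_per_month: int) -> float:
    """Operational spend for a given monthly task volume."""
    return profile.cost_per_task() * tasks_per_month


# Two made-up models: A is cheaper per token but needs more calls per task.
model_a = ModelProfile("model-a", price_per_1k_tokens=0.002,
                       tokens_per_call=1500, calls_per_task=4)
model_b = ModelProfile("model-b", price_per_1k_tokens=0.003,
                       tokens_per_call=1500, calls_per_task=2)

if __name__ == "__main__":
    for model in (model_a, model_b):
        print(model.name, round(monthly_opex(model, 1_000_000), 2))
```

With these assumed numbers, the model with the lower per-token price ends up costing more per completed task, because it needs twice as many calls to get there.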

This dynamic is fueling the push toward the open-source ecosystem. Competitive pressure between open-source LLMs and proprietary APIs means that open models are quickly catching up on features like function calling. That often allows organizations to bring the inference stack in-house or use smaller, cheaper hosting options, reducing their dependency on a few hyperscalers.

Future Implications: The Agent Economy

The synthesis of world-class intelligence (benchmarks), precise action-taking (function calling), and scalable access (API deployment) creates the foundation for the next major wave of AI: truly autonomous agents.

Actionable Insights for Businesses

What should technology leaders take away from this operational shift?

- Prioritize Tool Quality Over Raw Score: When selecting a foundational model for an enterprise workflow, demand high-quality, low-latency function calling performance documentation. A 2% drop in MMLU score might be acceptable if the model's tool-use accuracy increases by 15%.

- Develop Robust Agent Orchestration Layers: Invest in frameworks (like LangChain or custom code using libraries that support these models) that can gracefully handle API failures, retry function calls, and manage complex sequences of tool use. The model and its endpoint will sometimes fail; your orchestration layer must be built to recover.

- Factor in Total Cost of Ownership (TCO): Model A might be cheaper per token than Model B, but if Model A requires twice as many calls to achieve the same outcome due to weaker reasoning in tool selection, Model B has a lower TCO. This requires rigorous testing against your specific application workload, not just public leaderboards.

- Maintain Multi-Model Strategy: Given the rapid iteration cycle (where a model like the hypothetical Gemini 3.1 Pro could be superseded next quarter), organizations should architect systems to swap out the underlying LLM provider easily. API standardization is key to avoiding lock-in.
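Two of the points above, retrying failed calls and keeping providers swappable, can be sketched together. The code assumes each provider is wrapped as a plain function from prompt to text and signals failure with `ProviderError`; both conventions are hypothetical and chosen only to keep the example self-contained.

```python
import time
from typing import Callable, Sequence

Provider = Callable[[str], str]  # assumed common interface for any LLM backend


class ProviderError(Exception):
    """Hypothetical error raised when a provider call fails."""


def call_with_retry(provider: Provider, prompt: str, max_attempts: int = 3,
                    base_delay: float = 0.5,
                    sleep: Callable[[float], None] = time.sleep) -> str:
    """Retry one provider with exponential backoff between attempts."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return provider(prompt)
        except ProviderError:
            if attempt == max_attempts - 1:
                raise  # out of attempts; let the caller decide what to do
            sleep(base_delay * 2 ** attempt)  # 0.5s, 1s, 2s, ...
    raise AssertionError("unreachable")


def call_with_fallback(providers: Sequence[Provider], prompt: str,
                       **retry_kwargs) -> str:
    """Try each provider in order, moving on once its retries are exhausted."""
    last_error = None
    for provider in providers:
        try:
            return call_with_retry(provider, prompt, **retry_kwargs)
        except ProviderError as err:
            last_error = err
    raise ProviderError("all providers failed") from last_error


if __name__ == "__main__":
    def primary(prompt: str) -> str:
        raise ProviderError("primary is down")

    def secondary(prompt: str) -> str:
        return f"secondary answered: {prompt}"

    print(call_with_fallback([primary, secondary], "hello", base_delay=0.01))
```

Because every backend sits behind the same `Provider` signature, swapping or reordering models is a change to one list rather than to the application logic.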

Conclusion: The Invisible Infrastructure

The comparison between leading LLMs, when viewed through the lens of API deployment and tool integration, reveals that AI development is maturing from an exploratory science into a rigorous engineering discipline. The cutting-edge LLMs are rapidly becoming less about their distinct personalities and more about their seamless integration into existing software ecosystems.

The future success of AI deployment will belong not just to the companies that build the largest models, but to the organizations that can most effectively integrate these models’ sophisticated reasoning into real-world business processes via reliable, cost-effective API endpoints equipped with powerful tool-use capabilities. Function calling is the universal language that translates raw potential into tangible business value.