The Tipping Point: Analyzing the Implications of 900 Million Weekly AI Users

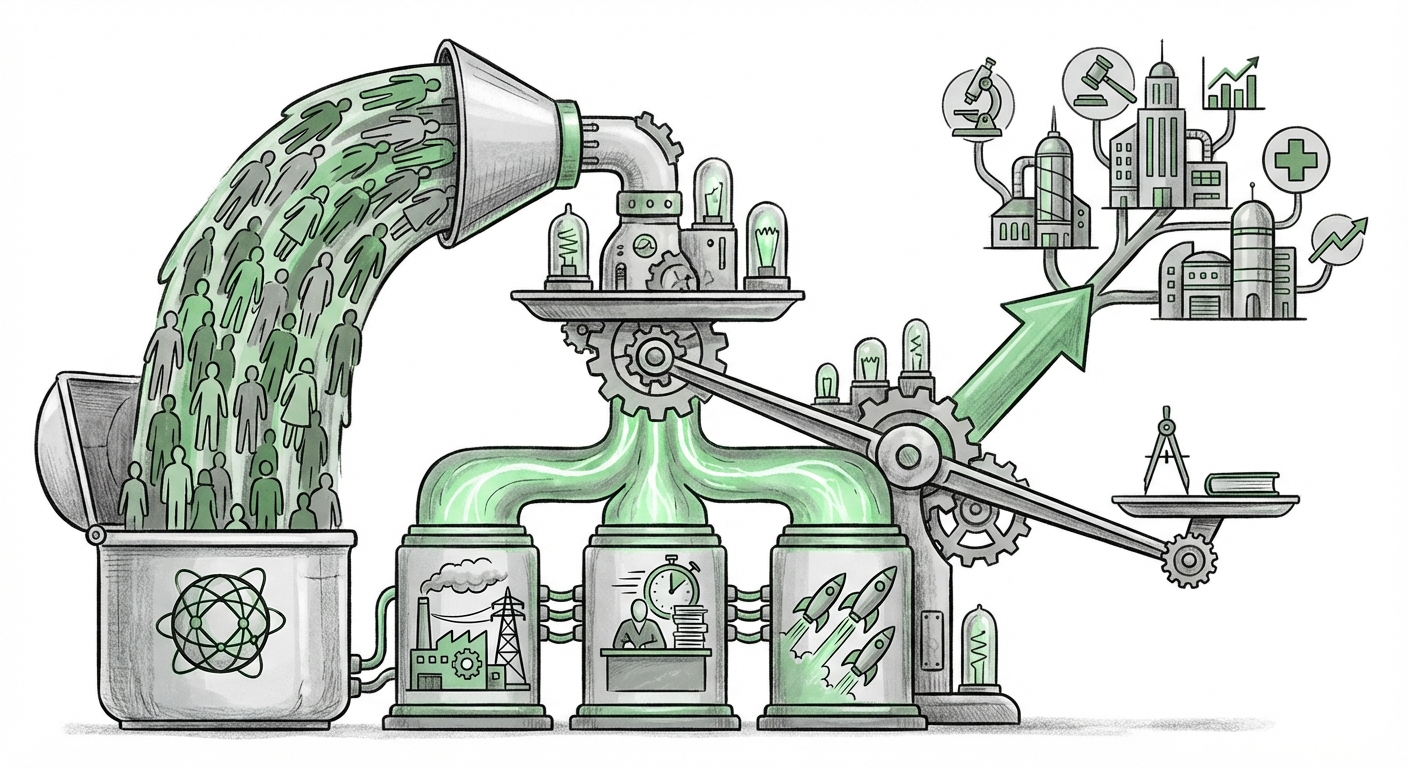

The digital world runs on metrics: Daily Active Users (DAU), Monthly Recurring Revenue (MRR), and engagement rates. When a single application like ChatGPT reportedly reaches **900 million weekly users**, the number signals more than popularity: it points to a societal migration toward a new utility. Viral success alone does not sustain that kind of reach. It is evidence that Artificial Intelligence has crossed the chasm, moving from an experimental technology to a weekly habit for nearly a billion people.

This staggering figure forces us to ask critical questions: What is the competitive reality underpinning this adoption? How much sheer computing power does it take to run a service this popular? And most importantly, what does this mean for the future of work, infrastructure, and governance?

From Niche Tool to Universal Utility: What 900 Million Users Really Means

A service with 900 million weekly active users is embedded in the routines of a large share of the world's internet users. Search engines, major social platforms, and email clients are the usual benchmarks for this kind of mass adoption. For an AI model, which changes how knowledge is accessed and tasks are executed, this level of usage suggests several key shifts:

- Behavioral Change: Users are not just testing AI; they are relying on it for writing, debugging code, drafting communications, brainstorming, and learning. The "AI-first" approach is becoming the default.

- Erosion of Traditional Interfaces: Traditional search and basic software functions are being augmented or replaced by conversational interfaces. For many users, the first step in finding an answer is now asking the AI rather than clicking through ten different websites.

- The New Productivity Baseline: For a growing segment of the workforce, using AI is no longer a competitive advantage; it is the minimum requirement for efficiency.

To judge whether this hyper-adoption is sustainable and representative of the entire sector, we must look beyond the headline number to the pillars supporting it, drawing context from the market, the server room, and the boardroom.

The Three Pillars of AI Scale: Contextualizing Hyper-Adoption

Analyzing the 900 million user figure requires looking at the technology from three essential angles: the crowded market, the heavy hardware load, and the measurable impact on human work.

1. The Competitive Landscape: Is Everyone Growing This Fast?

A single company reaching this scale is impressive, but a sector has truly matured only when its competitors are also growing explosively. The question is whether the entire ecosystem shares that vitality.

| Search Focus | Value Proposition |
|---|---|
| "Global generative AI usage statistics Q1 2024" | Seeks aggregated market reports to confirm if growth is uniform across major players (Google Gemini, Claude, etc.), validating the mass-market shift. |

If industry reports show that Google and Anthropic are also seeing hundreds of millions of interactions weekly, it solidifies the narrative: AI has become a foundational layer of the modern internet. For businesses, this means choosing an AI partner is becoming as crucial as choosing an operating system. The competitive fight will shift from who has the best base model to who offers the best integration, enterprise security, and specific vertical expertise.

2. The Infrastructure Arms Race: Paying for Popularity

Every question asked, every piece of code generated, and every email drafted by 900 million weekly users has to be served by inference on specialized hardware. That load translates directly into massive energy consumption and capital expenditure (CapEx) by the cloud providers that host these models.

| Search Focus | Value Proposition |
|---|---|
| "AI chip demand forecast and hyperscaler capital expenditure 2024" | Examines the massive investment by cloud giants (AWS, Azure) in specialized hardware (like GPUs) required to sustain the response times necessary for so many concurrent users. |

For the layperson, this means the cost of running AI at scale is soaring. For decision-makers, it highlights a critical vulnerability: AI's utility is currently tied to the availability of cutting-edge hardware. Companies must budget not just for API calls but with an eye on the massive national and global investment in server farms needed to keep the AI "on," since that build-out shapes both pricing and capacity. High CapEx figures are consistent with the scale of the user base, indicating that demand is translating into real-world infrastructure commitments.
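A rough back-of-envelope calculation makes the load more concrete. The sketch below converts the reported weekly user count into an average request rate. The requests-per-user figures and the 2x peak-to-average ratio are assumptions chosen purely for illustration, not reported data, and the function names are hypothetical.

```python
# Back-of-envelope conversion from weekly users to request rate.
# The per-user request counts and the peak ratio are ASSUMPTIONS, not reported data.

SECONDS_PER_WEEK = 7 * 24 * 60 * 60  # 604,800 seconds


def average_requests_per_second(weekly_users: int, requests_per_user_per_week: float) -> float:
    """Average (not peak) request rate implied by a weekly user count."""
    total_weekly_requests = weekly_users * requests_per_user_per_week
    return total_weekly_requests / SECONDS_PER_WEEK


def peak_requests_per_second(avg_rps: float, peak_to_average: float) -> float:
    """Scale the average by an assumed peak-to-average ratio, since traffic is not flat."""
    return avg_rps * peak_to_average


if __name__ == "__main__":
    weekly_users = 900_000_000  # headline figure from the article
    for per_user in (5, 20, 50):  # hypothetical requests per user per week
        avg = average_requests_per_second(weekly_users, per_user)
        peak = peak_requests_per_second(avg, 2.0)  # hypothetical 2x peak
        print(f"{per_user:>3} req/user/week -> ~{avg:,.0f} req/s average, ~{peak:,.0f} req/s peak")
```

Under these assumptions, even the low case works out to several thousand requests per second around the clock, and because real traffic rises above its average at busy hours, capacity has to be planned around the peaks rather than the mean.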

3. The Economic Justification: Productivity in the Wild

Why are so many people using these tools? Because they work. The strongest justification for mass adoption is a demonstrable improvement in output efficiency across professional domains.

| Search Focus | Value Proposition |
|---|---|
| "Impact of large language models on white-collar productivity statistics" | Seeks hard data on how much faster knowledge workers are becoming due to LLMs, justifying the widespread adoption seen in the user numbers. |

If studies were to show that marketing teams complete first drafts 40% faster, or that developers cut bug identification time by 25%, that quantifiable ROI would help explain the 900 million user figure. This is the engine driving enterprise integration. Businesses that fail to adopt standardized LLM workflows risk being outpaced by competitors that capture such efficiency gains.
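Figures like "40% faster" are easy to misread, because they can mean either 40% less time per task or 40% more output per hour. The sketch below applies both readings to a hypothetical 10-hour weekly drafting workload; the workload and the helper names are illustrative assumptions, not data from any study.

```python
# Two common readings of "X% faster", applied to a hypothetical workload.


def hours_if_less_time(baseline_hours: float, pct_faster: float) -> float:
    """Reading 1: the task takes pct_faster less time (10 h at 40% -> 6 h)."""
    return baseline_hours * (1 - pct_faster)


def hours_if_higher_rate(baseline_hours: float, pct_faster: float) -> float:
    """Reading 2: output per hour rises by pct_faster (10 h at 40% -> ~7.14 h)."""
    return baseline_hours / (1 + pct_faster)


if __name__ == "__main__":
    baseline = 10.0  # hypothetical hours per week spent on first drafts
    for label, fn in (("less time", hours_if_less_time), ("higher rate", hours_if_higher_rate)):
        after = fn(baseline, 0.40)
        print(f"{label:>11}: {after:.2f} h remaining, {baseline - after:.2f} h saved per week")
```

The gap between the two readings (4 hours versus roughly 2.9 hours saved) is why an ROI estimate should state which definition of "faster" it relies on.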

The Future: Implications for Business Strategy and Societal Structure

The scale of AI usage sets the stage for the next phase of technological evolution, bringing both immense opportunity and unavoidable challenges.

Actionable Insight for Businesses: AI Literacy is Non-Negotiable

With nearly a billion people using the tool weekly, familiarity with prompt engineering and AI outputs is rapidly becoming a mandatory skill, much as basic computer literacy did in the 1990s. Businesses must:

- Standardize Tooling: Move beyond ad-hoc personal use. Select approved, secure LLM platforms and train teams on standardized workflows to capture maximum productivity gains consistently.

- Focus on Verification: At this volume, even a constant per-response error rate produces a large absolute number of inaccurate "hallucinations" and pieces of AI-generated misinformation. Establish rigorous human-in-the-loop verification processes for all critical outputs.

- Re-evaluate Roles: Analyze which tasks are now being consistently absorbed by AI. Focus employee development on high-level strategy, complex problem-solving, and relationship management—tasks where human nuance remains paramount.
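The verification point comes down to simple multiplication: at a fixed per-response error rate, the number of flawed outputs grows in step with volume, and human review removes only the share it actually catches. The sketch below uses a hypothetical 2% error rate, 50% review coverage, and 90% catch rate; none of these numbers are measured values, and the function names are illustrative.

```python
# Expected flawed outputs before and after a human-in-the-loop review step.
# All rates are hypothetical inputs, not measurements of any real model.


def expected_errors(responses: int, error_rate: float) -> float:
    """Errors scale linearly with volume when the per-response rate is fixed."""
    return responses * error_rate


def errors_after_review(responses: int, error_rate: float,
                        review_coverage: float, catch_rate: float) -> float:
    """Errors left after reviewing a share of outputs and catching a share of their errors.

    Assumes errors are spread evenly across reviewed and unreviewed outputs.
    """
    errors = expected_errors(responses, error_rate)
    caught = errors * review_coverage * catch_rate
    return errors - caught


if __name__ == "__main__":
    rate = 0.02  # hypothetical: 2% of responses contain a material error
    for volume in (1_000, 1_000_000):
        print(f"{volume:>9,} responses: "
              f"{expected_errors(volume, rate):,.0f} expected errors, "
              f"{errors_after_review(volume, rate, 0.5, 0.9):,.0f} left after review")
```

Under these assumptions, reviewing half of the outputs still leaves more than half of the errors in place, so critical outputs warrant full review coverage.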

The Inevitable Regulatory Reckoning

When a platform reaches this size, national governments and supranational bodies take notice. A user base of 900 million a week raises questions of privacy, intellectual property, and market dominance in ways that small-scale beta testing never did.

| Search Focus | Value Proposition |
|---|---|
| "EU AI Act implications for widespread consumer AI adoption" OR "FTC scrutiny of large AI model monopolies" | Addresses the growing governance challenges, focusing on how massive user data handling and market concentration will be addressed by upcoming legislation. |

This scale forces regulators’ hands. We can expect rapid solidification of rules surrounding data lineage, transparency (knowing when you are interacting with AI), and accountability for harmful outputs. Companies operating at this scale must transition from viewing compliance as an afterthought to embedding regulatory adherence directly into model deployment.

The Path Forward: From Generalist Tool to Specialized Intelligence

The current phase is defined by the generalist LLM—the Swiss Army knife capable of writing a poem, summarizing a meeting, or debugging a line of Python. However, the future trajectory suggested by this massive user base points toward specialization.

When nearly a billion people are using a general tool, the next leap in value comes from tailoring that tool precisely to specific, high-stakes domains. We are likely to see the leading models fragment into highly specialized agents:

- Hyper-Specific Industry Models: AI trained exclusively on validated medical journals, complex legal precedents, or proprietary financial data. These models are likely to command a premium because their "hallucination" risk should be lower and their utility in critical workflows higher.

- Embedded AI: Instead of visiting a website to use the AI, the intelligence will be embedded directly into the software that runs the world—the CRM, the ERP system, the diagnostic equipment. The 900 million users will access AI without realizing they are doing so, making the interaction seamless and invisible.

- Personalized Models (Small but Mighty): As personal computing power grows and techniques like quantization improve, users will demand smaller, private models running locally on their devices, prioritizing data sovereignty over massive cloud-based generalism for sensitive tasks.
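Quantization shrinks a model by storing its weights at lower precision. The sketch below shows one simple variant, symmetric per-tensor int8 quantization, together with the weight-memory arithmetic for a hypothetical 7-billion-parameter model. Production schemes (per-channel scales, 4-bit formats, calibration data) are more involved, and the function names and toy weights here are illustrative assumptions.

```python
# Minimal sketch of symmetric per-tensor int8 quantization and weight-memory math.
# Function names are hypothetical; real toolchains use more elaborate schemes.


def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Storage for weights only (ignores activations, KV cache, and runtime overhead)."""
    return num_params * bits_per_weight / 8 / 1e9


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map the range [-max|w|, max|w|] onto integers in [-127, 127] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights; rounding error per weight is about scale / 2 at most."""
    return [v * scale for v in q]


if __name__ == "__main__":
    params = 7e9  # hypothetical 7B-parameter model
    for bits in (16, 8, 4):
        print(f"{bits:>2}-bit weights: ~{weight_memory_gb(params, bits):.1f} GB")

    weights = [0.82, -0.31, 0.05, -1.20, 0.64]  # toy weights, not from a real model
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"int8 values: {q}, scale={scale:.5f}, worst error={worst:.5f}")
```

Going from 16-bit to 4-bit weights cuts the weight footprint of the hypothetical 7B model from about 14 GB to about 3.5 GB, the kind of reduction that makes running models on personal devices plausible.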

The reality of 900 million weekly users means that the foundational shift is complete. The age of AI as a curiosity is over; the age of AI as essential infrastructure has begun. The next decade will be defined not by acquiring users, but by deepening the utility, securing the supply chain, and building the societal guardrails necessary to support this new digital reality.