The Great AI Benchmark Divide: Why Coding Dominates While 92% of Jobs Wait in the Wings

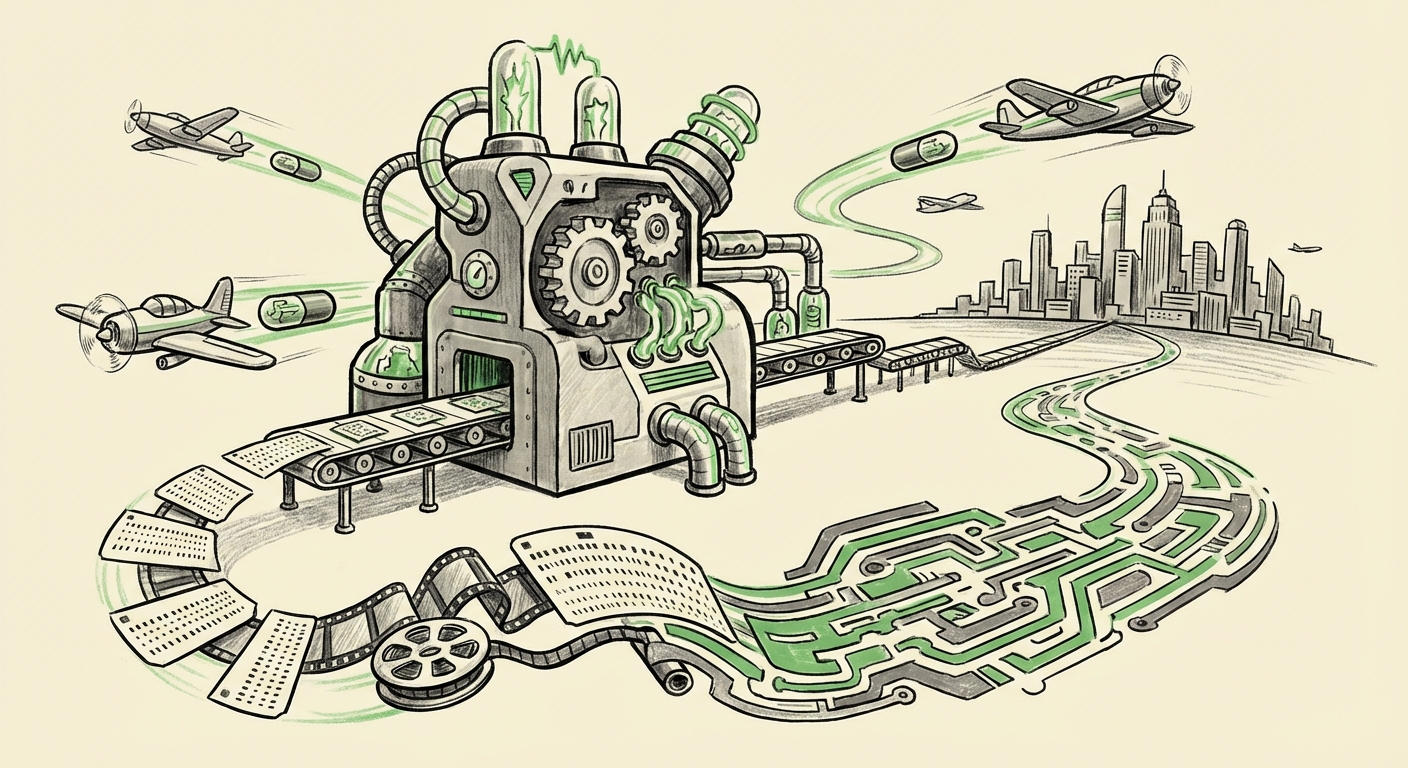

The current wave of Artificial Intelligence, driven by sophisticated Large Language Models (LLMs), promises a transformation of the global workforce comparable to the Industrial Revolution. However, a critical tension is emerging at the very foundation of AI development: how we measure progress. A recent study highlights a striking imbalance: AI agent benchmarks are overwhelmingly focused on coding tasks, effectively ignoring the vast majority of the US labor market—roughly 92% of jobs that don't involve writing software.

This isn't just an academic curiosity; it represents a major strategic bottleneck. If we only build AI that excels at coding, we risk creating brilliant, specialized tools while leaving the massive sectors of administration, service, healthcare coordination, and complex knowledge work vastly underserved by agentic AI. As technology analysts, we must dissect this divide, understand its origins, and map out the necessary pivot toward holistic evaluation.

The Siren Song of Code: Why Benchmarks Skew Technical

Why has coding become the de facto proving ground for AI agents? The answer lies in measurability and the nature of software development itself.

1. Deterministic Success

Coding is highly structured. If you ask an agent to write a function, the output is either correct (it passes the unit tests) or incorrect. Benchmarks like HumanEval and MBPP provide clear, binary pass/fail metrics. This clarity is invaluable for engineers iterating rapidly on model weights and architecture.
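To make the binary nature of this kind of scoring concrete, here is a minimal sketch of a pass/fail check. It is not the actual HumanEval or MBPP harness; the task, the test cases, and the names `TEST_CASES`, `passes_all_tests`, and `pass_rate` are all hypothetical and chosen only for illustration.

```python
from typing import Callable, List, Tuple

# Hypothetical test cases for a toy task: "return the sum of a list of integers".
TEST_CASES: List[Tuple[list, int]] = [
    ([1, 2, 3], 6),
    ([], 0),
    ([-1, 1], 0),
]


def passes_all_tests(candidate: Callable[[list], int]) -> bool:
    """Return True only if the candidate matches every expected output."""
    for args, expected in TEST_CASES:
        try:
            if candidate(args) != expected:
                return False
        except Exception:
            # A crash counts as a failure, just like a wrong answer.
            return False
    return True


def pass_rate(candidates: List[Callable[[list], int]]) -> float:
    """Fraction of candidate solutions that pass every test."""
    if not candidates:
        return 0.0
    return sum(passes_all_tests(c) for c in candidates) / len(candidates)


# Two illustrative "model outputs": one correct, one off by one.
def good_solution(xs: list) -> int:
    return sum(xs)


def buggy_solution(xs: list) -> int:
    return sum(xs) + 1


print(pass_rate([good_solution, buggy_solution]))  # 0.5
```

Nothing in this loop requires human judgment, which is the property that makes coding benchmarks cheap to run and easy to compare across model versions.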

For the business of AI development, this means faster R&D cycles. Researchers can quickly determine whether a model improvement translates into measurable, reproducible gains. This speed is addictive, especially in a highly competitive field.

2. The Availability of Training Data

The internet is saturated with structured code, documentation, and public repositories (such as those on GitHub). This rich corpus is well suited to supervised fine-tuning, and because code can be executed and tested, it also supplies an automatic signal for reinforcement learning. Conversely, defining "success" in a customer service interaction, a strategic planning session, or a nuanced negotiation is subjective, context-dependent, and difficult to label cheaply at scale.

3. The Developer as the First User

Initially, LLMs were primarily tools for developers. It made perfect sense to focus on improving the tool for the people building and testing it. The "AI coder" became the low-hanging fruit—the most direct and immediate application of advanced reasoning capabilities.

The Elephant in the Room: Neglecting the 92%

The focus on coding creates a significant blind spot. The bulk of economic activity, and therefore the bulk of AI-driven labor disruption, lies outside software engineering. This "92%" includes roles that require:

- Complex Context Switching: Moving between email triage, database lookups, report generation, and scheduling.

- Nuanced Communication: Handling sensitive client communications, managing emotional tone, and navigating organizational politics.

- Ambiguous Goal Definition: Tasks where the prompt is vague (e.g., "Improve our Q3 marketing ROI") rather than concrete (e.g., "Write a Python script to parse this CSV").

The study, highlighted in *The Decoder*, finds that current agent benchmarks fail to capture this reality, pointing to a severe misalignment between research focus and real-world utility for the mass market. This narrow testing scope risks creating "brilliant but brittle" agents: systems that score well on the tasks they were measured on and break down outside them.

Digging Deeper: Corroboration and Context

To understand the ramifications of this divide, we must look beyond the initial finding and examine the technical critiques and economic consequences. Three areas show where AI development needs to pivot.

Technical Rigor vs. Real-World Messiness

Critiques of the HumanEval and MBPP benchmarks show that even within coding, researchers question the validity of testing. If the foundational benchmark is flawed (for example, because test problems leaked into the training data, or because it covers only short, self-contained problems), the entire pipeline of agent advancement built upon it is shaky. This technical fragility is amplified when we try to apply the same logic to non-deterministic tasks.
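As a rough illustration of what a data contamination check can look like, the sketch below measures how many of a benchmark item's word n-grams also appear in a training document. This is a simplified heuristic under stated assumptions, not the method used by any particular benchmark or lab; the function names, the whitespace tokenization, and the choice of `n` are all illustrative.

```python
def ngrams(text: str, n: int) -> set:
    """Set of whitespace-token n-grams in the text."""
    tokens = text.split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def overlap_ratio(benchmark_item: str, corpus_doc: str, n: int = 3) -> float:
    """Share of the benchmark item's n-grams that also occur in corpus_doc."""
    item_grams = ngrams(benchmark_item, n)
    if not item_grams:
        return 0.0
    return len(item_grams & ngrams(corpus_doc, n)) / len(item_grams)


# Hypothetical benchmark solution and two hypothetical training documents.
item = "def add(a, b): return a + b"
leaked_doc = "# utils def add(a, b): return a + b # end"
clean_doc = "The quarterly report covers revenue and churn."

print(overlap_ratio(item, leaked_doc))  # 1.0
print(overlap_ratio(item, clean_doc))   # 0.0
```

A high ratio suggests the model may have seen the item during training, in which case a passing score says little about its ability to solve new problems.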

The Looming Economic Shift

The focus on coding means AI investment rushes toward automating engineering teams, while administrative and service jobs, which often make up the majority of a company's payroll, are left waiting for robust solutions. Analyses of the economic impact of LLMs on service-sector labor suggest that these sectors face massive disruption potential. If agents cannot reliably handle tasks like processing insurance claims or managing supply chain logistics today, the promised productivity boom in those areas will remain just that: a promise.

The Search for Holistic Metrics

The imperative for researchers now is to build AI agent benchmarks that go beyond code generation. We need evaluations that force agents to interact with complex, multi-step environments, use proprietary software interfaces, maintain long-term memory across sessions, and adapt to shifting human preferences. That means either expanding frameworks like **AgentBench** beyond their current limitations or developing entirely new methodologies that focus on planning, tool use, and external validation.

Implications for the Future of AI Development

This benchmark obsession shapes investment, academic publishing, and corporate strategy. What happens when we prioritize the wrong metrics?

1. The Productivity Paradox in Non-Technical Fields

The productivity paradox of specialized AI agents is a core business concern. Companies see LLMs excel in R&D labs but struggle to deploy them widely. A finance team needs an agent that can correctly reconcile two separate ledger systems, not just write a SQL query. If benchmarks only reward isolated coding success, companies deploying these agents for broader knowledge work will face frustration, because the agents lack the grounding in enterprise context, process adherence, and tool mastery that the 92% requires.

2. Widening the Skills Gap

An overemphasis on coding AI accelerates demand for high-level programmers who can manage and guide these tools, while delaying the development of tools that augment the skills of administrative staff, paralegals, or customer support agents. This risks creating a labor market cleaved in two: a small, highly augmented group of coders, and a large group whose non-coding roles remain largely untouched by meaningful AI augmentation. The result is slower overall productivity growth.

3. Stagnation of True General Intelligence

True Artificial General Intelligence (AGI) requires agents capable of solving problems in the messy, physical, and human world. Coding is a clean simulation of reasoning. By limiting testing to code, we are training systems to become excellent *simulators* of intelligence rather than *demonstrators* of generalized intelligence. The complex, iterative, and sometimes frustrating process of real-world problem-solving—which defines most white-collar work—is being bypassed.

Actionable Insights: Shifting the Development Paradigm

For AI labs, enterprises, and policymakers, recognizing and correcting this benchmark bias is vital for ensuring equitable and widespread technological progress.

For AI Researchers and Developers: Mandate Diverse Benchmarks

The industry must actively fund and prioritize the creation of robust, automated evaluation environments for non-coding tasks. This means investing in benchmarks that test interaction with SaaS platforms, complex document interpretation, multi-turn negotiation simulations, and adherence to regulatory standards. The goal must shift from "Can it write code?" to "Can it successfully run a small business unit?"

For Enterprises: Test Against Your Actual Workflow

Businesses should resist the urge to adopt agent technology based purely on abstract coding leaderboard scores. Instead, implement rigorous, internal testing frameworks that mirror the most complex 20% of your high-volume, non-technical work. If an agent fails your internal invoice processing test, its ability to write a novel Python library is irrelevant to your bottom line.
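An internal test of this kind can start very small. The sketch below scores an agent against a handful of workflow cases with known expected outputs. Everything here is hypothetical: `WorkflowCase`, `evaluate`, the invoice fields, and especially `toy_agent`, which is a trivial stand-in for whatever call your real agent exposes.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class WorkflowCase:
    name: str
    document: str              # raw input the agent receives
    expected: Dict[str, str]   # fields a correct result must contain


def evaluate(agent: Callable[[str], Dict[str, str]],
             cases: List[WorkflowCase]) -> Dict[str, bool]:
    """Run the agent on each case and record whether all expected fields match."""
    results = {}
    for case in cases:
        try:
            output = agent(case.document)
        except Exception:
            output = {}  # a crash is scored as a failed case
        results[case.name] = all(
            output.get(key) == value for key, value in case.expected.items()
        )
    return results


def toy_agent(document: str) -> Dict[str, str]:
    """Stand-in for a real agent: only understands 'Key: value' lines."""
    fields = {}
    for line in document.splitlines():
        if ":" in line:
            key, value = line.split(":", 1)
            fields[key.strip().lower()] = value.strip()
    return fields


CASES = [
    WorkflowCase("clean_invoice", "Vendor: Acme\nTotal: 120.00",
                 {"vendor": "Acme", "total": "120.00"}),
    WorkflowCase("messy_invoice", "Acme invoice, amount due 120.00",
                 {"vendor": "Acme", "total": "120.00"}),
]

results = evaluate(toy_agent, CASES)
print(results)  # {'clean_invoice': True, 'messy_invoice': False}
```

The stand-in agent handles the tidy invoice and fails the messy one, which is the kind of gap a coding leaderboard score would never surface. In practice the cases would be drawn from real, anonymized documents in your own workflow.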

For Policymakers and Educators: Prepare for the True Disruption

If the innovation pipeline remains focused on code, policy should pivot to focus on workforce retraining for the service and knowledge sectors *now*. Educational institutions must emphasize critical thinking, complex communication, and process management over rote technical skills that AI is rapidly commoditizing. We need to prepare workers for collaboration with agents designed for nuanced, ambiguous tasks, not just debugging them.

Conclusion: The Road Beyond the Terminal

The obsession with coding benchmarks is a reflection of engineering convenience, not market necessity. While coding prowess is a magnificent achievement, it represents a tiny fraction of where AI agents need to prove their worth to unlock the next stage of economic productivity. The future of truly transformative AI—the kind that touches the lives of most people daily—depends on our collective willingness to step away from the clean, deterministic terminal environment and embrace the chaos, complexity, and context of the other 92% of the human labor landscape.