The AI Military Revolution: Analyzing 3,000 Strikes and the Looming Oversight Crisis

The landscape of modern conflict is undergoing a seismic shift, moving from theoretical discussions of autonomous warfare to concrete, large-scale deployment. Recent confirmations regarding the U.S. military's campaign against Iran—involving the striking of approximately 3,000 targets with significant AI support—represent a watershed moment. This is not merely an incremental software update; it signals the operational maturity of sophisticated machine learning systems embedded deeply within intelligence, targeting, and logistics chains.

As AI analysts, our focus must pivot immediately to two critical areas: first, understanding the underlying technology that made this scale of operation possible, and second, confronting the glaring, acknowledged gap in ethical and technical oversight. The speed and volume of these actions test the very foundations of military accountability.

The Technical Backbone: From Theory to 3,000 Targets

Processing 3,000 targets efficiently, whether that means suggesting them, prioritizing them, or managing the logistics to strike them, requires an architecture far beyond simple scripting. This points to the maturity of specific, high-level Department of Defense (DoD) initiatives.

Deconstructing Project Maven and JADC2

The core capability enabling this scale likely stems from programs like Project Maven, which initially focused on using AI for computer vision to analyze drone and satellite imagery faster than human analysts. When scaled for conflict, this system moves from merely identifying objects to assessing threat levels, predicting patterns of life, and feeding those insights directly into the kill chain.

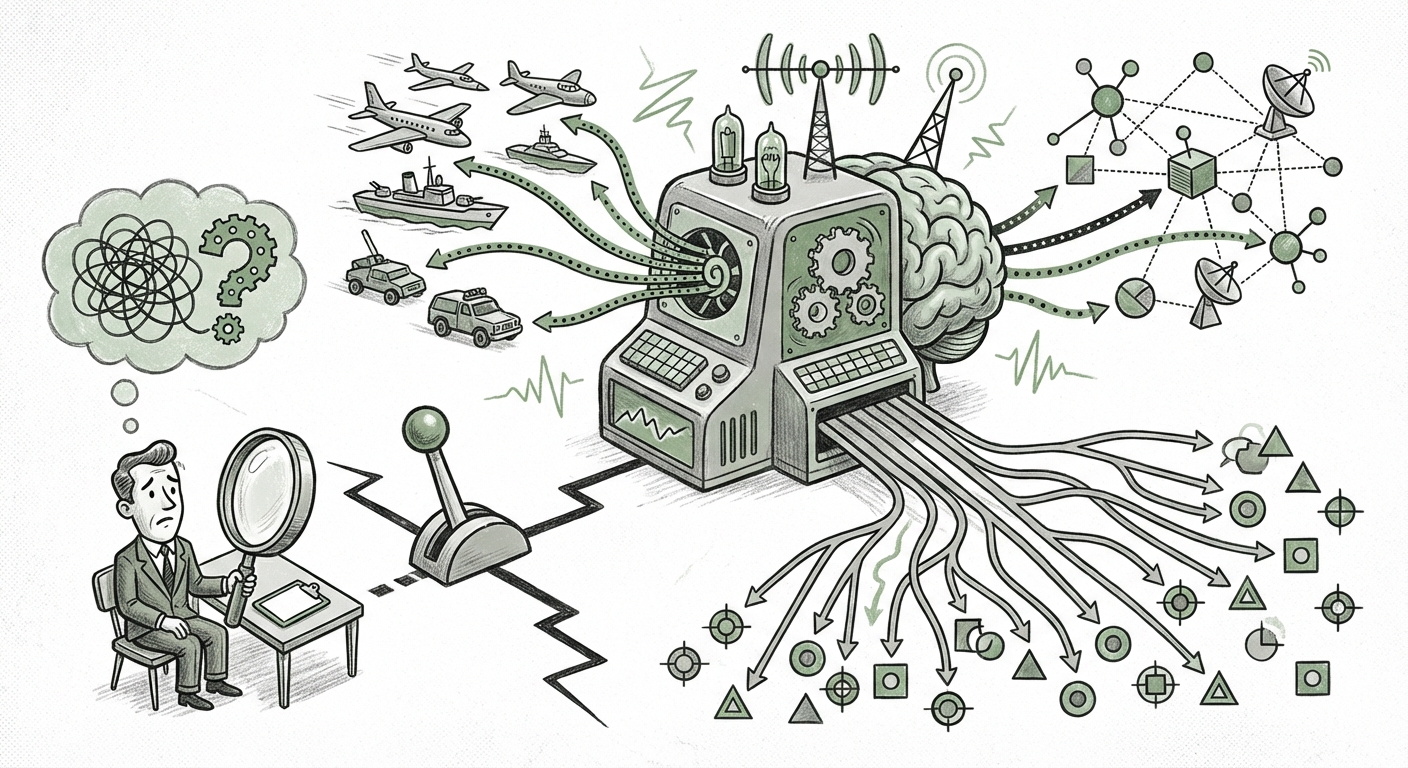

More importantly, this level of synchronized, massive action implies the functional reality of JADC2 (Joint All-Domain Command and Control). JADC2 is the DoD’s grand vision: a network that connects every sensor, shooter, and decision-maker across land, sea, air, space, and cyber domains. In this context, AI is the crucial connective tissue. It doesn’t just analyze data; it synthesizes millions of data points in seconds—weather reports, intelligence intercepts, logistics availability—to generate ready-to-execute mission packages.

For the business and technology sectors, this is profound. We are seeing the first true demonstrations of Algorithmic Warfare, where the speed of decision-making is dictated by computational efficiency, not human processing time. The AI is likely deployed across three main vectors, as suggested by corroborating defense analyses:

- Intelligence Synthesis: Using generative AI models (LLMs as well as other models that generate tactical summaries) to quickly turn raw sensor data into actionable intelligence briefs.

- Target Nomination and Prioritization: ML algorithms rapidly cycling through potential targets based on dynamic criteria (e.g., highest strategic value, lowest collateral risk profile).

- Logistics Optimization: Ensuring resources (munitions, medical support, maintenance) are optimally positioned for the rapid execution of thousands of discrete strikes.

This level of integration means that when a strike occurs, it is the culmination of a complex, automated chain of data processing. The automated stages of the "Observe, Orient, Decide, Act" (OODA) loop may complete in well under a second, which leaves human review as the slowest step in the cycle.

The Echo in the Machine: Generative AI’s Role

The mention of "generative AI" suggests an evolution beyond traditional narrow AI systems. While a targeting algorithm is predictive (it scores which candidate coordinates are best), generative AI excels at creating novel outputs based on learned patterns. In a military context, this might mean:

- Synthetic Environment Generation: Creating highly realistic simulations of operational environments to rapidly test mission plans before deployment.

- Natural Language Briefing: Transforming complex sensor fusion data into easy-to-digest, natural-language summaries for human commanders, reducing comprehension time.

- Countermeasure Drafting: Rapidly generating novel electronic warfare signatures or defensive tactics based on observed enemy responses.

This capability confirms a trend seen across the commercial sector: AI is moving from simple automation to complex *creation* and *synthesis*. The military is simply applying this capability to kinetic outcomes.

The Achilles' Heel: Underinvested Oversight

While the technical achievement is undeniable, the simultaneous acknowledgment that oversight remains "underinvested" is the most alarming takeaway for future technology governance. This gap between operational speed and regulatory pace creates systemic risk.

Policy Lag in the Age of Algorithmic Speed

Current ethical frameworks, such as DoD Directive 3000.09 (which addresses autonomy in weapon systems), were largely conceived before the widespread adoption of large, complex, and often opaque generative AI models. These older policies already struggled with semi-autonomous systems; they are wholly unprepared for systems where the decision-making process is dynamic and emergent.

When 3,000 targets are processed, the key oversight question becomes: Where did the "human in the loop" actually sit?

If AI suggests the target, prioritizes the timing, and loads the flight plan, the human commander might only provide a final "Go/No-Go" approval. If that human relies entirely on the AI’s summary—which may contain subtle biases from its training data or novel errors due to emergent behavior—the subsequent strike is only superficially human-directed. This is the core of the oversight crisis.
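One way to make the "superficially human-directed" concern measurable is to log how each approval was actually reached. The sketch below is a hypothetical illustration, not a description of any fielded system: the `ReviewRecord` fields, the 60-second floor, and the 50% evidence threshold are all assumptions chosen for the example. It simply flags approvals that were granted very quickly or without opening much of the evidence behind the AI's summary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewRecord:
    """Hypothetical log entry for one human Go/No-Go decision."""
    decision_id: str
    seconds_spent: float      # time between opening the item and deciding
    evidence_opened: int      # source items the reviewer actually viewed
    evidence_available: int   # source items behind the AI summary
    approved: bool


def is_superficial(record, min_seconds=60.0, min_evidence_fraction=0.5):
    """Return True when an approval looks like a rubber stamp.

    Both thresholds are illustrative assumptions, not established standards.
    Only approvals are scored; rejections are never flagged here.
    """
    if not record.approved:
        return False
    if record.evidence_available == 0:
        # Nothing to check the summary against, so the approval
        # rests entirely on the AI's output.
        return True
    fraction = record.evidence_opened / record.evidence_available
    return record.seconds_spent < min_seconds or fraction < min_evidence_fraction


def superficial_rate(records):
    """Share of approvals that were flagged as superficial."""
    approvals = [r for r in records if r.approved]
    if not approvals:
        return 0.0
    return sum(is_superficial(r) for r in approvals) / len(approvals)


# Made-up records for illustration only.
records = [
    ReviewRecord("d-001", seconds_spent=5.0, evidence_opened=0,
                 evidence_available=4, approved=True),
    ReviewRecord("d-002", seconds_spent=300.0, evidence_opened=3,
                 evidence_available=4, approved=True),
    ReviewRecord("d-003", seconds_spent=120.0, evidence_opened=4,
                 evidence_available=4, approved=False),
]
print(superficial_rate(records))  # 0.5
```

A metric like this does not prove that a review was meaningful, but a high superficial rate would be an early signal that the human in the loop is acting as a checkmark rather than a check.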

Auditing the Black Box

Auditing an AI system, especially a generative or reinforcement learning model, after a kinetic event is notoriously difficult. Defense analysts often refer to the "black box" problem. If a strike results in unintended collateral damage, investigators must trace causality back through thousands of lines of code, complex neural network weights, and vast datasets. If the generative model produced a novel misinterpretation, identifying the precise point of failure—and therefore assigning accountability—becomes nearly impossible.

This is where investment in governance is crucial. We need robust Explainable AI (XAI) tools specifically hardened for military applications, capable of providing a complete, immutable audit trail of every data point and weighting that contributed to a final decision, even if that decision was made in under a second.
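A minimal sketch of what a tamper-evident audit trail could look like follows. It is an assumption-laden illustration rather than any real defense or XAI tooling: the `AuditLog` class, its field names, and the sample payloads are hypothetical. Each entry stores the SHA-256 hash of the previous entry, so altering any earlier record breaks the chain on verification. Strictly speaking this makes edits detectable rather than impossible, and it records inputs and outputs without explaining the model's internal reasoning.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash, payload):
    """Hash the previous entry's hash together with this entry's payload."""
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained log (hypothetical example class)."""

    def __init__(self):
        self.entries = []

    def append(self, payload):
        """Add a JSON-serializable payload and return its chained hash."""
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry = {
            "prev": prev_hash,
            "payload": payload,
            "hash": _entry_hash(prev_hash, payload),
        }
        self.entries.append(entry)
        return entry["hash"]

    def verify(self):
        """Recompute every hash; return False if any entry was altered."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != _entry_hash(prev_hash, entry["payload"]):
                return False
            prev_hash = entry["hash"]
        return True


# Made-up payloads for illustration only.
log = AuditLog()
log.append({"step": "input", "source": "sensor-feed-A"})
log.append({"step": "model_output", "score": 0.91})
print(log.verify())  # True

log.entries[0]["payload"]["source"] = "edited"  # simulate tampering
print(log.verify())  # False
```

In practice the chain head would also need to be anchored somewhere the operator cannot rewrite, such as an independent auditor's store; otherwise the whole log could be regenerated after the fact.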

Future Implications: For Business, Society, and Stability

These developments are not siloed within defense departments; they are leading indicators for how AI will restructure decision-making across all high-stakes industries.

Implication 1: The Acceleration of All Decision Cycles

Businesses relying on complex forecasting, supply chain management, or high-frequency trading will inevitably adopt the same integration principles seen in JADC2. The competitive advantage shifts from having the best data to having the fastest, most reliable AI synthesis pipeline. Companies must begin auditing their own ML governance *now* before their business operations resemble a battlefield OODA loop.

Implication 2: The Erosion of Trust and the Need for "Verifiable AI"

When the public (or corporate stakeholders) cannot verify *why* an AI made a critical decision, trust collapses. The military context raises the stakes further, because the decisions in question are lethal and irreversible. In the commercial world, the same gap translates to regulatory risk. Future compliance mandates, particularly in finance, healthcare, and autonomous vehicles, will likely demand detailed provenance tracking that mirrors the XAI needed in defense.

Implication 3: Escalation Risks in Geopolitical AI

Perhaps the most severe implication is the risk of rapid, unintended escalation. If one state operationalizes AI to execute 3,000 actions rapidly, adversaries must respond in kind. This creates an environment where system speed, rather than careful diplomatic consideration, dictates response times. The lack of robust, internationally agreed-upon "off-ramps" or safety protocols for AI-driven kinetic exchanges is a terrifying prospect.

Actionable Insights for Navigating This New Era

For technology leaders, policymakers, and security professionals, the recent news serves as a five-alarm fire. Actionable steps must be taken to close the oversight gap.

For Technology Developers and Engineers:

Integrate governance into the design phase, not as an afterthought. Prioritize the development of **"Adversarial Robustness"** and **"Interpretability by Design."** If your model cannot clearly articulate *why* it chose Target A over Target B in a low-stakes simulation, it should never be deployed in a high-stakes, real-world scenario where accountability is paramount. Treat XAI tooling as critical infrastructure.

For Business Leaders and Executives:

Demand transparency from your AI vendors and internal teams. If you are implementing AI for high-impact decisions (e.g., large financial settlements, patient triage, or critical infrastructure management), your internal review boards must resemble, in rigor if not in scope, the DoD's operational readiness checks. If you can’t explain the failure, you can’t manage the risk.

For Policymakers and Regulators:

The current lag in policy is unacceptable. Oversight requires proactive investment. This means funding independent auditing bodies capable of understanding the technical nuance of generative models, and rapidly updating ethical directives (like DoD Directive 3000.09) to address system opacity and speed. The standard for human review must evolve from a simple checkmark to a meaningful, technically informed intervention point.

Conclusion: The Unavoidable Partnership

The reported deployment of AI support for thousands of military strikes solidifies one truth: the fusion of artificial intelligence and kinetic action is no longer a distant future concept—it is the present reality of modern defense. The technology is demonstrating capabilities that fundamentally alter the speed and scale of warfare, driving efficiency and overwhelming legacy command structures.

However, the narrative must not end with the technological achievement. The fact that the oversight mechanisms are lagging—that the governance frameworks are "underinvested"—presents the greatest threat. As powerful generative models become standard tools for intelligence and targeting, the ethical and legal guardrails must advance at the same breathtaking pace. The future of AI is here, but whether it leads to stability or chaos hinges entirely on our willingness to invest as heavily in wisdom and accountability as we do in computational power.

Corroborating context drawn from analysis of initial reports and analogous defense technology initiatives (e.g., Project Maven, JADC2).