The AI Benchmark Blind Spot: Why Coding Dominance Ignores 92% of the Real World

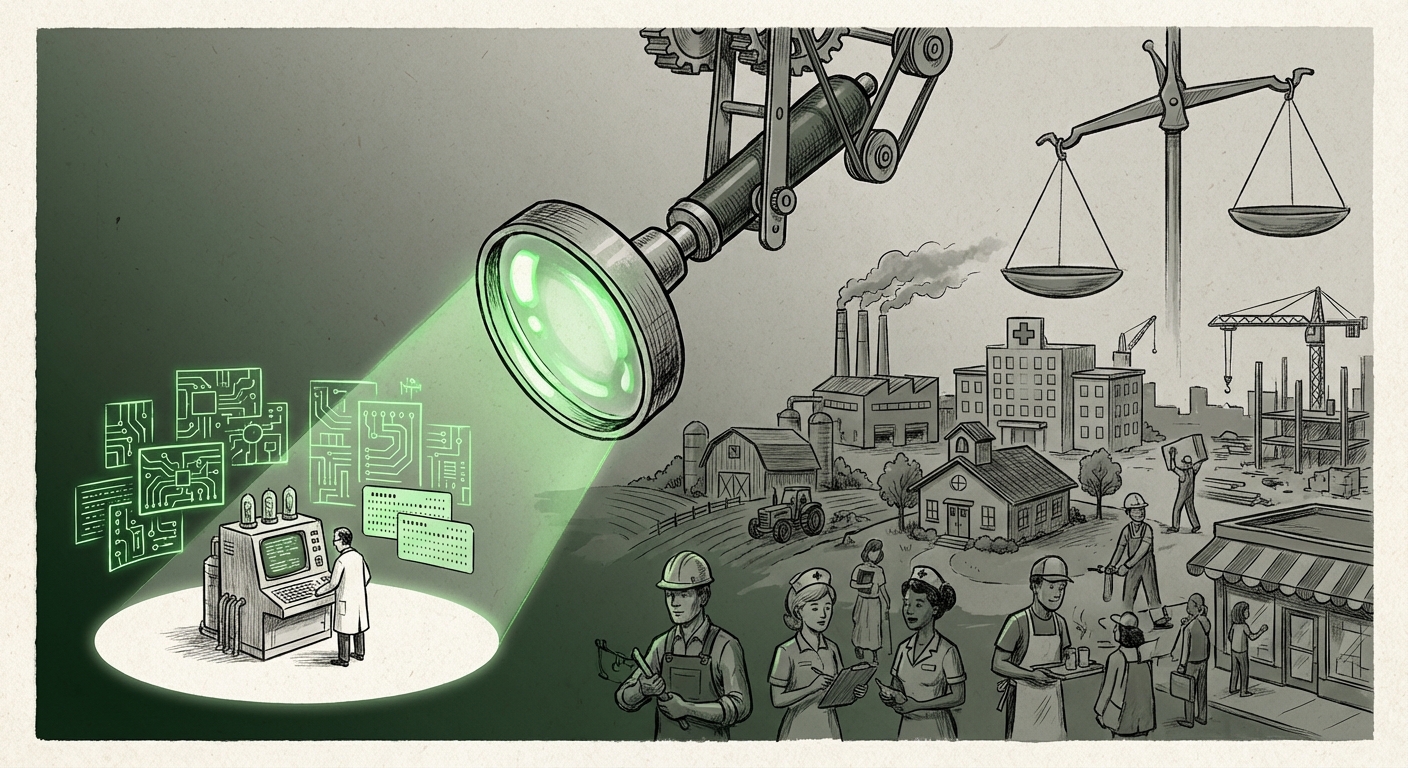

The explosion of Artificial Intelligence agents—autonomous programs designed to execute complex tasks—has been exhilarating. We see agents writing complex software, managing cloud infrastructure, and even composing music. Yet, beneath this flurry of innovation lies a critical, structural flaw in how we are measuring progress. A recent study highlights a major disconnect: current AI agent benchmarks are overwhelmingly obsessed with coding, effectively ignoring the vast majority (92%) of the U.S. labor market.

As an AI technology analyst, I see this imbalance as more than an academic curiosity: it represents a significant misalignment between current R&D priorities and real-world utility. If AI development continues to optimize only for the easiest-to-measure tasks, we risk creating a powerful technology that optimizes a small slice of the economy while leaving the majority untouched or, worse, misunderstood.

The Siren Song of Verifiability: Why Coders Rule the Benchmarks

Why has coding become the default metric for advanced AI agents? The answer lies in the nature of software development itself: verifiability.

Imagine testing two agents. Agent A is asked to write a function to sort a list of names. This task has a clear outcome: the output is either correctly sorted or it is not, and an automated test can tell the difference in milliseconds. Agent B is asked to "handle a difficult customer complaint, de-escalating the situation while ensuring customer retention." The success of Agent B is subjective, requiring nuanced judgment, emotional intelligence, and adherence to vague corporate policy.
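To make the asymmetry concrete, here is a minimal sketch of how Agent A's task could be graded. The function `agent_sort_names` is a hypothetical stand-in for whatever code the agent produces, and the test cases are illustrative assumptions, not taken from any real benchmark.

```python
def agent_sort_names(names):
    """Hypothetical stand-in for code produced by Agent A."""
    return sorted(names, key=str.casefold)


def passes_all_checks(candidate):
    """Return True only if the candidate sorts every test case correctly."""
    cases = [
        ([], []),
        (["Bob"], ["Bob"]),
        (["carol", "Alice", "bob"], ["Alice", "bob", "carol"]),
    ]
    try:
        return all(candidate(list(inp)) == expected for inp, expected in cases)
    except Exception:
        # A crash counts as a failure, just like a wrong answer.
        return False


print(passes_all_checks(agent_sort_names))  # prints True
```

Nothing comparable exists for Agent B: there is no list of expected outputs that a de-escalated complaint can be compared against, which is exactly why such tasks are harder to benchmark.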

In the high-stakes world of AI research, where publication and funding depend on demonstrable progress, researchers gravitate toward tasks with clean, binary results. Studies confirm this trend, and there is a technical rationale for the bias. Coding tasks, often drawn from standardized datasets such as HumanEval or MBPP, offer reliable, repeatable, and *quantifiable* metrics. This aligns perfectly with the current limitations of Large Language Models (LLMs).
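One example of such a quantifiable metric is pass@k, the score usually reported alongside HumanEval: generate n candidate solutions per problem, count the c that pass the unit tests, and estimate the chance that at least one of k randomly drawn candidates passes. The sketch below implements the standard combinatorial estimator, 1 - C(n-c, k) / C(n, k); the function name and argument checks are my own choices.

```python
from math import comb


def pass_at_k(n, c, k):
    """Estimate the probability that at least one of k samples passes,
    given that c of the n generated samples passed the unit tests."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Fewer than k failing samples: any draw of k must include a pass.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


# 3 of 10 samples passed; estimate the single-attempt success rate.
print(round(pass_at_k(n=10, c=3, k=1), 3))
```

With 3 passing samples out of 10, `pass_at_k(10, 3, 1)` comes to roughly 0.3. The whole calculation rests on a binary pass/fail signal per sample, which is what most non-coding tasks lack.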

The Technical Constraint: Reasoning vs. Reliability

This preference isn't purely arbitrary; it is rooted in current LLM capabilities. Tasks requiring high factual accuracy and consistent complex reasoning, areas where models often hallucinate, are avoided in formal benchmarks. Coding, while complex, benefits from the deterministic nature of logic and syntax. If an agent can reliably generate correct Python, researchers can claim a significant step toward general intelligence.

However, this focus provides a distorted view of general capability. A model that passes 90% of coding tests might still fail catastrophically when asked to process a dense, unstructured legal document or manage a dynamic inventory system. The benchmark prioritizes technical dexterity over contextual wisdom.

The Neglected 92%: Beyond the Terminal Window

The 92% represents the bulk of the American economy: retail, healthcare administration, logistics, education, customer service, and skilled trades. These sectors rely on tasks characterized by high degrees of ambiguity, physical interaction, and emotional context. These are the areas where LLMs, even as agents, face their greatest challenges.

Challenges in Service and Administrative Tasks

When we investigate what researchers *are* testing outside of code, we find pockets of effort in administrative automation or simple data extraction. However, these often fall short of true agentic behavior. For example, an agent designed for administrative tasks might accurately schedule a meeting, but fail when that meeting requires rescheduling due to three conflicting priorities, a sensitive personnel issue, and an unexpected time zone change.

Similarly, in fields like **healthcare**, agents are tested on recognizing patterns in radiology scans (a narrow, image-based task), but rarely on tasks that mimic front-line nursing coordination or insurance claim negotiation—tasks that require navigating complex regulatory language while maintaining patient empathy.

The potential labor market impact here is massive, but the development path is being skewed. If AI systems are only rigorously tested on what they can *do* in a sterile digital environment, we are building tools ill-equipped for the messy reality of human operations.

The Roadmap Gap: Why We Need New Metrics

To address this 92% gap, the AI community must fundamentally change how it measures success. Relying on existing, code-centric metrics will only perpetuate the bias. We require a new generation of benchmarks that reflect the complexity of the non-coding world.

1. The Rise of Qualitative and Mixed-Method Benchmarks

We need benchmarks that incorporate qualitative feedback loops. Instead of just checking if a task was completed, we must ask: *How well was it done?* Did the agent adhere to the *spirit* of the policy? Did it optimize for speed or for human satisfaction?

This leads to the need for general intelligence metrics that move beyond simple accuracy scores. Researchers must develop frameworks that evaluate:

- Contextual Depth: The ability to recall and apply historical context across multiple interactions.

- Ethical and Policy Adherence: Successfully navigating internal company guidelines, which are often nuanced and contradictory.

- Error Recovery: How gracefully the agent handles unexpected inputs or outright failures, a critical feature for real-world reliability outside of deterministic code execution.
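As a rough illustration of what a mixed-method score could look like, the sketch below reports a binary completion check alongside a weighted average of human ratings on the three criteria above. Everything here is an assumption made for illustration: the `Episode` structure, the 1 to 5 rating scale, and the weights, which a real benchmark would need to set with domain experts.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical weights; a real benchmark would set these with domain experts.
WEIGHTS = {
    "contextual_depth": 0.4,
    "policy_adherence": 0.4,
    "error_recovery": 0.2,
}


@dataclass
class Episode:
    completed: bool  # binary, automatically checkable outcome
    ratings: dict    # criterion name -> list of human ratings on a 1-5 scale


def score_episode(ep):
    """Report task completion and a 0-1 quality score side by side."""
    quality = 0.0
    for criterion, weight in WEIGHTS.items():
        avg = mean(ep.ratings[criterion])   # average across human raters
        quality += weight * (avg - 1) / 4   # rescale 1-5 onto 0-1
    return {"completed": ep.completed, "quality": quality}


episode = Episode(
    completed=True,
    ratings={
        "contextual_depth": [5, 5],
        "policy_adherence": [3, 3],
        "error_recovery": [1, 1],
    },
)
print(score_episode(episode))
```

Keeping completion and quality as separate fields is a deliberate choice in this sketch: an agent that finishes the task while violating the spirit of a policy shows up as completed with a low quality score, rather than being hidden inside a single number.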

2. Focusing on Sector-Specific "Agent Sandboxes"

Instead of a single universal benchmark, future progress may come from sector-specific "agent sandboxes." For instance, a Legal Agent Benchmark (LAB) might focus on document synthesis and relevance ranking under time pressure, judged by practicing attorneys, not just computer scientists. This approach forces developers to build tools tailored to industry friction points, not just digital elegance.

This aligns with observations that specialized applications, even in niche areas like **legal discovery**, are seeing slower but more trustworthy adoption precisely because the evaluation standards are tailored to that domain's high stakes.

Implications for Business and Society: A Bifurcated Future?

The current trajectory—heavy optimization for coding, light testing everywhere else—carries serious economic and societal implications. We risk a bifurcated impact of AI:

- Hyper-Productivity in Tech: Software engineering and related digital creation roles see massive, rapid productivity gains, potentially leading to consolidation or the displacement of junior developers.

- Stagnation Elsewhere: Sectors that drive most employment (retail, education, elder care) see minimal transformative change, leading to uneven economic growth and a widening skills gap where only the digitally fluent truly benefit from the new wave of intelligence.

Businesses must recognize this danger. Investing in AI tools that solve only a small fraction of your operational complexity is poor strategy. Leaders in manufacturing, healthcare, and finance need to pressure AI vendors not just for demonstration videos, but for verifiable proof of agent performance in unstructured, high-variability environments. If the vendor only has code samples, your organization is likely to be under-served.

Actionable Insights for Moving Forward

How do we pivot development focus toward the true needs of the market?

For AI Researchers and Developers:

Embrace Ambiguity: Start building benchmarks for subjective tasks. Partner with non-technical domain experts (HR managers, nurses, logistics coordinators) to design failure modes that matter. Prioritize robust error handling over achieving near-perfect accuracy on simplified problems. Stop measuring progress primarily by GitHub commits and start measuring it by reliable workflow completion.

For Business Leaders and Investors:

Demand Cross-Sector Benchmarks: When evaluating AI platforms, ask pointed questions about their agents' performance in administrative, coordination, or customer-facing simulation environments. If an agent excels only at generating clean YAML files, its ROI outside of IT departments is questionable. Look for investment signals in companies developing agents for logistics, supply chain coordination, or personalized tutoring, all areas that clearly demonstrate applicability beyond the IDE.

For Policymakers and Educators:

Redefine Digital Literacy: As AI becomes more powerful, the skills gap widens between those who *build* the AI (coders) and those whose jobs are *affected* by the AI (everyone else). Education systems must pivot to emphasize critical thinking, complex communication, and human-agent collaboration, preparing the workforce for augmentation in nuanced roles, rather than just expecting replacement.

The coding obsession is a temporary comfort zone—a reflection of what is currently easiest to test. The true measure of transformative AI will not be found in the elegance of a compiled program, but in its ability to reliably and wisely navigate the complexity of the other 92% of human endeavor. Ignoring this reality means we are building incredibly sophisticated tools that only fit the smallest lock in the world.