AI Brain Fry: Why Cognitive Overload is the Next Big Bottleneck in Automation

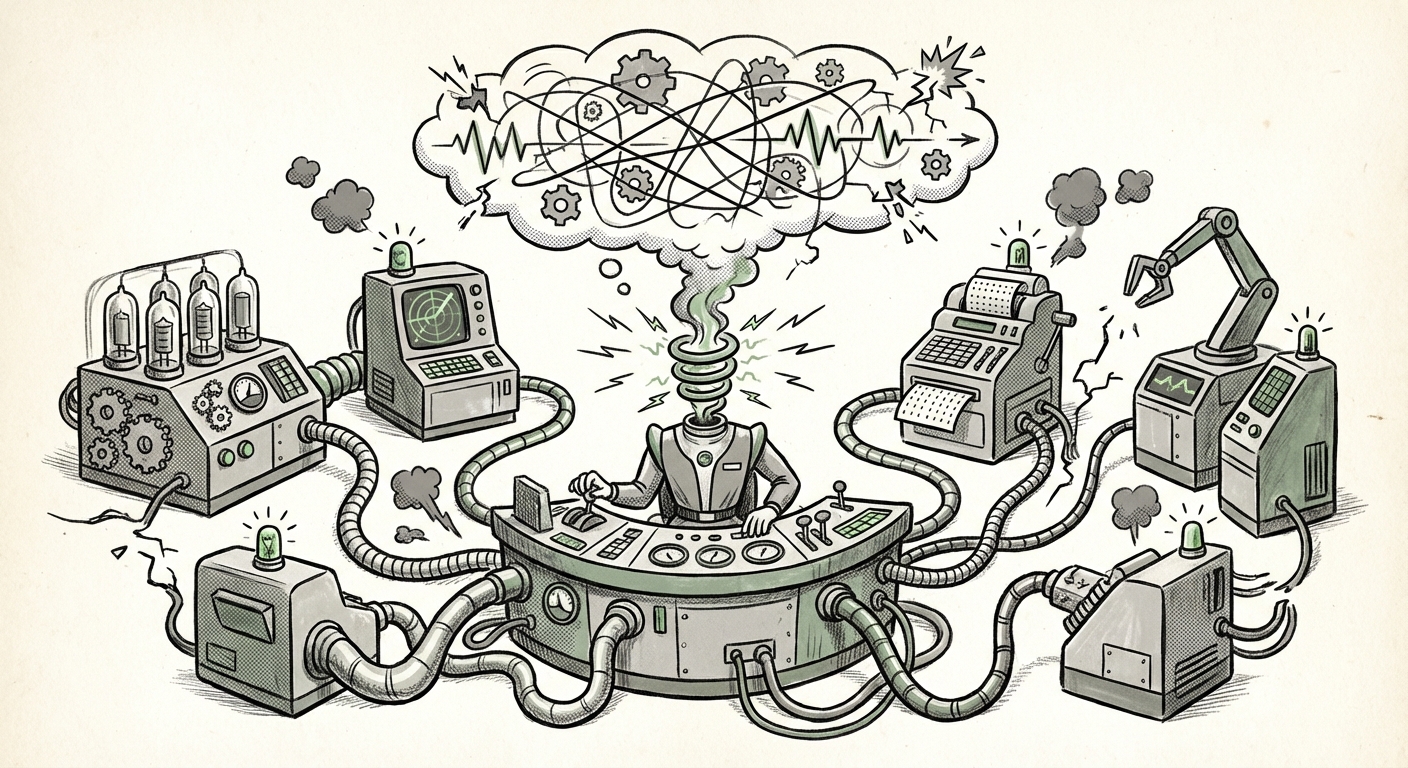

The promise of Artificial Intelligence in the workplace is seductive: agents that handle tedious tasks, assistants that draft complex reports, and systems that make our jobs faster and easier. Yet, a critical finding from a recent BCG study involving nearly 1,500 workers suggests we are hitting a severe, human-imposed speed bump. Workers are suffering from what we can now term "AI Brain Fry"—a measurable cognitive exhaustion caused by simultaneously overseeing too many AI tools.

As an AI Technology Analyst, I see this development not as a failure of the technology itself but as a design flaw in how we are implementing it. We have focused intensely on *AI deployment*; the next phase depends on Human-AI teaming optimization.

The Core Problem: Monitoring Multiplied Workloads

Imagine being a manager overseeing five employees. Now, imagine that each of those employees is an AI agent, generating work that requires your sign-off, validation, or course correction. You aren't just managing five outputs; you are managing five potentially autonomous processes running concurrently. This is the reality for many early adopters.

The BCG study links this overload to tangible negative outcomes: higher error rates and a greater intent among workers to quit. This isn't just about feeling tired; it's about system breakdown. The human is the safety net responsible for catching the AI's inevitable mistakes, and when that person is cognitively fried, the combined system can become less reliable than if the human had done the job alone.

The Mechanics of Brain Fry: Cognitive Load vs. Attention

Why does this happen? The human brain has finite resources for sustained, high-intensity attention. We handle short bursts of focused work well but are poor at *vigilance*, the ability to stay alert over long periods, especially when the monitored system is usually correct.

When AI performs well (say, 90% accuracy), the supervisor's brain naturally drifts into a low-alert state. This is closely related to the well-documented phenomenon of Automation Bias: we trust the machine until it fails. The problem is that AI failures are often subtle or catastrophic, and diagnosing them when they finally occur demands intense cognitive effort. A worker juggling three such streams cannot keep any single stream under the high-alert scrutiny it needs.

Related fields such as aviation and autonomous driving, where monitoring complex automated systems has long been studied, offer corroborating evidence. Research on the "cognitive overhead" of supervising multiple AI agents points the same way: adding tools does not automatically add productivity, because every additional stream adds to the mental cost of switching between tasks.

The Autonomy Paradox and the Last Mile Problem

This situation creates what can be called the "Autonomy Paradox." We adopt AI to gain autonomy and reduce drudgery, but instead we take on a new, high-stress form of oversight. Our autonomy is replaced by responsibility for the AI's performance.

This connects directly to what system architects call the "last mile" problem in AI workflows. The "last mile" is the final step before deployment: the human validation point. If the AI performs 99 complex steps but the human must spend critical time re-validating step 85, much of the efficiency gain is lost. A worker overseeing ten AI processes is effectively running ten "last miles" at once and cannot mentally reconstruct the internal logic of each AI well enough to spot errors efficiently.

This challenge is discussed in work on Human-in-the-Loop (HITL) architecture fatigue. That work describes how, when the AI's output is too opaque or arrives too fast, the human is forced into an impossible position: either rubber-stamp the output (inviting errors) or slow the process down by micromanaging it (defeating the purpose of the AI).

The HR Crisis: Technostress and Attrition

The finding that workers report an increased "intent to quit" is perhaps the most financially salient consequence. This is a clear marker of Technostress and digital burnout.

Modern workplaces often demand proficiency in multiple communication platforms, project management suites, and now, multiple specialized AI assistants. When AI is implemented without a strategy for consolidation, it simply adds *another layer* of mandatory monitoring atop the existing digital clutter. For employees, this is not modernization; it is intensification. Organizations must recognize that poorly integrated AI is an amplifier of existing workload stress.

The Pivot: From Deployment to Optimization

The evidence is clear: raw AI deployment volume does not guarantee ROI. The next wave of successful AI integration will be defined by quality of partnership, not quantity of tools. This demands a strategic pivot for technology leaders, HR departments, and system architects alike.

1. Strategic Consolidation Over Proliferation (For Architects)

System designers must stop deploying isolated AI agents for every niche task. The future lies in creating Intelligent Orchestration Layers. These layers act as a highly curated interface between the human supervisor and the army of AI agents.

Instead of presenting ten different dashboards for ten agents, the orchestration layer should surface only the one or two most critical points where human judgment is indispensable. It should aggregate decisions, summarize risks, and provide a unified "status report" on the collective work of the AI team, dramatically reducing the cognitive switching cost.
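To make the orchestration idea more concrete, here is a minimal Python sketch of the aggregation step: many agents' work items go in, and one report comes out that escalates only the top few items by risk. The `AgentItem` schema, the `build_status_report` function, and the per-item `risk` score are all hypothetical. The article does not specify how risk is computed, so the sketch simply assumes each agent (or a separate scoring step) supplies a number between 0 and 1.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class AgentItem:
    """One unit of work produced by an AI agent (hypothetical schema)."""
    agent: str      # name of the agent that produced the item
    summary: str    # one-line description of the work
    risk: float     # assumed risk score in [0, 1]; higher means riskier


def build_status_report(items: list[AgentItem], max_escalations: int = 2) -> dict:
    """Aggregate all agents' work into one report and escalate only the
    few riskiest items for human judgment."""
    # One overview of volume per agent instead of one dashboard per agent.
    items_per_agent = Counter(item.agent for item in items)
    # Rank all items by risk, highest first, and keep only the top few.
    ranked = sorted(items, key=lambda item: item.risk, reverse=True)
    escalations = ranked[:max_escalations]
    return {
        "total_items": len(items),
        "items_per_agent": dict(items_per_agent),
        "escalations": [(i.agent, i.summary, i.risk) for i in escalations],
        "not_escalated": len(items) - len(escalations),
    }


items = [
    AgentItem("billing", "Invoice batch drafted", 0.10),
    AgentItem("legal", "Contract clause rewritten", 0.80),
    AgentItem("support", "Refund approved", 0.65),
    AgentItem("billing", "Duplicate charge flagged", 0.30),
]
report = build_status_report(items)
print(report["escalations"])
# [('legal', 'Contract clause rewritten', 0.8), ('support', 'Refund approved', 0.65)]
```

A fixed cap on escalations keeps the supervisor's review load bounded no matter how many agents are running. A production design would likely also need a floor rule, so that any item above some risk level is always escalated even when it falls outside the top few.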

2. Redefining Human Roles (For HR and Operations)

If humans are responsible for validating complex AI outputs, their training must change. They need to transition from *operators* to *auditors* or *exception handlers*. This requires deep training not just in using the AI, but in understanding the AI’s failure modes and confidence metrics.

Furthermore, businesses must track metrics related to cognitive load, not just time-to-completion. Metrics like "Average Validation Time per Agent" or "Number of Concurrent Agent Streams Supervised" should become KPIs for team health.
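The two KPIs named above are not formally defined in the article, so the following Python sketch shows one possible way to compute them from a review log. Everything here is an assumption for illustration: the `ValidationEvent` log schema, the function names, and especially the definition of "concurrent streams," which is approximated as the largest number of distinct agents a supervisor reviewed within any one fixed time window (60 minutes by default).

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class ValidationEvent:
    """One human review of one AI output (hypothetical log schema)."""
    supervisor: str
    agent: str
    start_min: int      # minutes since start of shift when the review began
    duration_s: float   # seconds spent validating this output


def avg_validation_time_per_agent(events: list[ValidationEvent]) -> dict:
    """Mean seconds of human validation spent on each agent's output."""
    by_agent = defaultdict(list)
    for e in events:
        by_agent[e.agent].append(e.duration_s)
    return {agent: mean(times) for agent, times in by_agent.items()}


def peak_concurrent_streams(events: list[ValidationEvent], window_min: int = 60) -> dict:
    """For each supervisor, the largest number of distinct agents reviewed
    within any single fixed window (an assumed proxy for concurrency)."""
    agents_in_window = defaultdict(set)  # (supervisor, window index) -> agents
    for e in events:
        agents_in_window[(e.supervisor, e.start_min // window_min)].add(e.agent)
    peak = defaultdict(int)
    for (supervisor, _), agents in agents_in_window.items():
        peak[supervisor] = max(peak[supervisor], len(agents))
    return dict(peak)


log = [
    ValidationEvent("dana", "billing", 5, 40.0),
    ValidationEvent("dana", "legal", 12, 180.0),
    ValidationEvent("dana", "support", 30, 60.0),
    ValidationEvent("dana", "billing", 75, 50.0),
    ValidationEvent("lee", "support", 10, 90.0),
]
print(avg_validation_time_per_agent(log))
# {'billing': 45.0, 'legal': 180.0, 'support': 75.0}
print(peak_concurrent_streams(log))
# {'dana': 3, 'lee': 1}
```

Fixed windows are the simplest choice, but they can split a burst of activity across a window boundary; a sliding window or an interval-overlap count would be a reasonable refinement if the log records review end times.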

3. The Necessity of AI Transparency (For Trust and Vigilance)

To combat Automation Bias fueled by fatigue, AI systems need to communicate their certainty clearly. A worker overseeing multiple agents needs immediate visual feedback: "Agent A is 99% confident, proceed," versus "Agent B detected a rare edge case, confidence 55%—requires review."

This kind of transparency, often grouped under the umbrella of Explainable AI (XAI), is crucial for maintaining the vigilance needed to catch errors, even when the supervisor is experiencing low-level burnout.
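The status lines quoted above can be produced by a very small triage rule. The Python sketch below assumes each agent reports a confidence value and can flag an edge case. The `AgentOutput` type, the `triage` function, and the 0.90 review threshold are hypothetical choices for illustration, not values drawn from the BCG study.

```python
from dataclasses import dataclass


@dataclass
class AgentOutput:
    """An agent's result plus the signals used for triage (hypothetical)."""
    agent: str
    confidence: float        # assumed calibrated probability in [0, 1]
    edge_case: bool = False  # assumed flag the agent raises on unusual inputs


def triage(output: AgentOutput, review_below: float = 0.90) -> str:
    """Map an output to a one-line status a supervisor can scan quickly.
    The 0.90 threshold is an illustrative assumption, not a recommendation."""
    pct = round(output.confidence * 100)
    # Edge cases go to a human even when the reported confidence is high.
    if output.edge_case or output.confidence < review_below:
        reason = "detected a rare edge case, " if output.edge_case else "has "
        return f"Agent {output.agent} {reason}confidence {pct}%: requires review"
    return f"Agent {output.agent} is {pct}% confident, proceed"


print(triage(AgentOutput("A", 0.99)))
# Agent A is 99% confident, proceed
print(triage(AgentOutput("B", 0.55, edge_case=True)))
# Agent B detected a rare edge case, confidence 55%: requires review
```

A display like this is only as trustworthy as the numbers behind it. If an agent's confidence scores are poorly calibrated, a "99%" label can itself feed Automation Bias rather than counter it.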

Future Implications: The Rise of the AI Team Manager

The "AI Brain Fry" phenomenon signals the maturation of AI integration. We are moving past the novelty phase and into the hard reality of operational sustainability.

In the near future, the most valuable employees won't be those who can use the most tools, but those who can effectively manage the *relationship* between human teams and AI teams. This new role—the AI Team Manager—will require skills bridging data science literacy, psychological insight into attention management, and mastery of workflow architecture.

If companies fail to address cognitive load now, the productivity gains promised by AI will stall. We will find ourselves in a state of pervasive, low-level digital exhaustion, where employees are constantly on guard but rarely effective, leading to high turnover and poor quality control.

The technology itself is incredibly powerful, but its power must be mediated by human capacity. The conversation must shift from "How fast can we deploy AI?" to "How effectively can our people partner with the AI we deploy?" Only by respecting the limits of human attention can we unlock the true potential of machine intelligence.