Beyond the Hype: Why AI Brain Fry Signals the End of Human Oversight and the Rise of True AI Orchestration

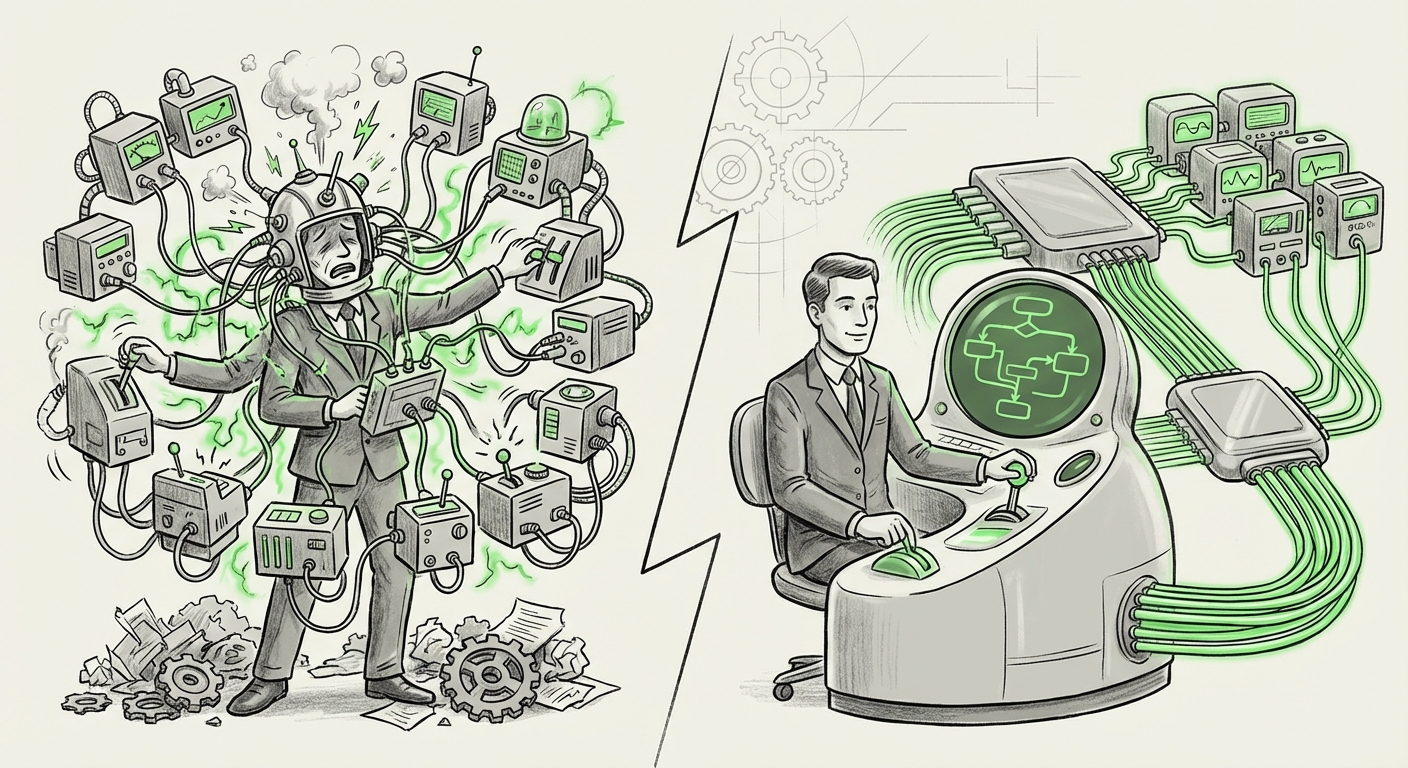

The initial rush to integrate Generative AI into every workflow has yielded exciting, often miraculous, productivity boosts. We replaced simple tasks with intelligent assistants, believing we were simply adding digital helpers. However, a critical new finding from a recent BCG study, warning of "AI Brain Fry," signals a major pivot point in AI adoption. This phenomenon—cognitive exhaustion caused by workers having to constantly monitor too many AI outputs—reveals that our current model of human-AI collaboration is fundamentally unsustainable at scale.

As an AI technology analyst, I see this not as a failure of the technology, but as a failure of the interface and the architecture we have imposed upon it. The next phase of AI integration will not be about capability improvement; it will be defined by **interface design, cognitive load management, and robust organizational scaling.** We are hitting the limits of human attention when tasked with being the final, tireless quality checker for dozens of semi-autonomous agents.

The Discovery: When More AI Equals Less Productivity

The core message from the study is stark: piling on AI tools does not automatically equate to linear productivity gains. When workers are forced to juggle oversight for numerous distinct AI tools—one for drafting emails, one for data analysis, one for scheduling, and so on—the cognitive switching cost becomes prohibitive. The human brain, despite its plasticity, has a finite capacity for vigilance and context switching.

This isn't just anecdotal fatigue; the consequences are measurable: higher error rates (because vigilance drops) and, crucially, increased intent to quit (because the job feels like perpetual babysitting rather than value creation). We have inadvertently created a new form of management burden.

Corroboration: Why the Brain Can’t Keep Up

To understand why this "Brain Fry" is inevitable under current paradigms, we must look at the science of automation. Our extended research framework confirms this bottleneck from three crucial angles:

- The Cognitive Science Foundation (Supervisory Control): The issue touches on core principles of human-automation teaming. When automation is introduced, humans shift from being the *doer* to being the *supervisor*. However, sustained, low-stakes supervision is mentally taxing. Human-factors research describes a "monitoring paradox," closely related to what that literature calls the "ironies of automation": the more reliably automation works (which is most of the time), the more human attention lapses, leaving us less prepared when it eventually fails or produces an error. When everything looks "mostly right," confirming that it really is right takes sustained effort for little visible reward, and that effort drains our mental batteries quickly.

- The Enterprise Reality (Tool Sprawl): Business leaders are implementing AI wherever a quick win is visible. This has led to massive SaaS sprawl, now compounded by specialized AI agents. Instead of one integrated workflow, an employee might use five different tools, each demanding different input formats and output checks. This complexity sharply increases agent-monitoring overhead, which can neutralize the productivity gains and calls the promised ROI of AI tools into question once the uncounted human overhead is included.

- The Psychological Toll (Algorithmic Stress): The phenomenon strongly correlates with literature on algorithmic management. Whether it is a warehouse worker being timed by an algorithm or an office worker constantly checking an AI’s generated report, the feeling of being perpetually monitored or having to justify the machine’s work creates profound stress and a sense of lost autonomy. This directly feeds into the HR nightmare of increased burnout and attrition mentioned in the initial study.

The Implication: The Need for Abstraction, Not Just Automation

If the problem is too many interfaces requiring too much low-level attention, the technological answer cannot be simply "better AI models." The answer must be better architecture—a system that manages the AI assistants so the human doesn't have to.

This brings us to the most forward-looking trend: the shift toward AI Orchestration Layers and Ambient Intelligence.

The Future of Work: From Supervisor to Strategic Editor

The goal must be to evolve the human role from the tedious "QA checker" back into the strategic contributor. This is achieved when AI agents are managed by a higher intelligence.

Imagine a complex business task, like launching a new marketing campaign. Today, a manager might spread the work across five different GenAI tools, covering tasks such as generating ad copy, analyzing competitor spending, scheduling outreach, and drafting initial emails. The manager must then check all five outputs.

In the future model, the manager interacts with a single "AI Manager Agent" or an "Orchestration Layer." This layer communicates with the five specialized agents, synthesizes their outputs, flags only the critical decisions or major variances requiring human judgment, and presents a single, actionable summary.

This transition from constant review to strategic review is profound. It is the practical goal behind autonomous agent workflows: when AI systems can manage and correct other AI systems without constant human intervention, we unlock true scalability.
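To make the orchestration idea concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a reference design: the names `AgentResult`, `Summary`, `orchestrate`, and `make_stub` are hypothetical, the agents are stubs, and the idea that each agent reports a confidence score the layer can threshold on is an assumption of this sketch rather than something the study describes.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class AgentResult:
    agent: str         # name of the specialized agent (hypothetical)
    output: str        # the agent's draft output
    confidence: float  # self-reported score in [0, 1]; an assumption of this sketch


@dataclass
class Summary:
    completed: list[str]             # agents whose output was accepted automatically
    needs_review: list[AgentResult]  # results escalated to the human

    def headline(self) -> str:
        total = len(self.completed) + len(self.needs_review)
        return (f"{len(self.completed)} of {total} outputs accepted; "
                f"{len(self.needs_review)} need your decision")


def orchestrate(task: str,
                agents: dict[str, Callable[[str], AgentResult]],
                review_threshold: float = 0.8) -> Summary:
    """Run every agent, accept confident results, escalate the rest."""
    completed: list[str] = []
    needs_review: list[AgentResult] = []
    for name, run in agents.items():
        result = run(task)
        if result.confidence >= review_threshold:
            completed.append(name)
        else:
            needs_review.append(result)
    return Summary(completed, needs_review)


def make_stub(name: str, confidence: float) -> Callable[[str], AgentResult]:
    """Stand-in for a real agent call; returns a canned result."""
    def run(task: str) -> AgentResult:
        return AgentResult(name, f"{name} draft for {task!r}", confidence)
    return run


agents = {
    "ad_copy": make_stub("ad_copy", 0.95),
    "competitor_spend": make_stub("competitor_spend", 0.55),
    "scheduling": make_stub("scheduling", 0.90),
}
summary = orchestrate("spring campaign", agents)
print(summary.headline())  # 2 of 3 outputs accepted; 1 need your decision
print([r.agent for r in summary.needs_review])  # ['competitor_spend']
```

The point of the design is that the manager reads one headline and opens only the escalated item. How escalation should really be decided (confidence, cost, policy rules) is the hard part and is left open here.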

For AI architects, this means prioritizing platforms that treat individual AI tools as interchangeable microservices, all governed by robust error-handling and context-aware protocols. This reduces the cognitive burden because the worker is no longer responsible for the *operation* of the tools, only the *direction* and *final validation* of the outcome.
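One way to picture "interchangeable microservices with robust error handling" is a shared interface plus a fallback chain. The sketch below is hypothetical throughout: `Agent`, `FlakyDrafter`, `BackupDrafter`, and `run_with_fallback` are illustrative names, and the failure is simulated rather than taken from any real service.

```python
from typing import Protocol


class Agent(Protocol):
    """Anything with a name and a run() method can be swapped in."""
    name: str

    def run(self, task: str) -> str: ...


class FlakyDrafter:
    name = "primary_drafter"

    def run(self, task: str) -> str:
        # Simulated outage, for illustration only.
        raise TimeoutError("primary drafter did not respond")


class BackupDrafter:
    name = "backup_drafter"

    def run(self, task: str) -> str:
        return f"draft for {task}"


def run_with_fallback(task: str,
                      candidates: list[Agent]) -> tuple[str, str, list[str]]:
    """Try each agent in order; return (agent used, output, errors seen)."""
    errors: list[str] = []
    for agent in candidates:
        try:
            return agent.name, agent.run(task), errors
        except Exception as exc:
            # Record the failure for the audit trail, then try the next agent.
            errors.append(f"{agent.name}: {exc}")
    raise RuntimeError("all agents failed: " + "; ".join(errors))


used, output, errors = run_with_fallback(
    "launch email", [FlakyDrafter(), BackupDrafter()]
)
print(used)    # backup_drafter
print(errors)  # ['primary_drafter: primary drafter did not respond']
```

The worker never sees the outage. The failure is logged for later audit, and the human only gets involved if every candidate fails.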

Practical Implications for Business Strategy

Businesses rushing to deploy AI must immediately recalibrate their strategy from "tool deployment" to "workflow management." Ignoring "AI Brain Fry" is signing up for organizational entropy.

1. Re-evaluating AI ROI Through the Lens of Cognitive Load

The simple calculation of "one AI tool = X hours saved" is obsolete. Organizations must calculate the Human Supervision Overhead Cost (HSOC). If an employee saves 5 hours using AI but spends 4 hours verifying outputs and managing context switches between those AI tools, the net gain is a single hour, and the risk of error is magnified.
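The arithmetic is simple enough to write down. The sketch below treats HSOC as hours of supervision subtracted from gross hours saved. That is one plausible reading of the term, not an established formula, and the function names are hypothetical.

```python
def net_hours_saved(gross_hours_saved: float, supervision_hours: float) -> float:
    """Hours actually gained once supervision overhead (HSOC) is subtracted."""
    return gross_hours_saved - supervision_hours


def supervision_ratio(gross_hours_saved: float, supervision_hours: float) -> float:
    """Share of the gross saving that is consumed by supervision."""
    if gross_hours_saved <= 0:
        raise ValueError("gross_hours_saved must be positive")
    return supervision_hours / gross_hours_saved


# The example from the text: 5 hours saved, 4 hours spent supervising.
net = net_hours_saved(5, 4)
ratio = supervision_ratio(5, 4)
print(net)    # 1
print(ratio)  # 0.8
```

A ratio of 0.8 means 80% of the headline saving is spent watching the tools, which is the number an ROI review should start from.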

Actionable Insight: Before adopting a new specialized AI tool, mandate a simulation where the user interacts with three such tools simultaneously. Measure the time taken, and the errors made, on a standardized task that requires checking all three outputs. If verification consumes most of the time the tools save, or error rates climb, reject the tool stack until an orchestration layer is in place.

2. Designing for "Quiet AI"

The best AI tools of tomorrow might be the ones you rarely see. Interface design must shift from being attention-grabbing (pop-ups, constant notifications) to being ambient and summary-driven.

This applies directly to the concept of Ambient Intelligence. If an AI agent flags a high-risk discrepancy in a financial model, it shouldn't send 50 notifications. It should send one alert summarizing: "Risk identified in Q3 projections, potential impact $500k. See synthesized anomaly report [link]." The human jumps in only for the high-value cognitive lift.
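As a rough illustration of "one alert instead of 50," the sketch below groups low-level findings by area and emits a single summary line per area that crosses an impact threshold. The `Finding` type, the `summarize_findings` function, the threshold value, and the choice to sum impacts are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    area: str        # e.g. "Q3 projections"
    impact_usd: int  # estimated impact of this single finding
    detail: str      # low-level description the human normally never sees


def summarize_findings(findings: list[Finding],
                       min_impact_usd: int = 100_000) -> list[str]:
    """Collapse many findings into at most one alert per area."""
    totals: dict[str, int] = {}
    counts: dict[str, int] = {}
    for f in findings:
        totals[f.area] = totals.get(f.area, 0) + f.impact_usd
        counts[f.area] = counts.get(f.area, 0) + 1

    alerts: list[str] = []
    for area, total in totals.items():
        # Areas below the threshold stay in the report but raise no alert.
        if total >= min_impact_usd:
            alerts.append(
                f"Risk identified in {area}, potential impact ${total:,} "
                f"across {counts[area]} findings."
            )
    return alerts


# 50 small discrepancies in one area, plus one trivial finding elsewhere.
findings = [Finding("Q3 projections", 10_000, f"cell {i} deviates") for i in range(50)]
findings.append(Finding("travel budget", 500, "rounding mismatch"))

alerts = summarize_findings(findings)
print(len(alerts))  # 1
print(alerts[0])    # Risk identified in Q3 projections, potential impact $500,000 across 50 findings.
```

Fifty-one raw findings become one message, and the trivial one is suppressed entirely. A real system would also need to attach the underlying detail behind a link so the auditor can drill in.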

Actionable Insight: Prioritize AI tools that integrate deeply into existing platforms (like an operating system layer) rather than standalone web applications. Demand native summarization and batch-reporting capabilities from vendors.

3. Redefining Roles: From Operator to Auditor

The job description of the knowledge worker is changing faster than HR policies can keep up. If AI handles the drafting, calculating, and summarizing, the human must become an expert in auditing, ethical review, and strategic alignment.

This is where the psychological literature on AI stress becomes critical. Employees need clear boundaries. If their role is to be the final approver, they need dedicated time blocked out for that review, not constant interruptions. They also need to trust that the system defaults to safety and escalates only anomalies.

Actionable Insight: Invest heavily in training employees not just on *how* to use the AI, but on *how to spot its flaws* (model hallucinations, bias, and logical errors). This elevates the worker from a tired supervisor to a critical system evaluator, restoring a sense of meaningful contribution.

The Future Trajectory: Towards True AI Autonomy

The "AI Brain Fry" phenomenon is a necessary stress test for the industry. It proves that simply building more capable LLMs or agent tools without addressing the **Human-Machine Interface (HMI)** will lead to adoption ceilings.

The long-term implication is clear: we must accelerate the development of robust, self-correcting **AI orchestration layers**. These layers are the meta-AI that manages the productivity of the specialized AI tools.

When AI achieves true orchestration—where multiple agents can collaborate, negotiate, correct each other, and report back in a filtered, synthesized manner—the human role shifts entirely. We stop babysitting data-entry bots and start debating high-level strategic choices presented by an AI C-suite assistant.

This is the path toward sustainable, scalable AI adoption. It requires engineers to think less about building better chatbots and more about building better *traffic controllers* for the burgeoning digital workforce. The era of human micro-management of AI is ending; the era of strategic oversight and architectural trust is just beginning.