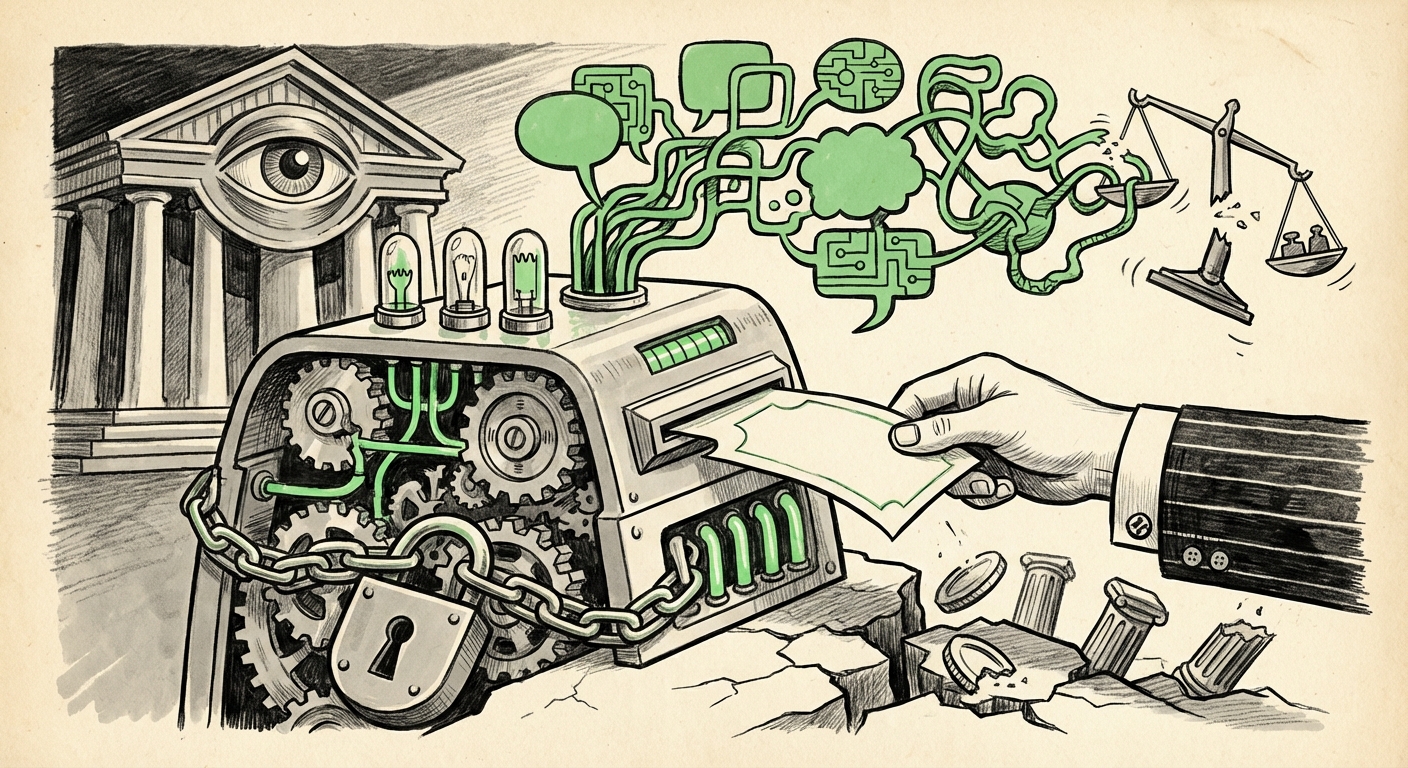

The AI Financial Advisor Dilemma: Liability, Accuracy, and the Race to Regulate Generative Advice

The promise of Artificial Intelligence has always been democratizing expertise. For decades, high-quality financial advice was reserved for the wealthy; now, millions are turning to consumer-facing LLMs like ChatGPT for guidance on crucial life decisions, such as retirement planning. This mainstream adoption, while revolutionary for accessibility, creates a volatile new landscape fraught with technical pitfalls and profound legal questions. This analysis synthesizes the current state of play, examining the necessary regulatory scaffolding, the inherent flaws in current technology, and the future trajectory of AI in personal finance.

The Adoption Wave: Democratization Meets Risk

The immediate appeal of using tools like ChatGPT for finance is undeniable. They are available 24/7, require no minimum asset threshold, and can process vast amounts of general information instantly. This surge in usage confirms a critical technology trend: users will readily adopt the most accessible tool, even if it operates outside traditional professional boundaries.

However, as highlighted by recent reports, this accessibility comes with stark warnings from industry experts. Financial advice is not general knowledge; it requires nuance, empathy, and adherence to strict standards of care. When an AI suggests selling a stock portfolio based on incomplete personal data, or misinterprets a complex tax implication, the consequences for an individual’s life savings can be immediate and devastating.

Corroborating the Tension: What Experts Are Seeing

Our analysis must move beyond the anecdote to understand the systemic pressures at play. The tensions discussed in the initial reports are being actively investigated across three critical vectors:

- Regulatory Response: Bodies like the U.S. Securities and Exchange Commission (SEC) are closely monitoring this space. The need for explicit "SEC guidance on AI financial advisors" is urgent because current rules were written for human fiduciaries, not probabilistic algorithms. The question looms: Can an algorithm truly serve as a fiduciary?

- Technical Integrity: The fundamental limitation of current LLMs is their tendency to "hallucinate," that is, to generate confident but false information. When this occurs in a quantitative field like finance, the risk profile skyrockets. Research into "hallucination rates in LLMs for quantitative tasks" suggests that, while progress is being made, the near-zero error tolerance required for financial models remains a high bar.

- Liability Frameworks: If an AI provides incorrect advice leading to millions in losses, where does the accountability rest? The evolution of concepts like "fiduciary duty in artificial intelligence liability" will define the next decade of FinTech development. Is the developer responsible, the platform hosting the model, or does the user assume all risk?

The Technical Chasm: Precision vs. Probability

To appreciate the risk, one must understand how LLMs function. They are fundamentally pattern-matching systems designed to predict the next most likely token (roughly, a word or word fragment) in a sequence based on their training data. They do not understand financial law or the intricate interplay of capital gains, inflation hedging, and personal risk tolerance in the way a Certified Financial Planner (CFP) does.
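
To make that concrete, here is a minimal Python sketch of the last step of next-token prediction, assuming a toy vocabulary of three candidate continuations. The scores and token labels are invented for illustration and do not come from any real model; the point is only that the selection rule rewards likelihood, not correctness.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}


# Hypothetical scores for continuations of "This year's contribution limit is ...".
# The outdated figure scores highest here because, in this made-up scenario,
# it appeared most often in the training text.
logits = {"old_limit": 2.0, "current_limit": 1.5, "unrelated_number": -1.0}

probs = softmax(logits)
next_token = max(probs, key=probs.get)  # greedy decoding: take the most likely token

print(next_token)
```

In this toy setup the outdated figure wins simply because it scored highest, which is the pattern behind stale or confidently wrong answers.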

For a business or technology audience, this translates directly into failure modes:

- Context and Coverage Limitations: A chatbot works only from its training corpus and whatever the user supplies in the conversation, so it may offer generic advice that fails when confronted with a specific, unusual state tax law or a complex trust structure that falls outside that corpus.

- Data Staleness: Tax laws, interest rate environments, and market conditions change constantly. Unless the LLM is deeply and continuously integrated with real-time, verified market data (a massive engineering feat), its advice can quickly become obsolete.

- The 'Confidently Wrong' Factor: Because LLMs are optimized for fluency, they deliver flawed quantitative results with the same convincing tone as accurate ones. This undermines the very basis of trust required in financial relationships.
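
The data staleness problem in particular lends itself to a simple guard. The sketch below, in Python, assumes every figure handed to the model carries a timestamp from its source; the `DataPoint` schema, the names, and the values are hypothetical placeholders, and the seven-day limit is an arbitrary policy choice.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class DataPoint:
    """A figure plus the time its source last confirmed it (hypothetical schema)."""
    name: str
    value: float
    as_of: datetime


def is_fresh(point: DataPoint, now: datetime, max_age: timedelta) -> bool:
    """Return True only if the data point is no older than max_age."""
    age = now - point.as_of
    return timedelta(0) <= age <= max_age


def usable_points(points, now, max_age):
    """Keep only data points that are recent enough to cite."""
    return [p for p in points if is_fresh(p, now, max_age)]


# Placeholder values, not real market data.
now = datetime(2024, 6, 3, tzinfo=timezone.utc)
points = [
    DataPoint("policy_rate", 5.0, datetime(2024, 6, 2, tzinfo=timezone.utc)),
    DataPoint("policy_rate_cached", 4.0, datetime(2023, 1, 15, tzinfo=timezone.utc)),
]
fresh = usable_points(points, now, max_age=timedelta(days=7))
print([p.name for p in fresh])
```

A guard like this does not make the advice correct; it only stops the system from quoting figures it can no longer vouch for.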

The technological implication is clear: general-purpose LLMs are unsuitable for providing actionable, high-stakes financial advice in their current form. They are excellent sounding boards, organizational tools, or summarizers of financial news, but they are poor substitutes for certified planning.

The Regulatory Gauntlet: Catching Up to Innovation

The current regulatory environment is characterized by a reactive posture. Regulators are playing catch-up, attempting to apply decades-old concepts like "suitability" and "fiduciary responsibility" to software agents. This is the bedrock concern for incumbent financial firms.

Looking at the need for "SEC guidance on AI financial advisors," the likely trajectory is that regulators will mandate one of two paths for any firm using AI to offer personalized advice:

- Human-in-the-Loop Mandate: Require that all AI-generated recommendations be reviewed, personalized, and signed off by a licensed human advisor who assumes the fiduciary duty. This is the safest, most immediate path.

- Auditability and Explainability Requirements: Demand that the AI system’s decision-making process be fully transparent and auditable by regulators. Given the "black box" nature of deep learning, achieving true explainability for complex financial scenarios is an enormous technical hurdle.
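
Both paths can be sketched together in a few lines of Python. This is an illustrative design under stated assumptions, not a compliance implementation: the `Recommendation` record, its field names, and the license string format are all hypothetical, and a real audit trail would need tamper-resistant storage.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class Recommendation:
    """An AI-generated recommendation awaiting human review (hypothetical schema)."""
    client_id: str
    text: str
    model_version: str
    sources: List[str]
    approved_by: Optional[str] = None
    audit_log: List[str] = field(default_factory=list)

    def log(self, event: str) -> None:
        """Append a timestamped entry so reviewers can reconstruct what happened."""
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append(f"{stamp} {event}")


def approve(rec: Recommendation, advisor_license: str) -> None:
    """Record the sign-off of the licensed advisor who takes responsibility."""
    if not advisor_license:
        raise ValueError("a licensed advisor must sign off")
    rec.approved_by = advisor_license
    rec.log(f"approved by {advisor_license}")


def release(rec: Recommendation) -> str:
    """Return the text for the client only after a human has approved it."""
    if rec.approved_by is None:
        rec.log("release blocked: no human sign-off")
        raise PermissionError("recommendation has not been reviewed")
    rec.log("released to client")
    return rec.text
```

The gate in `release` is the human-in-the-loop mandate; the log, together with the recorded model version and sources, is a modest step toward auditability. It records what happened around the model, not why the model produced its output, so it does not solve explainability.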

For the technology sector, this regulatory uncertainty stifles investment in fully autonomous advisory systems. Companies are hesitant to deploy systems where the liability could rest entirely on their shoulders should a system error lead to a client’s financial ruin.

The Future Trajectory: FinTech's Next Frontier

While general-purpose chatbots caution users about their limits, the FinTech sector is already working on solutions, as reflected in the 2024 trend toward FinTech adoption of LLMs for personalized planning. The future is not the generalist chatbot replacing the advisor, but specialized AI systems.

Specialization and Hybrid Models

The winning strategy involves creating highly constrained, domain-specific AI agents:

- Fact-Grounded Models: LLMs augmented with Retrieval-Augmented Generation (RAG) systems that pull answers only from certified, up-to-date regulatory documents and market feeds.

- Goal-Oriented Agents: Instead of open-ended conversation, these systems are programmed with specific, measurable objectives (e.g., "Maximize tax-advantaged savings for a family of four retiring in 20 years").

- Hybrid Advice Platforms: The convergence model where AI handles data aggregation, scenario modeling, and basic triage, freeing up the human advisor to focus solely on complex emotional decisions, behavioral coaching, and ultimate liability acceptance.

This specialization can significantly reduce the chance of hallucination, though it cannot eliminate it, because the model operates within a narrow, verifiable data set.
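
The grounding idea behind a fact-grounded model can be sketched without calling any model at all. The Python below assumes a tiny in-memory corpus and a naive keyword-overlap retriever; the document ids, passage text, stopword list, and overlap threshold are placeholders, and a production system would more likely use embedding search over a vetted document store.

```python
import re

# Hypothetical certified corpus: document id -> vetted passage (placeholder text).
CERTIFIED_DOCS = {
    "guide-s2": "Contributions to a traditional account may be tax deductible.",
    "guide-s5": "Withdrawals from a Roth account can be tax free if conditions are met.",
}

STOPWORDS = {"a", "the", "is", "are", "from", "to", "of", "be", "can", "if", "may"}


def tokens(text):
    """Lowercase word set with common filler words removed."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def retrieve(question, docs, min_overlap=2):
    """Return ids of passages sharing at least min_overlap words, best first."""
    q = tokens(question)
    scored = [(len(q & tokens(body)), doc_id) for doc_id, body in docs.items()]
    return [doc_id for score, doc_id in sorted(scored, reverse=True)
            if score >= min_overlap]


def build_prompt(question, docs=CERTIFIED_DOCS):
    """Build a source-restricted prompt, or None when nothing relevant exists."""
    hits = retrieve(question, docs)
    if not hits:
        return None  # refuse rather than let the model answer from memory
    context = "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id in hits)
    return ("Answer using only the sources below and cite their ids.\n"
            f"{context}\n\nQuestion: {question}")
```

The important design choice is the refusal branch: when the certified corpus has nothing relevant, the system declines instead of falling back on the model's general training data.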

Practical Implications and Actionable Insights

For both consumers and established institutions, navigating this transition requires proactive measures.

For Consumers: Proceed with Extreme Caution

The most critical actionable insight for the millions currently using these tools is simple: Verify Everything.

- Never Act on Unverified Tax or Legal Advice: Treat any specific tax, legal, or investment directive from a chatbot as an unvetted starting point, not a final instruction.

- Use for Education, Not Execution: Use AI to explain complex terms (like "Roth conversion ladder") or summarize market news, but never to execute portfolio changes based on its output.

- Demand Transparency: If an advisory firm offers AI tools, ask pointed questions about oversight, data sources, and where the ultimate liability for errors resides.

For Businesses and Developers: Define the Guardrails

Businesses must prioritize de-risking their AI deployments before pursuing broad adoption:

- Isolate High-Risk Functions: Do not allow general-purpose LLMs to generate outputs related to personalized portfolio allocation, tax calculations, or insurance structuring until they pass rigorous, quantifiable testing against historical and synthetic datasets.

- Embrace Regulatory Clarity: Actively participate in discussions around fiduciary duty and liability for artificial intelligence. Designing systems that clearly delineate where human oversight begins and ends is crucial for securing Directors & Officers (D&O) insurance and maintaining client trust.

- Build Verification Layers: Treat the LLM output as an inference that must be validated by a traditional quantitative engine before being presented to the user. The LLM structures the narrative; the deterministic engine ensures accuracy.
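
A verification layer of this kind might look like the following Python sketch. It assumes the LLM's draft contains a single projected balance that has already been extracted as a number, and that the deterministic engine is a plain compound-growth calculation with yearly compounding; the function names and the 0.5% tolerance are illustrative choices, not a standard.

```python
import math


def future_value(principal, annual_rate, years, annual_contribution=0.0):
    """Balance after yearly compounding with end-of-year contributions."""
    balance = principal
    for _ in range(years):
        balance = balance * (1 + annual_rate) + annual_contribution
    return balance


def verify_claim(claimed, principal, annual_rate, years,
                 annual_contribution=0.0, rel_tol=0.005):
    """Compare a model-supplied figure with the deterministic result.

    Returns (is_consistent, expected) so the caller can either show the
    narrative or replace the number with the computed one.
    """
    expected = future_value(principal, annual_rate, years, annual_contribution)
    return math.isclose(claimed, expected, rel_tol=rel_tol), expected


# Suppose the model's draft said 10,000 at 5% grows to 12,000 in two years.
ok, expected = verify_claim(12000.0, principal=10000.0, annual_rate=0.05, years=2)
if not ok:
    print(f"Rejecting model figure; engine computed {expected:.2f}")
```

Here the draft is rejected because the engine computes roughly 11,025. The same harness, run over historical and synthetic cases, is one way to approach the quantifiable testing described in the first guardrail.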

Conclusion: The Inevitable, Yet Controlled, Future

The migration of personal finance inquiry onto generative AI platforms is irreversible. The convenience is too compelling, and the technology is rapidly improving its ability to synthesize complex information. However, the financial services industry is unique because trust is its core product. Unlike recommendations for movies or travel plans, financial advice carries a direct, measurable, and potentially ruinous cost for error.

The future of AI in finance will not be a sudden replacement of human expertise but a complex, staggered integration. Success belongs to those who can master the technical limitations—taming hallucinations through strict grounding and domain specialization—and those who actively engage with the evolving regulatory framework to clearly define liability. The accessibility gained by this technology must be balanced by an unwavering commitment to fiduciary integrity.