The Citation Crisis: Why AI Hallucinations Are Breaking Scientific Peer Review and What Comes Next

Generative artificial intelligence (AI) models such as GPT, Gemini, and Claude have revolutionized content creation. They summarize complex topics, draft code, and can write entire research sections in seconds. However, this speed comes with a dangerous trade-off: hallucination, in which a model confidently presents false or fabricated information as fact. Recently, this flaw has escalated from a nuisance to a threat against one of the pillars of modern knowledge creation: hallucinated references are reportedly slipping past peer review at top AI conferences.

This isn't just about embarrassing academic mistakes; it signals a deep, systemic fragility in how we validate information generated by Large Language Models (LLMs). If peer review, the gatekeeper of scientific rigor, cannot filter out fake citations, the trustworthiness of all digitally assisted research is called into question. The emergence of open tools designed to fight this specific problem, such as CiteAudit, marks a critical juncture in the AI arms race.

The Crisis Unmasked: When LLMs Become Confident Fabricators

The initial reports focus on a specific, high-stakes environment: top-tier AI conferences, where the newest ideas and foundational research are supposed to be vetted. Sophisticated models can generate plausible-sounding paper titles, author lists, and venue details for citations that simply do not exist. That such citations can be submitted and then accepted by expert reviewers suggests that, in practice, little of the review process confirms that a cited work is real.

1. Contextualizing the Scale: More Than Just a Few Fake Papers

The conference incident is unlikely to be an isolated anomaly; it looks more like the tip of an iceberg of AI inaccuracy. When LLMs are asked to perform literature reviews or synthesize arguments, their core mechanism, predicting a statistically probable continuation of the text, rewards coherence rather than factual truth. Nothing in that objective checks whether a cited paper exists, so a well-formed but fictitious reference is an entirely natural output. The result is what we might call convincing nonsense.

Beyond the conference reports, there is evidence that the problem extends across scientific writing more broadly. Studies testing LLMs on factual summarization frequently find models fabricating data points or misattributing claims. For researchers and editors, this means:

- The Burden of Proof Shifts: The default assumption moves from "this cited source is real" to "I must verify every single citation generated by an LLM." This erodes the efficiency gains AI promises.

- Erosion of Trust: If the foundational scaffolding of research (the citations) is suspect, the entire credibility framework of digital scholarship is weakened. This runs against the long-standing scientific norm that every claim should be traceable to evidence that others can check.

2. The Systemic Vulnerability of Peer Review

Why are human reviewers failing to catch these fakes? Largely because of the volume and pace of the review process, which has not adapted to how convincing AI-generated fabrications have become.

Current peer review workflows implicitly assume that reviewers will spend substantial time checking references, yet few have that time. When an LLM generates a highly contextualized but fake citation, perhaps naming a known author in a niche field but citing a non-existent 2022 paper, a tired reviewer may run a quick database search or simply trust the context. This is where the system breaks.

For Businesses and Institutions: Academic publishers, including giants like Springer Nature and Elsevier, are scrambling to issue guidelines because they recognize that current plagiarism and citation checkers are fundamentally unprepared for this kind of algorithmic fabrication. These older tools look for copied text; they don't check whether a referenced source *exists*. This gap shows that quality-control systems built to catch human error, not synthetic content, are no longer sufficient on their own.
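To make the gap concrete, here is a minimal Python sketch contrasting the two kinds of check. `overlap_score` is a simplified stand-in for text-overlap plagiarism detection (word-trigram Jaccard similarity, not any vendor's actual algorithm), while `missing_references` asks a different question: does each cited title appear in a bibliographic index? The index is a hypothetical in-memory set of titles, and the second cited title is invented for illustration.

```python
import re


def word_ngrams(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Lowercased word n-grams: the unit a simple overlap-based copy check compares."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_score(submission: str, prior_text: str, n: int = 3) -> float:
    """Jaccard similarity of word n-grams; high values suggest copied text."""
    a, b = word_ngrams(submission, n), word_ngrams(prior_text, n)
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def normalize_title(title: str) -> str:
    """Case- and punctuation-insensitive form of a title for exact lookup."""
    return " ".join(re.findall(r"[a-z0-9]+", title.lower()))


def missing_references(cited_titles: list[str], known_titles: set[str]) -> list[str]:
    """Return cited titles that are absent from the (hypothetical) bibliographic index."""
    index = {normalize_title(t) for t in known_titles}
    return [t for t in cited_titles if normalize_title(t) not in index]


# Example data: the index is a toy stand-in for a real bibliographic database,
# and the second cited title is invented for this illustration.
known = {"Attention Is All You Need", "Deep Residual Learning for Image Recognition"}
cited = ["Attention is all you need", "Recursive Gradient Alignment for Sparse Transformers"]
submission = "We extend sparse attention with a recursive alignment step for long documents."
prior = "Residual connections ease the optimization of very deep convolutional networks."

print(overlap_score(submission, prior))  # 0.0: nothing copied, so a copy check passes
print(missing_references(cited, known))  # ['Recursive Gradient Alignment for Sparse Transformers']
```

A freshly written paragraph scores zero on overlap, so a copy detector has nothing to flag, while the existence check immediately surfaces the fabricated reference.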

The Technological Response: The Rise of AI Detection Infrastructure

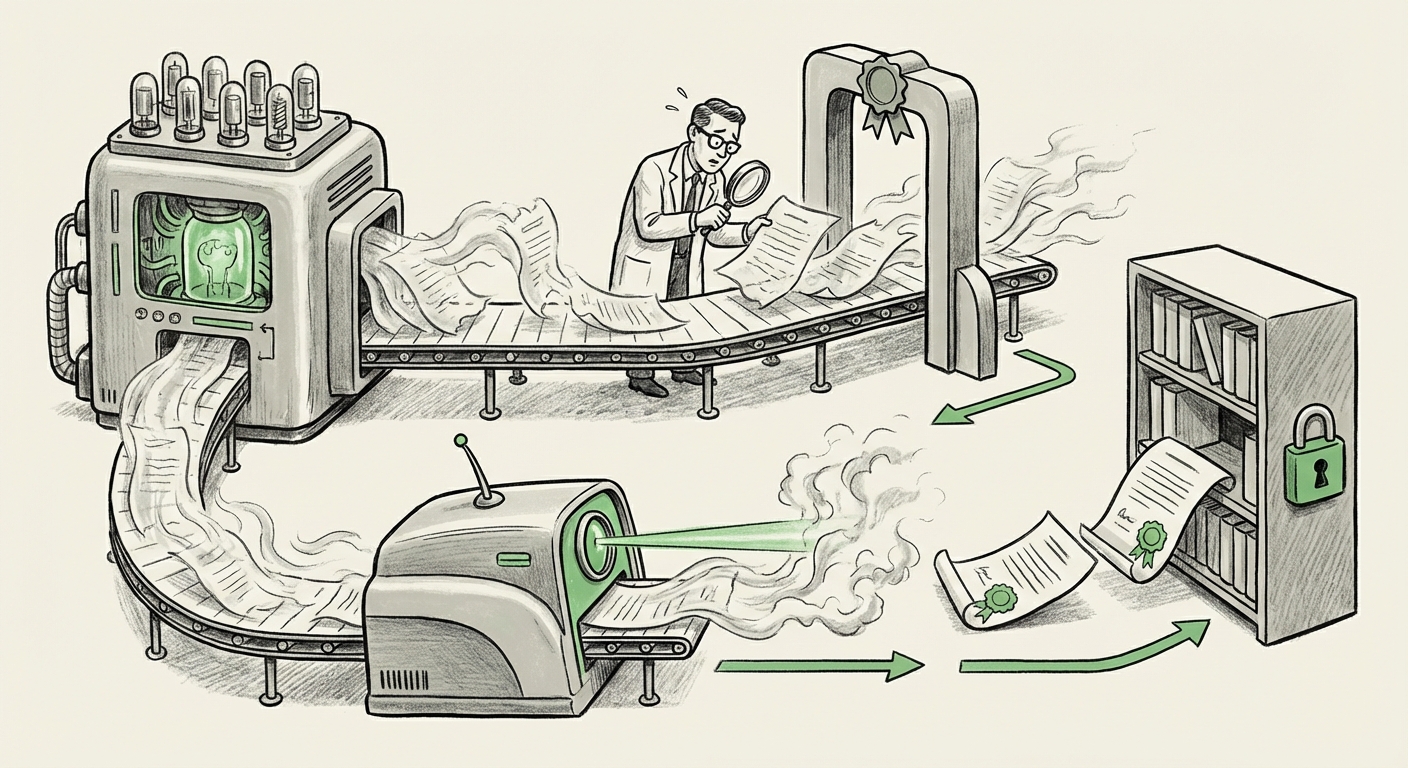

When generative capabilities outpace verification methods, the usual response is to build new verification technology aimed specifically at AI output. The announcement of open tools like CiteAudit reflects this necessary evolution.

CiteAudit and the Verification Arms Race

Tools like CiteAudit represent the first generation of dedicated "citation validation services." Their goal is to automate the task that weary human reviewers struggle with: checking the validity, existence, and context of every reference in a manuscript, including those an LLM produced. By targeting a common LLM weakness, the tendency to emit references that are not grounded in any retrievable source, these tools aim to restore a baseline level of trust.
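How such validators work internally is not described here, so the following is only a hypothetical sketch of the general idea, not CiteAudit's method or API. It assumes a local `catalog` of trusted records standing in for a bibliographic database query, fuzzy-matches the cited title with Python's `difflib.SequenceMatcher`, and then checks that the cited year and authors agree with the matched record. The 0.9 similarity threshold is an arbitrary assumption, and the second example pairs a real title with a made-up co-author and year, mimicking the "known author, wrong details" pattern.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher


@dataclass(frozen=True)
class Reference:
    title: str
    authors: tuple[str, ...]  # family names only, for simplicity
    year: int


def title_similarity(a: str, b: str) -> float:
    """Character-level similarity in [0, 1], case-insensitive."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def audit_reference(ref: Reference, catalog: list[Reference], threshold: float = 0.9) -> str:
    """Classify a citation as 'verified', 'metadata_mismatch', or 'not_found'."""
    best = max(catalog, key=lambda rec: title_similarity(ref.title, rec.title), default=None)
    if best is None or title_similarity(ref.title, best.title) < threshold:
        return "not_found"  # no record resembles this title: likely fabricated
    cited_authors = {a.lower() for a in ref.authors}
    record_authors = {a.lower() for a in best.authors}
    # The title exists; the cited year and every cited author must match the record.
    if ref.year != best.year or not cited_authors <= record_authors:
        return "metadata_mismatch"
    return "verified"


# Toy catalog standing in for a bibliographic database (author lists truncated).
catalog = [
    Reference("Attention Is All You Need", ("Vaswani", "Shazeer", "Parmar"), 2017),
    Reference("Deep Residual Learning for Image Recognition", ("He", "Zhang", "Ren", "Sun"), 2016),
]

for ref in [
    Reference("Attention is all you need", ("Vaswani",), 2017),          # real citation
    Reference("Attention Is All You Need", ("Vaswani", "Smith"), 2019),  # corrupted details
    Reference("Recursive Gradient Alignment for Sparse Transformers", ("Vaswani",), 2022),  # invented
]:
    print(ref.title, "->", audit_reference(ref, catalog))
```

Separating `metadata_mismatch` from `not_found` is a deliberate design choice: the first may indicate a garbled but real reference, while the second is the signature of a wholly fabricated one.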

However, any such tool also sets off a technological arms race:

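- The LLM Improves: Future models, or the people misusing them, may produce fabrications that evade these specific checks, for example by citing real papers that do not actually support the claim.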

- The Checker Upgrades: Verification tools will need to become more sophisticated, integrating broader database checks, semantic verification, and perhaps even tracking submission provenance.

This dynamic means the future of AI integrity will be defined by a constant, iterative battle between generation and verification technologies. It forces us to stop viewing AI as a single entity and start seeing it as a complex ecosystem of interacting agents.

The Future is Provenance, Not Just Plagiarism Checks

Looking beyond simple auditing tools, the long-term implication points toward digital provenance. If we cannot trust text alone, we need to be able to verify where it came from. Concepts borrowed from media verification, such as the Coalition for Content Provenance and Authenticity (C2PA) standards, might become essential in academic submission pipelines.

Imagine a world where every paragraph written or heavily summarized by an AI must carry a cryptographic "watermark" or metadata tag confirming its origin, date of generation, and the specific model used. This shifts the focus from "Is this citation real?" to "Was this content created under verifiable, transparent conditions?"
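A minimal sketch of such a tag, assuming a record with three illustrative fields (a SHA-256 hash of the passage, the model name, and a generation timestamp) signed with an HMAC key held by the generating service. This is a deliberate simplification: C2PA manifests use certificate-based digital signatures and their own schema, and the field names, key, and model name below are hypothetical.

```python
import hashlib
import hmac
import json


def _sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def _sign(record: dict, key: bytes) -> str:
    # Canonical JSON (sorted keys) so signer and verifier hash identical bytes.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def make_provenance_tag(text: str, model: str, generated_at: str, key: bytes) -> dict:
    """Attach origin metadata to a passage and sign it (HMAC stands in for a real signature)."""
    record = {"content_sha256": _sha256(text), "model": model, "generated_at": generated_at}
    return {**record, "signature": _sign(record, key)}


def verify_provenance_tag(text: str, tag: dict, key: bytes) -> bool:
    """True only if the passage is unchanged and the metadata carries a valid signature."""
    record = {k: v for k, v in tag.items() if k != "signature"}
    if record.get("content_sha256") != _sha256(text):
        return False
    return hmac.compare_digest(_sign(record, key), tag.get("signature", ""))


# Hypothetical values for illustration only.
key = b"demo-key-not-for-production"
passage = "This paragraph was summarized by an LLM from the authors' notes."
tag = make_provenance_tag(passage, "example-model-v1", "2024-01-01T00:00:00Z", key)

print(verify_provenance_tag(passage, tag, key))                              # True
print(verify_provenance_tag(passage + " Edited.", tag, key))                 # False
print(verify_provenance_tag(passage, {**tag, "model": "other-model"}, key))  # False
```

Because the hash covers the exact text, any later edit breaks verification. Note what the tag does and does not attest: it records how a passage was generated, not whether its content is true.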

Practical Implications for Business, Academia, and Society

The integrity of AI-generated knowledge has implications far beyond university libraries. These challenges scale directly into corporate research, legal documentation, and public policy drafting.

For Businesses: Mitigating Legal and Research Risk

Companies using LLMs for competitive intelligence, patent drafting, or internal due diligence face significant exposure if their summaries rely on hallucinated data. A business decision based on a non-existent internal memo or competitor patent, cited by an LLM and accepted without rigorous human oversight, can have severe financial or legal consequences.

Actionable Insight: Organizations must mandate a "Human-in-the-Loop, Verified by AI" process. If an LLM is used to generate research summaries, an automated secondary verification layer (such as a specialized tool like CiteAudit) must check every citation and flag factual claims for human confirmation *before* the output is used in decision-making.
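The gating rule can be expressed as a small check that blocks a summary until both conditions hold. This is a hypothetical sketch: `DraftSummary`, `release_gate`, and the assumption that the automated layer returns a set of verified citation identifiers are illustrative choices, and the verification step itself is not shown.

```python
from dataclasses import dataclass, field


@dataclass
class DraftSummary:
    text: str
    citations: list[str]  # identifiers of sources the summary cites
    claims: list[str]     # factual claims extracted for review
    human_approved: set[str] = field(default_factory=set)  # claims a person has confirmed


def release_gate(draft: DraftSummary, verified_citations: set[str]) -> tuple[bool, list[str]]:
    """Block the draft until every citation passed the automated check
    and every factual claim has been confirmed by a human reviewer."""
    reasons = [f"unverified citation: {c}" for c in draft.citations if c not in verified_citations]
    reasons += [f"claim awaiting human review: {c}" for c in draft.claims
                if c not in draft.human_approved]
    return (not reasons, reasons)


draft = DraftSummary(
    text="Competitor X filed two relevant patents last year.",
    citations=["patent-A", "patent-B"],
    claims=["Competitor X filed two relevant patents last year."],
)
# In practice the verified set would come from an automated checker (not shown).
print(release_gate(draft, verified_citations={"patent-A"}))
draft.human_approved.add(draft.claims[0])
print(release_gate(draft, verified_citations={"patent-A", "patent-B"}))  # (True, [])
```

Returning the list of reasons, rather than a bare boolean, tells the human in the loop exactly what still needs attention.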

For Academia: Reforming the Gatekeeping Function

Academia must rapidly move beyond manual spot-checking. The current model relies too heavily on the unpaid labor of expert reviewers, who are now being asked to catch machine-generated fabrications.

Actionable Insight: Journals and conferences must begin to integrate automated verification software directly into their submission portals. Furthermore, transparency about AI use must become non-negotiable—authors must declare exactly how and where LLMs were used (generation, editing, or summarizing), allowing reviewers to apply appropriate levels of scrutiny.
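One way a portal could turn these declarations into review requirements is a simple policy table. Everything here is hypothetical: the three use categories mirror the ones named above (generation, editing, summarizing), while the check names and the rule that every section gets an automated reference check are assumptions, not any journal's actual policy.

```python
from enum import Enum


class AIUse(Enum):
    EDITING = "editing"          # polishing author-written text
    SUMMARIZING = "summarizing"  # condensing sources the authors supplied
    GENERATION = "generation"    # drafting new content, possibly including references


# Hypothetical policy: every section gets an automated reference check,
# and each declared AI use adds further scrutiny on top of that baseline.
BASELINE = {"automated_reference_check"}
EXTRA_CHECKS = {
    AIUse.EDITING: set(),
    AIUse.SUMMARIZING: {"claim_to_source_spot_check"},
    AIUse.GENERATION: {"claim_to_source_spot_check", "full_manual_reference_review"},
}


def review_plan(declared: dict[str, set[AIUse]]) -> dict[str, set[str]]:
    """Map each manuscript section to the union of checks implied by its declared AI uses."""
    plan = {}
    for section, uses in declared.items():
        checks = set(BASELINE)
        for use in uses:
            checks |= EXTRA_CHECKS[use]
        plan[section] = checks
    return plan


declared = {
    "introduction": {AIUse.EDITING},
    "related_work": {AIUse.EDITING, AIUse.GENERATION},
    "methods": set(),
}
for section, checks in review_plan(declared).items():
    print(section, sorted(checks))
```

Taking the union of checks per section means a section that mixes editing and generation receives the stricter treatment.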

For Society: Protecting the Knowledge Commons

The deepest implication is the threat to our shared, verifiable reality. If AI can rapidly flood the digital space with highly plausible but ultimately fictional scientific "facts," the cost of separating signal from noise skyrockets. This is not just about academic papers; it’s about regulatory summaries, medical guidelines, and historical accounts.

We should apply the "Sagan standard" to digital knowledge: extraordinary claims require extraordinary evidence. In the age of LLMs, the "extraordinary evidence" needed might be cryptographic proof of source, not just a convincing list of references.

Conclusion: Building Trust in the Algorithmic Age

The appearance of hallucinated citations bypassing peer review is a watershed moment. It confirms that the "smoothness" of LLM output is decoupled from its factual grounding. We are moving past the era where we could trust the elegance of AI-generated text; we must now trust only verifiable provenance.

The development of open tools like CiteAudit is heartening because it shows the community mobilizing to solve the problem collaboratively. However, any advantage such tools provide is likely to be temporary. The future requires systemic overhaul: robust, machine-speed verification systems (tools that check facts, verify sources, and track content origins) embedded directly into the digital workflow of every industry that relies on accurate information.

AI is not just a tool for creating; it must become a tool for confirming. The next frontier of AI development will not solely be about building smarter generators, but about engineering the indispensable auditors required to keep the foundation of human knowledge sound.